Philipp Brauner

Trust and Human Autonomy after Cobot Failures: Communication is Key for Industry 5.0

Sep 26, 2025

Abstract: Collaborative robots (cobots) are a core technology of Industry 4.0. Industry 4.0 uses cyber-physical systems, IoT and smart automation to improve efficiency and data-driven decision-making. Cobots, as cyber-physical systems, enable the introduction of lightweight automation to smaller companies through their flexibility, low cost and ability to work alongside humans, while keeping humans and their skills in the loop. Industry 5.0, the evolution of Industry 4.0, places the worker at the centre of its principles: The physical and mental well-being of the worker is the main goal of new technology design, not just productivity, efficiency and safety standards. Within this concept, human trust in cobots and human autonomy are important. While trust is essential for effective and smooth interaction, the workers' perception of autonomy is key to intrinsic motivation and overall well-being. As failures are an inevitable part of technological systems, this study aims to answer the question of how system failures affect trust in cobots as well as human autonomy, and how both can be restored afterwards. To this end, a VR experiment (n = 39) was set up to investigate the influence of a cobot failure and its severity on human autonomy and trust in the cobot. Furthermore, the influence of transparent communication about the failure and next steps was investigated. The results show that both trust and autonomy suffer after cobot failures, with more severe failures having a stronger negative impact on trust, but not on autonomy. Both trust and autonomy can be partially restored by transparent communication.

Human Autonomy and Sense of Agency in Human-Robot Interaction: A Systematic Literature Review

Sep 26, 2025

Abstract: Human autonomy and sense of agency are increasingly recognised as critical for user well-being, motivation, and the ethical deployment of robots in human-robot interaction (HRI). Given the rapid development of artificial intelligence, robot capabilities and their potential to function as colleagues and companions are growing. This systematic literature review synthesises 22 empirical studies selected from an initial pool of 728 articles published between 2011 and 2024. Articles were retrieved from major scientific databases and identified based on empirical focus and conceptual relevance, namely, how to preserve and promote human autonomy and sense of agency in HRI. Derived through thematic synthesis, five clusters of potentially influential factors are revealed: robot adaptiveness, communication style, anthropomorphism, presence of a robot and individual differences. Measured through psychometric scales or the intentional binding paradigm, perceptions of autonomy and agency varied across industrial, educational, healthcare, care, and hospitality settings. The review underscores the theoretical differences between the two concepts, yet also their entangled use in HRI. Despite increasing interest, the current body of empirical evidence remains limited and fragmented, underscoring the necessity for standardised definitions, more robust operationalisations, and further exploratory and qualitative research. By identifying existing gaps and highlighting emerging trends, this review contributes to the development of human-centered, autonomy-supportive robot design strategies that uphold ethical and psychological principles, ultimately supporting well-being in human-robot interaction.

AI Perceptions Across Cultures: Similarities and Differences in Expectations, Risks, Benefits, Tradeoffs, and Value in Germany and China

Dec 18, 2024

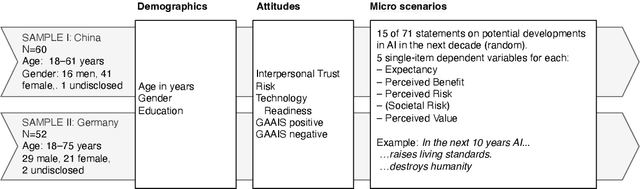

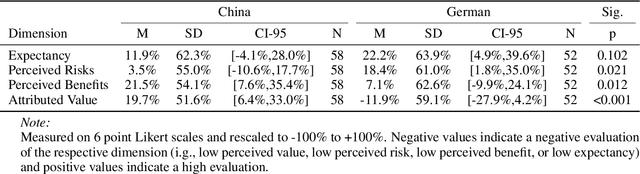

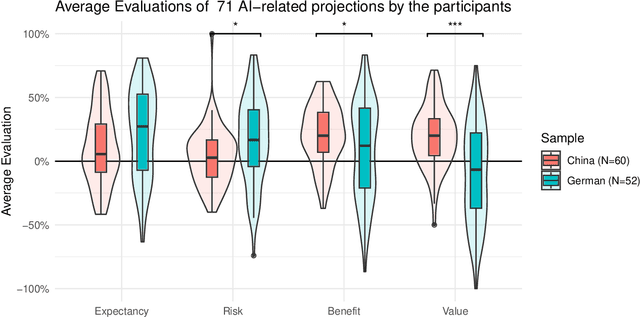

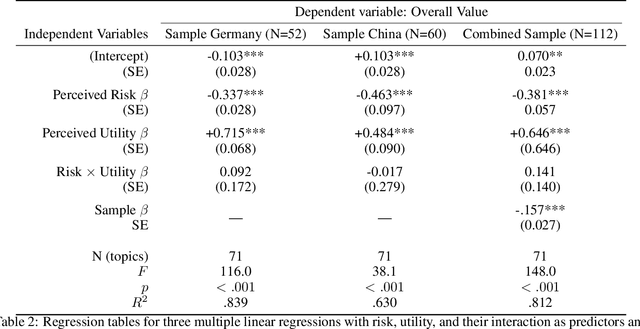

Abstract: As artificial intelligence (AI) continues to advance, understanding public perceptions -- including biases, risks, and benefits -- is critical for guiding research priorities, shaping public discourse, and informing policy. This study explores public mental models of AI using micro scenarios to assess reactions to 71 statements about AI's potential future impacts. Drawing on cross-cultural samples from Germany (N=52) and China (N=60), we identify significant differences in expectations, evaluations, and risk-utility tradeoffs. German participants tended toward more cautious assessments, whereas Chinese participants expressed greater optimism regarding AI's societal benefits. Chinese participants exhibited relatively balanced risk-benefit tradeoffs ($\beta=-0.463$ for risk and $\beta=+0.484$ for benefit, $r^2=.630$). In contrast, German participants showed a stronger emphasis on AI benefits and less on risks ($\beta=-0.337$ for risk and $\beta=+0.715$ for benefit, $r^2=.839$). Visual cognitive maps illustrate these contrasts, offering new perspectives on how cultural contexts shape AI acceptance. Our findings underline key factors influencing public perception and provide actionable insights for fostering equitable and culturally sensitive integration of AI technologies.
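The "balanced" versus "benefit-weighted" tradeoffs in this abstract can be read off the reported coefficients by taking the ratio of their magnitudes. The following is a minimal sketch of that arithmetic, using only the four $\beta$ values quoted in the abstract; the helper name `risk_to_benefit_ratio` and the dictionary layout are assumptions made for illustration and do not come from the paper.

```python
# Standardized regression weights as quoted in the abstract.
REPORTED_BETAS = {
    "China": {"risk": -0.463, "benefit": 0.484},
    "Germany": {"risk": -0.337, "benefit": 0.715},
}


def risk_to_benefit_ratio(beta_risk: float, beta_benefit: float) -> float:
    """Hypothetical helper: weight of risk relative to benefit, by magnitude."""
    return abs(beta_risk) / abs(beta_benefit)


# Ratio near 1 means risk and benefit weigh about equally in the value
# judgment; a ratio well below 1 means benefit dominates.
ratios = {
    country: risk_to_benefit_ratio(betas["risk"], betas["benefit"])
    for country, betas in REPORTED_BETAS.items()
}

for country, ratio in ratios.items():
    print(f"{country}: risk weighs {ratio:.2f}x as much as benefit")
```

Under this reading, the ratio for the Chinese sample is close to 1 (about 0.96), while for the German sample it is roughly 0.47, which matches the abstract's description of a stronger emphasis on benefits.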

Demonstrating Data-to-Knowledge Pipelines for Connecting Production Sites in the World Wide Lab

Dec 16, 2024

Abstract: The digital transformation of production requires new methods of data integration and storage, as well as decision making and support systems that work vertically and horizontally throughout the development, production, and use cycle. In this paper, we propose Data-to-Knowledge (and Knowledge-to-Data) pipelines for production as a universal concept building on a network of Digital Shadows (a concept augmenting Digital Twins). We show a proof of concept that builds on and bridges existing infrastructure to 1) capture and semantically annotate trajectory data from multiple similar but independent robots in different organisations and use cases in a data lakehouse and 2) run an independent process that dynamically queries matching data for training an inverse dynamic foundation model for robotic control. The article discusses the challenges and benefits of this approach and, as a research outlook, how Data-to-Knowledge pipelines contribute to efficiency gains and industrial scalability in a World Wide Lab.

Misalignments in AI Perception: Quantitative Findings and Visual Mapping of How Experts and the Public Differ in Expectations and Risks, Benefits, and Value Judgments

Dec 02, 2024

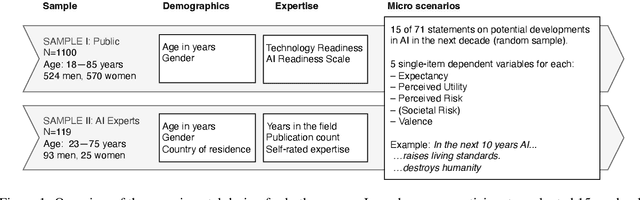

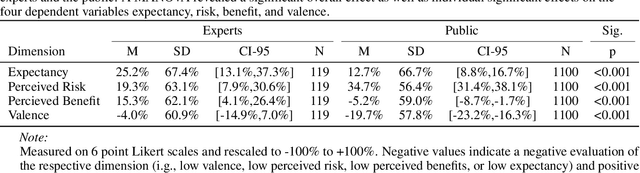

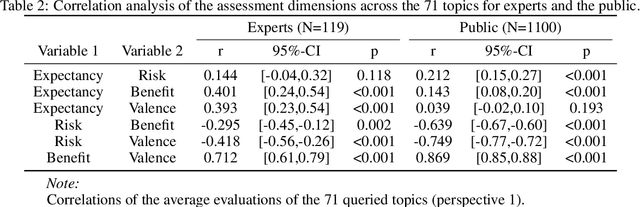

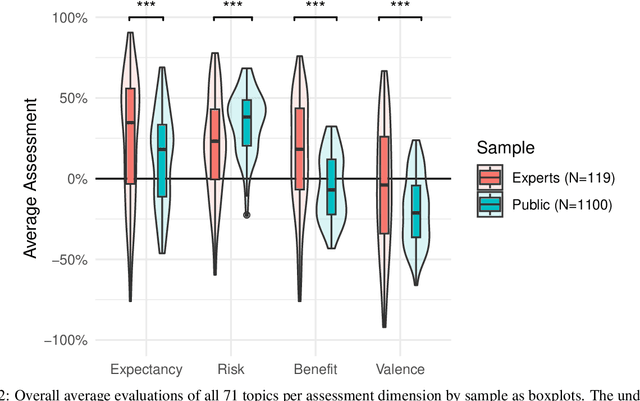

Abstract: Artificial Intelligence (AI) is transforming diverse societal domains, raising critical questions about its risks and benefits and the misalignments between public expectations and academic visions. This study examines how the general public (N=1110) -- people using or being affected by AI -- and academic AI experts (N=119) -- people shaping AI development -- perceive AI's capabilities and impact across 71 scenarios, including sustainability, healthcare, job performance, societal divides, art, and warfare. Participants evaluated each scenario on four dimensions: expected probability, perceived risk and benefit, and overall sentiment (or value). The findings reveal significant quantitative differences: experts anticipate higher probabilities, perceive lower risks, report greater utility, and express more favorable sentiment toward AI compared to the non-experts. Notably, risk-benefit tradeoffs differ: the public assigns risk half the weight of benefits, while experts assign it only a third. Visual maps of these evaluations highlight areas of convergence and divergence, identifying potential sources of public concern. These insights offer actionable guidance for researchers and policymakers to align AI development with societal values, fostering public trust and informed governance.

Mapping Public Perception of Artificial Intelligence: Expectations, Risk-Benefit Tradeoffs, and Value As Determinants for Societal Acceptance

Nov 28, 2024

Abstract: Understanding public perception of artificial intelligence (AI) and the tradeoffs between potential risks and benefits is crucial, as these perceptions might shape policy decisions, influence innovation trajectories for successful market strategies, and determine individual and societal acceptance of AI technologies. Using a representative sample of 1100 participants from Germany, this study examines mental models of AI. Participants quantitatively evaluated 71 statements about AI's future capabilities (e.g., autonomous driving, medical care, art, politics, warfare, and societal divides), assessing the expected likelihood of occurrence, perceived risks, benefits, and overall value. We present rankings of these projections alongside visual mappings illustrating public risk-benefit tradeoffs. While many scenarios were deemed likely, participants often associated them with high risks, limited benefits, and low overall value. Across all scenarios, 96.4% of the variance in value assessment ($r^2=.964$) can be explained by perceived risks ($\beta=-.504$) and perceived benefits ($\beta=+.710$), with no significant relation to expected likelihood. Demographics and personality traits influenced perceptions of risks, benefits, and overall evaluations, underscoring the importance of increasing AI literacy and tailoring public information to diverse user needs. These findings provide actionable insights for researchers, developers, and policymakers by highlighting critical public concerns and individual factors essential to align AI development with individual values.
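For readers unfamiliar with the statistics reported here, the sketch below shows one common way standardized weights ($\beta$) and explained variance ($r^2$) can be obtained when a value rating is regressed on two predictors, risk and benefit. It is not the authors' analysis code: the closed-form two-predictor solution is assumed as the method, the function names are hypothetical, and the ratings are made-up, noise-free toy numbers chosen only so the result is easy to check.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def standardized_fit(risk, benefit, value):
    """Standardized betas and R^2 for value ~ risk + benefit.

    Uses the closed-form solution for two predictors, expressed in
    terms of the pairwise correlations.
    """
    r_vr = pearson(value, risk)
    r_vb = pearson(value, benefit)
    r_rb = pearson(risk, benefit)
    denom = 1 - r_rb ** 2
    beta_risk = (r_vr - r_vb * r_rb) / denom
    beta_benefit = (r_vb - r_vr * r_rb) / denom
    r_squared = beta_risk * r_vr + beta_benefit * r_vb
    return beta_risk, beta_benefit, r_squared


# Toy ratings (NOT study data): value is an exact linear mix of the two
# predictors, so the fit should explain essentially all of the variance.
risk = [1, 2, 3, 4, 5, 6]
benefit = [2, 1, 4, 3, 6, 5]
value = [-0.5 * r + 0.7 * b for r, b in zip(risk, benefit)]

beta_risk, beta_benefit, r_squared = standardized_fit(risk, benefit, value)
print(f"beta_risk={beta_risk:.3f} beta_benefit={beta_benefit:.3f} R^2={r_squared:.3f}")
```

In this toy setup the risk weight comes out negative and the benefit weight positive, mirroring the signs reported in the abstract; the magnitudes are an artefact of the invented numbers and carry no empirical meaning.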
