P. M. A van Ooijen

The Hidden Adversarial Vulnerabilities of Medical Federated Learning

Oct 21, 2023

Abstract:In this paper, we delve into the susceptibility of federated medical image analysis systems to adversarial attacks. Our analysis uncovers a novel exploitation avenue: using gradient information from prior global model updates, adversaries can enhance the efficiency and transferability of their attacks. Specifically, we demonstrate that single-step attacks (e.g. FGSM), when aptly initialized, can outperform the efficiency of their iterative counterparts but with reduced computational demand. Our findings underscore the need to revisit our understanding of AI security in federated healthcare settings.

Tackling Heterogeneity in Medical Federated learning via Vision Transformers

Oct 13, 2023Abstract:Optimization-based regularization methods have been effective in addressing the challenges posed by data heterogeneity in medical federated learning, particularly in improving the performance of underrepresented clients. However, these methods often lead to lower overall model accuracy and slower convergence rates. In this paper, we demonstrate that using Vision Transformers can substantially improve the performance of underrepresented clients without a significant trade-off in overall accuracy. This improvement is attributed to the Vision transformer's ability to capture long-range dependencies within the input data.

Fed-Safe: Securing Federated Learning in Healthcare Against Adversarial Attacks

Oct 12, 2023Abstract:This paper explores the security aspects of federated learning applications in medical image analysis. Current robustness-oriented methods like adversarial training, secure aggregation, and homomorphic encryption often risk privacy compromises. The central aim is to defend the network against potential privacy breaches while maintaining model robustness against adversarial manipulations. We show that incorporating distributed noise, grounded in the privacy guarantees in federated settings, enables the development of a adversarially robust model that also meets federated privacy standards. We conducted comprehensive evaluations across diverse attack scenarios, parameters, and use cases in cancer imaging, concentrating on pathology, meningioma, and glioma. The results reveal that the incorporation of distributed noise allows for the attainment of security levels comparable to those of conventional adversarial training while requiring fewer retraining samples to establish a robust model.

Exploring adversarial attacks in federated learning for medical imaging

Oct 10, 2023Abstract:Federated learning offers a privacy-preserving framework for medical image analysis but exposes the system to adversarial attacks. This paper aims to evaluate the vulnerabilities of federated learning networks in medical image analysis against such attacks. Employing domain-specific MRI tumor and pathology imaging datasets, we assess the effectiveness of known threat scenarios in a federated learning environment. Our tests reveal that domain-specific configurations can increase the attacker's success rate significantly. The findings emphasize the urgent need for effective defense mechanisms and suggest a critical re-evaluation of current security protocols in federated medical image analysis systems.

A Comparative Study of Federated Learning Models for COVID-19 Detection

Mar 28, 2023

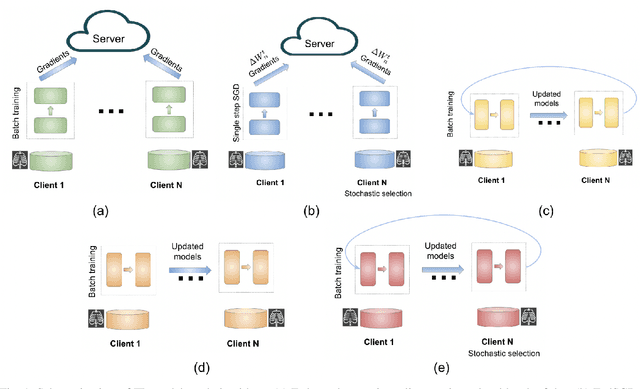

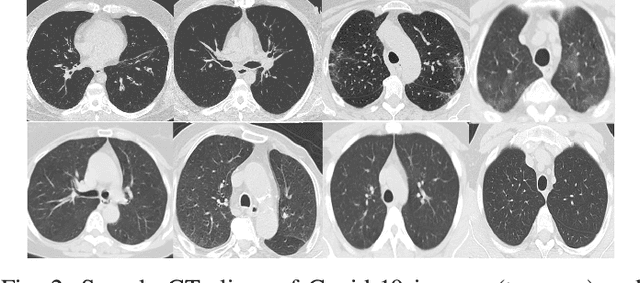

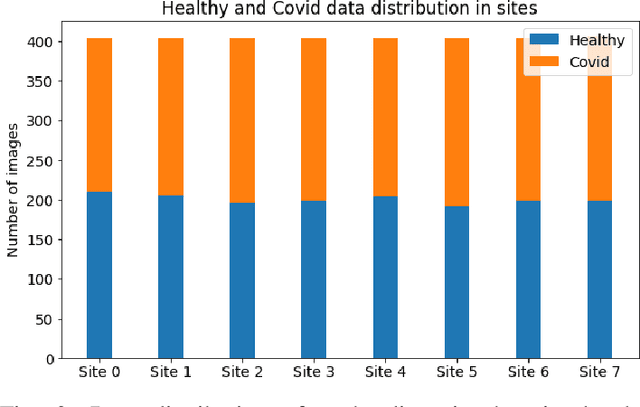

Abstract:Deep learning is effective in diagnosing COVID-19 and requires a large amount of data to be effectively trained. Due to data and privacy regulations, hospitals generally have no access to data from other hospitals. Federated learning (FL) has been used to solve this problem, where it utilizes a distributed setting to train models in hospitals in a privacy-preserving manner. Deploying FL is not always feasible as it requires high computation and network communication resources. This paper evaluates five FL algorithms' performance and resource efficiency for Covid-19 detection. A decentralized setting with CNN networks is set up, and the performance of FL algorithms is compared with a centralized environment. We examined the algorithms with varying numbers of participants, federated rounds, and selection algorithms. Our results show that cyclic weight transfer can have better overall performance, and results are better with fewer participating hospitals. Our results demonstrate good performance for detecting COVID-19 patients and might be useful in deploying FL algorithms for covid-19 detection and medical image analysis in general.

AI Technical Considerations: Data Storage, Cloud usage and AI Pipeline

Jan 20, 2022Abstract:Artificial intelligence (AI), especially deep learning, requires vast amounts of data for training, testing, and validation. Collecting these data and the corresponding annotations requires the implementation of imaging biobanks that provide access to these data in a standardized way. This requires careful design and implementation based on the current standards and guidelines and complying with the current legal restrictions. However, the realization of proper imaging data collections is not sufficient to train, validate and deploy AI as resource demands are high and require a careful hybrid implementation of AI pipelines both on-premise and in the cloud. This chapter aims to help the reader when technical considerations have to be made about the AI environment by providing a technical background of different concepts and implementation aspects involved in data storage, cloud usage, and AI pipelines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge