Oscar Mendez Maldonado

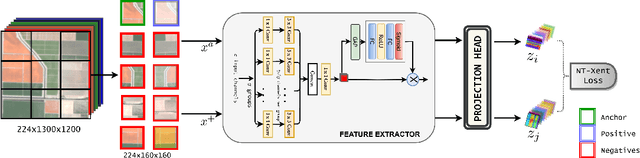

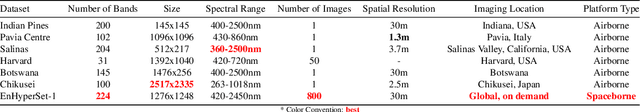

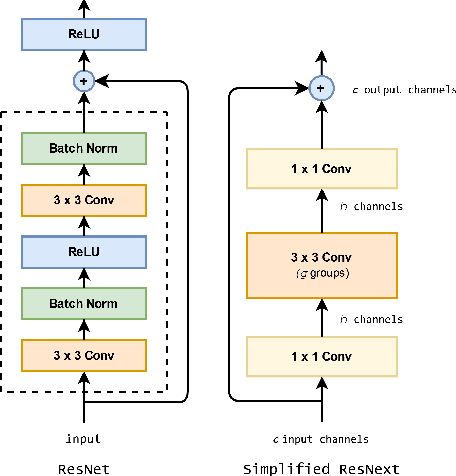

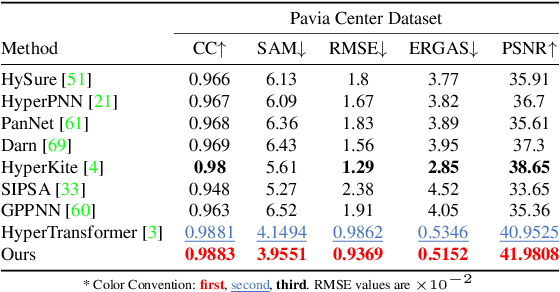

HyperKon: A Self-Supervised Contrastive Network for Hyperspectral Image Analysis

Nov 26, 2023

Abstract: The exceptional spectral resolution of hyperspectral imagery enables material insights that are not possible with RGB or multispectral images. Yet the full potential of this data is often underutilized by deep learning techniques due to the scarcity of hyperspectral-native CNN backbones. To bridge this gap, we introduce HyperKon, a self-supervised contrastive learning network designed and trained on hyperspectral data from the EnMAP Hyperspectral Satellite \cite{kaufmann2012environmental}. HyperKon uniquely leverages the high spectral continuity, range, and resolution of hyperspectral data through a spectral attention mechanism and specialized convolutional layers. We also perform a thorough ablation study on different kinds of layers, showing their performance in understanding hyperspectral layers. HyperKon achieves an outstanding 98% Top-1 retrieval accuracy and outperforms traditional RGB-trained backbones in hyperspectral pan-sharpening tasks. Additionally, in hyperspectral image classification, HyperKon surpasses state-of-the-art methods, indicating a paradigm shift in hyperspectral image analysis and underscoring the importance of hyperspectral-native backbones.
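The abstract reports Top-1 retrieval accuracy without describing the evaluation protocol. As a rough illustration only, the sketch below shows one common way such a metric is computed for contrastive embeddings: each query embedding should retrieve the key embedding at the same index (for example, another view of the same patch) as its nearest neighbour under cosine similarity. The function names and toy vectors are assumptions made for this example and are not taken from the paper.

```python
import math

def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def top1_retrieval_accuracy(queries, keys):
    """Fraction of queries whose most similar key sits at the same index."""
    hits = 0
    for i, query in enumerate(queries):
        sims = [cosine_similarity(query, key) for key in keys]
        best = max(range(len(keys)), key=lambda j: sims[j])
        hits += int(best == i)
    return hits / len(queries)

# Toy embeddings (made up for illustration, not taken from the paper).
queries = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
keys = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.0], [0.7, 0.0, 0.3]]
accuracy = top1_retrieval_accuracy(queries, keys)
print(f"Top-1 retrieval accuracy: {accuracy:.2f}")
```

In practice the embeddings would come from the trained backbone and the similarity search would be vectorised; the plain-Python loops here only make the definition explicit.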

G-CMP: Graph-enhanced Contextual Matrix Profile for unsupervised anomaly detection in sensor-based remote health monitoring

Nov 29, 2022

Abstract: Sensor-based remote health monitoring is used in industrial, urban and healthcare settings to monitor ongoing operation of equipment and human health. An important aim is to intervene early if anomalous events or adverse health is detected. In the wild, these anomaly detection approaches are challenged by noise, label scarcity, high dimensionality, explainability and wide variability in operating environments. The Contextual Matrix Profile (CMP) is a configurable 2-dimensional version of the Matrix Profile (MP) that uses the distance matrix of all subsequences of a time series to discover patterns and anomalies. The CMP is shown to enhance the effectiveness of the MP and other SOTA methods at detecting, visualising and interpreting true anomalies in noisy real-world data from different domains. It excels at zooming out and identifying temporal patterns at configurable time scales. However, the CMP does not address cross-sensor information, and cannot scale to high-dimensional data. We propose a novel, self-supervised graph-based approach for temporal anomaly detection that works on context graphs generated from the CMP distance matrix. The learned graph embeddings encode the anomalous nature of a time context. In addition, we evaluate other graph outlier algorithms for the same task. Because our pipeline is modular, graph construction, generation of graph embeddings, and pattern recognition logic can all be chosen based on the specific pattern detection application. We verified the effectiveness of graph-based anomaly detection and compared it with the CMP and three state-of-the-art methods on two real-world healthcare datasets with different anomalies. Our proposed method demonstrated better recall, alert rate and generalisability.
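To make the "distance matrix of all subsequences" concrete, here is a minimal brute-force sketch of the plain Matrix Profile that the CMP builds on. It assumes z-normalised Euclidean distance and an exclusion zone of half the window length, both common conventions rather than details stated in the abstract. It does not reproduce the CMP or the proposed graph-based method, and the toy series is invented for illustration.

```python
import math

def znorm(window):
    """Z-normalise a window; constant windows map to all zeros."""
    mean = sum(window) / len(window)
    std = math.sqrt(sum((x - mean) ** 2 for x in window) / len(window))
    if std == 0:
        return [0.0] * len(window)
    return [(x - mean) / std for x in window]

def distance_matrix(series, m):
    """Pairwise distances between all z-normalised subsequences of length m."""
    subs = [znorm(series[i:i + m]) for i in range(len(series) - m + 1)]
    return [[math.dist(a, b) for b in subs] for a in subs]

def matrix_profile(dist, m):
    """Row-wise minimum of the distance matrix, skipping trivial matches."""
    excl = m // 2  # assumed exclusion zone around the diagonal
    n = len(dist)
    return [
        min(dist[i][j] for j in range(n) if abs(i - j) > excl)
        for i in range(n)
    ]

# Toy periodic signal with one injected spike at index 13 (illustrative only).
series = [0.0, 1.0, 0.0, -1.0] * 6
series[13] = 5.0
m = 4
dist = distance_matrix(series, m)
profile = matrix_profile(dist, m)
# The subsequence with the largest profile value is the top anomaly candidate.
discord = max(range(len(profile)), key=lambda i: profile[i])
print("most anomalous subsequence starts at index", discord)
```

This brute-force version is quadratic in the number of subsequences and is meant for reading, not for real sensor streams.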

Translating Images into Maps

Oct 03, 2021

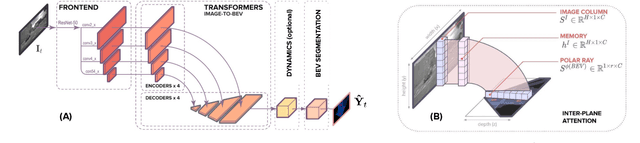

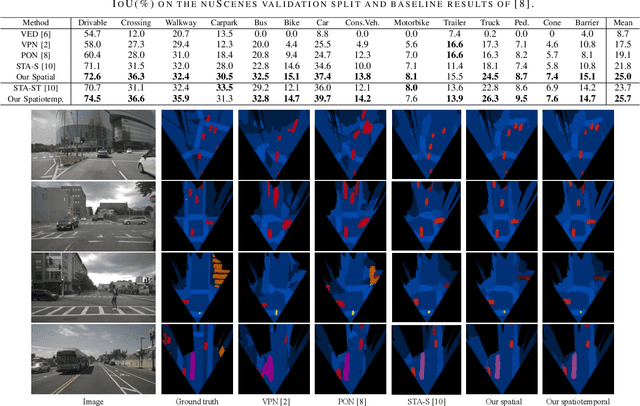

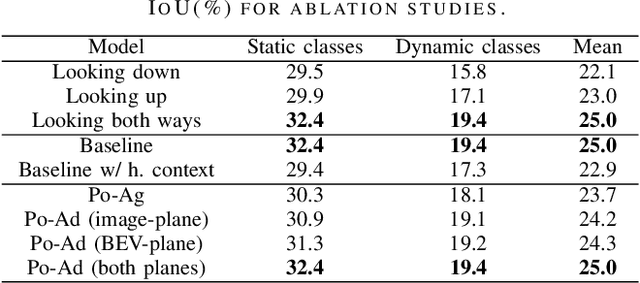

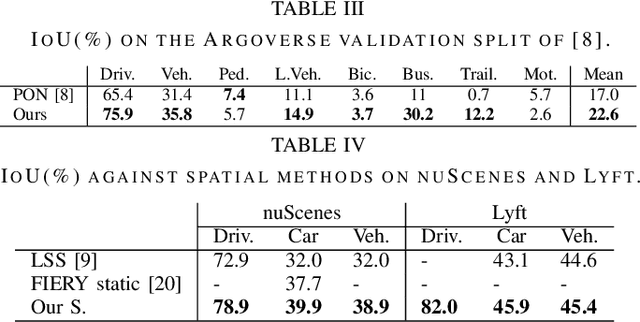

Abstract: We approach instantaneous mapping, converting images to a top-down view of the world, as a translation problem. We show how a novel form of transformer network can be used to map from images and video directly to an overhead map or bird's-eye-view (BEV) of the world, in a single end-to-end network. We assume a one-to-one correspondence between a vertical scanline in the image and rays passing through the camera location in an overhead map. This lets us formulate map generation from an image as a set of sequence-to-sequence translations. Posing the problem as translation allows the network to use the context of the image when interpreting the role of each pixel. This constrained formulation, based upon a strong physical grounding of the problem, leads to a restricted transformer network that is convolutional in the horizontal direction only. The structure allows us to make efficient use of data when training, and obtains state-of-the-art results for instantaneous mapping on three large-scale datasets, including a 15% and 30% relative gain against existing best performing methods on the nuScenes and Argoverse datasets, respectively. We make our code available at https://github.com/avishkarsaha/translating-images-into-maps.
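The scanline-to-ray correspondence can be illustrated with basic pinhole geometry. The sketch below assumes an ideal pinhole camera with horizontal focal length `fx` and principal point `cx` in pixels (hypothetical parameters, not given in the abstract): every pixel in image column `u` lies on a ray with a fixed azimuth in the overhead map, so a sequence of values along that ray can be placed in BEV coordinates. It covers only the geometry; the translation from scanline to ray is what the paper's transformer learns and is not reproduced here.

```python
import math

def column_to_azimuth(u, fx, cx):
    """Azimuth (radians) of the ground-plane ray seen by image column u."""
    return math.atan2(u - cx, fx)

def polar_ray_to_bev(u, radial_distances, fx, cx):
    """Place samples along the ray of column u into BEV (x, z) coordinates.

    x is lateral offset and z is forward distance from the camera.
    """
    theta = column_to_azimuth(u, fx, cx)
    return [(r * math.sin(theta), r * math.cos(theta)) for r in radial_distances]

# Hypothetical intrinsics for a 640-pixel-wide image.
fx, cx = 500.0, 320.0
centre_ray = polar_ray_to_bev(320, [5.0, 10.0], fx, cx)
side_ray = polar_ray_to_bev(820, [10.0], fx, cx)
print("centre column:", centre_ray)
print("column at cx + fx:", side_ray)
```

The centre column maps to points straight ahead of the camera, while a column offset by `fx` pixels maps to a ray at 45 degrees.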
