Ori Nizan

FPGAN-Control: A Controllable Fingerprint Generator for Training with Synthetic Data

Oct 29, 2023

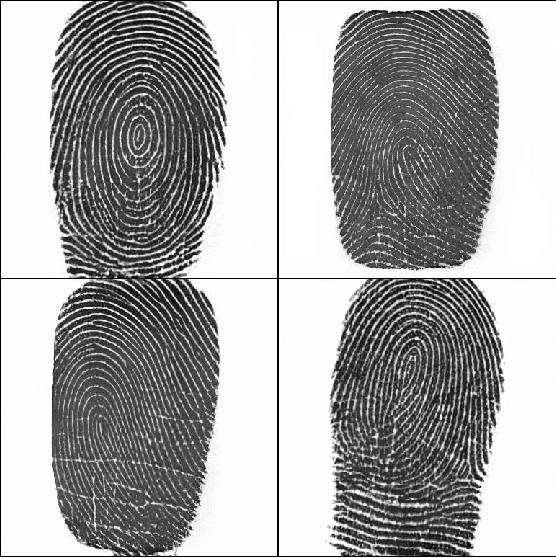

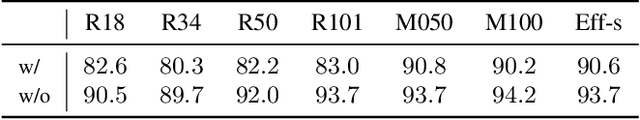

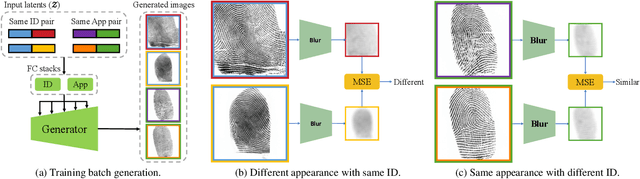

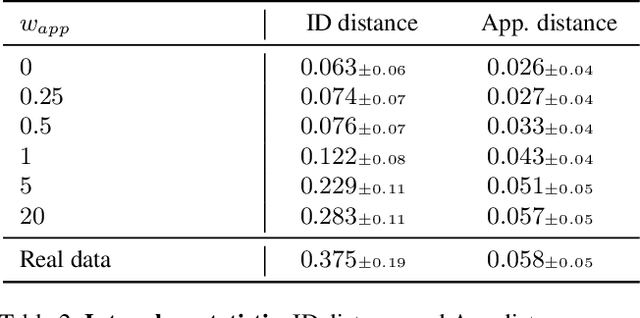

Abstract: Training fingerprint recognition models using synthetic data has recently gained increased attention in the biometric community as it alleviates the dependency on sensitive personal data. Existing approaches for fingerprint generation are limited in their ability to generate diverse impressions of the same finger, a key property for providing effective data for training recognition models. To address this gap, we present FPGAN-Control, an identity-preserving image generation framework that enables control over the appearance of generated fingerprint images (e.g., fingerprint type, acquisition device, pressure level). We introduce a novel appearance loss that encourages disentanglement between the fingerprint's identity and appearance properties. In our experiments, we used the publicly available NIST SD302 (N2N) dataset for training the FPGAN-Control model. We demonstrate the merits of FPGAN-Control, both quantitatively and qualitatively, in terms of identity preservation level, degree of appearance control, and low synthetic-to-real domain gap. Finally, training recognition models using only synthetic datasets generated by FPGAN-Control leads to recognition accuracies that are on par with or even surpass those of models trained using real data. To the best of our knowledge, this is the first work to demonstrate this.

k-NNN: Nearest Neighbors of Neighbors for Anomaly Detection

May 28, 2023

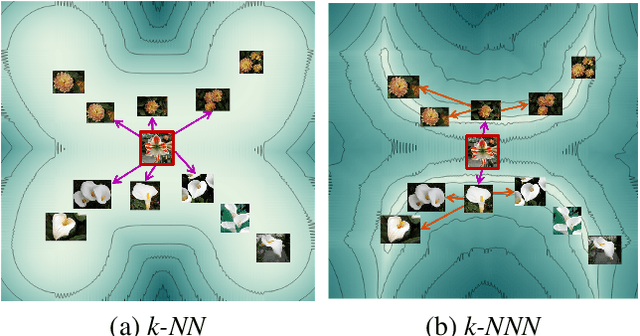

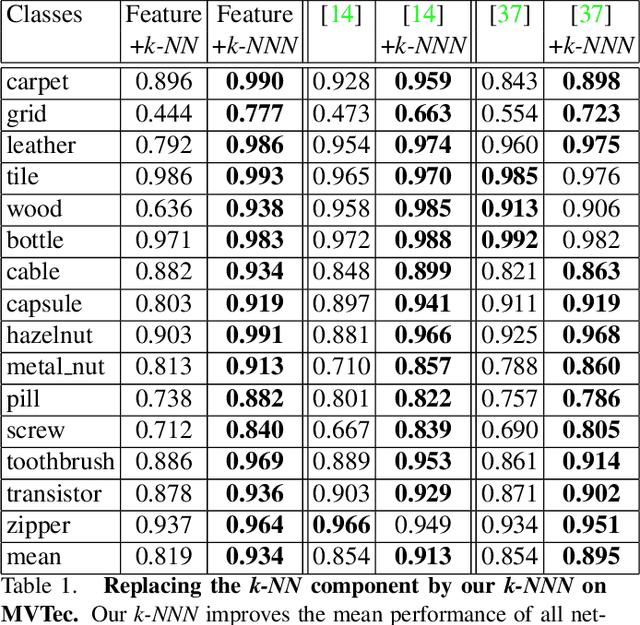

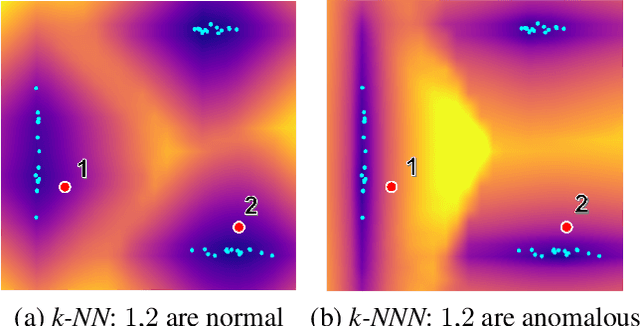

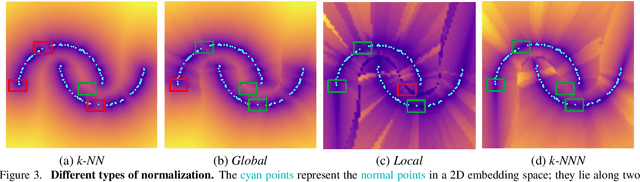

Abstract: Anomaly detection aims at identifying images that deviate significantly from the norm. We focus on algorithms that embed the normal training examples in an embedding space and, when given a test image, detect anomalies based on the distance of its features to the k-nearest training neighbors. We propose a new operator that takes into account the varying structure and importance of the features in the embedding space. Interestingly, this is done by taking into account not only the nearest neighbors, but also the neighbors of these neighbors (k-NNN). We show that by simply replacing the nearest neighbor component in existing algorithms with our k-NNN operator, while leaving the rest of the algorithms untouched, each algorithm's own results are improved. This is the case both for common homogeneous datasets, such as flowers or nuts of a specific type, and for more diverse datasets.
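For orientation, the baseline that the abstract builds on (scoring a test embedding by its distance to the k nearest normal training embeddings) can be sketched as follows. This is a minimal illustration of plain k-NN scoring only, not the k-NNN operator, whose details the abstract does not give. The function name `knn_anomaly_score`, the use of the mean Euclidean distance, and the toy 2-D embeddings are all assumptions made for the example.

```python
import math


def knn_anomaly_score(train, query, k=3):
    """Mean Euclidean distance from `query` to its k nearest training embeddings.

    Hypothetical helper: a higher score means the query lies farther from
    the normal training examples and is more likely to be an anomaly.
    """
    if not 1 <= k <= len(train):
        raise ValueError("k must be between 1 and the number of training points")
    # Distances to every training embedding, smallest first.
    dists = sorted(math.dist(query, t) for t in train)
    return sum(dists[:k]) / k


# Toy 2-D embeddings of "normal" examples (made-up values for illustration).
normal = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (0.05, 0.05)]

near = knn_anomaly_score(normal, (0.05, 0.0), k=3)  # close to the normal cluster
far = knn_anomaly_score(normal, (2.0, 2.0), k=3)    # far from the normal cluster
print(near < far)
```

According to the abstract, k-NNN replaces only this nearest-neighbor component with one that also considers the neighbors of the neighbors, leaving the rest of the host algorithm unchanged.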

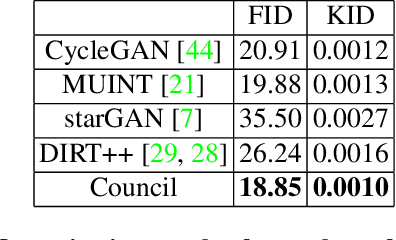

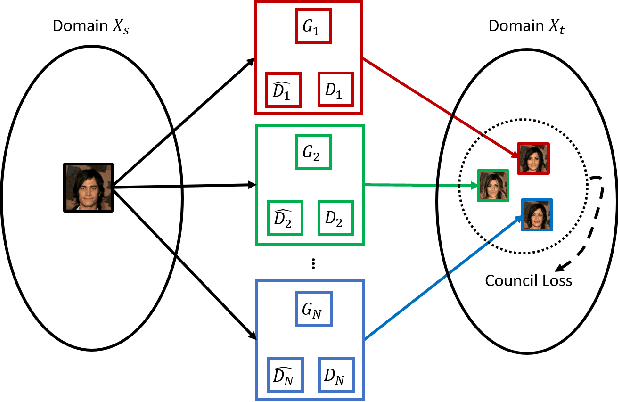

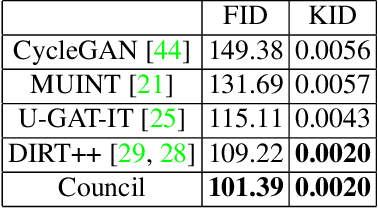

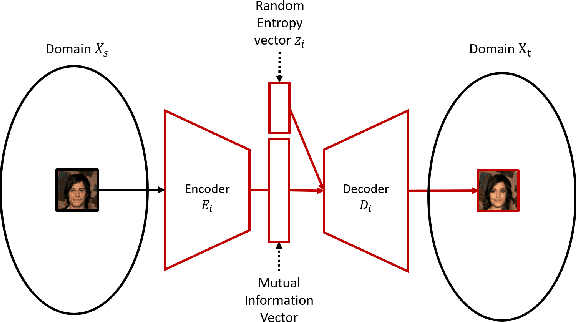

Breaking the cycle -- Colleagues are all you need

Nov 24, 2019

Abstract: This paper proposes a novel approach to performing image-to-image translation between unpaired domains. Rather than relying on a cycle constraint, our method takes advantage of collaboration between various GANs. This results in a multi-modal method, in which multiple optional and diverse images are produced for a given image. Our model addresses some of the shortcomings of classical GANs: (1) It is able to remove large objects, such as glasses. (2) Since it does not need to support the cycle constraint, no irrelevant traces of the input are left on the generated image. (3) It manages to translate between domains that require large shape modifications. Our results are shown to outperform those generated by state-of-the-art methods for several challenging applications on commonly used datasets, both qualitatively and quantitatively.
