Omar ElSamadisy

SECRM-2D: RL-Based Efficient and Comfortable Route-Following Autonomous Driving with Analytic Safety Guarantees

Jul 23, 2024

Abstract: Over the last decade, there has been increasing interest in autonomous driving systems. Reinforcement Learning (RL) shows great promise for training autonomous driving controllers, as it can directly optimize a combination of criteria such as efficiency, comfort, and stability. However, RL-based controllers typically offer no safety guarantees, making their readiness for real deployment questionable. In this paper, we propose SECRM-2D (the Safe, Efficient and Comfortable RL-based driving Model with Lane-Changing), an RL autonomous driving controller (both longitudinal and lateral) that balances optimization of efficiency and comfort and follows a fixed route, while being subject to hard analytic safety constraints. These safety constraints are derived from the criterion that the follower vehicle must have sufficient headway to avoid a crash if the leader vehicle brakes suddenly. We evaluate SECRM-2D against several learning and non-learning baselines in simulated test scenarios, including freeway driving, exiting, merging, and emergency braking. Our results confirm that representative previously published RL AV controllers may crash in both training and testing, even if they are optimizing a safety objective. By contrast, our controller SECRM-2D avoids crashes during both training and testing, improves over the baselines in measures of efficiency and comfort, and follows the prescribed route more faithfully. In addition, we obtain a good theoretical understanding of the longitudinal steady state of a collection of SECRM-2D vehicles.
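The headway criterion described in the abstract can be illustrated with a minimal sketch. This is not the SECRM-2D formulation itself, which the abstract does not spell out; it assumes a textbook stopping-distance model with constant maximum decelerations and a fixed follower reaction time. The function names and parameters (`stopping_distance`, `is_gap_safe`, `max_safe_speed`, `reaction_time`, `b_follower`, `b_leader`) are hypothetical and chosen only for illustration.

```python
import math


def stopping_distance(speed, max_decel, reaction_time=0.0):
    """Distance covered before standstill: reaction travel plus braking travel.

    Assumes constant deceleration `max_decel` (m/s^2, positive).
    """
    return speed * reaction_time + speed ** 2 / (2.0 * max_decel)


def is_gap_safe(gap, v_follower, v_leader, b_follower, b_leader, reaction_time):
    """True if the follower can stop behind the leader when the leader brakes hard.

    Illustrative assumption: the leader brakes at `b_leader` immediately, and the
    follower brakes at `b_follower` after `reaction_time` seconds.
    """
    leader_travel = stopping_distance(v_leader, b_leader)
    follower_travel = stopping_distance(v_follower, b_follower, reaction_time)
    return gap + leader_travel >= follower_travel


def max_safe_speed(gap, v_leader, b_follower, b_leader, reaction_time):
    """Largest follower speed that still satisfies `is_gap_safe`.

    Solves v*tau + v^2 / (2*b) = gap + v_leader^2 / (2*b_leader) for v >= 0.
    """
    budget = gap + stopping_distance(v_leader, b_leader)
    if budget <= 0.0:
        return 0.0
    bt = b_follower * reaction_time
    return -bt + math.sqrt(bt ** 2 + 2.0 * b_follower * budget)


if __name__ == "__main__":
    # Hypothetical numbers: 20 m gap, leader at 20 m/s, 4 m/s^2 braking, 1 s reaction.
    v_max = max_safe_speed(gap=20.0, v_leader=20.0, b_follower=4.0,
                           b_leader=4.0, reaction_time=1.0)
    print(f"max safe follower speed: {v_max:.2f} m/s")
```

In a sketch like this, a hard constraint would be applied by clipping whatever speed or acceleration the RL policy requests so that the follower never exceeds `max_safe_speed`; whether SECRM-2D enforces its constraints this way is not stated in the abstract.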

Bilateral Deep Reinforcement Learning Approach for Better-than-human Car Following Model

Mar 03, 2022

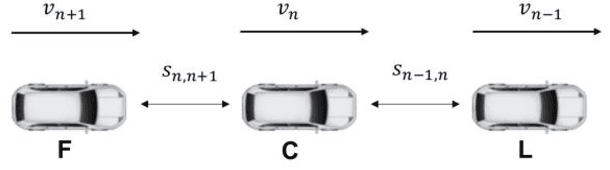

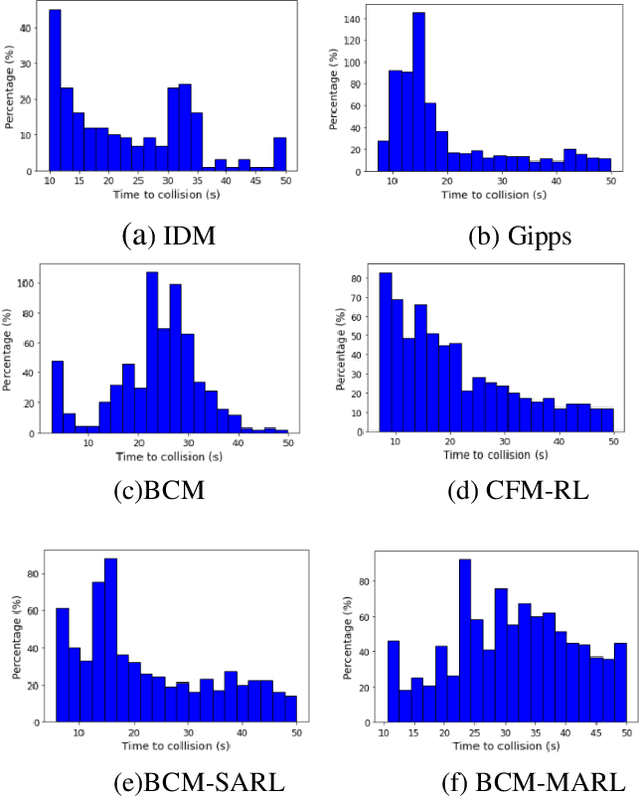

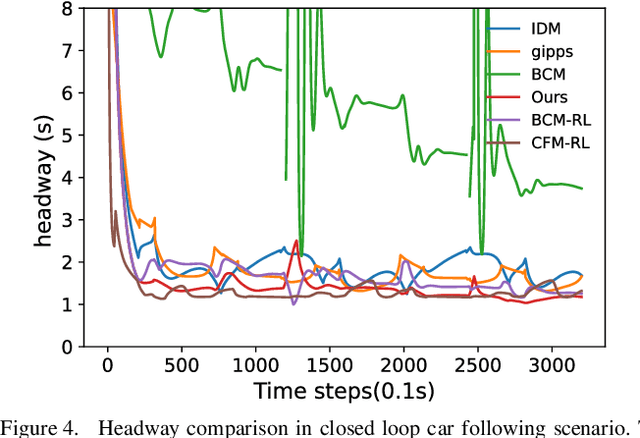

Abstract: In the coming years and decades, autonomous vehicles (AVs) will become increasingly prevalent, offering new opportunities for safer and more convenient travel and potentially smarter traffic control methods exploiting automation and connectivity. Car following is a prime function in autonomous driving. Car following based on reinforcement learning (RL) has received attention in recent years with the goal of learning and achieving performance levels comparable to humans. Most existing RL methods, however, model car following as a unilateral problem, sensing only the vehicle ahead. Recent work by Wang and Horn [16] has shown that bilateral car following, which considers both the vehicle ahead and the vehicle behind, exhibits better system stability. In this paper we hypothesize that bilateral car following can be learned using RL alongside other goals such as efficiency maximization, jerk minimization, and safety rewards, leading to a learned model that outperforms human driving. We propose a Deep Reinforcement Learning (DRL) framework for car following control that integrates bilateral information into both the state and the reward function, based on the bilateral control model (BCM). Furthermore, we use a decentralized multi-agent reinforcement learning framework to generate the corresponding control action for each agent. Our simulation results demonstrate that our learned policy is better than the human driving policy in terms of (a) inter-vehicle headways, (b) average speed, (c) jerk, (d) time to collision (TTC), and (e) string stability.
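To make the bilateral idea and the TTC metric concrete, here is a minimal sketch. It assumes the commonly cited linear form of bilateral control, in which acceleration depends on the difference between the front and rear gaps and on the relative speeds of both neighbors. The gains `k_d` and `k_v`, the function names, and the sample numbers are hypothetical; the abstract does not give the exact state or reward definitions used in the paper.

```python
import math


def time_to_collision(gap_front, v_ego, v_front):
    """Time until the ego vehicle reaches the leader at current speeds.

    Returns math.inf when the ego vehicle is not closing in on the leader.
    """
    closing_speed = v_ego - v_front
    if closing_speed <= 0.0:
        return math.inf
    return gap_front / closing_speed


def bilateral_acceleration(gap_front, gap_rear, v_ego, v_front, v_rear,
                           k_d=0.1, k_v=0.2):
    """Linear bilateral control sketch (gains k_d, k_v are illustrative).

    Pushes the ego vehicle toward the midpoint between its neighbors and
    toward the average of their speeds.
    """
    spacing_term = k_d * (gap_front - gap_rear)
    speed_term = k_v * ((v_front - v_ego) + (v_rear - v_ego))
    return spacing_term + speed_term


if __name__ == "__main__":
    a = bilateral_acceleration(gap_front=30.0, gap_rear=20.0,
                               v_ego=25.0, v_front=26.0, v_rear=23.0)
    ttc = time_to_collision(gap_front=30.0, v_ego=25.0, v_front=20.0)
    print(f"bilateral acceleration: {a:.2f} m/s^2, TTC: {ttc:.1f} s")
```

A unilateral controller would drop the `gap_rear` and `v_rear` terms; the bilateral form uses both neighbors, which is the property the abstract associates with improved system stability.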
