Niloofar Shadab

A Systems-Theoretical Formalization of Closed Systems

Nov 16, 2023

Abstract: There is a lack of formalism for some key foundational concepts in systems engineering. One of the most recently acknowledged deficits is the inadequacy of systems engineering practices for engineering intelligent systems. In our previous works, we proposed that closed systems precepts could be used to accomplish a required paradigm shift for the systems engineering of intelligent systems. However, to enable such a shift, formal foundations for closed systems precepts that expand the theory of systems engineering are needed. The concept of closure is a critical concept in the formalism underlying closed systems precepts. In this paper, we provide formal, systems- and information-theoretic definitions of closure to identify and distinguish different types of closed systems. Then, we assert a mathematical framework to evaluate the subjective formation of the boundaries and constraints of such systems. Finally, we argue that engineering an intelligent system can benefit from appropriate closed and open systems paradigms on multiple levels of abstraction of the system. In the main, this framework will provide the necessary fundamentals to aid in systems engineering of intelligent systems.

Core and Periphery as Closed-System Precepts for Engineering General Intelligence

Aug 04, 2022

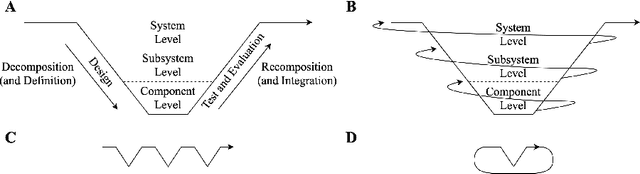

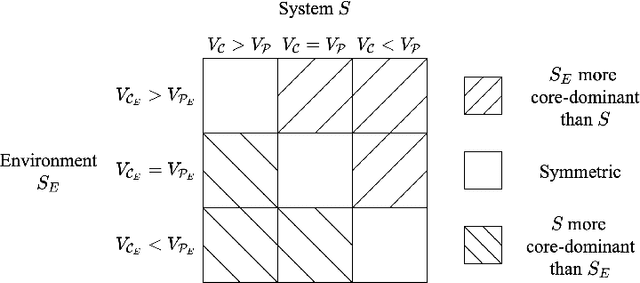

Abstract: Engineering methods are centered around traditional notions of decomposition and recomposition that rely on partitioning the inputs and outputs of components to allow for component-level properties to hold after their composition. In artificial intelligence (AI), however, systems are often expected to influence their environments, and, by way of their environments, to influence themselves. Thus, it is unclear if an AI system's inputs will be independent of its outputs, and, therefore, if AI systems can be treated as traditional components. This paper posits that engineering general intelligence requires new general systems precepts, termed the core and periphery, and explores their theoretical uses. The new precepts are elaborated using abstract systems theory and the Law of Requisite Variety. By using the presented material, engineers can better understand the general character of regulating the outcomes of AI to achieve stakeholder needs and how the general systems nature of embodiment challenges traditional engineering practice.

Towards an Interface Description Template for AI-enabled Systems

Jul 13, 2020

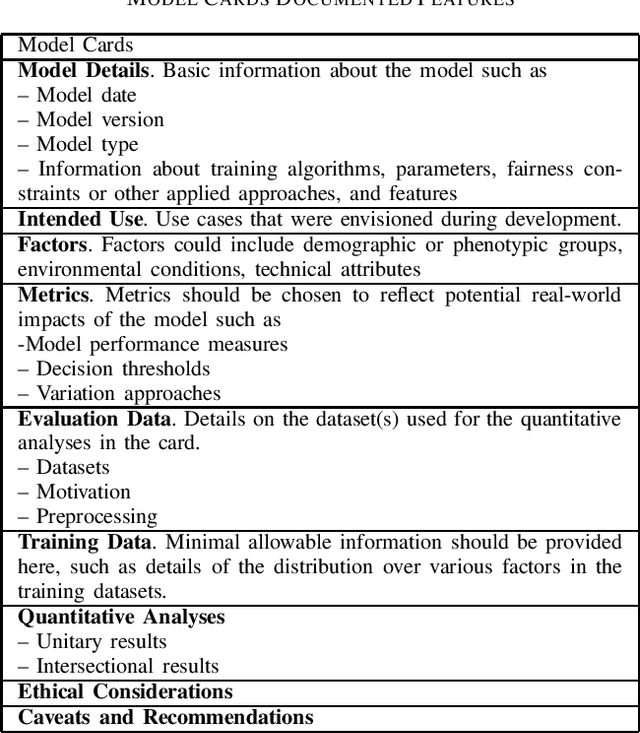

Abstract: Reuse is a common system architecture approach that seeks to instantiate a system architecture with existing components. However, reusing components with AI capabilities might introduce new risks as there is currently no framework that guides the selection of necessary information to assess their portability to operate in a system different than the one for which the component was originally purposed. We know from SW-intensive systems that AI algorithms are generally fragile and behave unexpectedly to changes in context and boundary conditions. The question we address in this paper is, what type of information should be captured in the Interface Control Document (ICD) of an AI-enabled system or component to assess its compatibility with a system for which it was not designed originally. We present ongoing work on establishing an interface description template that captures the main information of an AI-enabled component to facilitate its adequate reuse across different systems and operational contexts. Our work is inspired by Google's Model Card concept, which was developed with the same goal but focused on the reusability of AI algorithms. We extend that concept to address system-level autonomy capabilities of AI-enabled cyber-physical systems.
