Nidhi Munikote

The American Sign Language Knowledge Graph: Infusing ASL Models with Linguistic Knowledge

Nov 06, 2024

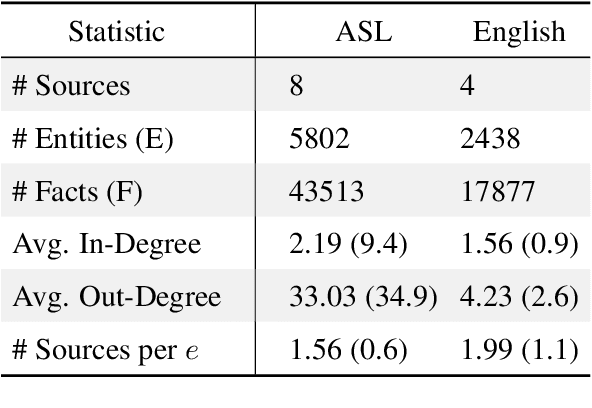

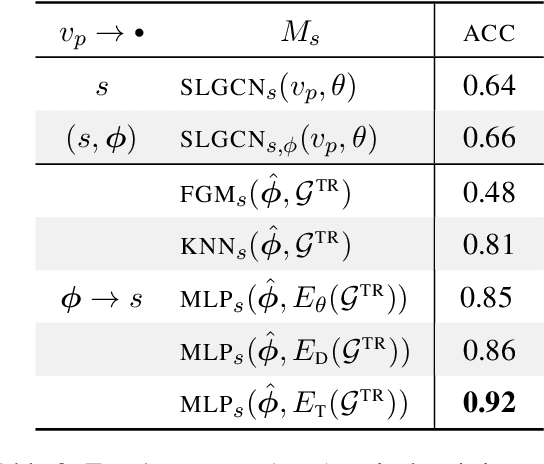

Abstract: Language models for American Sign Language (ASL) could make language technologies substantially more accessible to those who sign. To train models on tasks such as isolated sign recognition (ISR) and ASL-to-English translation, datasets provide annotated video examples of ASL signs. To facilitate the generalizability and explainability of these models, we introduce the American Sign Language Knowledge Graph (ASLKG), compiled from twelve sources of expert linguistic knowledge. We use the ASLKG to train neuro-symbolic models for three ASL understanding tasks, achieving accuracies of 91% on ISR, 14% on predicting the semantic features of unseen signs, and 36% on classifying the topic of YouTube-ASL videos.

Comparing Quantum Encoding Techniques

Oct 11, 2024

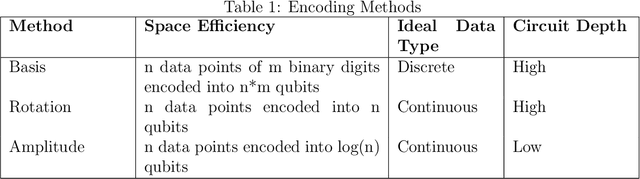

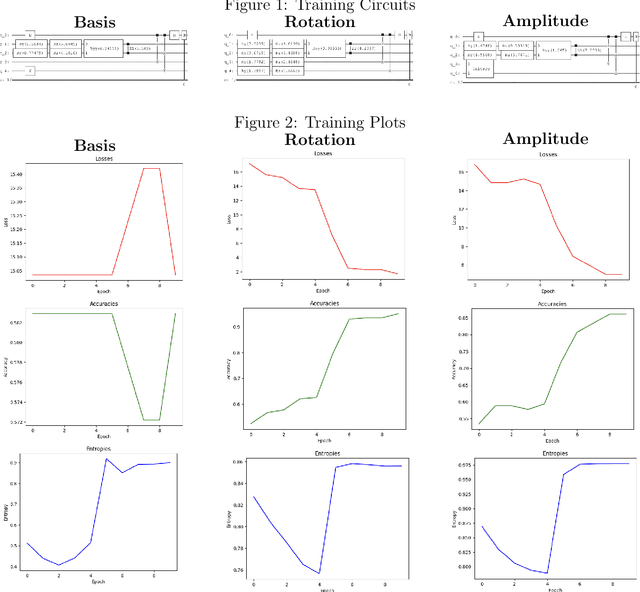

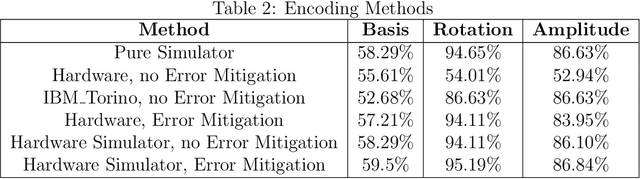

Abstract: As quantum computers become more capable, the range of their possible applications grows. For example, quantum techniques are being integrated with classical neural networks to perform machine learning. For this use, or for any other widespread use such as quantum chemistry simulations or cryptographic applications, classical data must be converted into quantum states through quantum encoding. There are three fundamental encoding methods: basis, amplitude, and rotation, as well as several proposed combinations. This study explores the encoding methods, specifically in the context of hybrid quantum-classical machine learning. Using the QuClassi quantum neural network architecture to perform binary classification of the '3' and '6' digits from the MNIST dataset, this study obtains several metrics, including accuracy, entropy, loss, and resistance to noise, while considering resource usage and computational complexity to compare the three main encoding methods.
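
The three encodings named in the abstract can be illustrated with a small statevector sketch. This is not the study's code and does not use QuClassi; it is a plain NumPy illustration under common textbook definitions. The function names (`basis_encode`, `amplitude_encode`, `rotation_encode`) are hypothetical, and the rotation variant assumes one RY rotation per feature, which is only one of several conventions.

```python
import numpy as np


def basis_encode(bits):
    """Basis encoding: map a bit string to the computational basis state |b1 b2 ... bn>."""
    index = int("".join(str(b) for b in bits), 2)
    state = np.zeros(2 ** len(bits), dtype=complex)
    state[index] = 1.0
    return state


def amplitude_encode(x):
    """Amplitude encoding: store a real vector as the amplitudes of a normalized state.

    The vector is zero-padded to the next power of two, so n features
    need about log2(n) qubits.
    """
    x = np.asarray(x, dtype=float)
    n_qubits = max(1, (len(x) - 1).bit_length())
    padded = np.zeros(2 ** n_qubits, dtype=complex)
    padded[: len(x)] = x
    norm = np.linalg.norm(padded)
    if norm == 0:
        raise ValueError("cannot amplitude-encode the zero vector")
    return padded / norm


def rotation_encode(angles):
    """Rotation encoding (assumed RY convention): one qubit per feature.

    Each qubit is prepared as RY(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>,
    and the full state is the tensor product of the single-qubit states.
    """
    state = np.array([1.0 + 0.0j])
    for theta in angles:
        qubit = np.array([np.cos(theta / 2), np.sin(theta / 2)], dtype=complex)
        state = np.kron(state, qubit)
    return state


if __name__ == "__main__":
    print(basis_encode([1, 0]))
    print(amplitude_encode([3.0, 4.0]))
    print(rotation_encode([0.0, np.pi]))
```

The sketch makes the resource trade-off visible: basis and rotation encoding use one qubit per bit or feature, while amplitude encoding packs n features into roughly log2(n) qubits at the cost of a more involved state preparation on real hardware.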
