Nicola Tosi

Physics-based machine learning for mantle convection simulations

May 21, 2025

Abstract:Mantle convection simulations are an essential tool for understanding how rocky planets evolve. However, the poorly known input parameters to these simulations, the non-linear dependence of transport properties on pressure and temperature, and the long integration times in excess of several billion years all pose a computational challenge for numerical solvers. We propose a physics-based machine learning approach that predicts creeping flow velocities as a function of temperature while conserving mass, thereby bypassing the numerical solution of the Stokes problem. A finite-volume solver then uses the predicted velocities to advect and diffuse the temperature field to the next time-step, enabling autoregressive rollout at inference. For training, our model requires temperature-velocity snapshots from a handful of simulations (94). We consider mantle convection in a two-dimensional rectangular box with basal and internal heating, pressure- and temperature-dependent viscosity. Overall, our model is up to 89 times faster than the numerical solver. We also show the importance of different components in our convolutional neural network architecture such as mass conservation, learned paddings on the boundaries, and loss scaling for the overall rollout performance. Finally, we test our approach on unseen scenarios to demonstrate some of its strengths and weaknesses.
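
The abstract does not say how mass conservation is built into the network. One common way to guarantee it in 2D, sketched here purely as an assumption, is to have the model output a scalar stream function at cell corners and derive face velocities from its differences; the discrete divergence then vanishes by construction. The function names and the analytic stand-in for the network output are hypothetical.

```python
import math

def velocities_from_stream_function(psi, dx, dy):
    """Face velocities on a staggered grid from a corner-based stream function.

    psi has shape (nx + 1) x (ny + 1). Returns u on vertical faces
    ((nx + 1) x ny) and v on horizontal faces (nx x (ny + 1)).
    """
    nx = len(psi) - 1
    ny = len(psi[0]) - 1
    # u = d(psi)/dy, evaluated along each vertical cell face
    u = [[(psi[i][j + 1] - psi[i][j]) / dy for j in range(ny)]
         for i in range(nx + 1)]
    # v = -d(psi)/dx, evaluated along each horizontal cell face
    v = [[-(psi[i + 1][j] - psi[i][j]) / dx for j in range(ny + 1)]
         for i in range(nx)]
    return u, v

def max_divergence(u, v, dx, dy):
    """Largest absolute finite-volume divergence over all cells."""
    nx = len(v)
    ny = len(u[0])
    return max(
        abs((u[i + 1][j] - u[i][j]) / dx + (v[i][j + 1] - v[i][j]) / dy)
        for i in range(nx)
        for j in range(ny)
    )

# Hypothetical stand-in for a network output: one convection cell in a box.
nx, ny = 16, 8
dx, dy = 1.0 / nx, 1.0 / ny
psi = [[math.sin(math.pi * i / nx) * math.sin(math.pi * j / ny)
        for j in range(ny + 1)]
       for i in range(nx + 1)]

u, v = velocities_from_stream_function(psi, dx, dy)
div = max_divergence(u, v, dx, dy)
print(f"max |div u| = {div:.2e}")
```

Whatever values the stream function takes, the divergence stays at round-off level, so a finite-volume advection step driven by these velocities would not create or destroy mass.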

Accelerating the discovery of steady-states of planetary interior dynamics with machine learning

Aug 30, 2024

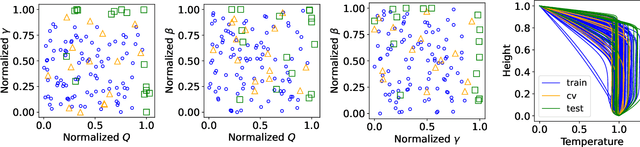

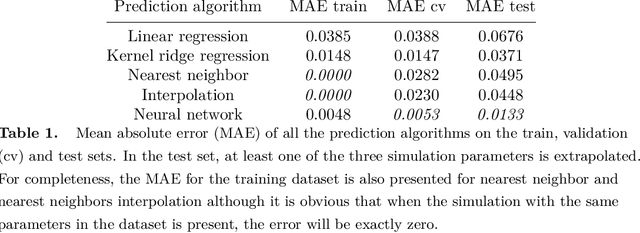

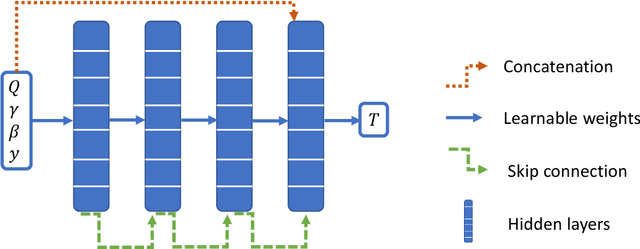

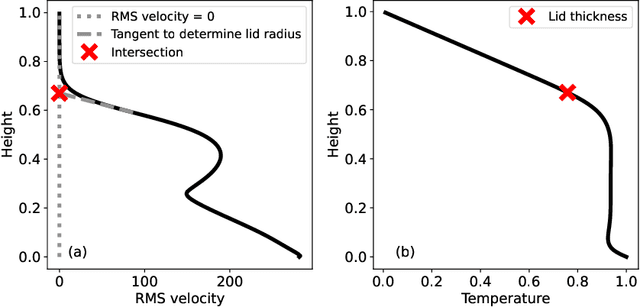

Abstract:Simulating mantle convection often requires reaching a computationally expensive steady-state, crucial for deriving scaling laws for thermal and dynamical flow properties and benchmarking numerical solutions. The strong temperature dependence of the rheology of mantle rocks causes viscosity variations of several orders of magnitude, leading to a slow-evolving stagnant lid where heat conduction dominates, overlying a rapidly-evolving and strongly convecting region. Time-stepping methods, while effective for fluids with constant viscosity, are hindered by the Courant criterion, which restricts the time step based on the system's maximum velocity and grid size. Consequently, achieving steady-state requires a large number of time steps due to the disparate time scales governing the stagnant and convecting regions. We present a concept for accelerating mantle convection simulations using machine learning. We generate a dataset of 128 two-dimensional simulations with mixed basal and internal heating, and pressure- and temperature-dependent viscosity. We train a feedforward neural network on 97 simulations to predict steady-state temperature profiles. These can then be used to initialize numerical time stepping methods for different simulation parameters. Compared to typical initializations, the number of time steps required to reach steady-state is reduced by a median factor of 3.75. The benefit of this method lies in requiring very few simulations to train on, providing a solution with no prediction error as we initialize a numerical method, and posing minimal computational overhead at inference time. We demonstrate the effectiveness of our approach and discuss the potential implications for accelerated simulations for advancing mantle convection research.
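
The Courant restriction mentioned above can be illustrated with a minimal sketch. The Courant number of 0.5, the grid spacing, and the velocities are illustrative, non-dimensional assumptions and are not taken from the paper.

```python
import math

def courant_time_step(max_speed, dx, courant_number=0.5):
    """Largest advective time step allowed by the Courant criterion."""
    if max_speed <= 0.0:
        raise ValueError("max_speed must be positive")
    return courant_number * dx / max_speed

def steps_to_cover(t_end, dt):
    """Number of fixed-size steps needed to integrate up to t_end."""
    return math.ceil(t_end / dt)

dx = 1.0 / 128          # hypothetical grid spacing
t_end = 0.1             # hypothetical diffusive time span of the slow lid

# The whole domain shares one time step, set by the fastest region.
dt_slow = courant_time_step(max_speed=10.0, dx=dx)
dt_fast = courant_time_step(max_speed=1000.0, dx=dx)

steps_slow = steps_to_cover(t_end, dt_slow)
steps_fast = steps_to_cover(t_end, dt_fast)
print(steps_slow, steps_fast)
```

A hundredfold increase in peak velocity shrinks the admissible step a hundredfold, while the conductive lid still needs the same physical time to equilibrate. Starting the time stepping from a state already close to steady-state reduces the number of steps that remain.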

ExoMDN: Rapid characterization of exoplanet interior structures with Mixture Density Networks

Jun 15, 2023

Abstract:Characterizing the interior structure of exoplanets is essential for understanding their diversity, formation, and evolution. As the interior of exoplanets is inaccessible to observations, an inverse problem must be solved, where numerical structure models need to conform to observable parameters such as mass and radius. This is a highly degenerate problem whose solution often relies on computationally-expensive and time-consuming inference methods such as Markov Chain Monte Carlo. We present ExoMDN, a machine-learning model for the interior characterization of exoplanets based on Mixture Density Networks (MDN). The model is trained on a large dataset of more than 5.6 million synthetic planets below 25 Earth masses consisting of an iron core, a silicate mantle, a water and high-pressure ice layer, and a H/He atmosphere. We employ log-ratio transformations to convert the interior structure data into a form that the MDN can easily handle. Given mass, radius, and equilibrium temperature, we show that ExoMDN can deliver a full posterior distribution of mass fractions and thicknesses of each planetary layer in under a second on a standard Intel i5 CPU. Observational uncertainties can be easily accounted for through repeated predictions from within the uncertainties. We use ExoMDN to characterize the interior of 22 confirmed exoplanets with mass and radius uncertainties below 10% and 5% respectively, including the well studied GJ 1214 b, GJ 486 b, and the TRAPPIST-1 planets. We discuss the inclusion of the fluid Love number $k_2$ as an additional (potential) observable, showing how it can significantly reduce the degeneracy of interior structures. Utilizing the fast predictions of ExoMDN, we show that measuring $k_2$ with an accuracy of 10% can constrain the thickness of core and mantle of an Earth analog to $\approx13\%$ of the true values.
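
As an illustration of what a log-ratio transformation does, the sketch below applies the additive log-ratio (alr) transform, one member of that family, to a set of layer mass fractions. The abstract does not state which variant ExoMDN uses, so the choice of alr and the example fractions are assumptions.

```python
import math

def alr(fractions):
    """Additive log-ratio: map D positive fractions summing to 1 to D - 1 reals."""
    reference = fractions[-1]
    return [math.log(x / reference) for x in fractions[:-1]]

def alr_inverse(coords):
    """Map D - 1 unconstrained reals back to D fractions that sum to 1."""
    exps = [math.exp(y) for y in coords]
    total = 1.0 + sum(exps)
    return [e / total for e in exps] + [1.0 / total]

# Hypothetical mass fractions: core, mantle, water/ice, H/He atmosphere.
mass_fractions = [0.30, 0.50, 0.15, 0.05]

coords = alr(mass_fractions)
recovered = alr_inverse(coords)
print(coords)
print(recovered)
```

The transformed coordinates are unconstrained real numbers, which suits a mixture of Gaussians. Any predicted point maps back to fractions that are positive and sum to one.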

Deep learning for surrogate modelling of 2D mantle convection

Aug 23, 2021

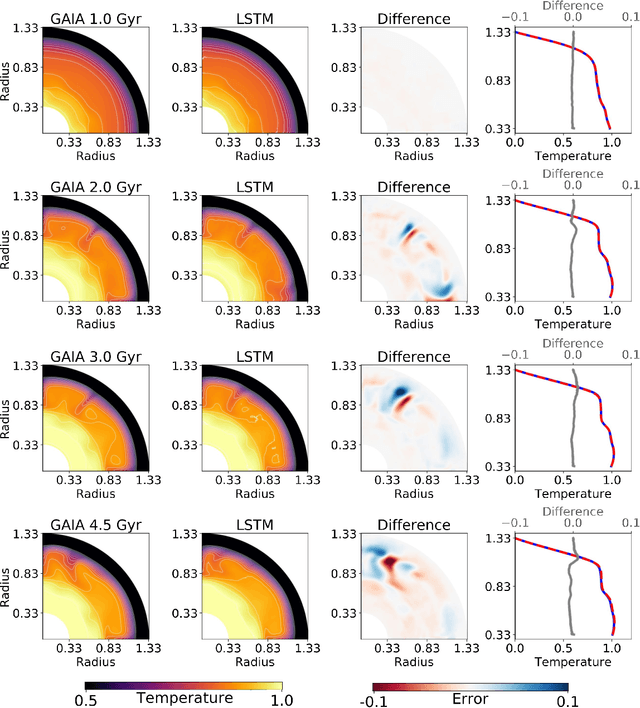

Abstract:Traditionally, 1D models based on scaling laws have been used to parameterize convective heat transfer in the rocky interior of terrestrial planets like Earth, Mars, Mercury and Venus to tackle the computational bottleneck of high-fidelity forward runs in 2D or 3D. However, these are limited in the amount of physics they can model (e.g. depth dependent material properties) and predict only mean quantities such as the mean mantle temperature. We recently showed that feedforward neural networks (FNN) trained using a large number of 2D simulations can overcome this limitation and reliably predict the evolution of the entire 1D laterally-averaged temperature profile in time for complex models [Agarwal et al. 2020]. We now extend that approach to predict the full 2D temperature field, which contains more information in the form of convection structures such as hot plumes and cold downwellings. Using a dataset of 10,525 two-dimensional simulations of the thermal evolution of the mantle of a Mars-like planet, we show that deep learning techniques can produce reliable parameterized surrogates (i.e. surrogates that predict state variables such as temperature based only on parameters) of the underlying partial differential equations. We first use convolutional autoencoders to compress the temperature fields by a factor of 142 and then use FNN and long short-term memory networks (LSTM) to predict the compressed fields. On average, the FNN predictions are 99.30% and the LSTM predictions are 99.22% accurate with respect to unseen simulations. Proper orthogonal decomposition (POD) of the LSTM and FNN predictions shows that despite a lower mean absolute relative accuracy, LSTMs capture the flow dynamics better than FNNs. When summed, the POD coefficients from FNN predictions and from LSTM predictions amount to 96.51% and 97.66% relative to the coefficients of the original simulations, respectively.