Nicholas Ichien

Large Language Model Displays Emergent Ability to Interpret Novel Literary Metaphors

Aug 03, 2023

Abstract: Recent advances in the performance of large language models (LLMs) have sparked debate over whether, given sufficient training, high-level human abilities emerge in such generic forms of artificial intelligence (AI). Despite the exceptional performance of LLMs on a wide range of tasks involving natural language processing and reasoning, there has been sharp disagreement as to whether their abilities extend to more creative human abilities. A core example is the ability to interpret novel metaphors. Given the enormous and non-curated text corpora used to train LLMs, a serious obstacle to designing tests is the need to find novel yet high-quality metaphors that are unlikely to have been included in the training data. Here we assessed the ability of GPT-4, a state-of-the-art large language model, to provide natural-language interpretations of novel literary metaphors drawn from Serbian poetry and translated into English. Despite exhibiting no signs of having been exposed to these metaphors previously, the AI system consistently produced detailed and incisive interpretations. Human judges, blind to the fact that an AI model was involved, rated metaphor interpretations generated by GPT-4 as superior to those provided by a group of college students. In interpreting reversed metaphors, GPT-4, as well as humans, exhibited signs of sensitivity to the Gricean cooperative principle. These results indicate that LLMs such as GPT-4 have acquired an emergent ability to interpret complex novel metaphors.

Visual analogy: Deep learning versus compositional models

May 14, 2021

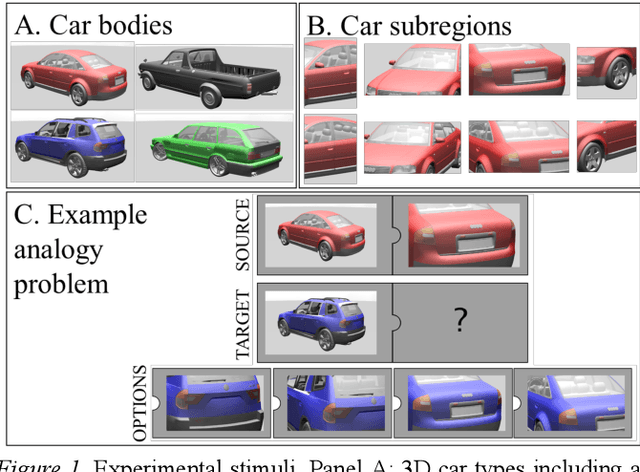

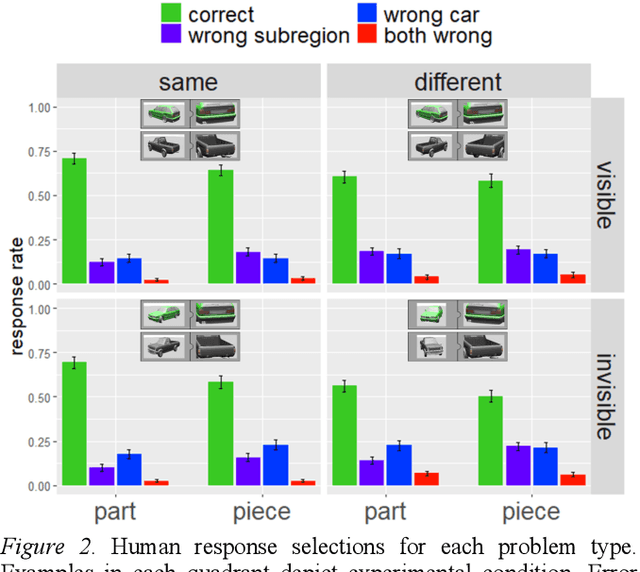

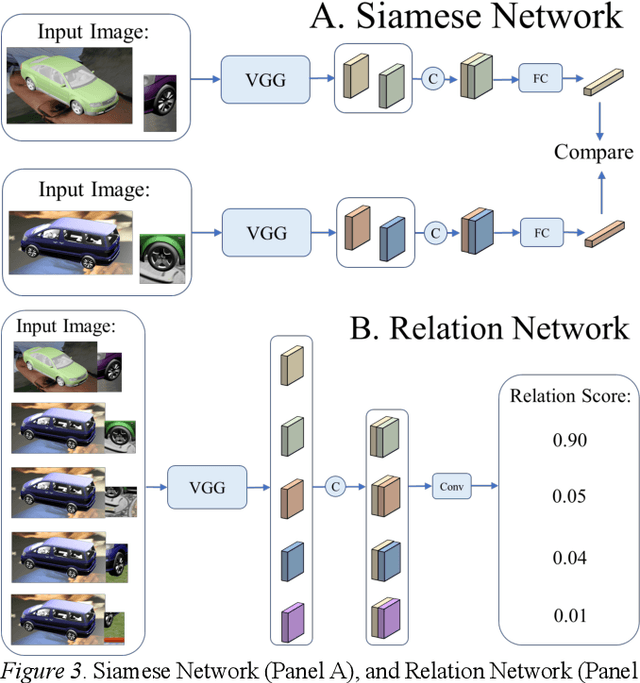

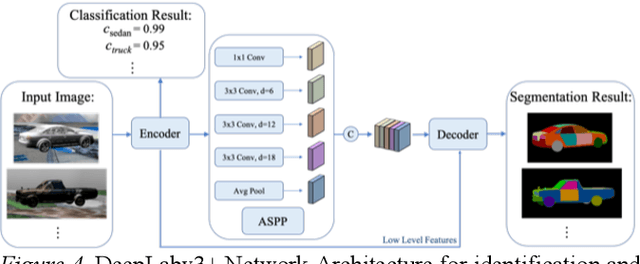

Abstract: Is analogical reasoning a task that must be learned from scratch by applying deep learning models to massive numbers of reasoning problems? Or are analogies solved by computing similarities between structured representations of analogs? We address this question by comparing human performance on visual analogies created using images of familiar three-dimensional objects (cars and their subregions) with the performance of alternative computational models. Human reasoners achieved above-chance accuracy for all problem types, but made more errors in several conditions (e.g., when relevant subregions were occluded). We compared human performance to that of two recent deep learning models (Siamese Network and Relation Network) directly trained to solve these analogy problems, as well as to that of a compositional model that assesses relational similarity between part-based representations. The compositional model based on part representations, but not the deep learning models, produced a qualitative pattern of performance similar to that of human reasoners.

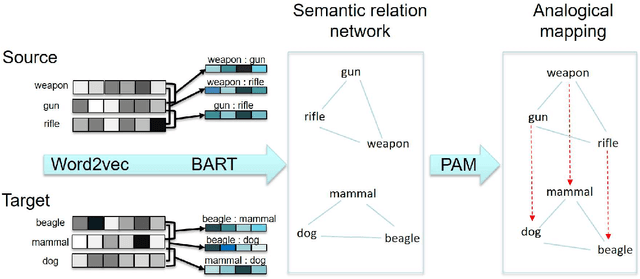

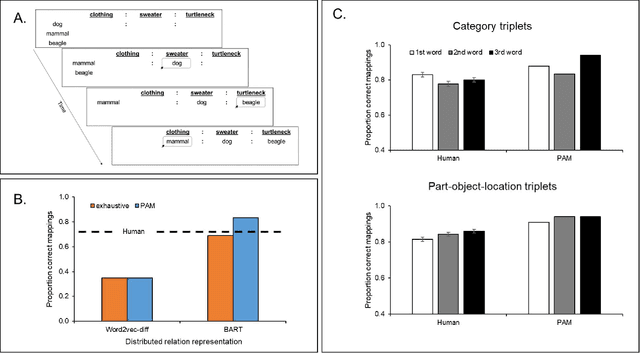

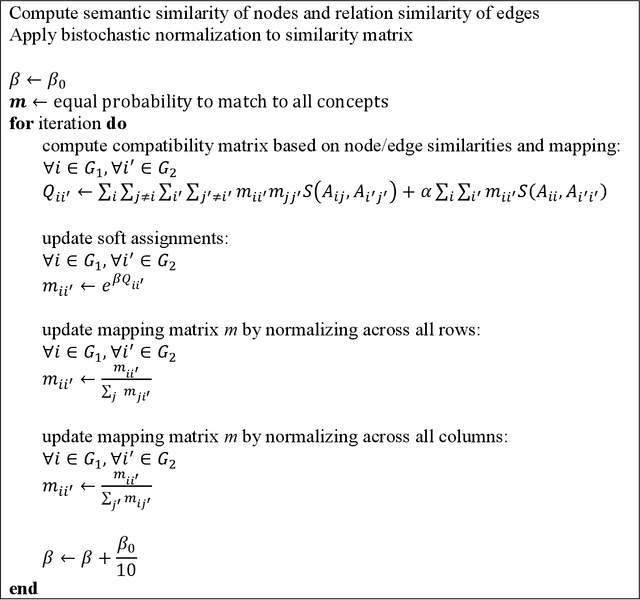

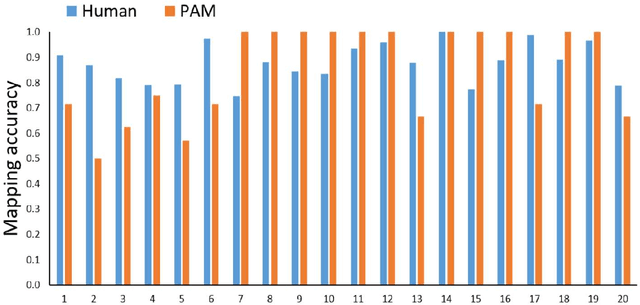

Probabilistic Analogical Mapping with Semantic Relation Networks

Mar 30, 2021

Abstract: The human ability to flexibly reason with cross-domain analogies depends on mechanisms for identifying relations between concepts and for mapping concepts and their relations across analogs. We present a new computational model of analogical mapping, based on semantic relation networks constructed from distributed representations of individual concepts and of relations between concepts. Through comparisons with human performance in a new analogy experiment with 1,329 participants, as well as in four classic studies, we demonstrate that the model accounts for a broad range of phenomena involving analogical mapping by both adults and children. The key insight is that rich semantic representations of individual concepts and relations, coupled with a generic prior favoring isomorphic mappings, yield human-like analogical mapping.
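To make the idea of mapping by relational similarity concrete, here is a minimal sketch. It assumes every ordered concept pair in each analog already has a relation vector; the two-dimensional vectors below are made-up toy values, not representations from the paper. The hypothetical helper `best_mapping` exhaustively tries one-to-one mappings and scores each by the cosine similarity of corresponding relation vectors. Restricting the search to one-to-one mappings is only a crude stand-in for the model's soft prior favoring isomorphic mappings, and exhaustive search is not the model's actual inference procedure.

```python
import itertools
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def best_mapping(source_concepts, target_concepts, source_rel, target_rel):
    """Hypothetical helper: brute-force the one-to-one mapping that maximizes
    summed relational similarity.

    source_rel / target_rel map an ordered concept pair (a, b) to a relation vector.
    Requires len(target_concepts) >= len(source_concepts).
    """
    best, best_score = None, -math.inf
    # Each permutation assigns a distinct target concept to every source concept.
    for perm in itertools.permutations(target_concepts, len(source_concepts)):
        mapping = dict(zip(source_concepts, perm))
        score = 0.0
        for (a, b), vec in source_rel.items():
            # Compare each source relation with the relation between the mapped targets.
            tvec = target_rel.get((mapping[a], mapping[b]))
            if tvec is not None:
                score += cosine(vec, tvec)
        if score > best_score:
            best, best_score = mapping, score
    return best, best_score


# Toy analogy: solar system -> atom (illustrative vectors only).
src_rel = {("sun", "planet"): [1.0, 0.0], ("planet", "sun"): [0.0, 1.0]}
tgt_rel = {("nucleus", "electron"): [0.9, 0.1], ("electron", "nucleus"): [0.1, 0.9]}
mapping, score = best_mapping(["sun", "planet"], ["nucleus", "electron"], src_rel, tgt_rel)
print(mapping)  # {'sun': 'nucleus', 'planet': 'electron'}
```

Exhaustive search grows factorially with the number of concepts, so it only serves to show what is being optimized; a practical model would need a tractable approximation.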