Nibedita Roy

Tight Convergence Rates for Online Distributed Linear Estimation with Adversarial Measurements

Apr 07, 2026Abstract:We study mean estimation of a random vector $X$ in a distributed parameter-server-worker setup. Worker $i$ observes samples of $a_i^\top X$, where $a_i^\top$ is the $i$th row of a known sensing matrix $A$. The key challenges are adversarial measurements and asynchrony: a fixed subset of workers may transmit corrupted measurements, and workers are activated asynchronously--only one is active at any time. In our previous work, we proposed a two-timescale $\ell_1$-minimization algorithm and established asymptotic recovery under a null-space-property-like condition on $A$. In this work, we establish tight non-asymptotic convergence rates under the same null-space-property-like condition. We also identify relaxed conditions on $A$ under which exact recovery may fail but recovery of a projected component of $\mathbb{E}[X]$ remains possible. Overall, our results provide a unified finite-time characterization of robustness, identifiability, and statistical efficiency in distributed linear estimation with adversarial workers, with implications for network tomography and related distributed sensing problems.

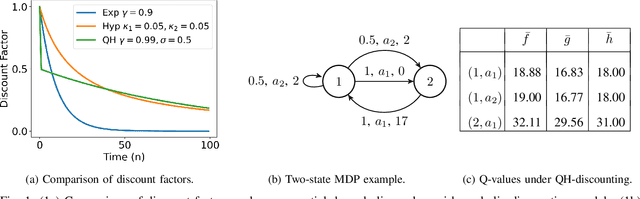

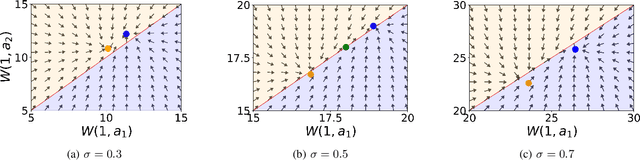

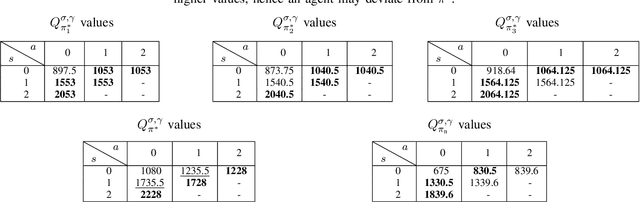

Reinforcement Learning with Quasi-Hyperbolic Discounting

Sep 16, 2024

Abstract:Reinforcement learning has traditionally been studied with exponential discounting or the average reward setup, mainly due to their mathematical tractability. However, such frameworks fall short of accurately capturing human behavior, which has a bias towards immediate gratification. Quasi-Hyperbolic (QH) discounting is a simple alternative for modeling this bias. Unlike in traditional discounting, though, the optimal QH-policy, starting from some time $t_1,$ can be different to the one starting from $t_2.$ Hence, the future self of an agent, if it is naive or impatient, can deviate from the policy that is optimal at the start, leading to sub-optimal overall returns. To prevent this behavior, an alternative is to work with a policy anchored in a Markov Perfect Equilibrium (MPE). In this work, we propose the first model-free algorithm for finding an MPE. Using a two-timescale analysis, we show that, if our algorithm converges, then the limit must be an MPE. We also validate this claim numerically for the standard inventory system with stochastic demands. Our work significantly advances the practical application of reinforcement learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge