Narinder Singh Punn

Anomaly detection in surveillance videos using transformer based attention model

Jun 06, 2022

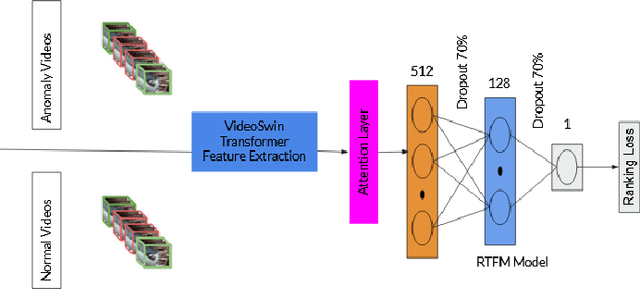

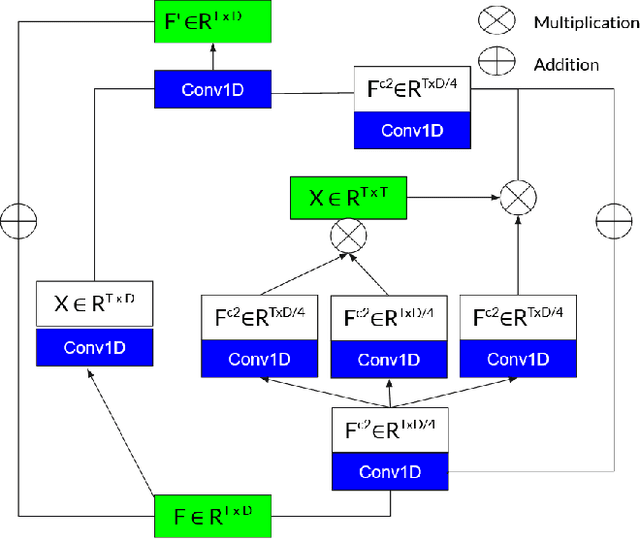

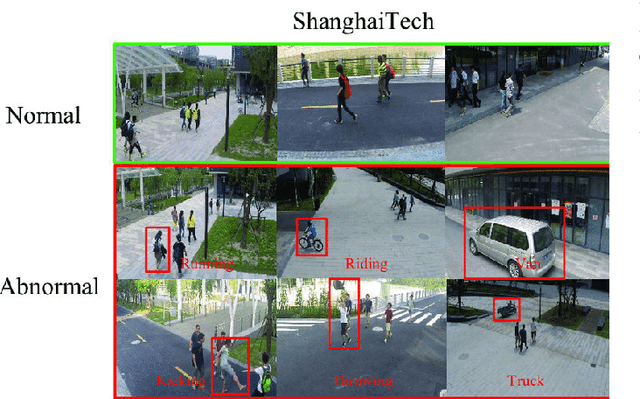

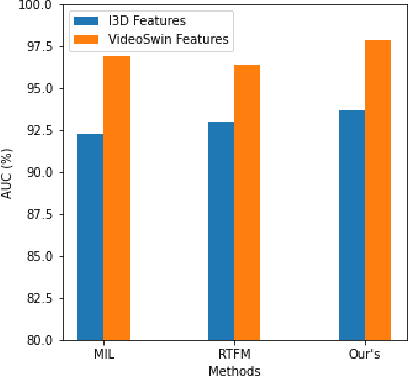

Abstract: Surveillance footage can capture a wide range of realistic anomalies. This research proposes a weakly supervised strategy that avoids annotating anomalous segments in training videos, which is time-consuming. In this approach, only video-level labels are used to obtain frame-level anomaly scores. Weakly supervised video anomaly detection (WSVAD) suffers from the misidentification of abnormal and normal instances during training. It is therefore important to extract better-quality features from the available videos. With this motivation, the present paper uses transformer-based features, named Videoswin Features, followed by an attention layer based on dilated convolution and self-attention to capture long- and short-range dependencies in the temporal domain. This gives a better understanding of the available videos. The proposed framework is validated on a real-world dataset, the ShanghaiTech Campus dataset, where it achieves performance competitive with current state-of-the-art methods. The model and the code are available at https://github.com/kapildeshpande/Anomaly-Detection-in-Surveillance-Videos
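
To illustrate why dilated convolution helps with long-range temporal dependencies, the following is a minimal sketch of a 1D dilated convolution over a sequence of per-segment values. It is not the paper's attention layer; the function name `dilated_conv1d`, the all-ones kernel, and the toy input are assumptions made purely for illustration.

```python
def dilated_conv1d(signal, kernel, dilation=1):
    """Valid-mode 1D dilated convolution (cross-correlation, as used in deep learning).

    Hypothetical helper for illustration only, not code from the paper.
    """
    # Distance between the first and last kernel tap on the time axis.
    span = (len(kernel) - 1) * dilation
    out = []
    for start in range(len(signal) - span):
        # Each tap is `dilation` steps apart, so the same number of weights
        # covers a wider temporal window.
        out.append(sum(w * signal[start + k * dilation] for k, w in enumerate(kernel)))
    return out


features = [0, 1, 2, 3, 4, 5, 6, 7]  # toy per-segment values
print(dilated_conv1d(features, [1, 1, 1], dilation=1))  # each output sees 3 consecutive steps
print(dilated_conv1d(features, [1, 1, 1], dilation=3))  # each output sees a 7-step window
```

With the same three weights, a dilation of 3 widens the receptive field from 3 to 7 time steps, which is the property that makes stacked dilated convolutions a cheap way to mix short- and long-range context.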

Impact of the composition of feature extraction and class sampling in medicare fraud detection

Jun 03, 2022

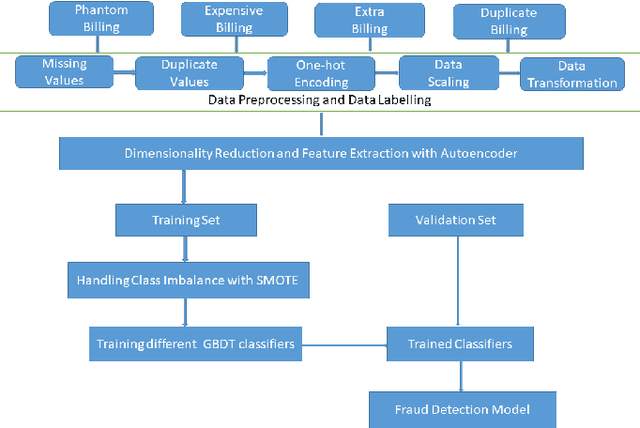

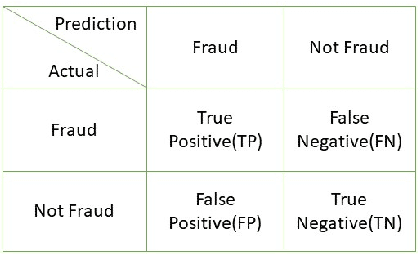

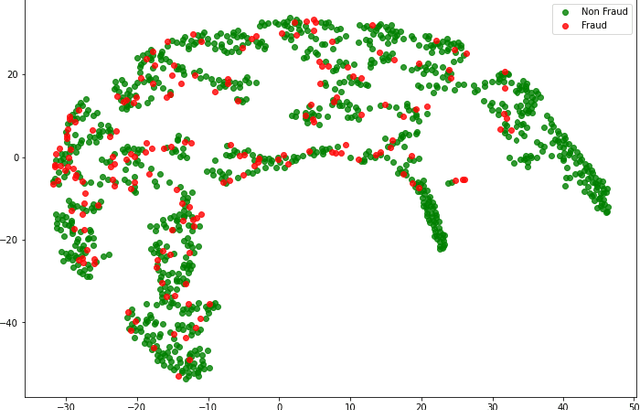

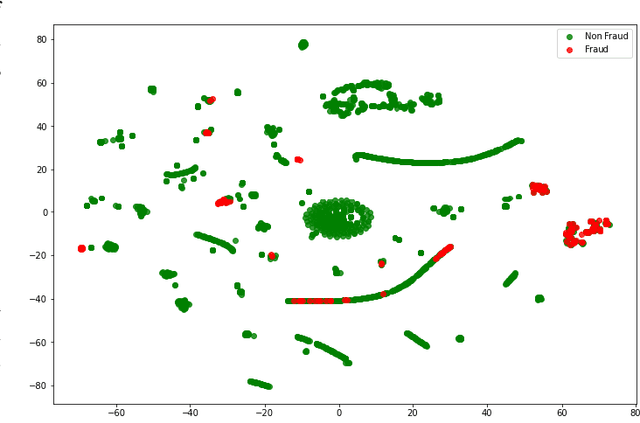

Abstract: With healthcare being a critical concern, health insurance has become an important scheme for minimizing medical expenses. As insurance coverage has grown, the healthcare industry has seen a significant increase in fraudulent activities, and fraud has become a significant contributor to rising medical care expenses, although its impact can be mitigated using fraud detection techniques. Machine learning techniques are used to detect fraud. This study uses the "Medicare Part D" insurance claims data released by the Centers for Medicare & Medicaid Services (CMS) of the United States federal government to develop a fraud detection system. Applying machine learning algorithms to a class-imbalanced and high-dimensional Medicare dataset is a challenging task. To combat such challenges, the present work performs feature extraction followed by data sampling, and then applies various classification algorithms to obtain better performance. Feature extraction is a dimensionality reduction approach that converts attributes into linear or non-linear combinations of the original attributes, generating a smaller and more diversified set of attributes and thus reducing the dimensions. Data sampling is commonly used to address class imbalance, either by increasing the frequency of the minority class or by reducing the frequency of the majority class, to obtain approximately equal numbers of occurrences for both classes. To detect fraud efficiently, this study applies an autoencoder as the feature extraction technique, the synthetic minority oversampling technique (SMOTE) as the data sampling technique, and various gradient boosted decision tree-based classifiers as the classification algorithms. The proposed approach is evaluated using standard performance metrics. The experimental results show that the combination of autoencoders followed by SMOTE with the LightGBM classifier achieved the best results.
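
The oversampling idea behind SMOTE can be sketched in a few lines: each synthetic minority sample is an interpolation between a real minority sample and one of its nearest minority-class neighbours. This is a simplified standard-library sketch, not the study's pipeline; the function name `smote`, its parameters, and the toy points are assumptions for illustration.

```python
import random


def smote(minority, n_new, k=2, seed=0):
    """Generate n_new synthetic minority samples by neighbour interpolation.

    Simplified illustration of SMOTE; a hypothetical helper, not the study's code.
    Requires at least two minority samples.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of `base` by squared Euclidean distance.
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append([a + gap * (b - a) for a, b in zip(base, other)])
    return synthetic


minority = [[1.0, 1.0], [2.0, 1.5], [1.5, 2.0], [3.0, 3.0]]  # toy minority-class points
for point in smote(minority, n_new=3):
    print(point)
```

Because every synthetic point lies on a segment between two real minority samples, the minority class grows without simply duplicating rows. In practice a maintained implementation (for example, the one in imbalanced-learn) would normally be used instead of a hand-written one.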

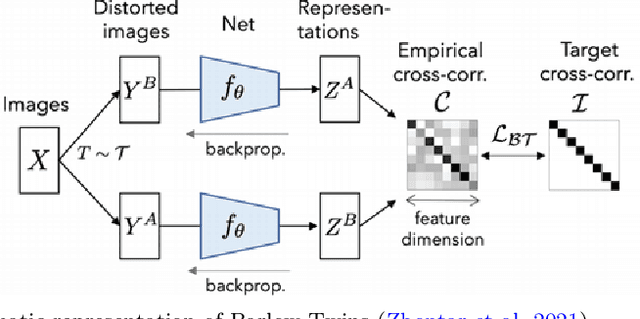

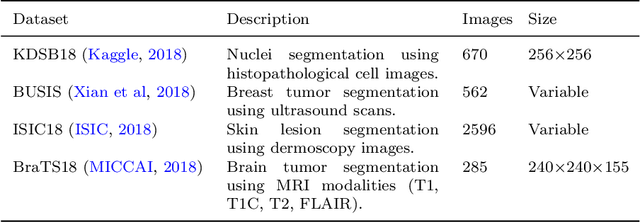

BT-Unet: A self-supervised learning framework for biomedical image segmentation using Barlow Twins with U-Net models

Jan 02, 2022

Abstract: Deep learning has made a profound contribution to biomedical image segmentation by automating the process of delineation in medical imaging. To accomplish such a task, the models must be trained on a huge amount of annotated or labelled data that highlights the region of interest with a binary mask. However, efficient generation of annotations for such large datasets requires expert biomedical analysts and extensive manual effort. It is a tedious and expensive task that is also vulnerable to human error. To address this problem, a self-supervised learning framework, BT-Unet, is proposed that uses the Barlow Twins approach to pre-train the encoder of a U-Net model via redundancy reduction in an unsupervised manner to learn data representations. The complete network is then fine-tuned to perform the actual segmentation. The BT-Unet framework can be trained with a limited number of annotated samples alongside a large number of unannotated samples, which is mostly the case in real-world problems. The framework is validated with multiple U-Net models on diverse datasets by generating scenarios with a limited number of labelled samples and using standard evaluation metrics. Exhaustive experimental trials show that the BT-Unet framework improves the performance of the U-Net models by a significant margin under such circumstances.
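
The redundancy-reduction objective used by Barlow Twins can be sketched as follows: the embeddings of two augmented views are standardized per feature over the batch, their cross-correlation matrix is computed, and the loss pushes that matrix towards the identity. This is a small standard-library sketch of the general Barlow Twins loss, not the BT-Unet training code; the function names and the weight `lam` are illustrative assumptions.

```python
import math


def standardize(column):
    """Zero-mean, unit-variance version of one feature over the batch.

    Assumes the feature is not constant across the batch (non-zero std).
    """
    mean = sum(column) / len(column)
    std = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column))
    return [(v - mean) / std for v in column]


def barlow_twins_loss(z_a, z_b, lam=5e-3):
    """Barlow Twins loss for two batches of embeddings (lists of rows).

    Illustrative sketch; `lam` weights the off-diagonal (redundancy) term.
    """
    n = len(z_a)
    cols_a = [standardize(col) for col in zip(*z_a)]
    cols_b = [standardize(col) for col in zip(*z_b)]
    loss = 0.0
    for i, col_a in enumerate(cols_a):
        for j, col_b in enumerate(cols_b):
            c_ij = sum(x * y for x, y in zip(col_a, col_b)) / n  # cross-correlation entry
            # Diagonal entries are pulled to 1 (invariance);
            # off-diagonal entries are pulled to 0 (redundancy reduction).
            loss += (1 - c_ij) ** 2 if i == j else lam * c_ij ** 2
    return loss


view_a = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
print(barlow_twins_loss(view_a, view_a))  # identical views with decorrelated features
```

When both views produce identical embeddings whose features are mutually uncorrelated, the cross-correlation matrix is the identity and the loss vanishes, which is the state the pre-training stage drives the encoder towards.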

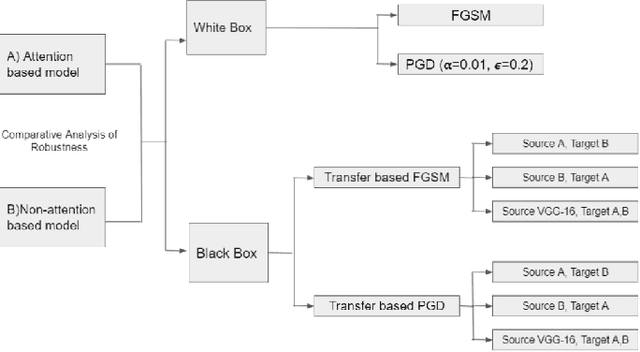

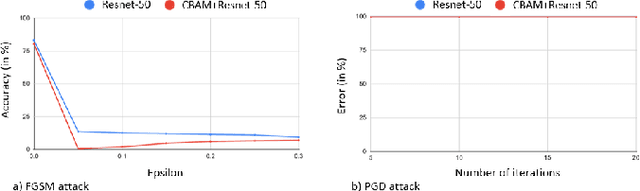

Impact of Attention on Adversarial Robustness of Image Classification Models

Sep 02, 2021

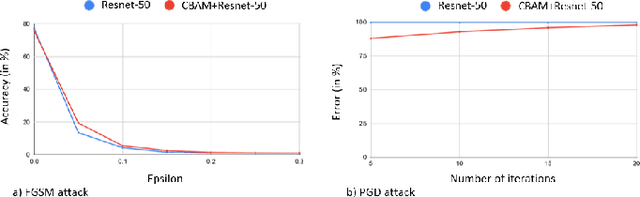

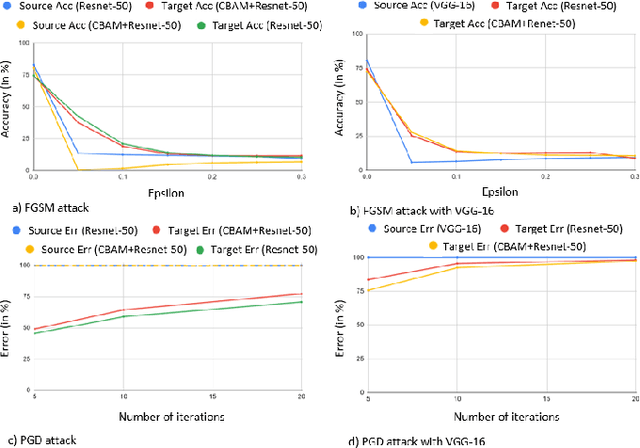

Abstract: Adversarial attacks against deep learning models have gained significant attention, and recent works have proposed explanations for the existence of adversarial examples as well as techniques to defend models against these attacks. Attention in computer vision has been used to incorporate focused learning of important features and has led to improved accuracy. Recently, models with attention mechanisms have been proposed to enhance adversarial robustness. In this context, this work aims at a general understanding of the impact of attention on adversarial robustness. It presents a comparative study of the adversarial robustness of non-attention and attention-based image classification models trained on the CIFAR-10, CIFAR-100 and Fashion MNIST datasets under popular white-box and black-box attacks. The experimental results show that the robustness of attention-based models may depend on the datasets used, i.e. the number of classes involved in the classification. In contrast to datasets with fewer classes, on datasets with more classes attention-based models are observed to show better robustness.

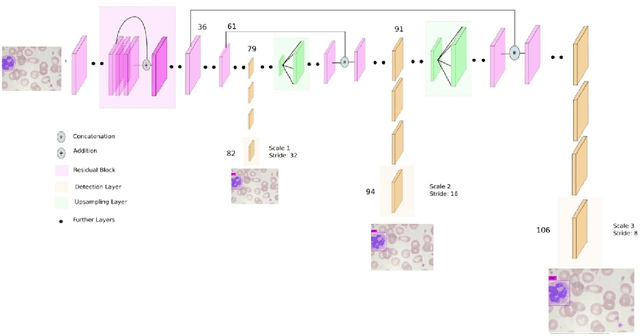

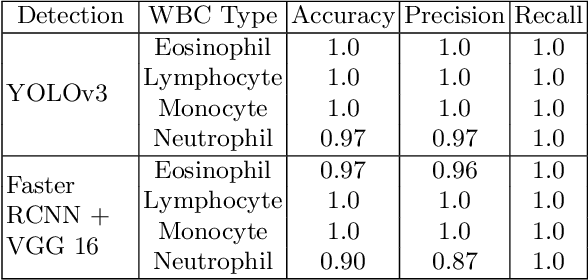

White blood cell subtype detection and classification

Aug 10, 2021

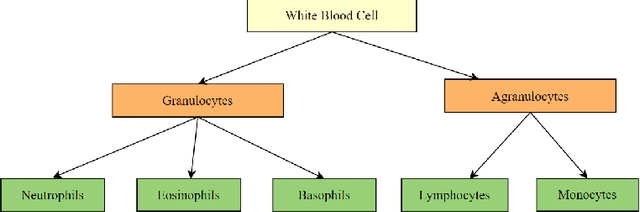

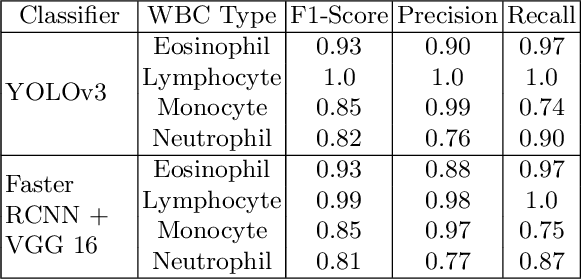

Abstract: Machine learning has numerous applications in the health care industry. White blood cell classification is one of the interesting and promising areas of research. The classification of white blood cells plays an important part in medical diagnosis. In practice, white blood cell classification is performed by a haematologist, who takes a small smear of blood and examines it carefully under the microscope. The current procedures to identify the white blood cell subtype are time-consuming and error-prone. Computer-aided detection and diagnosis of white blood cells tends to avoid human error and reduce the time taken to classify the cells. In recent years, several deep learning approaches have been developed for white blood cell classification that are able to identify the cells but are unable to localize their positions in the blood cell image. Following this, the present research proposes to use the YOLOv3 object detection technique to localize and classify white blood cells with bounding boxes. With exhaustive experimental analysis, the proposed work is found to detect white blood cells with 99.2% accuracy and classify them with 90% accuracy.

RCA-IUnet: A residual cross-spatial attention guided inception U-Net model for tumor segmentation in breast ultrasound imaging

Aug 05, 2021

Abstract: Advances in deep learning technologies have contributed immensely to biomedical image analysis applications. With breast cancer being among the most common and deadliest diseases in women, early detection is the key means of improving survivability. Medical imaging such as ultrasound presents an excellent visual representation of the functioning of the organs; however, analysing such scans is challenging and time-consuming for any radiologist, which delays the diagnosis process. Although various deep learning based approaches have been proposed that achieve promising results, the present article introduces an efficient residual cross-spatial attention guided inception U-Net (RCA-IUnet) model with minimal training parameters for tumor segmentation in breast ultrasound imaging, to further improve the segmentation performance for tumors of varying sizes. The RCA-IUnet model follows the U-Net topology with residual inception depth-wise separable convolution and hybrid pooling (max pooling and spectral pooling) layers. In addition, cross-spatial attention filters are added to suppress irrelevant features and focus on the target structure. The segmentation performance of the proposed model is validated on two publicly available datasets using standard segmentation evaluation metrics, where it outperformed other state-of-the-art segmentation models.

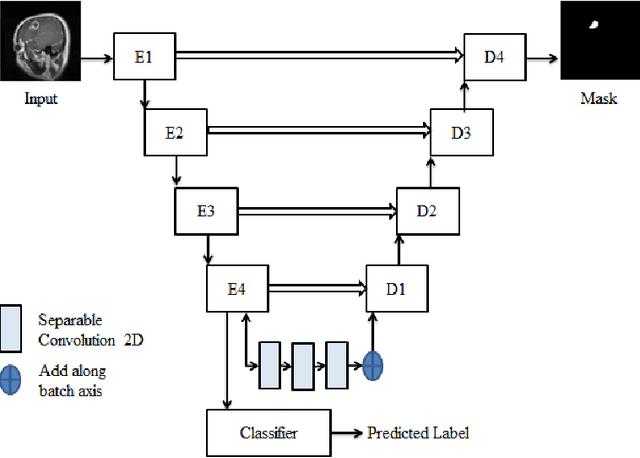

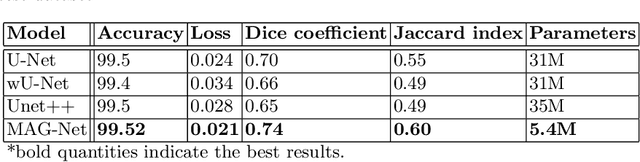

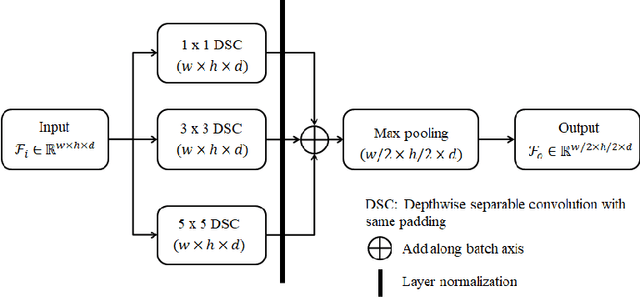

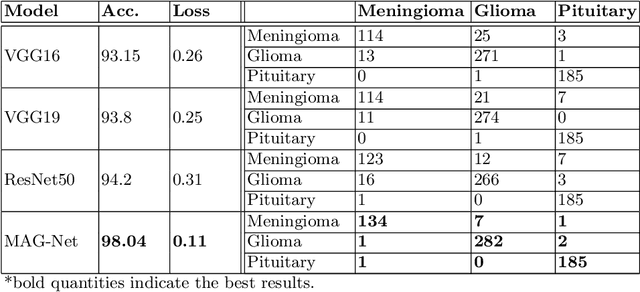

MAG-Net: Multi-task attention guided network for brain tumor segmentation and classification

Jul 26, 2021

Abstract: Brain tumor is one of the most common and deadliest diseases and can be found in all age groups. Generally, the MRI modality is adopted by radiologists for identifying and diagnosing tumors. Correct identification of tumor regions and their type can aid in diagnosing tumors and planning follow-up treatment. However, analysing such scans is a complex and time-consuming task for any radiologist. Motivated by deep learning based computer-aided diagnosis systems, this paper proposes a multi-task attention guided encoder-decoder network (MAG-Net) to classify and segment brain tumor regions in MRI images. MAG-Net is trained and evaluated on the Figshare dataset, which includes coronal, axial, and sagittal views of three types of tumors: meningioma, glioma, and pituitary tumor. With exhaustive experimental trials, the model achieved promising results compared to existing state-of-the-art models, while having the fewest training parameters among them.

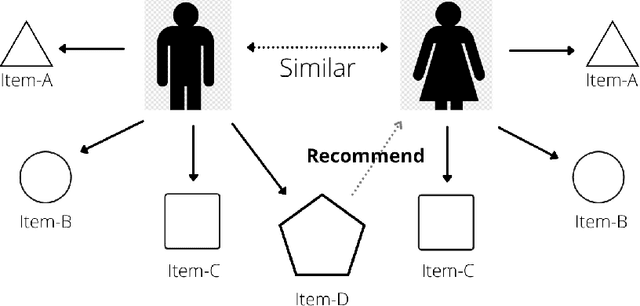

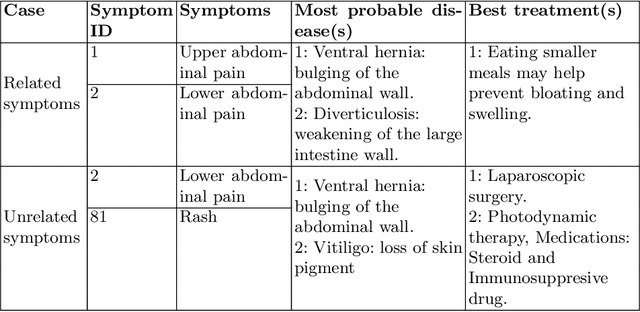

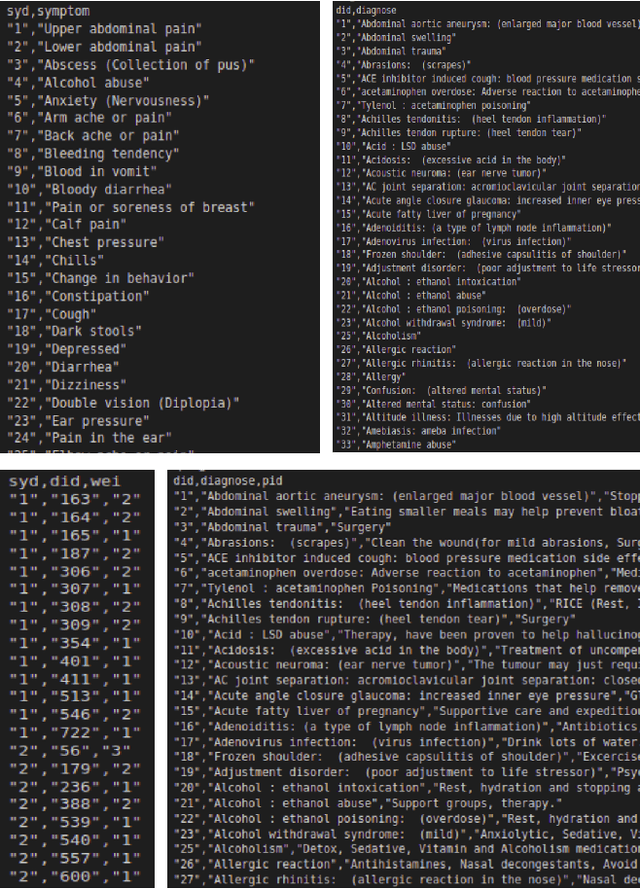

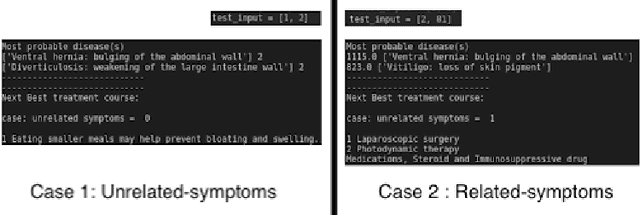

Recommending best course of treatment based on similarities of prognostic markers

Jul 19, 2021

Abstract: With technological advancement spanning every field, a huge influx of information is inevitable. Among the opportunities these advancements have brought is the chance to propose efficient solutions for data retrieval. This means that, from an enormous pile of data, retrieval methods should allow users to fetch relevant and recent data over time. In the fields of entertainment and e-commerce, recommender systems have been providing this capability. Employing the same systems in the medical domain could prove useful in a variety of ways. In this context, the goal of this paper is to propose a collaborative filtering based recommender system for the healthcare sector that recommends remedies based on the symptoms experienced by patients. Furthermore, a new dataset consisting of remedies for various diseases is developed to address the limited availability of data. The proposed recommender system accepts the prognostic markers of a patient as input and generates the best remedy course. In several experimental trials, the proposed model achieved promising results in recommending a possible remedy for given prognostic markers.
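
One common way to realise similarity-based recommendation is a neighbourhood approach: compare the query patient's markers with those of past records and let each record vote for its remedy in proportion to the similarity. The sketch below assumes cosine similarity over sparse marker vectors; the abstract does not specify the paper's similarity measure, and the function names, marker names, and remedy labels are hypothetical placeholders rather than real data.

```python
import math
from collections import defaultdict


def cosine(u, v):
    """Cosine similarity between two sparse marker vectors (dict: marker -> weight)."""
    dot = sum(u[m] * v[m] for m in set(u) & set(v))
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0


def recommend(patient, records):
    """Return the remedy with the largest similarity-weighted vote.

    Hypothetical neighbourhood-based sketch, not the paper's model.
    """
    votes = defaultdict(float)
    for markers, remedy in records:
        votes[remedy] += cosine(patient, markers)
    return max(votes, key=votes.get)


# Placeholder records: (prognostic markers, remedy that was used).
records = [
    ({"marker_a": 1, "marker_b": 1}, "remedy_x"),
    ({"marker_a": 1, "marker_c": 1}, "remedy_x"),
    ({"marker_d": 1, "marker_e": 1}, "remedy_y"),
]
print(recommend({"marker_a": 1, "marker_b": 1}, records))
```

Weighting votes by similarity means that records sharing more markers with the query patient have more influence on the recommended remedy than loosely related ones.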

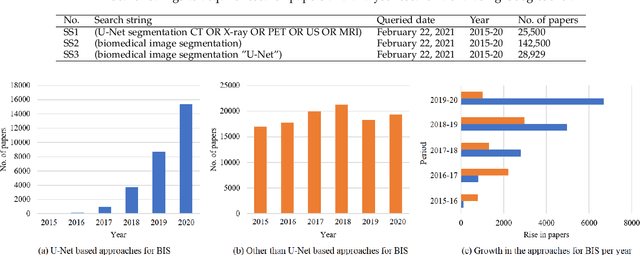

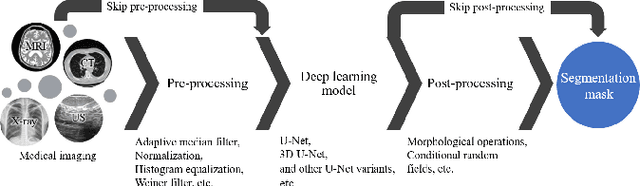

Modality specific U-Net variants for biomedical image segmentation: A survey

Jul 09, 2021

Abstract: With advances in deep learning approaches such as deep convolutional neural networks, residual neural networks, and adversarial networks, U-Net architectures have become the most widely used in biomedical image segmentation to automate the identification and detection of target regions or sub-regions. In recent studies, U-Net based approaches have demonstrated state-of-the-art performance in different applications for the development of computer-aided diagnosis systems for early diagnosis and treatment of diseases such as brain tumor, lung cancer, Alzheimer's disease, breast cancer, etc. This article presents the success of these approaches by describing the U-Net framework, followed by a comprehensive analysis of the U-Net variants for different medical imaging modalities such as magnetic resonance imaging, X-ray, computerized tomography/computerized axial tomography, ultrasound, positron emission tomography, etc. In addition, this article highlights the contribution of U-Net based frameworks in the ongoing pandemic of coronavirus disease 2019 (COVID-19), caused by severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2).

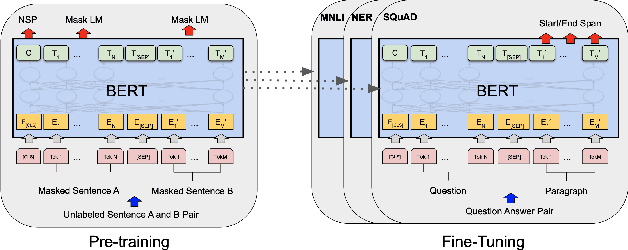

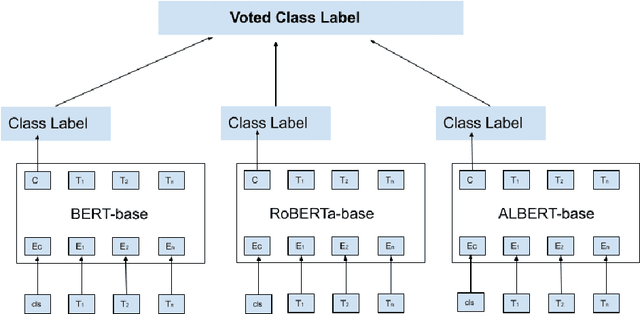

BERT based sentiment analysis: A software engineering perspective

Jun 28, 2021

Abstract: Sentiment analysis can provide a suitable lead for the tools used in software engineering, along with API recommendation systems and relevant libraries to be used. In this context, existing tools such as SentiCR, SentiStrength-SE, etc. exhibit low F1-scores that defeat the purpose of deploying such strategies, leaving considerable scope for performance improvement. Recent advancements show that transformer-based pre-trained models (e.g., BERT, RoBERTa, ALBERT, etc.) achieve better results in the text classification task. In this context, the present research explores different BERT-based models to analyze sentences in GitHub comments, Jira comments, and Stack Overflow posts. The paper presents three strategies for analysing BERT-based models for sentiment analysis: in the first strategy, the BERT-based pre-trained models are fine-tuned; in the second, an ensemble model is developed from BERT variants; and in the third, a compressed model (DistilBERT) is used. The experimental results show that the BERT-based ensemble approach and the compressed BERT model attain improvements of 6-12% over prevailing tools in the F1 measure on all three datasets.
