Mona Fathollahi

Video-based Surgical Skills Assessment using Long term Tool Tracking

Jul 05, 2022

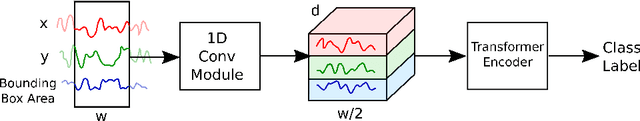

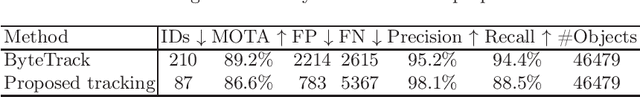

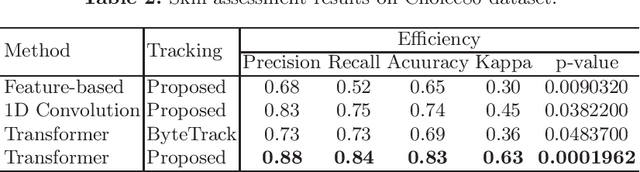

Abstract: Mastering the technical skills required to perform surgery is an extremely challenging task. Video-based assessment allows surgeons to receive feedback on their technical skills to facilitate learning and development. Currently, this feedback comes primarily from manual video review, which is time-intensive and limits the feasibility of tracking a surgeon's progress over many cases. In this work, we introduce a motion-based approach to automatically assess surgical skills from surgical case video feed. The proposed pipeline first tracks surgical tools reliably to create motion trajectories and then uses those trajectories to predict surgeon technical skill levels. The tracking algorithm employs a simple yet effective re-identification module that reduces ID switches compared to other state-of-the-art methods. This is critical for creating reliable tool trajectories when instruments regularly move on- and off-screen or are periodically obscured. The motion-based classification model employs a state-of-the-art self-attention transformer network to capture short- and long-term motion patterns that are essential for skill evaluation. The proposed method is evaluated on an in-vivo (Cholec80) dataset where an expert-rated GOALS skill assessment of the Calot Triangle Dissection is used as a quantitative skill measure. We compare transformer-based skill assessment with traditional machine learning approaches using the proposed and state-of-the-art tracking. Our results suggest that using motion trajectories from reliable tracking methods is beneficial for assessing surgeon skills based solely on video streams.

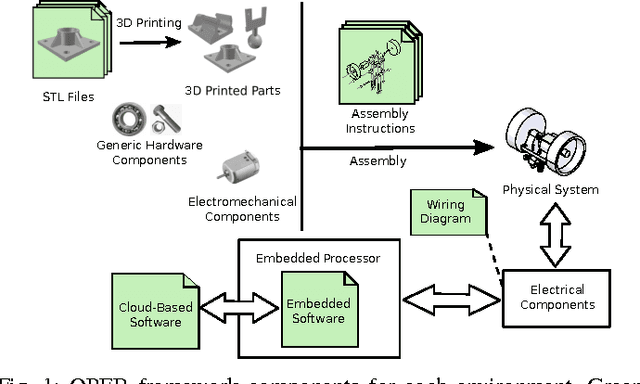

OPEB: Open Physical Environment Benchmark for Artificial Intelligence

Jul 04, 2017

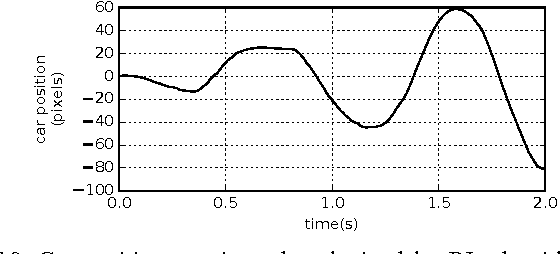

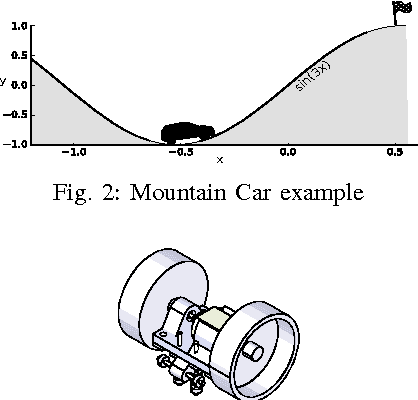

Abstract: Artificial Intelligence methods to solve continuous-control tasks have made significant progress in recent years. However, these algorithms have important limitations and still need significant improvement to be used in industry and real-world applications. This means that this area is still in an active research phase. To involve a large number of research groups, standard benchmarks are needed to evaluate and compare proposed algorithms. In this paper, we propose a physical environment benchmark framework to facilitate collaborative research in this area by enabling different research groups to integrate their designed benchmarks in a unified cloud-based repository and also share their actual implemented benchmarks via the cloud. We demonstrate the proposed framework using an actual implementation of the classical mountain-car example and present the results obtained using a Reinforcement Learning algorithm.

Autonomous driving challenge: To Infer the property of a dynamic object based on its motion pattern using recurrent neural network

Sep 10, 2016

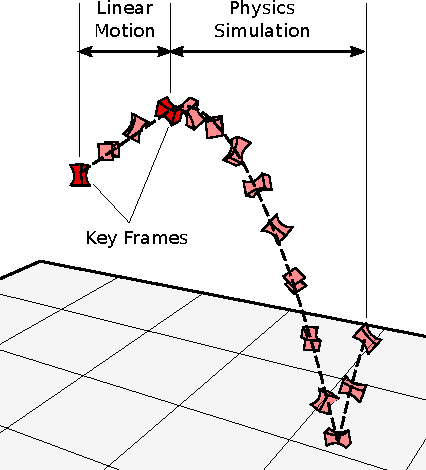

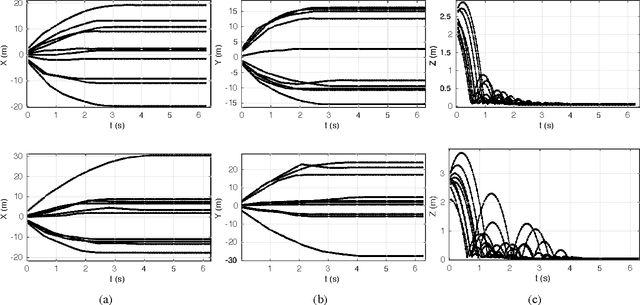

Abstract: In autonomous driving applications, a critical challenge is to identify the action to take to avoid an obstacle on a collision course. For example, when a heavy object is suddenly encountered, it is critical to stop the vehicle or change lanes even if doing so causes other traffic disruptions. However, there are situations when it is preferable to collide with the object rather than take an action that would result in a much more serious accident than the collision itself. For example, a heavy object that falls from a truck should be avoided, whereas a bouncing ball or a soft target such as a foam box need not be. We present a novel method to discriminate between the motion characteristics of these types of objects based on their physical properties such as bounciness, elasticity, etc. In this preliminary work, we use a recurrent neural network with LSTM cells to train a classifier to classify objects based on their motion trajectories. We test the algorithm on synthetic data and, as a proof of concept, demonstrate its effectiveness on a limited set of real-world data.
