Jocelyn Barker

Video-based Surgical Skills Assessment using Long term Tool Tracking

Jul 05, 2022

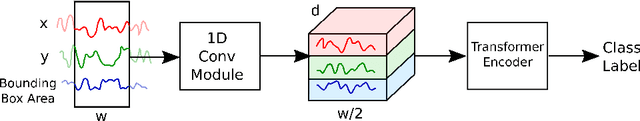

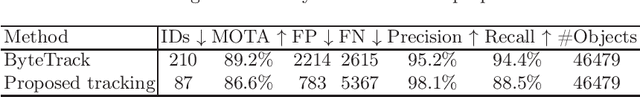

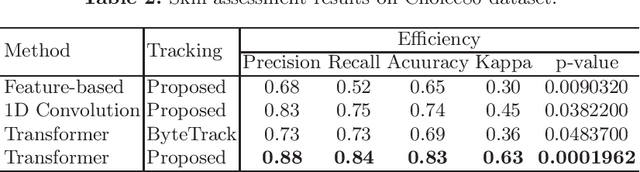

Abstract: Mastering the technical skills required to perform surgery is an extremely challenging task. Video-based assessment allows surgeons to receive feedback on their technical skills to facilitate learning and development. Currently, this feedback comes primarily from manual video review, which is time-intensive and limits the feasibility of tracking a surgeon's progress over many cases. In this work, we introduce a motion-based approach to automatically assess surgical skills from surgical case video. The proposed pipeline first tracks surgical tools reliably to create motion trajectories and then uses those trajectories to predict surgeon technical skill levels. The tracking algorithm employs a simple yet effective re-identification module that reduces ID switches compared to other state-of-the-art methods. This is critical for creating reliable tool trajectories when instruments regularly move on- and off-screen or are periodically obscured. The motion-based classification model employs a state-of-the-art self-attention transformer network to capture the short- and long-term motion patterns that are essential for skill evaluation. The proposed method is evaluated on an in-vivo dataset (Cholec80), where an expert-rated GOALS skill assessment of the Calot Triangle Dissection is used as a quantitative skill measure. We compare transformer-based skill assessment with traditional machine learning approaches, using both the proposed tracking method and a state-of-the-art tracking method. Our results suggest that using motion trajectories from reliable tracking methods is beneficial for assessing surgeon skills based solely on video streams.
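To make the trajectory-to-skill step more concrete, the following is a minimal sketch of how a per-frame tool track might be summarized into simple motion features of the kind a traditional machine learning classifier could consume. It is an illustration under stated assumptions, not the method described in the abstract: the function names `split_segments` and `motion_features`, the chosen features (path length, mean speed, visible fraction, segment count), and the `fps` default are all hypothetical. Frames where the tool is off-screen or obscured are represented as `None`, and displacement is only accumulated within runs of consecutive visible frames so that a gap does not create a spurious jump.

```python
from math import hypot
from typing import Dict, List, Optional, Tuple

# One entry per video frame: (x, y) tool position in pixels,
# or None when the tool is off-screen or occluded (assumed encoding).
Point = Optional[Tuple[float, float]]


def split_segments(track: List[Point]) -> List[List[Tuple[float, float]]]:
    """Split a per-frame track into runs of consecutive visible frames."""
    segments: List[List[Tuple[float, float]]] = []
    current: List[Tuple[float, float]] = []
    for point in track:
        if point is None:
            if current:
                segments.append(current)
                current = []
        else:
            current.append(point)
    if current:
        segments.append(current)
    return segments


def motion_features(track: List[Point], fps: float = 25.0) -> Dict[str, float]:
    """Summarize one tool track as a few hypothetical motion features.

    `fps` is an assumed frame rate; set it to match the actual video.
    """
    segments = split_segments(track)
    path_length = 0.0
    steps = 0  # number of frame-to-frame transitions with the tool visible
    for segment in segments:
        for (x0, y0), (x1, y1) in zip(segment, segment[1:]):
            path_length += hypot(x1 - x0, y1 - y0)
            steps += 1
    duration = steps / fps  # seconds of continuously tracked motion
    visible = sum(point is not None for point in track)
    return {
        "path_length": path_length,
        "mean_speed": path_length / duration if duration > 0 else 0.0,
        "visible_fraction": visible / len(track) if track else 0.0,
        "num_segments": float(len(segments)),
    }
```

A track that fragments into many short segments inflates `num_segments` and discards motion at every break, which is one way to see why tracking with fewer ID switches yields more reliable trajectories for downstream skill prediction.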
