Mithun Lal

Learning Dense Correspondence from Synthetic Environments

Mar 24, 2022

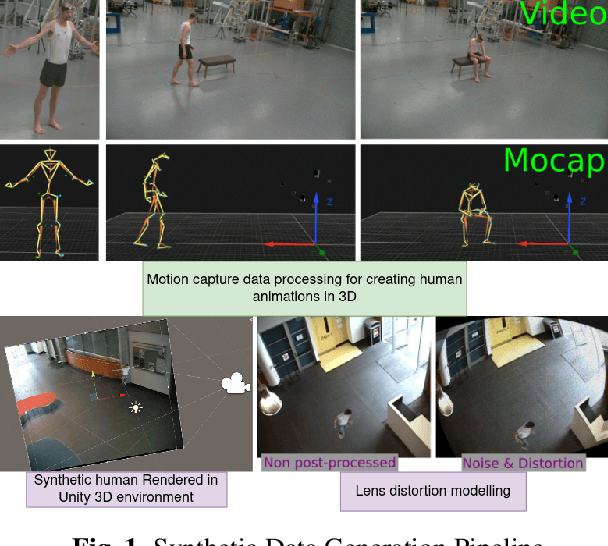

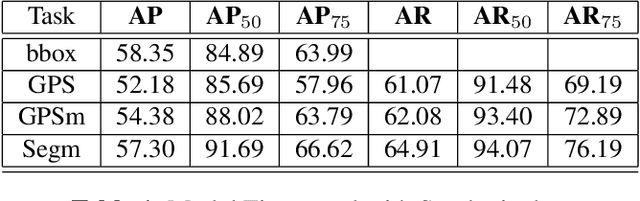

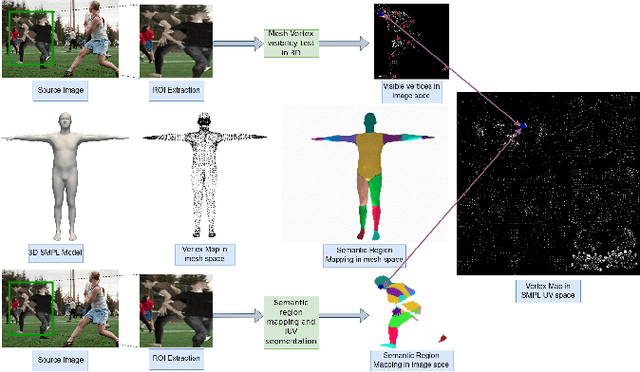

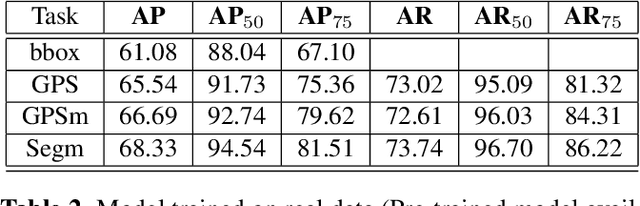

Abstract: Estimating human shape and pose from a single image is a challenging task. Mapping the identified human shape onto a 3D human model is harder still. Existing methods map manually labelled human pixels in real 2D images onto the 3D surface, which is prone to human error, and the sparsity of available annotated data often leads to sub-optimal results. We propose to solve the problem of data scarcity by training 2D-3D human mapping algorithms on automatically generated synthetic data for which exact and dense 2D-3D correspondence is known. Such a learning strategy using synthetic environments has a high generalisation potential towards real-world data. By varying camera parameters, backgrounds and lighting settings, we created precise ground truth data that covers a wider distribution. We evaluate the performance of models trained on synthetic data using the COCO dataset and validation framework. Results show that training 2D-3D mapping network models on synthetic data is a viable alternative to using real data.
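The idea that synthetic data gives exact 2D-3D correspondence can be illustrated with a minimal sketch. It is not the authors' pipeline: it assumes a simple pinhole camera, randomises only the focal length and camera distance, and ignores occlusion, backgrounds and lighting. The names `project_points` and `make_sample`, and all parameter ranges, are hypothetical and chosen only for illustration.

```python
import random


def project_points(points_3d, focal, cx, cy):
    """Project camera-space points (x, y, z) to pixels with a pinhole model.

    Points at or behind the camera plane (z <= 0) map to None.
    """
    pixels = []
    for x, y, z in points_3d:
        if z <= 0:
            pixels.append(None)
        else:
            pixels.append((focal * x / z + cx, focal * y / z + cy))
    return pixels


def make_sample(vertices, rng, width=640, height=480):
    """Build one synthetic sample with known vertex-to-pixel correspondence.

    Assumption: vertices are centred near the origin, and the camera looks
    down the +z axis from a randomly chosen distance.
    """
    focal = rng.uniform(400.0, 800.0)  # hypothetical focal range, in pixels
    depth = rng.uniform(2.0, 5.0)      # hypothetical camera distance

    # Move the model in front of the camera, then project every vertex.
    cam_points = [(x, y, z + depth) for x, y, z in vertices]
    pixels = project_points(cam_points, focal, width / 2, height / 2)

    # Keep (vertex index, pixel) pairs that land inside the image.
    # No occlusion test is done here; a real renderer would need one.
    correspondences = [
        (idx, p)
        for idx, p in enumerate(pixels)
        if p is not None and 0 <= p[0] < width and 0 <= p[1] < height
    ]
    return {"focal": focal, "depth": depth, "correspondences": correspondences}
```

Because each pixel is computed from a known vertex, the label comes for free and involves no manual annotation; calling `make_sample` repeatedly with a seeded `random.Random` yields reproducible camera variations.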
