Ming C. Leu

Advancements in Repetitive Action Counting: Joint-Based PoseRAC Model With Improved Performance

Aug 15, 2023

Abstract:Repetitive counting (RepCount) is critical in various applications, such as fitness tracking and rehabilitation. Previous methods have relied on the estimation of red-green-and-blue (RGB) frames and body pose landmarks to identify the number of action repetitions, but these methods suffer from a number of issues, including the inability to stably handle changes in camera viewpoints, over-counting, under-counting, difficulty in distinguishing between sub-actions, inaccuracy in recognizing salient poses, etc. In this paper, based on the work done by [1], we integrate joint angles with body pose landmarks to address these challenges and achieve better results than the state-of-the-art RepCount methods, with a Mean Absolute Error (MAE) of 0.211 and an Off-By-One (OBO) counting accuracy of 0.599 on the RepCount data set [2]. Comprehensive experimental results demonstrate the effectiveness and robustness of our method.

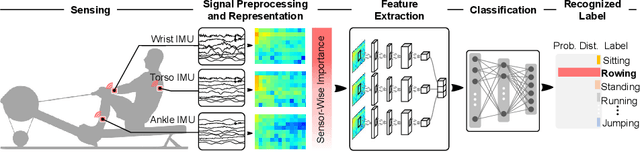

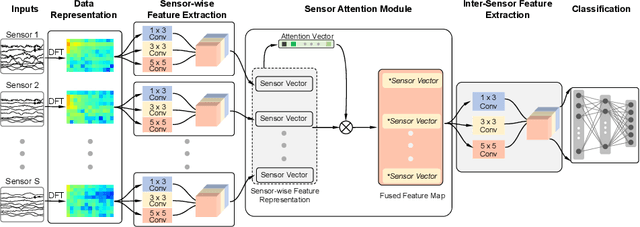

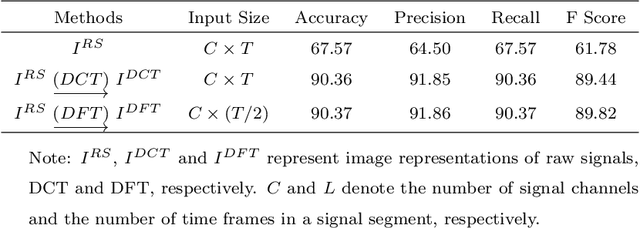

Attention-Based Sensor Fusion for Human Activity Recognition Using IMU Signals

Dec 20, 2021

Abstract:Human Activity Recognition (HAR) using wearable devices such as smart watches embedded with Inertial Measurement Unit (IMU) sensors has various applications relevant to our daily life, such as workout tracking and health monitoring. In this paper, we propose a novel attention-based approach to human activity recognition using multiple IMU sensors worn at different body locations. Firstly, a sensor-wise feature extraction module is designed to extract the most discriminative features from individual sensors with Convolutional Neural Networks (CNNs). Secondly, an attention-based fusion mechanism is developed to learn the importance of sensors at different body locations and to generate an attentive feature representation. Finally, an inter-sensor feature extraction module is applied to learn the inter-sensor correlations, which are connected to a classifier to output the predicted classes of activities. The proposed approach is evaluated using five public datasets and it outperforms state-of-the-art methods on a wide variety of activity categories.

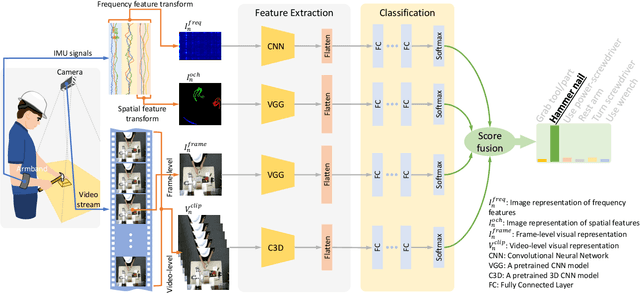

Multi-Modal Recognition of Worker Activity for Human-Centered Intelligent Manufacturing

Aug 20, 2019

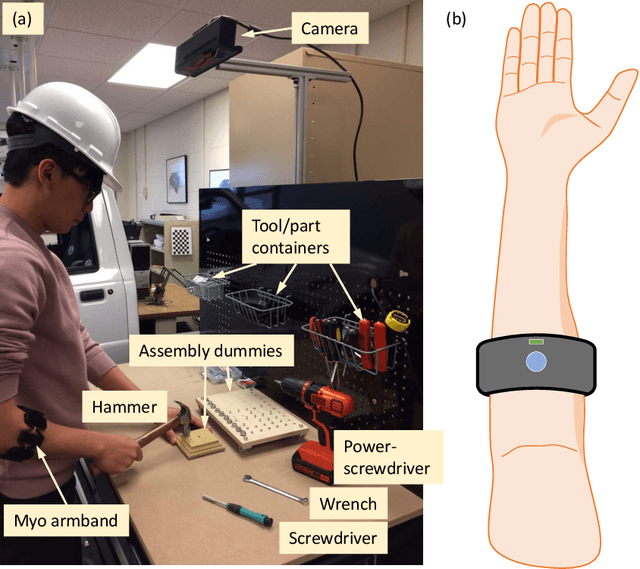

Abstract:In a human-centered intelligent manufacturing system, sensing and understanding of the worker's activity are the primary tasks. In this paper, we propose a novel multi-modal approach for worker activity recognition by leveraging information from different sensors and in different modalities. Specifically, a smart armband and a visual camera are applied to capture Inertial Measurement Unit (IMU) signals and videos, respectively. For the IMU signals, we design two novel feature transform mechanisms, in both frequency and spatial domains, to assemble the captured IMU signals as images, which allow using convolutional neural networks to learn the most discriminative features. Along with the above two modalities, we propose two other modalities for the video data, at the video frame and video clip levels, respectively. Each of the four modalities returns a probability distribution on activity prediction. Then, these probability distributions are fused to output the worker activity classification result. A worker activity dataset of 6 activities is established, which at present contains 6 common activities in assembly tasks, i.e., grab a tool/part, hammer a nail, use a power-screwdriver, rest arms, turn a screwdriver, and use a wrench. The developed multi-modal approach is evaluated on this dataset and achieves recognition accuracies as high as 97% and 100% in the leave-one-out and half-half experiments, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge