Miguel Abreu

Designing a Skilled Soccer Team for RoboCup: Exploring Skill-Set-Primitives through Reinforcement Learning

Dec 22, 2023

Abstract: The RoboCup 3D Soccer Simulation League serves as a competitive platform for showcasing innovation in autonomous humanoid robot agents through simulated soccer matches. Our team, FC Portugal, developed a new codebase from scratch in Python after RoboCup 2021. The team's performance is based on a set of skills centered around novel unifying primitives and a custom, symmetry-extended version of the Proximal Policy Optimization algorithm. Our methods have been thoroughly tested in official RoboCup matches, where FC Portugal has won the last two main competitions, in 2022 and 2023. This paper presents our training framework, as well as a timeline of skills developed using our skill-set-primitives, which considerably improve the sample efficiency and stability of skills, and motivate seamless transitions. We start with a significantly fast sprint-kick developed in 2021 and progress to the most recent skill set, which includes a multi-purpose omnidirectional walk, a dribble with unprecedented ball control, a solid kick, and a push skill. The push tackles both low-level collision-prone scenarios and high-level strategies to increase ball possession. We address the resource-intensive nature of this task through an innovative multi-agent learning approach. Finally, we release the codebase of our team to the RoboCup community, enabling other teams to transition to Python more easily and providing new teams with a robust and modern foundation upon which they can build new features.

Addressing Imperfect Symmetry: a Novel Symmetry-Learning Actor-Critic Extension

Sep 06, 2023

Abstract: Symmetry, a fundamental concept to understand our environment, often oversimplifies reality from a mathematical perspective. Humans are a prime example, deviating from perfect symmetry in terms of appearance and cognitive biases (e.g. having a dominant hand). Nevertheless, our brain can easily overcome these imperfections and efficiently adapt to symmetrical tasks. The driving motivation behind this work lies in capturing this ability through reinforcement learning. To this end, we introduce Adaptive Symmetry Learning (ASL), a model-minimization actor-critic extension that addresses incomplete or inexact symmetry descriptions by adapting itself during the learning process. ASL consists of a symmetry fitting component and a modular loss function that enforces a common symmetric relation across all states while adapting to the learned policy. The performance of ASL is compared to existing symmetry-enhanced methods in a case study involving a four-legged ant model for multidirectional locomotion tasks. The results demonstrate that ASL is capable of recovering from large perturbations and generalizing knowledge to hidden symmetric states. It achieves comparable or better performance than alternative methods in most scenarios, making it a valuable approach for leveraging model symmetry while compensating for inherent perturbations.

Robust Biped Locomotion Using Deep Reinforcement Learning on Top of an Analytical Control Approach

Apr 21, 2021

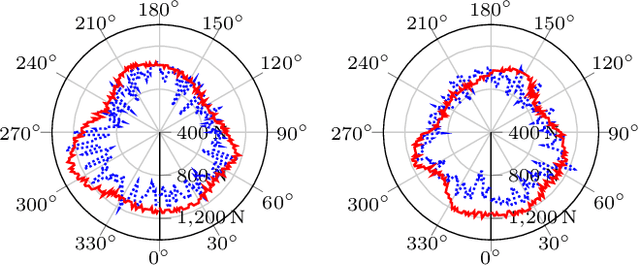

Abstract: This paper proposes a modular framework to generate robust biped locomotion using a tight coupling between an analytical walking approach and deep reinforcement learning. This framework is composed of six main modules which are hierarchically connected to reduce the overall complexity and increase its flexibility. The core of this framework is a specific dynamics model which abstracts a humanoid's dynamics into two masses that model the upper and lower body. This dynamics model is used to design an adaptive reference trajectory planner and an optimal controller, both of which are fully parametric. Furthermore, a learning framework is developed based on a Genetic Algorithm (GA) and Proximal Policy Optimization (PPO) to find the optimum parameters and to learn how to improve the stability of the robot by moving the arms and changing its center of mass (COM) height. A set of simulations is performed to validate the performance of the framework using the official RoboCup 3D League simulation environment. The results validate the performance of the framework, not only in creating a fast and stable gait but also in learning to improve the upper body efficiency.

A CPG-Based Agile and Versatile Locomotion Framework Using Proximal Symmetry Loss

Mar 01, 2021

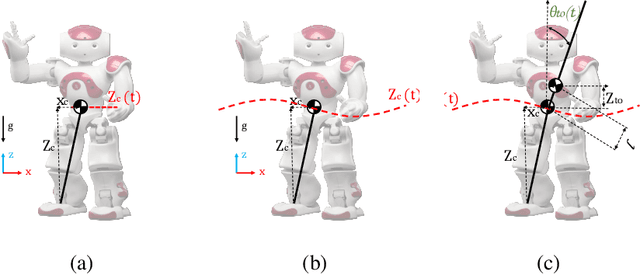

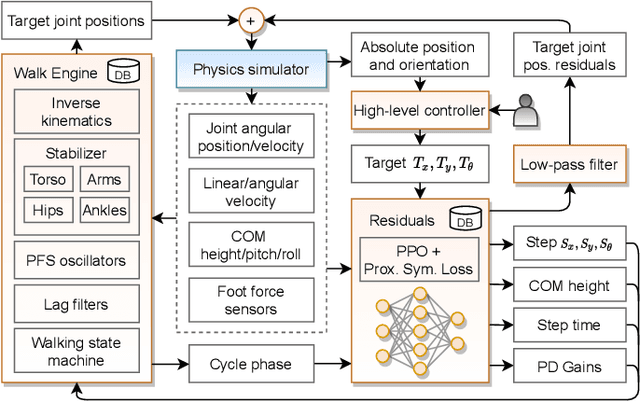

Abstract: Humanoid robots are made to resemble humans, but their locomotion abilities are far from ours in terms of agility and versatility. When humans walk on complex terrains, or face external disturbances, they combine a set of strategies, unconsciously and efficiently, to regain stability. This paper tackles the problem of developing a robust omnidirectional walking framework, which is able to generate versatile and agile locomotion on complex terrains. The Linear Inverted Pendulum Model and Central Pattern Generator concepts are used to develop a closed-loop walk engine that is combined with a reinforcement learning module. This module learns to regulate the walk engine parameters adaptively and generates residuals to adjust the robot's target joint positions (residual physics). Additionally, we propose a proximal symmetry loss to increase the sample efficiency of the Proximal Policy Optimization algorithm by leveraging model symmetries. The effectiveness of the proposed framework was demonstrated and evaluated across a set of challenging simulation scenarios. The robot was able to generalize what it learned in one scenario, by displaying human-like locomotion skills in unforeseen circumstances, even in the presence of noise and external pushes.
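
The idea of leveraging model symmetries can be illustrated with a generic mirror-symmetry penalty. This is only a minimal sketch and not the proximal symmetry loss proposed in the paper, whose exact form the abstract does not give. It assumes that mirroring a state or an action amounts to permuting entries and flipping some signs; the names `mirror` and `symmetry_penalty` and the `(perm, signs)` map format are hypothetical.

```python
def mirror(vector, perm, signs):
    """Mirror a vector: entry i takes the value at index perm[i], scaled by signs[i]."""
    return [signs[i] * vector[perm[i]] for i in range(len(vector))]


def symmetry_penalty(policy, state, state_map, action_map):
    """Mean squared gap between policy(mirrored state) and the mirrored policy(state).

    Generic illustration only (assumption): a perfectly symmetric policy scores 0.
    state_map and action_map are (perm, signs) pairs describing the mirroring.
    """
    mirrored_state = mirror(state, *state_map)
    expected = mirror(policy(state), *action_map)  # what a symmetric policy would output
    actual = policy(mirrored_state)
    return sum((a - e) ** 2 for a, e in zip(actual, expected)) / len(actual)


# Toy setup: state and action are both [left, right]; mirroring swaps the two sides.
swap = ([1, 0], [1, 1])
symmetric_policy = lambda s: [s[0], s[1]]
one_sided_policy = lambda s: [s[0], 0.0]  # ignores the right side

penalty_symmetric = symmetry_penalty(symmetric_policy, [1.0, 2.0], swap, swap)
penalty_one_sided = symmetry_penalty(one_sided_policy, [1.0, 2.0], swap, swap)
```

In a training loop, a term of this kind would typically be added to the policy loss with a weight, so that symmetric behavior is encouraged rather than hard-coded.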

A Hybrid Biped Stabilizer System Based on Analytical Control and Learning of Symmetrical Residual Physics

Nov 27, 2020

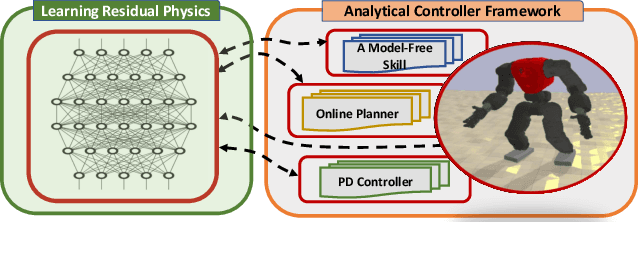

Abstract: Although humanoid robots are made to resemble humans, their stability is not yet comparable to ours. When facing external disturbances, humans efficiently and unconsciously combine a set of strategies to regain stability. This work deals with the problem of developing a robust hybrid stabilizer system for biped robots. The Linear Inverted Pendulum (LIP) and Divergent Component of Motion (DCM) concepts are used to formulate the biped locomotion and stabilization as an analytical control framework. On top of that, a neural network with symmetric partial data augmentation learns residuals to adjust the joints' positions, thereby improving the robot's stability when facing external perturbations. The performance of the proposed framework was evaluated across a set of challenging simulation scenarios. The results show a considerable improvement over the baseline in recovering from large external forces. Moreover, the produced behaviors are human-like and robust to considerably noisy environments.
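
For readers unfamiliar with the LIP and DCM concepts mentioned above, the following sketch shows their standard textbook form in one dimension, plus a simple way a learned residual might be added to an analytical joint target. It is an illustration under stated assumptions, not the paper's controller: the function names, the Euler integration step, and the residual clipping bound `max_residual` are all hypothetical.

```python
import math

GRAVITY = 9.81  # m/s^2


def lip_natural_frequency(com_height):
    """Natural frequency of the Linear Inverted Pendulum: omega = sqrt(g / z_c)."""
    return math.sqrt(GRAVITY / com_height)


def dcm(com_pos, com_vel, com_height):
    """Divergent Component of Motion: xi = x + x_dot / omega."""
    return com_pos + com_vel / lip_natural_frequency(com_height)


def lip_step(com_pos, com_vel, zmp, com_height, dt):
    """One semi-implicit Euler step of the LIP dynamics x_ddot = omega^2 * (x - zmp)."""
    omega = lip_natural_frequency(com_height)
    com_acc = omega ** 2 * (com_pos - zmp)
    next_vel = com_vel + com_acc * dt
    next_pos = com_pos + next_vel * dt
    return next_pos, next_vel


def apply_residuals(analytical_targets, residuals, max_residual=0.1):
    """Add clipped residuals (assumed to come from a learned policy) to joint targets."""
    return [
        q + max(-max_residual, min(max_residual, r))
        for q, r in zip(analytical_targets, residuals)
    ]


# With the COM at rest directly above the ZMP, the pendulum stays in equilibrium.
rest_pos, rest_vel = lip_step(0.0, 0.0, zmp=0.0, com_height=0.8, dt=0.01)
# A COM ahead of the ZMP accelerates away from it, which is why the DCM diverges.
pushed_pos, pushed_vel = lip_step(0.05, 0.0, zmp=0.0, com_height=0.8, dt=0.01)
adjusted = apply_residuals([0.5, -0.2], [0.05, 0.5])
```

Clipping the residual keeps the analytical controller in charge of the nominal motion, with the learned term limited to small corrections; the bound used here is arbitrary.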
