Mehul A. Shah

The Design of an LLM-powered Unstructured Analytics System

Sep 04, 2024

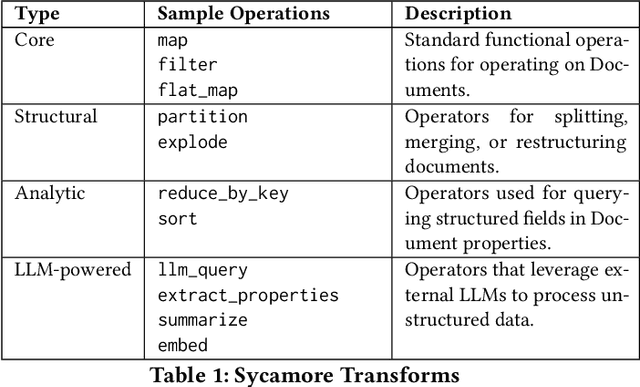

Abstract: LLMs demonstrate an uncanny ability to process unstructured data, and as such, have the potential to go beyond search and run complex, semantic analyses at scale. We describe the design of an unstructured analytics system, Aryn, and the tenets and use cases that motivate its design. With Aryn, users can specify queries in natural language, and the system automatically determines a semantic plan and executes it to compute an answer from a large collection of unstructured documents using LLMs. At the core of Aryn is Sycamore, a declarative document processing engine built using Ray that provides a reliable distributed abstraction called DocSets. Sycamore allows users to analyze, enrich, and transform complex documents at scale. Aryn also comprises Luna, a query planner that translates natural language queries to Sycamore scripts, and the Aryn Partitioner, which takes raw PDFs and document images and converts them to DocSets for downstream processing. Using Aryn, we demonstrate a real-world use case for analyzing accident reports from the National Transportation Safety Board (NTSB), and discuss some of the major challenges we encountered in deploying Aryn in the wild.
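To make the idea of a declarative, lazily executed document pipeline concrete, here is a minimal single-process sketch. It is not Sycamore's API: the `Document` and `DocSet` classes, the `extract_weather` step (a keyword rule standing in for an LLM extraction call), and the toy accident reports are all hypothetical. The point is only that a planner can emit a chain of transformations that runs when an action such as `count()` is invoked.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass(frozen=True)
class Document:
    """A toy document: raw text plus extracted properties (hypothetical schema)."""
    text: str
    properties: dict = field(default_factory=dict)


class DocSet:
    """Hypothetical single-process stand-in for a DocSet.

    Transformations are recorded lazily as plan steps and only run
    when an action such as count() or take_all() is called.
    """

    def __init__(self, source: Iterable[Document], plan=None):
        self._source = list(source)
        self._plan = list(plan or [])

    def map(self, fn: Callable[[Document], Document]) -> "DocSet":
        return DocSet(self._source, self._plan + [("map", fn)])

    def filter(self, pred: Callable[[Document], bool]) -> "DocSet":
        return DocSet(self._source, self._plan + [("filter", pred)])

    def take_all(self) -> list:
        # Execute the recorded plan in order over the source documents.
        docs = self._source
        for kind, fn in self._plan:
            if kind == "map":
                docs = [fn(d) for d in docs]
            else:
                docs = [d for d in docs if fn(d)]
        return docs

    def count(self) -> int:
        return len(self.take_all())


def extract_weather(doc: Document) -> Document:
    # Stand-in for an LLM extraction call: a simple keyword rule (assumption).
    mentions_wind = "wind" in doc.text.lower()
    return Document(doc.text, {**doc.properties, "weather_related": mentions_wind})


# Hypothetical toy reports, not real NTSB data.
reports = [
    Document("Gusty wind during landing caused a runway excursion.", {"state": "CO"}),
    Document("Engine lost power after fuel exhaustion.", {"state": "TX"}),
    Document("Strong crosswind; aircraft ground-looped.", {"state": "CO"}),
]

# A plan of the kind a query planner might emit for
# "How many Colorado incidents were weather-related?"
plan = (
    DocSet(reports)
    .filter(lambda d: d.properties.get("state") == "CO")
    .map(extract_weather)
    .filter(lambda d: d.properties["weather_related"])
)
print(plan.count())  # 2
```

Filtering on cheap metadata before the (expensive) extraction step is the kind of ordering decision a planner can make once the query is expressed as a plan rather than as free text.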

Explaining a machine learning decision to physicians via counterfactuals

Jun 10, 2023

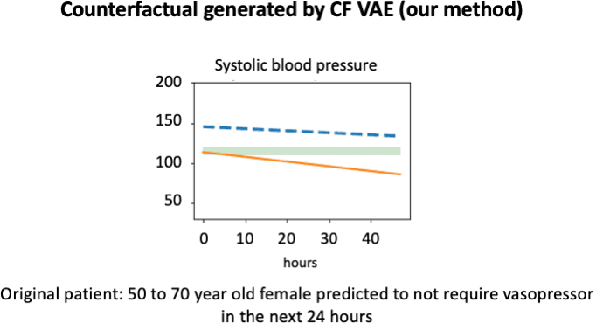

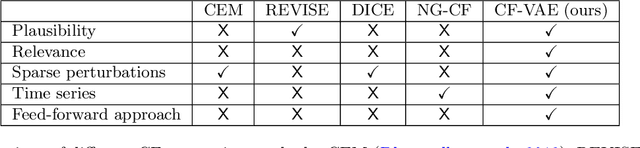

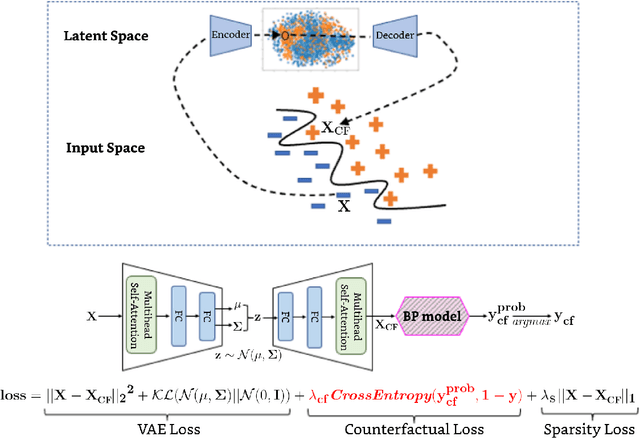

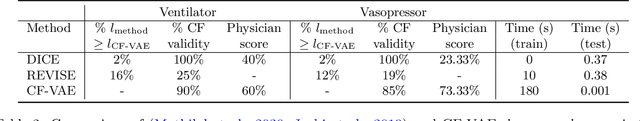

Abstract: Machine learning models perform well on several healthcare tasks and can help reduce the burden on the healthcare system. However, the lack of explainability is a major roadblock to their adoption in hospitals. *How can the decision of an ML model be explained to a physician?* The explanations considered in this paper are counterfactuals (CFs), hypothetical scenarios that would have resulted in the opposite outcome. Specifically, time-series CFs are investigated, inspired by the way physicians converse and reason out decisions: "I would have given the patient a vasopressor if their blood pressure was lower and falling." Key properties of CFs that are particularly meaningful in clinical settings are outlined: physiological plausibility, relevance to the task, and sparse perturbations. Past work on CF generation does not satisfy these properties, in particular plausibility: it does not generate realistic time-series CFs. A variational autoencoder (VAE)-based approach is proposed that captures these desired properties. The method produces CFs that improve on prior approaches quantitatively (more plausible CFs as evaluated by their likelihood w.r.t. the original data distribution, and 100× faster at generating CFs) and qualitatively (2× more plausible and relevant) as evaluated by three physicians.
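As an illustration of the "sparse perturbations" property, the sketch below scores a counterfactual time series by the fraction of time steps it leaves unchanged. This is a simple proxy chosen for illustration, not the metric used in the paper; the function name, the tolerance `tol`, and the blood-pressure readings are hypothetical.

```python
def perturbation_sparsity(original, counterfactual, tol=1e-6):
    """Return the fraction of time steps the counterfactual leaves unchanged.

    A simple illustrative proxy for "sparse perturbations" (assumption):
    1.0 means nothing changed, 0.0 means every time step was perturbed.
    """
    if len(original) != len(counterfactual) or not original:
        raise ValueError("series must be non-empty and of equal length")
    changed = sum(1 for a, b in zip(original, counterfactual) if abs(a - b) > tol)
    return 1.0 - changed / len(original)


# Hypothetical mean arterial pressure readings (mmHg), one per hour.
observed = [72, 70, 71, 69, 70]
# A counterfactual in the spirit of "lower and falling": only the last
# three hours are edited, so the first two readings are untouched.
counterfactual = [72, 70, 66, 62, 58]
print(perturbation_sparsity(observed, counterfactual))  # 0.4
```

A sparsity score alone says nothing about plausibility; a counterfactual could change a single reading to a physiologically impossible value and still score well, which is why the abstract lists plausibility and relevance alongside sparsity.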
