Maulana Bisyir Azhari

Robust Tightly-Coupled Filter-Based Monocular Visual-Inertial State Estimation and Graph-Based Evaluation for Autonomous Drone Racing

Mar 03, 2026

Abstract:Autonomous drone racing (ADR) demands state estimation that is simultaneously computationally efficient and resilient to the perceptual degradation experienced during extreme velocity and maneuvers. Traditional frameworks typically rely on conventional visual-inertial pipelines with loosely-coupled gate-based Perspective-n-Points (PnP) corrections that suffer from a rigid requirement for four visible features and information loss in intermediate steps. Furthermore, the absence of GNSS and Motion Capture systems in uninstrumented, competitive racing environments makes the objective evaluation of such systems remarkably difficult. To address these limitations, we propose ADR-VINS, a robust, monocular visual-inertial state estimation framework based on an Error-State Kalman Filter (ESKF) tailored for autonomous drone racing. Our approach integrates direct pixel reprojection errors from gate corner features as innovation terms within the filter. By bypassing intermediate PnP solvers, ADR-VINS maintains valid state updates with as few as two visible corners and utilizes robust reweighting instead of RANSAC-based schemes to handle outliers, enhancing computational efficiency. Furthermore, we introduce ADR-FGO, an offline Factor-Graph Optimization framework to generate high-fidelity reference trajectories that facilitate post-flight performance evaluation and analysis in uninstrumented, GNSS-denied environments. The proposed system is validated using the TII-RATM dataset, where ADR-VINS achieves an average RMS translation error of 0.134 m, while ADR-FGO yields 0.060 m as a smoothing-based reference. Finally, ADR-VINS was successfully deployed in the A2RL Drone Championship Season 2, maintaining stable and robust estimation despite noisy detections during high-agility flight at top speeds of 20.9 m/s. We further utilize ADR-FGO for post-flight evaluation in uninstrumented racing environments.
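As a rough illustration of the idea of using a gate-corner pixel reprojection error as a filter innovation with robust reweighting, the sketch below computes the residual for one corner under a pinhole camera model and derives a Huber weight from it. This is not the ADR-VINS implementation: the abstract does not name the robust kernel, so the Huber weight, the function names, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the threshold `delta` are all assumptions made for illustration.

```python
import math


def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a 3D point (camera frame, metres) to pixels."""
    x, y, z = point_cam
    if z <= 0.0:
        raise ValueError("point is behind the camera")
    return (fx * x / z + cx, fy * y / z + cy)


def reprojection_residual(detected_px, point_cam, fx, fy, cx, cy):
    """Innovation for one corner: detected pixel minus predicted pixel."""
    u, v = project(point_cam, fx, fy, cx, cy)
    return (detected_px[0] - u, detected_px[1] - v)


def huber_weight(residual, delta):
    """Huber weight (assumed kernel): 1 for small residuals, delta/norm otherwise."""
    norm = math.hypot(residual[0], residual[1])
    return 1.0 if norm <= delta else delta / norm


# Hypothetical gate corner 5 m ahead of the camera, with made-up intrinsics.
corner_cam = (0.5, -0.25, 5.0)
intrinsics = dict(fx=400.0, fy=400.0, cx=320.0, cy=240.0)

good = reprojection_residual((361.0, 220.0), corner_cam, **intrinsics)
noisy = reprojection_residual((363.0, 224.0), corner_cam, **intrinsics)

w_good = huber_weight(good, delta=2.5)    # residual norm 1 px, full weight
w_noisy = huber_weight(noisy, delta=2.5)  # residual norm 5 px, down-weighted
```

In a filter, one common way to apply such a weight is to divide the measurement noise covariance of that corner by it, so a down-weighted detection pulls the state less. Because each corner contributes its own two-dimensional residual, an update of this form does not need the four correspondences a PnP solver requires.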

Drift-Corrected Monocular VIO and Perception-Aware Planning for Autonomous Drone Racing

Dec 23, 2025

Abstract:The Abu Dhabi Autonomous Racing League (A2RL) x Drone Champions League (DCL) competition requires teams to perform high-speed autonomous drone racing using only a single camera and a low-quality inertial measurement unit -- a minimal sensor set that mirrors expert human drone racing pilots. This sensor limitation makes the system susceptible to drift from Visual-Inertial Odometry (VIO), particularly during long and fast flights with aggressive maneuvers. This paper presents the system developed for the championship, which achieved competitive performance. Our approach corrected VIO drift by fusing its output with global position measurements derived from a YOLO-based gate detector using a Kalman filter. A perception-aware planner generated trajectories that balance speed with the need to keep gates visible for the perception system. The system demonstrated high performance, securing podium finishes across multiple categories: third place in the AI Grand Challenge with a top speed of 43.2 km/h, second place in the AI Drag Race with over 59 km/h, and second place in the AI Multi-Drone Race. We detail the complete architecture and present a performance analysis based on experimental data from the competition, contributing our insights on building a successful system for monocular vision-based autonomous drone flight.
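The drift-correction idea in this abstract, fusing a drifting VIO estimate with occasional global position measurements through a Kalman filter, can be sketched for a single position axis as follows. This is a minimal scalar example under assumed values, not the authors' filter: the function names, the noise variances `q` and `r`, and the numbers are hypothetical.

```python
def predict(x, p, vio_delta, q):
    """Propagate position with the VIO increment; uncertainty grows by q."""
    return x + vio_delta, p + q


def update(x, p, z, r):
    """Correct the position with a global measurement z of variance r."""
    k = p / (p + r)  # Kalman gain
    return x + k * (z - x), (1.0 - k) * p


# Hypothetical numbers: the estimate has drifted to 10.0 m with variance 3.0.
x, p = 10.0, 3.0

# VIO reports 0.5 m of motion; the assumed process noise adds 1.0 of variance.
x, p = predict(x, p, vio_delta=0.5, q=1.0)

# A gate detection implies the drone is at 9.5 m, with assumed variance 4.0.
x, p = update(x, p, z=9.5, r=4.0)
```

With equal prior and measurement variances the gain is 0.5, so the corrected position lands halfway between the propagated VIO estimate and the gate-derived measurement, and the variance halves.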

DINO-VO: A Feature-based Visual Odometry Leveraging a Visual Foundation Model

Jul 17, 2025

Abstract:Learning-based monocular visual odometry (VO) poses robustness, generalization, and efficiency challenges in robotics. Recent advances in visual foundation models, such as DINOv2, have improved robustness and generalization in various vision tasks, yet their integration in VO remains limited due to coarse feature granularity. In this paper, we present DINO-VO, a feature-based VO system leveraging the DINOv2 visual foundation model for its sparse feature matching. To address the integration challenge, we propose a salient keypoints detector tailored to DINOv2's coarse features. Furthermore, we complement DINOv2's robust-semantic features with fine-grained geometric features, resulting in more localizable representations. Finally, a transformer-based matcher and differentiable pose estimation layer enable precise camera motion estimation by learning good matches. Against prior detector-descriptor networks like SuperPoint, DINO-VO demonstrates greater robustness in challenging environments. Furthermore, we show superior accuracy and generalization of the proposed feature descriptors against standalone DINOv2 coarse features. DINO-VO outperforms prior frame-to-frame VO methods on the TartanAir and KITTI datasets and is competitive on the EuRoC dataset, while running efficiently at 72 FPS with less than 1 GB of memory usage on a single GPU. Moreover, it performs competitively against Visual SLAM systems on outdoor driving scenarios, showcasing its generalization capabilities.

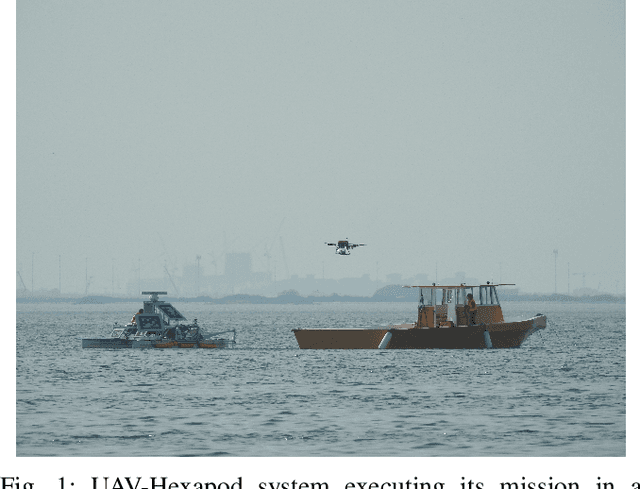

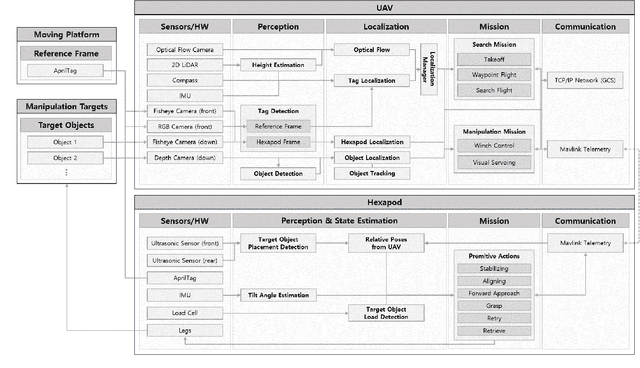

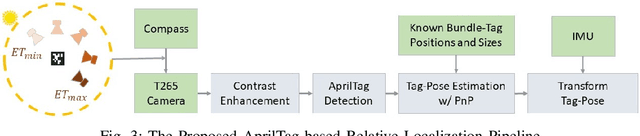

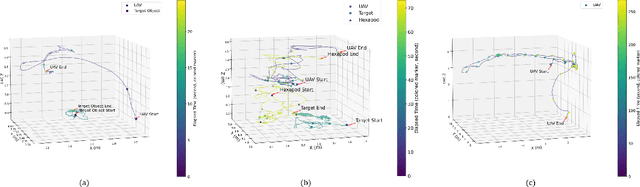

A Collaborative Team of UAV-Hexapod for an Autonomous Retrieval System in GNSS-Denied Maritime Environments

Oct 12, 2024

Abstract:We present an integrated UAV-hexapod robotic system designed for GNSS-denied maritime operations, capable of autonomous deployment and retrieval of a hexapod robot via a winch mechanism installed on a UAV. This system is intended to address the challenges of localization, control, and mobility in dynamic maritime environments. Our solution leverages sensor fusion techniques, combining optical flow, LiDAR, and depth data for precise localization. Experimental results demonstrate the effectiveness of this system in real-world scenarios, validating its performance during field tests in both controlled and operational conditions in the MBZIRC 2023 Maritime Challenge.

SPIBOT: A Drone-Tethered Mobile Gripper for Robust Aerial Object Retrieval in Dynamic Environments

Sep 24, 2024

Abstract:In real-world field operations, aerial grasping systems face significant challenges in dynamic environments due to strong winds, shifting surfaces, and the need to handle heavy loads. Particularly when dealing with heavy objects, the powerful propellers of the drone can inadvertently blow the target object away as it approaches, making the task even more difficult. To address these challenges, we introduce SPIBOT, a novel drone-tethered mobile gripper system designed for robust and stable autonomous target retrieval. SPIBOT operates via a tether, much like a spider, allowing the drone to maintain a safe distance from the target. To ensure both stable mobility and secure grasping capabilities, SPIBOT is equipped with six legs and sensors to estimate the robot's and mission's states. It is designed with a reduced volume and weight compared to other hexapod robots, allowing it to be easily stowed under the drone and reeled in as needed. Designed for the 2024 MBZIRC Maritime Grand Challenge, SPIBOT is built to retrieve a 1kg target object in the highly dynamic conditions of the moving deck of a ship. This system integrates a real-time action selection algorithm that dynamically adjusts the robot's actions based on proximity to the mission goal and environmental conditions, enabling rapid and robust mission execution. Experimental results across various terrains, including a pontoon on a lake, a grass field, and rubber mats on coastal sand, demonstrate SPIBOT's ability to efficiently and reliably retrieve targets. SPIBOT swiftly converges on the target and completes its mission, even when dealing with irregular initial states and noisy information introduced by the drone.
