Matthias Pallasch

Memory Gym: Partially Observable Challenges to Memory-Based Agents in Endless Episodes

Sep 29, 2023

Abstract: Memory Gym introduces a unique benchmark designed to test Deep Reinforcement Learning agents, specifically comparing Gated Recurrent Unit (GRU) against Transformer-XL (TrXL), on their ability to memorize long sequences, withstand noise, and generalize. It features partially observable 2D environments with discrete controls, namely Mortar Mayhem, Mystery Path, and Searing Spotlights. These originally finite environments are extrapolated to novel endless tasks that act as an automatic curriculum, drawing inspiration from the car game "I packed my bag". These endless tasks are not only beneficial for evaluating efficiency but also intriguingly valuable for assessing the effectiveness of approaches in memory-based agents. Given the scarcity of publicly available memory baselines, we contribute an implementation driven by TrXL and Proximal Policy Optimization. This implementation leverages TrXL as episodic memory using a sliding window approach. In our experiments on the finite environments, TrXL demonstrates superior sample efficiency in Mystery Path and outperforms in Mortar Mayhem. However, GRU is more efficient on Searing Spotlights. Most notably, in all endless tasks, GRU makes a remarkable resurgence, consistently outperforming TrXL by significant margins.

On the Verge of Solving Rocket League using Deep Reinforcement Learning and Sim-to-sim Transfer

May 24, 2022

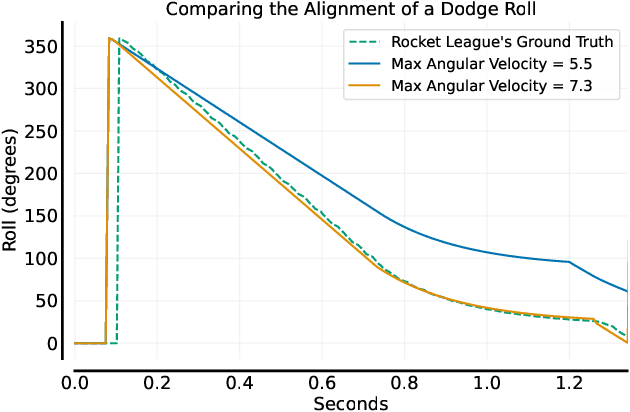

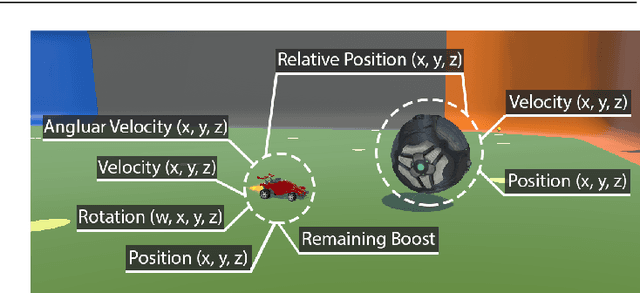

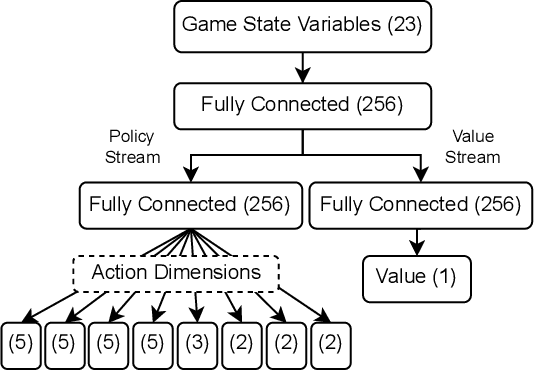

Abstract: Autonomously trained agents that are supposed to play video games reasonably well rely either on fast simulation speeds or heavy parallelization across thousands of machines running concurrently. This work explores a third way that is established in robotics, namely sim-to-real transfer, or if the game is considered a simulation itself, sim-to-sim transfer. In the case of Rocket League, we demonstrate that single behaviors of goalies and strikers can be successfully learned using Deep Reinforcement Learning in the simulation environment and transferred back to the original game. Although the implemented training simulation is to some extent inaccurate, the goalkeeping agent saves nearly 100% of its faced shots once transferred, while the striking agent scores in about 75% of cases. Therefore, the trained agent is robust enough and able to generalize to the target domain of Rocket League.

Generalization, Mayhems and Limits in Recurrent Proximal Policy Optimization

May 23, 2022

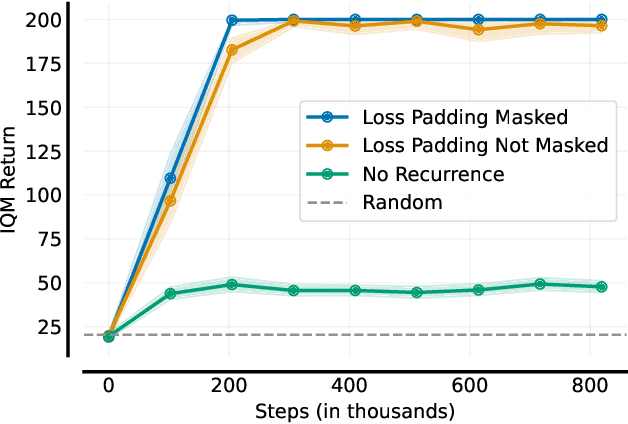

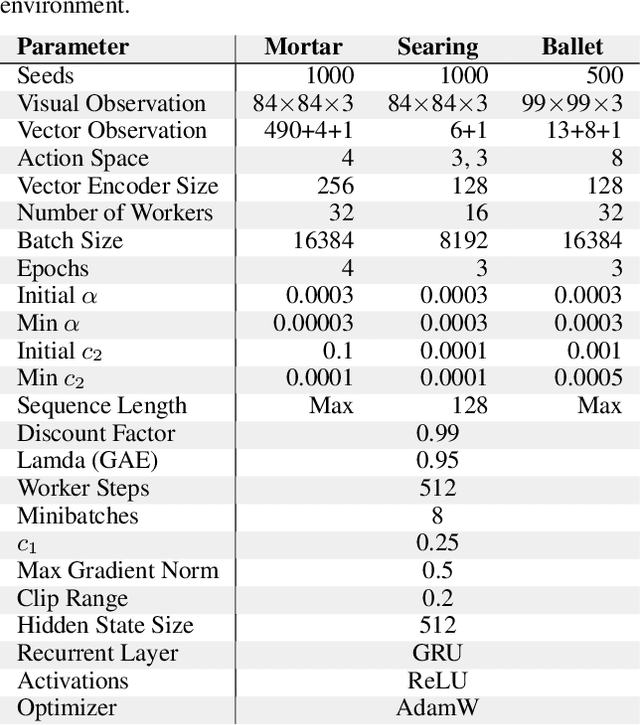

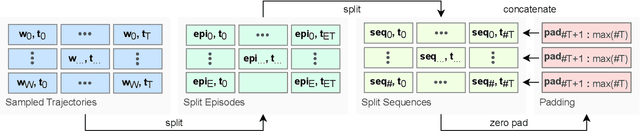

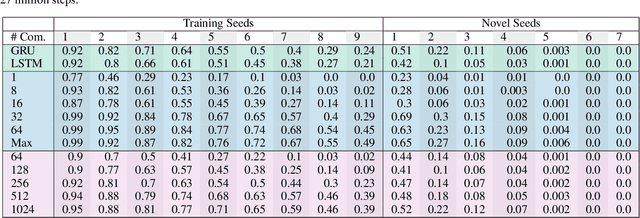

Abstract: At first sight it may seem straightforward to use recurrent layers in Deep Reinforcement Learning algorithms to enable agents to make use of memory in the setting of partially observable environments. Starting from widely used Proximal Policy Optimization (PPO), we highlight vital details that one must get right when adding recurrence to achieve a correct and efficient implementation, namely: properly shaping the neural net's forward pass, arranging the training data, correspondingly selecting hidden states for sequence beginnings and masking paddings for loss computation. We further explore the limitations of recurrent PPO by benchmarking the contributed novel environments Mortar Mayhem and Searing Spotlights that challenge the agent's memory beyond solely capacity and distraction tasks. Remarkably, we can demonstrate a transition to strong generalization in Mortar Mayhem when scaling the number of training seeds, while the agent does not succeed on Searing Spotlights, which seems to be a tough challenge for memory-based agents.
