Martin M. Stein

Scaling on-chip photonic neural processors using arbitrarily programmable wave propagation

Feb 27, 2024

Abstract: On-chip photonic processors for neural networks have potential benefits in both speed and energy efficiency but have not yet reached the scale at which they can outperform electronic processors. The dominant paradigm for designing on-chip photonics is to make networks of relatively bulky discrete components connected by one-dimensional waveguides. A far more compact alternative is to avoid explicitly defining any components and instead sculpt the continuous substrate of the photonic processor to directly perform the computation using waves freely propagating in two dimensions. We propose and demonstrate a device whose refractive index as a function of space, $n(x,z)$, can be rapidly reprogrammed, allowing arbitrary control over the wave propagation in the device. Our device, a 2D-programmable waveguide, combines photoconductive gain with the electro-optic effect to achieve massively parallel modulation of the refractive index of a slab waveguide, with an index modulation depth of $10^{-3}$ and approximately $10^4$ programmable degrees of freedom. We used a prototype device with a functional area of $12\,\text{mm}^2$ to perform neural-network inference with up to 49-dimensional input vectors in a single pass, achieving 96% accuracy on vowel classification and 86% accuracy on $7 \times 7$-pixel MNIST handwritten-digit classification. This is a scale beyond that of previous photonic chips relying on discrete components, illustrating the benefit of the continuous-waves paradigm. In principle, with large enough chip area, the reprogrammability of the device's refractive index distribution enables the reconfigurable realization of any passive, linear photonic circuit or device. This promises the development of more compact and versatile photonic systems for a wide range of applications, including optical processing, smart sensing, spectroscopy, and optical communications.

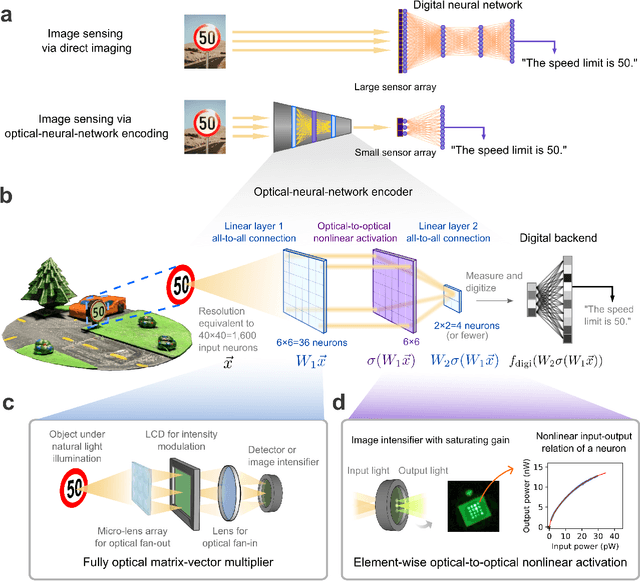

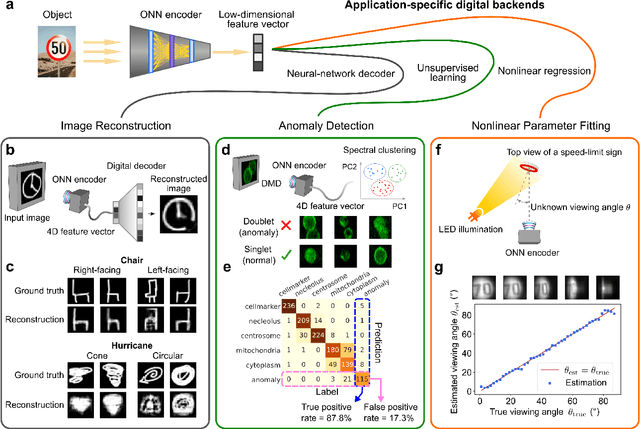

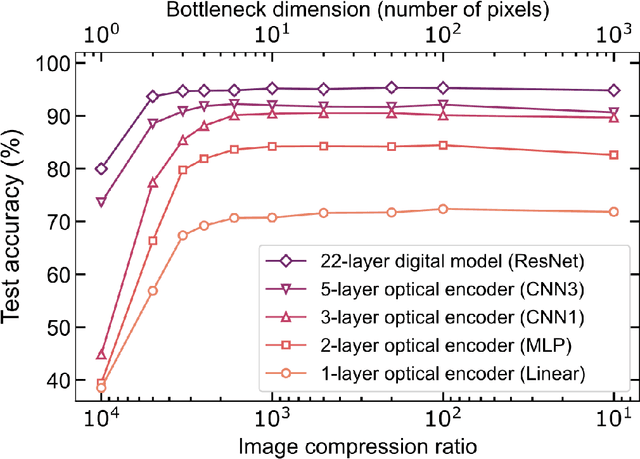

Image sensing with multilayer, nonlinear optical neural networks

Jul 27, 2022

Abstract: Optical imaging is commonly used for both scientific and technological applications across industry and academia. In image sensing, a measurement, such as of an object's position, is performed by computational analysis of a digitized image. An emerging image-sensing paradigm breaks this delineation between data collection and analysis by designing optical components to perform not imaging, but encoding. By optically encoding images into a compressed, low-dimensional latent space suitable for efficient post-analysis, these image sensors can operate with fewer pixels and fewer photons, allowing higher-throughput, lower-latency operation. Optical neural networks (ONNs) offer a platform for processing data in the analog, optical domain. ONN-based sensors have, however, been limited to linear processing, yet nonlinearity is a prerequisite for depth, and multilayer NNs significantly outperform shallow NNs on many tasks. Here, we realize a multilayer ONN pre-processor for image sensing, using a commercial image intensifier as a parallel optoelectronic, optical-to-optical nonlinear activation function. We demonstrate that the nonlinear ONN pre-processor can achieve compression ratios of up to 800:1 while still enabling high accuracy across several representative computer-vision tasks, including machine-vision benchmarks, flow-cytometry image classification, and identification of objects in real scenes. In all cases we find that the ONN's nonlinearity and depth allowed it to outperform a purely linear ONN encoder. Although our experiments are specialized to ONN sensors for incoherent-light images, alternative ONN platforms should facilitate a range of ONN sensors. These ONN sensors may surpass conventional sensors by pre-processing optical information in spatial, temporal, and/or spectral dimensions, potentially with coherent and quantum qualities, all natively in the optical domain.

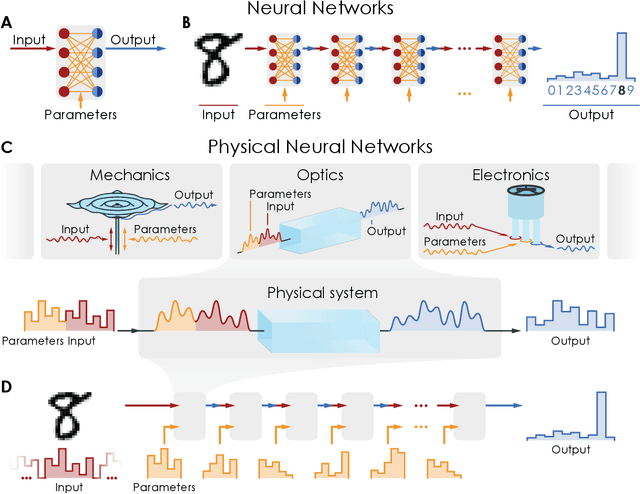

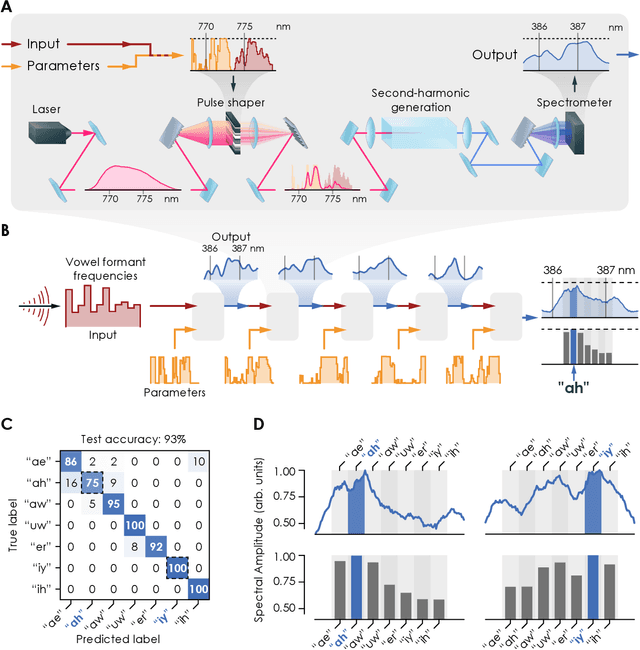

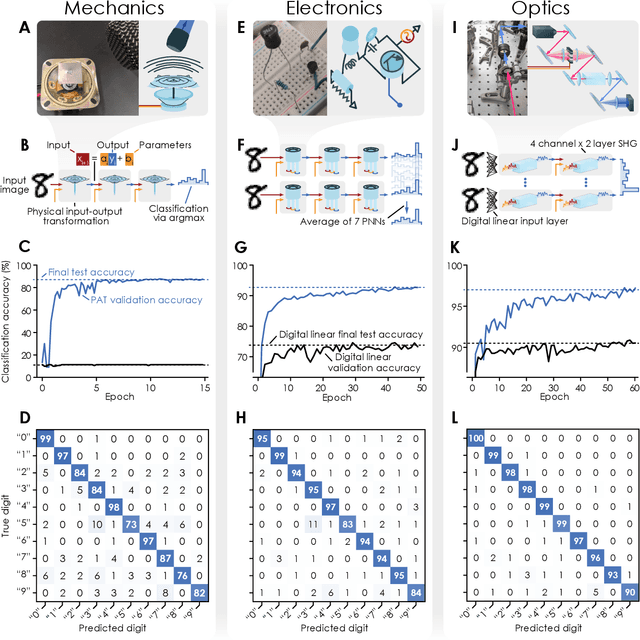

Deep physical neural networks enabled by a backpropagation algorithm for arbitrary physical systems

Apr 27, 2021

Abstract: Deep neural networks have become a pervasive tool in science and engineering. However, modern deep neural networks' growing energy requirements now increasingly limit their scaling and broader use. We propose a radical alternative for implementing deep neural network models: Physical Neural Networks. We introduce a hybrid physical-digital algorithm called Physics-Aware Training to efficiently train sequences of controllable physical systems to act as deep neural networks. This method automatically trains the functionality of any sequence of real physical systems, directly, using backpropagation, the same technique used for modern deep neural networks. To illustrate their generality, we demonstrate physical neural networks with three diverse physical systems: optical, mechanical, and electrical. Physical neural networks may facilitate unconventional machine learning hardware that is orders of magnitude faster and more energy efficient than conventional electronic processors.
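The hybrid physical-digital idea behind Physics-Aware Training can be illustrated with a toy sketch: the forward pass is taken from the physical system itself, while the gradient for the parameter update comes from an approximate, differentiable digital model. Everything below is a hypothetical stand-in rather than the authors' implementation. The `physical_system` function, its mismatch terms (`1.1` and `0.05`), the scalar parameter `w`, and the learning rate are assumptions chosen only to make the example self-contained.

```python
import math
import random

def physical_system(x, w):
    """Hypothetical stand-in for a real device (assumed, not from the paper).
    The trainer treats it as a black box and never differentiates it."""
    return math.tanh(1.1 * w * x + 0.05)

def digital_model_grad_w(x, w):
    """Gradient d/dw of an imperfect digital model, tanh(w * x).
    The model deliberately omits the device's gain and offset mismatch."""
    return x * (1.0 - math.tanh(w * x) ** 2)

def mean_loss(data, w):
    """Mean squared-error loss measured on the 'physical' outputs."""
    return sum(0.5 * (physical_system(x, w) - t) ** 2 for x, t in data) / len(data)

def train(data, w=0.1, lr=0.1, epochs=200):
    """Hybrid loop: physical forward pass, digital backward pass."""
    for _ in range(epochs):
        for x, target in data:
            y = physical_system(x, w)   # forward pass on the "hardware"
            error = y - target          # dL/dy for L = 0.5 * (y - target)^2
            # Backward pass: chain rule using the digital model's gradient.
            w -= lr * error * digital_model_grad_w(x, w)
    return w

random.seed(0)
TRUE_W = 0.8  # assumed target setting, used only to generate toy data
inputs = [random.uniform(-2.0, 2.0) for _ in range(20)]
data = [(x, physical_system(x, TRUE_W)) for x in inputs]

loss_before = mean_loss(data, 0.1)
w_trained = train(data)
loss_after = mean_loss(data, w_trained)
print(f"w = {w_trained:.3f}, loss {loss_before:.4f} -> {loss_after:.2e}")
```

In this toy setting the digital model is wrong about the device's gain and offset, yet its gradient still points in a useful direction because the error is always measured on the physical output. That is the intuition the sketch is meant to convey; real systems are multi-dimensional and noisy, and would need a learned or simulated digital model.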
