Markus Hanselmann

Deep recurrent Gaussian process with variational Sparse Spectrum approximation

Sep 27, 2019

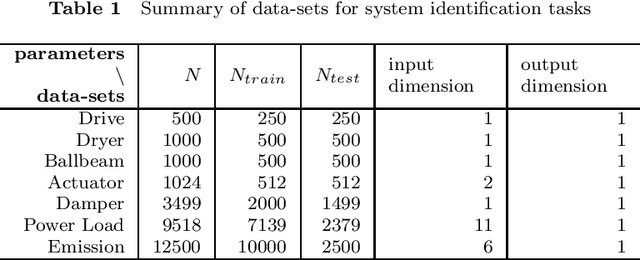

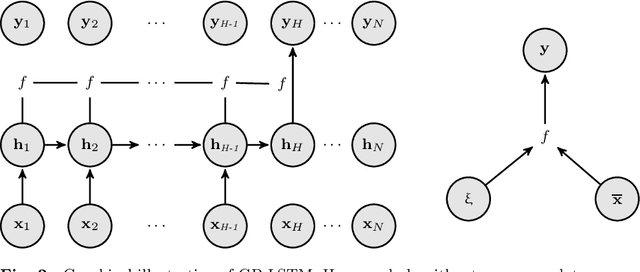

Abstract: Modeling sequential data has become increasingly important in practice, with applications such as autonomous driving, virtual sensors, and weather forecasting. To model such systems, so-called recurrent models are frequently used. In this paper we introduce several new Deep recurrent Gaussian process (DRGP) models based on the Sparse Spectrum Gaussian process (SSGP) and its improved version, the variational Sparse Spectrum Gaussian process (VSSGP). We follow the recurrent structure given by an existing DRGP based on a specific variational sparse Nyström approximation, the recurrent Gaussian process (RGP). Similar to previous work, we also variationally integrate out the input space and hence can propagate uncertainty through the Gaussian process (GP) layers. Our approach can deal with a larger class of covariance functions than the RGP, because its spectral nature allows variational integration in all stationary cases. Furthermore, we combine the (variational) Sparse Spectrum ((V)SS) approximations with a well-known inducing-input regularization framework. We improve over current state-of-the-art methods in prediction accuracy on the experimental data sets used for their evaluation and introduce a new data set for engine control, named Emission.
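The sparse spectrum idea mentioned in the abstract can be illustrated with the standard random Fourier feature construction for a stationary kernel. The following is a minimal sketch, assuming an RBF kernel with a single lengthscale; the function names and sizes are hypothetical, and it shows neither the variational treatment (VSSGP) nor the recurrent DRGP structure of the paper.

```python
import numpy as np

def sparse_spectrum_features(X, omegas, phases):
    """Map inputs X of shape (n, d) to m random Fourier features.

    omegas: (m, d) spectral points sampled from the kernel's spectral density.
    phases: (m,) random phases drawn uniformly from [0, 2*pi).
    """
    m = omegas.shape[0]
    return np.sqrt(2.0 / m) * np.cos(X @ omegas.T + phases)

def rbf_kernel(X, Y, lengthscale=1.0):
    """Exact RBF kernel matrix, used only to check the approximation."""
    sq = np.sum(X**2, axis=1)[:, None] + np.sum(Y**2, axis=1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-0.5 * sq / lengthscale**2)

# Assumed toy setup (not taken from the paper): 2-D inputs, 5000 spectral points.
rng = np.random.default_rng(0)
d, m, lengthscale = 2, 5000, 1.0

# For the RBF kernel the spectral density is Gaussian with std 1 / lengthscale.
omegas = rng.normal(scale=1.0 / lengthscale, size=(m, d))
phases = rng.uniform(0.0, 2.0 * np.pi, size=m)

X = rng.normal(size=(5, d))
Phi = sparse_spectrum_features(X, omegas, phases)

K_approx = Phi @ Phi.T                  # low-rank approximation of the kernel matrix
K_exact = rbf_kernel(X, X, lengthscale)
max_abs_error = np.max(np.abs(K_approx - K_exact))
```

Because only the spectral density of the kernel is sampled, the same construction applies to any stationary covariance function, which is consistent with the abstract's remark about the spectral nature of the approach.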

CANet: An Unsupervised Intrusion Detection System for High Dimensional CAN Bus Data

Jun 06, 2019

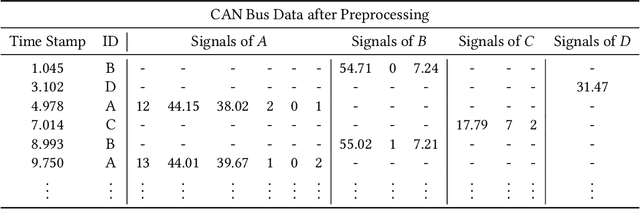

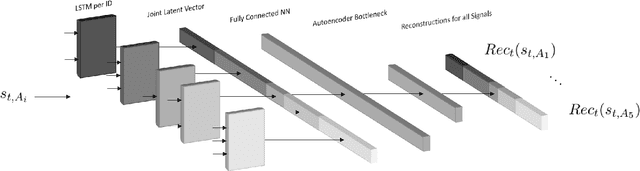

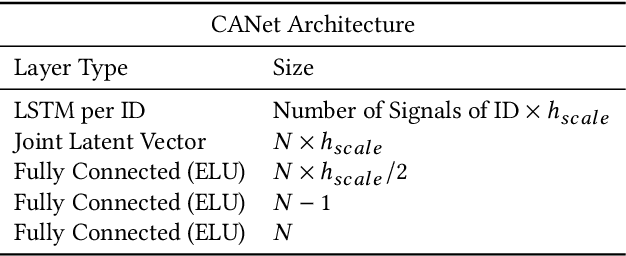

Abstract: We propose a novel neural network architecture for detecting intrusions on the CAN bus. The Controller Area Network (CAN) is the standard communication method between the Electronic Control Units (ECUs) of automobiles. However, CAN lacks security mechanisms, and it has recently been shown that it can be attacked remotely. Hence, it is desirable to monitor CAN traffic to detect intrusions. To detect both known and unknown intrusion scenarios, we consider a novel unsupervised learning approach which we call CANet. To our knowledge, this is the first deep-learning-based intrusion detection system (IDS) that takes individual CAN messages with different IDs and evaluates them at the moment they are received. This is a significant advancement because messages with different IDs are typically sent at different times and with different frequencies. Our method is evaluated on real and synthetic CAN data. For reproducibility of the method, our synthetic data is publicly available. A comparison with previous machine-learning-based methods shows that CANet outperforms them by a significant margin.

Unpaired High-Resolution and Scalable Style Transfer Using Generative Adversarial Networks

Oct 10, 2018

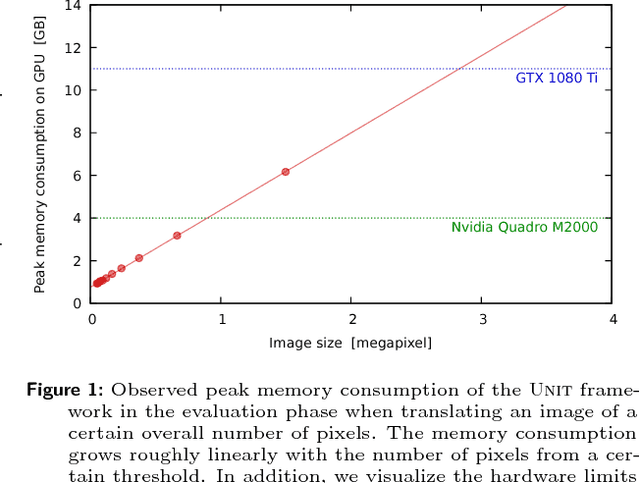

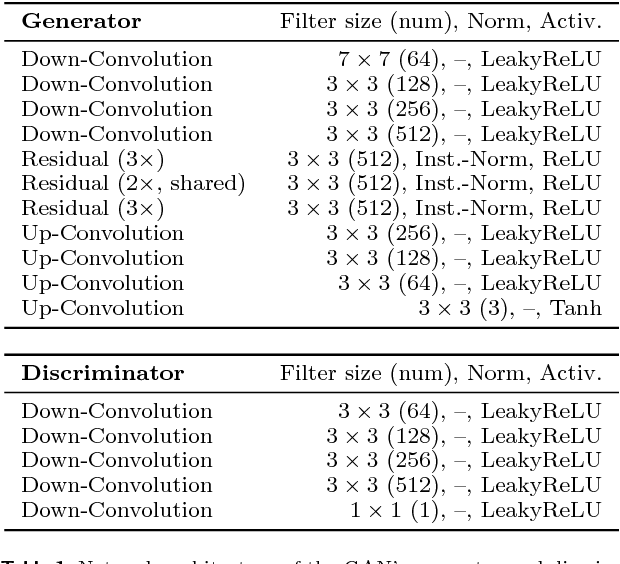

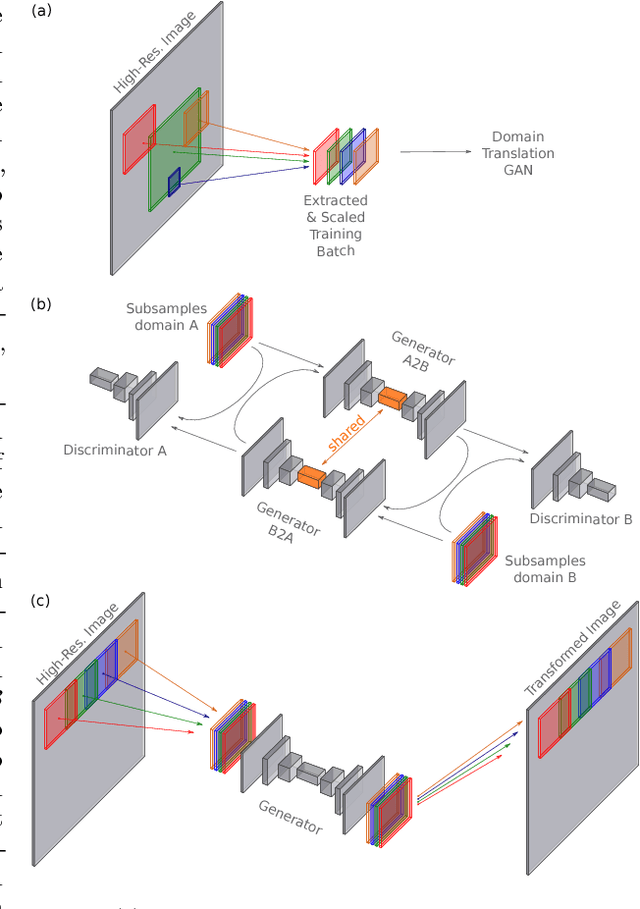

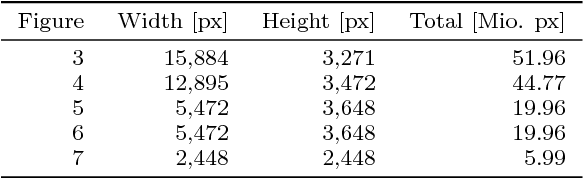

Abstract: Neural networks have proven their capabilities by outperforming many other approaches on regression and classification tasks on various kinds of data. Other astonishing results have been achieved using neural nets as data generators, especially in the setting of generative adversarial networks (GANs). One special application is the field of image domain translation. Here, the goal is to take an image with a certain style (e.g. a photograph) and transform it into another one (e.g. a painting). If such a task is performed for unpaired training examples, the corresponding GAN setting is complex and the neural networks are large, which leads to a high peak memory consumption during both the training and the evaluation phase. This limits the largest processable image size. We address this issue by not processing the whole image at once, but instead training and evaluating the domain translation on the level of overlapping image subsamples. This new approach not only enables us to translate high-resolution images that otherwise cannot be processed by the neural network at once, but also allows us to work with comparably small neural networks and with limited hardware resources. Additionally, the number of images required for the training process is significantly reduced. We present high-quality results on images with a total resolution of over 50 megapixels and demonstrate that our method helps to preserve local image details while also keeping global consistency.
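Processing an image as overlapping subsamples can be sketched as follows. This is a minimal illustration, assuming a per-tile function `translate_fn` (a hypothetical stand-in for a trained generator) and simple averaging in the overlap regions; the abstract does not state how the authors merge the subsamples, so the blending step is an assumption.

```python
import numpy as np

def tile_starts(length, tile, stride):
    """Start offsets so that tiles of size `tile` cover [0, length) with overlap."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the image border
    return starts

def translate_in_tiles(image, translate_fn, tile=64, overlap=16):
    """Apply translate_fn to overlapping tiles and average where tiles overlap.

    translate_fn must return an array with the same shape as its input patch.
    """
    h, w = image.shape[:2]
    stride = tile - overlap
    out = np.zeros(image.shape, dtype=np.float64)
    # Per-pixel count of how many tiles contributed; broadcasts over channels.
    weight = np.zeros((h, w) + (1,) * (image.ndim - 2), dtype=np.float64)
    for y in tile_starts(h, tile, stride):
        for x in tile_starts(w, tile, stride):
            patch = image[y:y + tile, x:x + tile]
            out[y:y + tile, x:x + tile] += translate_fn(patch)
            weight[y:y + tile, x:x + tile] += 1.0
    return out / weight

# Toy usage: a pointwise "translation" (color inversion) on a random image.
rng = np.random.default_rng(0)
image = rng.uniform(size=(200, 150, 3))
result = translate_in_tiles(image, lambda patch: 1.0 - patch)
```

Only one tile needs to be held by the model at a time, so peak memory depends on the tile size rather than on the full image resolution.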

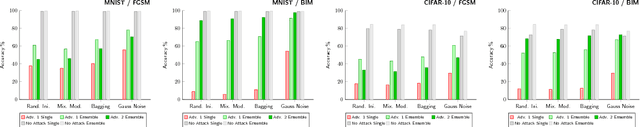

Ensemble Methods as a Defense to Adversarial Perturbations Against Deep Neural Networks

Feb 08, 2018

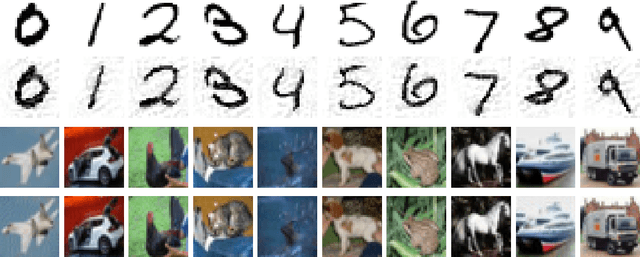

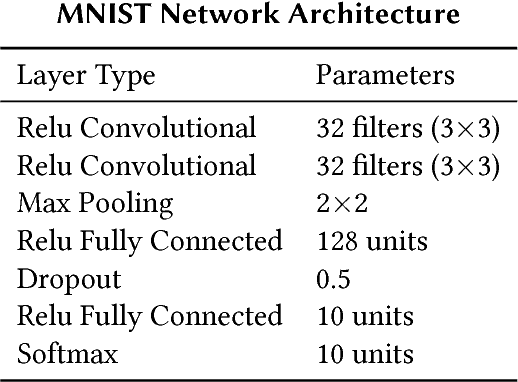

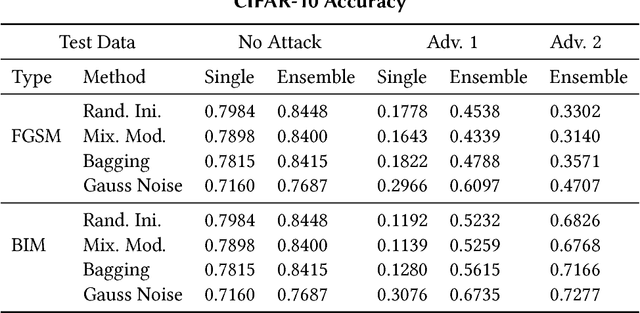

Abstract: Deep learning has become the state-of-the-art approach in many machine learning problems such as classification. It has recently been shown that deep learning is highly vulnerable to adversarial perturbations. Taking the camera systems of self-driving cars as an example, small adversarial perturbations can cause the system to make errors in important tasks, such as classifying traffic signs or detecting pedestrians. Hence, in order to use deep learning without safety concerns, a proper defense strategy is required. We propose to use ensemble methods as a defense strategy against adversarial perturbations. We find that an attack leading one model to misclassify does not imply the same for other networks performing the same task. This makes ensemble methods an attractive defense strategy against adversarial attacks. We empirically show for the MNIST and CIFAR-10 data sets that ensemble methods not only improve the accuracy of neural networks on test data but also increase their robustness against adversarial perturbations.
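The intuition that an attack fooling one model need not fool the others can be illustrated with a simple probability-averaging ensemble. This is a minimal sketch: the averaging rule is one common way to combine classifiers and is assumed here rather than taken from the paper, and the probability values are made-up toy numbers, not experimental results.

```python
import numpy as np

def ensemble_predict(prob_list):
    """Average the class probabilities of several models and take the argmax."""
    mean_probs = np.mean(np.stack(prob_list, axis=0), axis=0)
    return mean_probs.argmax(axis=-1), mean_probs

# Hypothetical softmax outputs of three classifiers for two inputs and three classes.
p_a = np.array([[0.1, 0.8, 0.1], [0.7, 0.2, 0.1]])
p_b = np.array([[0.2, 0.7, 0.1], [0.6, 0.3, 0.1]])
# Model c is assumed to be fooled on the first input (it prefers class 0 over class 1).
p_c = np.array([[0.6, 0.3, 0.1], [0.8, 0.1, 0.1]])

labels, mean_probs = ensemble_predict([p_a, p_b, p_c])
```

In this toy case the two unaffected models outvote the fooled one on the first input, so the ensemble still predicts class 1.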
