Marianela Morales

GenPlanX. Generation of Plans and Execution

Jun 12, 2025

Abstract:Classical AI planning techniques generate sequences of actions for complex tasks. However, they cannot interpret planning tasks that are specified in natural language. The advent of Large Language Models (LLMs) has introduced novel capabilities in human-computer interaction. In the context of planning tasks, LLMs have proven particularly good at interpreting human intents, among other uses. This paper introduces GenPlanX, which integrates LLMs for natural language-based description of planning tasks with a classical AI planning engine, alongside an execution and monitoring framework. We demonstrate the efficacy of GenPlanX in assisting users with office-related tasks, highlighting its potential to streamline workflows and enhance productivity through seamless human-AI collaboration.

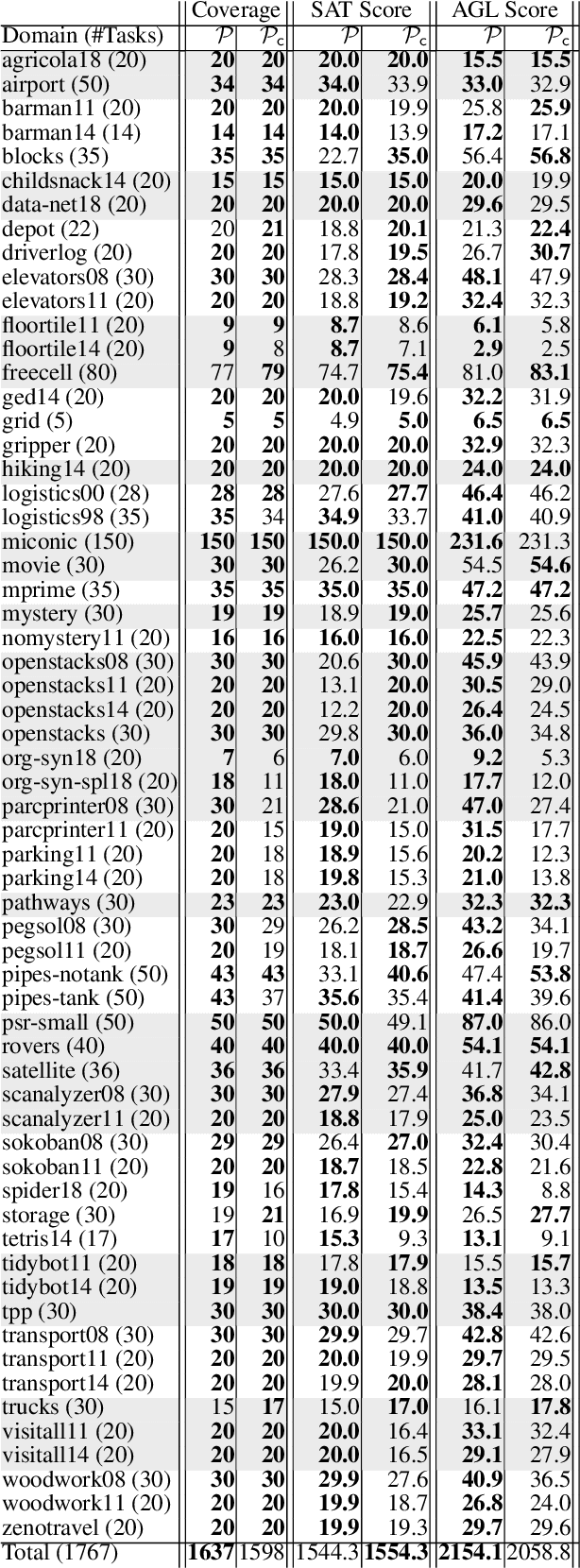

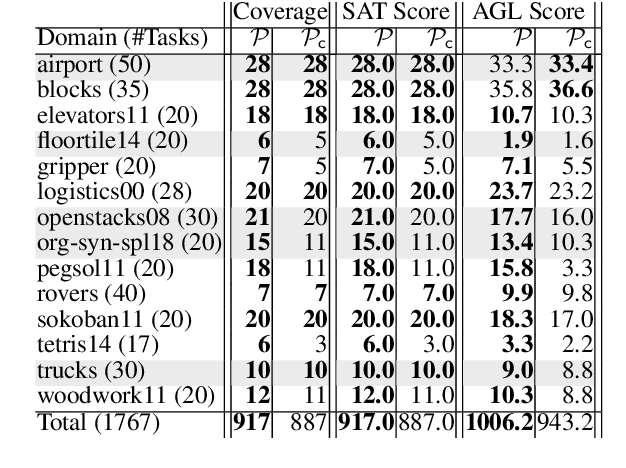

The Value of Goal Commitment in Planning

Mar 12, 2025

Abstract:In this paper, we revisit the concept of goal commitment from early planners in the presence of current forward chaining heuristic planners. We present a compilation that extends the original planning task with commit actions that enforce the persistence of specific goals once achieved, thereby committing to them in the search sub-tree. This approach imposes a specific goal achievement order in parts of the search tree, potentially introducing dead-end states. This can reduce search effort if the goal achievement order is correct. Otherwise, the search algorithm can expand nodes in the open list where goals do not persist. Experimental results demonstrate that the reformulated tasks suit state-of-the-art agile planners, enabling them to find better

On Learning Action Costs from Input Plans

Aug 20, 2024

Abstract:Most of the work on learning action models focuses on learning the actions' dynamics from input plans. This allows us to specify the valid plans of a planning task. However, very little work focuses on learning action costs, which in turn allows us to rank the different plans. In this paper, we introduce a new problem: that of learning the costs of a set of actions such that a set of input plans are optimal under the resulting planning model. To solve this problem, we present $LACFIP^k$, an algorithm to learn action costs from unlabeled input plans. We provide theoretical and empirical results showing how $LACFIP^k$ can successfully solve this task.
