Maike Schwammberger

Karlsruhe Institute of Technology, Germany

ROBOPOL: Social Robotics Meets Vehicular Communications for Cooperative Automated Driving

Dec 30, 2025

Abstract: On the way towards full autonomy, sharing roads between automated vehicles and human actors in so-called mixed traffic is unavoidable. Moreover, even if all vehicles on the road were autonomous, pedestrians would still be crossing the streets. We propose social robots as moderators between autonomous vehicles and vulnerable road users (VRUs). To this end, we identify four enablers requiring integration: (1) advanced perception, allowing the robot to see the environment; (2) vehicular communications, allowing connected vehicles to share intentions and the robot to send guiding commands; (3) social human-robot interaction, allowing the robot to effectively communicate with VRUs and drivers; (4) formal specification, allowing the robot to understand traffic and plan accordingly. This paper presents an overview of the key enablers and reports on a first proof-of-concept integration of the first three enablers, envisioning a social robot advising pedestrians in scenarios with a cooperative automated e-bike.

Proceedings Seventh International Workshop on Formal Methods for Autonomous Systems

Nov 17, 2025

Abstract: This EPTCS volume contains the papers from the Seventh International Workshop on Formal Methods for Autonomous Systems (FMAS 2025), which was held between the 17th and 19th of November 2025. The goal of the FMAS workshop series is to bring together leading researchers who are using formal methods to tackle the unique challenges that autonomous systems present, so that they can publish and discuss their work with a growing community of researchers. FMAS 2025 was co-located with the 20th International Conference on integrated Formal Methods (iFM'25), hosted by Inria Paris, France at the Inria Paris Center. In total, FMAS 2025 received 16 submissions from researchers at institutions in: Canada, China, France, Germany, Ireland, Italy, Japan, the Netherlands, Portugal, Sweden, the United States of America, and the United Kingdom. Though we received fewer submissions than last year, we are encouraged to see submissions coming from a wide range of countries. Submissions came from both past and new FMAS authors, which shows us that the existing community appreciates the network that FMAS has built over the past 7 years, while the new authors show the FMAS community's great potential for growth.

Proceedings Fifth International Workshop on Formal Methods for Autonomous Systems

Nov 15, 2023

Abstract: This EPTCS volume contains the proceedings for the Fifth International Workshop on Formal Methods for Autonomous Systems (FMAS 2023), which was held on the 15th and 16th of November 2023. FMAS 2023 was co-located with the 18th International Conference on integrated Formal Methods (iFM'23), organised by the Leiden Institute of Advanced Computer Science of Leiden University. The workshop itself was held at Scheltema Leiden, a renovated 19th-century blanket factory alongside the canal. FMAS 2023 received 25 submissions: 11 regular papers, 3 experience reports, 6 research previews, and 5 vision papers. The researchers who submitted papers to FMAS 2023 were from institutions in: Australia, Canada, Colombia, France, Germany, Ireland, Italy, the Netherlands, Sweden, the United Kingdom, and the United States of America. Increasing our number of submissions for the third year in a row is an encouraging sign that FMAS has established itself as a reputable publication venue for research on the formal modelling and verification of autonomous systems. After each paper was reviewed by three members of our Programme Committee, we accepted a total of 15 papers: 8 long papers and 7 short papers.

Towards Self-Explainable Cyber-Physical Systems

Aug 13, 2019

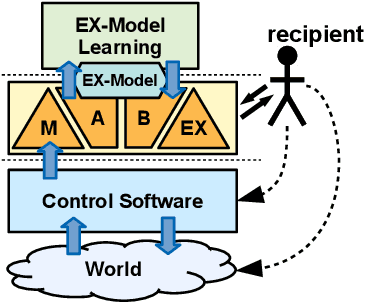

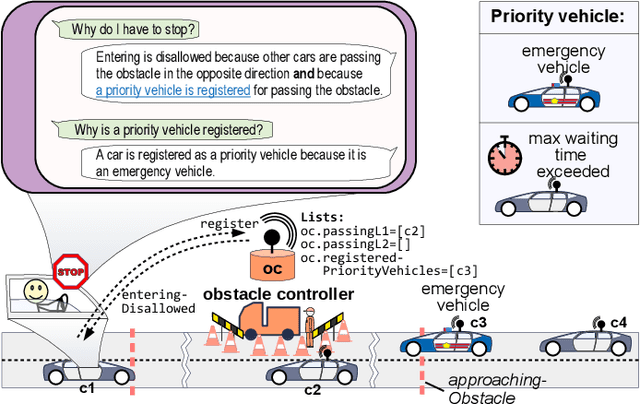

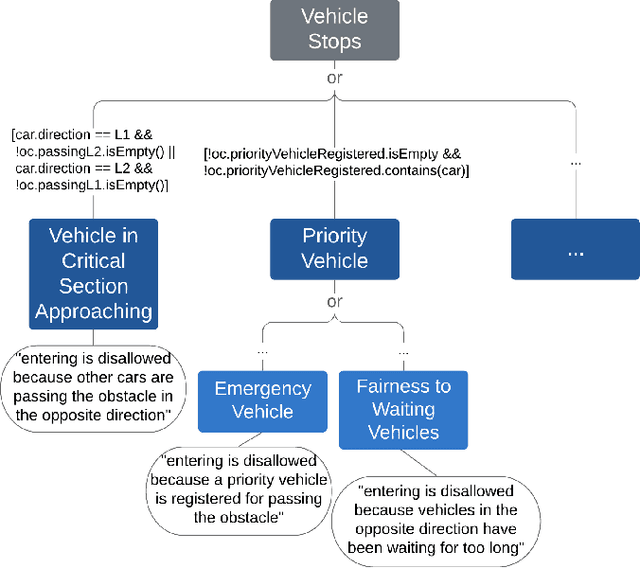

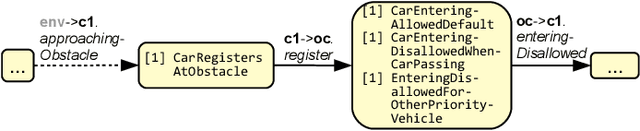

Abstract: With the increasing complexity of cyber-physical systems (CPSs), their behavior and decisions become increasingly difficult for users and other stakeholders to understand. Our vision is to build self-explainable systems that can, at run-time, answer questions about the system's past, current, and future behavior. As hitherto no design methodology or reference framework exists for building such systems, we propose the MAB-EX framework for building self-explainable systems that leverage requirements and explainability models at run-time. The basic idea of MAB-EX is to first Monitor and Analyze a certain behavior of a system, then Build an explanation from explanation models and convey this EXplanation in a suitable way to a stakeholder. We also take into account that new explanations can be learned, by updating the explanation models, should new and as yet unexplainable behavior be detected by the system.
