Mahmoud Shoush

Prescriptive Process Monitoring Under Resource Constraints: A Reinforcement Learning Approach

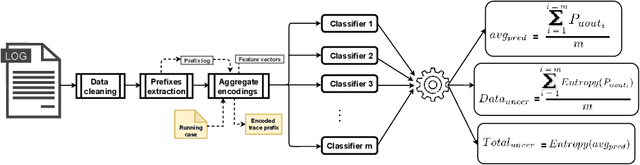

Jul 13, 2023Abstract:Prescriptive process monitoring methods seek to optimize the performance of business processes by triggering interventions at runtime, thereby increasing the probability of positive case outcomes. These interventions are triggered according to an intervention policy. Reinforcement learning has been put forward as an approach to learning intervention policies through trial and error. Existing approaches in this space assume that the number of resources available to perform interventions in a process is unlimited, an unrealistic assumption in practice. This paper argues that, in the presence of resource constraints, a key dilemma in the field of prescriptive process monitoring is to trigger interventions based not only on predictions of their necessity, timeliness, or effect but also on the uncertainty of these predictions and the level of resource utilization. Indeed, committing scarce resources to an intervention when the necessity or effects of this intervention are highly uncertain may intuitively lead to suboptimal intervention effects. Accordingly, the paper proposes a reinforcement learning approach for prescriptive process monitoring that leverages conformal prediction techniques to consider the uncertainty of the predictions upon which an intervention decision is based. An evaluation using real-life datasets demonstrates that explicitly modeling uncertainty using conformal predictions helps reinforcement learning agents converge towards policies with higher net intervention gain

Learning When to Treat Business Processes: Prescriptive Process Monitoring with Causal Inference and Reinforcement Learning

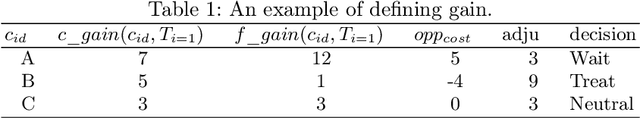

Mar 07, 2023Abstract:Increasing the success rate of a process, i.e. the percentage of cases that end in a positive outcome, is a recurrent process improvement goal. At runtime, there are often certain actions (a.k.a. treatments) that workers may execute to lift the probability that a case ends in a positive outcome. For example, in a loan origination process, a possible treatment is to issue multiple loan offers to increase the probability that the customer takes a loan. Each treatment has a cost. Thus, when defining policies for prescribing treatments to cases, managers need to consider the net gain of the treatments. Also, the effect of a treatment varies over time: treating a case earlier may be more effective than later in a case. This paper presents a prescriptive monitoring method that automates this decision-making task. The method combines causal inference and reinforcement learning to learn treatment policies that maximize the net gain. The method leverages a conformal prediction technique to speed up the convergence of the reinforcement learning mechanism by separating cases that are likely to end up in a positive or negative outcome, from uncertain cases. An evaluation on two real-life datasets shows that the proposed method outperforms a state-of-the-art baseline.

Intervening With Confidence: Conformal Prescriptive Monitoring of Business Processes

Dec 07, 2022Abstract:Prescriptive process monitoring methods seek to improve the performance of a process by selectively triggering interventions at runtime (e.g., offering a discount to a customer) to increase the probability of a desired case outcome (e.g., a customer making a purchase). The backbone of a prescriptive process monitoring method is an intervention policy, which determines for which cases and when an intervention should be executed. Existing methods in this field rely on predictive models to define intervention policies; specifically, they consider policies that trigger an intervention when the estimated probability of a negative outcome exceeds a threshold. However, the probabilities computed by a predictive model may come with a high level of uncertainty (low confidence), leading to unnecessary interventions and, thus, wasted effort. This waste is particularly problematic when the resources available to execute interventions are limited. To tackle this shortcoming, this paper proposes an approach to extend existing prescriptive process monitoring methods with so-called conformal predictions, i.e., predictions with confidence guarantees. An empirical evaluation using real-life public datasets shows that conformal predictions enhance the net gain of prescriptive process monitoring methods under limited resources.

When to intervene? Prescriptive Process Monitoring Under Uncertainty and Resource Constraints

Jun 15, 2022

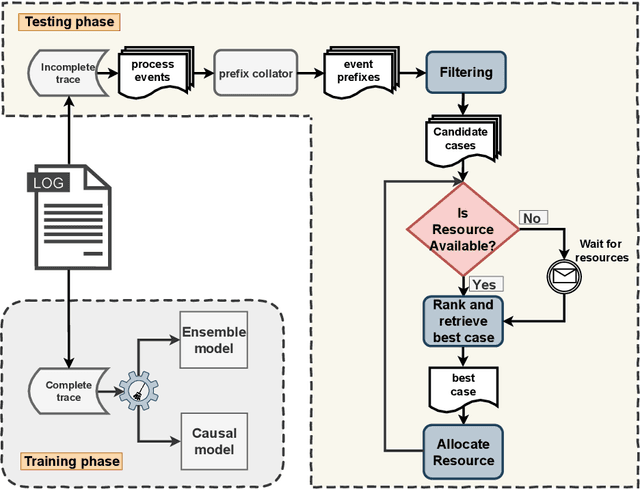

Abstract:Prescriptive process monitoring approaches leverage historical data to prescribe runtime interventions that will likely prevent negative case outcomes or improve a process's performance. A centerpiece of a prescriptive process monitoring method is its intervention policy: a decision function determining if and when to trigger an intervention on an ongoing case. Previous proposals in this field rely on intervention policies that consider only the current state of a given case. These approaches do not consider the tradeoff between triggering an intervention in the current state, given the level of uncertainty of the underlying predictive models, versus delaying the intervention to a later state. Moreover, they assume that a resource is always available to perform an intervention (infinite capacity). This paper addresses these gaps by introducing a prescriptive process monitoring method that filters and ranks ongoing cases based on prediction scores, prediction uncertainty, and causal effect of the intervention, and triggers interventions to maximize a gain function, considering the available resources. The proposal is evaluated using a real-life event log. The results show that the proposed method outperforms existing baselines regarding total gain.

Prescriptive Process Monitoring Under Resource Constraints: A Causal Inference Approach

Sep 07, 2021

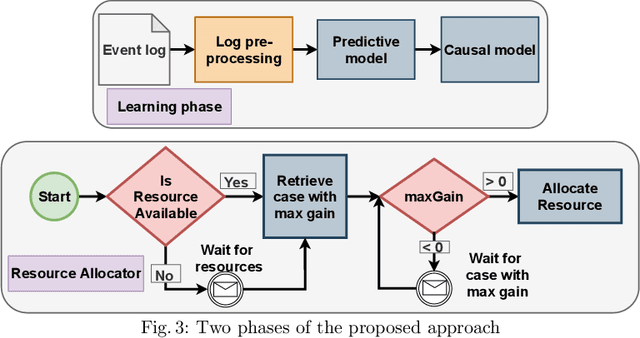

Abstract:Prescriptive process monitoring is a family of techniques to optimize the performance of a business process by triggering interventions at runtime. Existing prescriptive process monitoring techniques assume that the number of interventions that may be triggered is unbounded. In practice, though, specific interventions consume resources with finite capacity. For example, in a loan origination process, an intervention may consist of preparing an alternative loan offer to increase the applicant's chances of taking a loan. This intervention requires a certain amount of time from a credit officer, and thus, it is not possible to trigger this intervention in all cases. This paper proposes a prescriptive process monitoring technique that triggers interventions to optimize a cost function under fixed resource constraints. The proposed technique relies on predictive modeling to identify cases that are likely to lead to a negative outcome, in combination with causal inference to estimate the effect of an intervention on the outcome of the case. These outputs are then used to allocate resources to interventions to maximize a cost function. A preliminary empirical evaluation suggests that the proposed approach produces a higher net gain than a purely predictive (non-causal) baseline.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge