Liu Xie

FreqINR: Frequency Consistency for Implicit Neural Representation with Adaptive DCT Frequency Loss

Aug 25, 2024

Abstract:Recent advancements in local Implicit Neural Representation (INR) demonstrate its exceptional capability in handling images at various resolutions. However, frequency discrepancies between high-resolution (HR) and ground-truth images, especially at larger scales, result in significant artifacts and blurring in HR images. This paper introduces Frequency Consistency for Implicit Neural Representation (FreqINR), an innovative Arbitrary-scale Super-resolution method aimed at enhancing detailed textures by ensuring spectral consistency throughout both training and inference. During training, we employ Adaptive Discrete Cosine Transform Frequency Loss (ADFL) to minimize the frequency gap between HR and ground-truth images, utilizing 2-Dimensional DCT bases and focusing dynamically on challenging frequencies. During inference, we extend the receptive field to preserve spectral coherence between low-resolution (LR) and ground-truth images, which is crucial for the model to generate high-frequency details from LR counterparts. Experimental results show that FreqINR, as a lightweight approach, achieves state-of-the-art performance compared to existing Arbitrary-scale Super-resolution methods and offers notable improvements in computational efficiency. The code for our method will be made publicly available.

A New Benchmark and Approach for Fine-grained Cross-media Retrieval

Jul 10, 2019

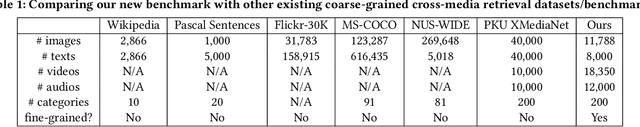

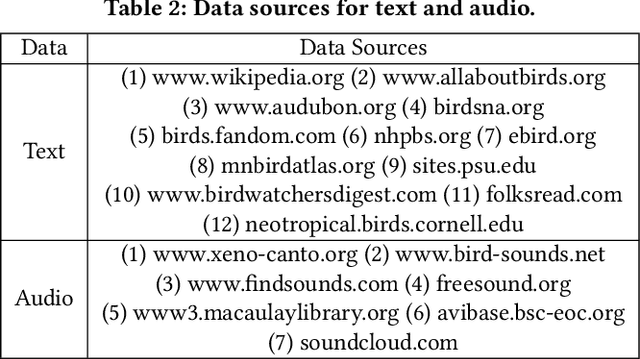

Abstract:Cross-media retrieval is to return the results of various media types corresponding to the query of any media type. Existing researches generally focus on coarse-grained cross-media retrieval. When users submit an image of "Slaty-backed Gull" as a query, coarse-grained cross-media retrieval treats it as "Bird", so that users can only get the results of "Bird", which may include other bird species with similar appearance (image and video), descriptions (text) or sounds (audio), such as "Herring Gull". Such coarse-grained cross-media retrieval is not consistent with human lifestyle, where we generally have the fine-grained requirement of returning the exactly relevant results of "Slaty-backed Gull" instead of "Herring Gull". However, few researches focus on fine-grained cross-media retrieval, which is a highly challenging and practical task. Therefore, in this paper, we first construct a new benchmark for fine-grained cross-media retrieval, which consists of 200 fine-grained subcategories of the "Bird", and contains 4 media types, including image, text, video and audio. To the best of our knowledge, it is the first benchmark with 4 media types for fine-grained cross-media retrieval. Then, we propose a uniform deep model, namely FGCrossNet, which simultaneously learns 4 types of media without discriminative treatments. We jointly consider three constraints for better common representation learning: classification constraint ensures the learning of discriminative features, center constraint ensures the compactness characteristic of the features of the same subcategory, and ranking constraint ensures the sparsity characteristic of the features of different subcategories. Extensive experiments verify the usefulness of the new benchmark and the effectiveness of our FGCrossNet. They will be made available at https://github.com/PKU-ICST-MIPL/FGCrossNet_ACMMM2019.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge