Lihong Qiu

Diversified Arbitrary Style Transfer via Deep Feature Perturbation

Sep 18, 2019

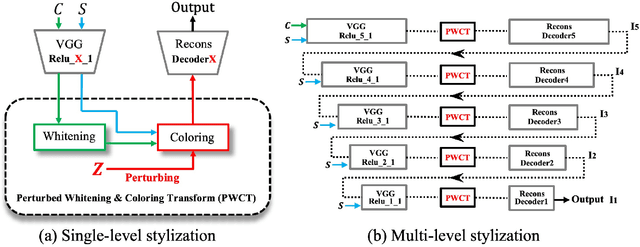

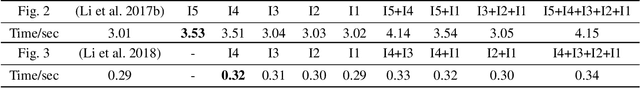

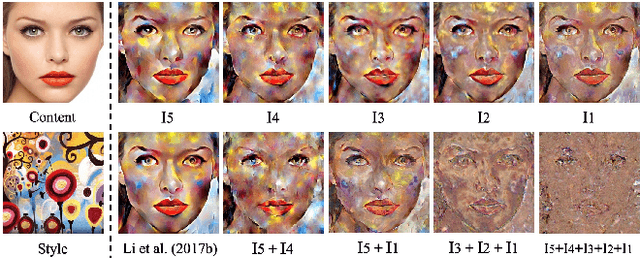

Abstract: Image style transfer is an underdetermined problem, where a large number of solutions can satisfy the same constraint (i.e., the content and style). Most current methods always produce visually identical outputs and thus lack diversity. Recently, some methods have introduced an alternative diversity loss to train feed-forward networks for diverse outputs, but they still suffer from many issues. In this paper, we propose a simple yet effective method for diversified style transfer. Our method can produce diverse outputs for arbitrary styles by combining the whitening and coloring transforms (WCT) with a novel deep feature perturbation (DFP) operation, which uses an orthogonal random noise matrix to perturb the deep image features while keeping the original style information unchanged. In addition, our method is learning-free and can be easily integrated into many existing WCT-based methods, enabling them to generate diverse results. Experimental results demonstrate that our method can greatly increase diversity while maintaining the quality of stylization. Several new user studies also show that users can obtain more satisfactory results through the diversified approaches based on our method.
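To make the idea of perturbing features with an orthogonal matrix more concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not say where the noise matrix enters the transform, so the sketch assumes it is inserted inside the coloring step, between the style eigenvectors and the scaled eigenvalues. The function names (`random_orthogonal`, `wct_dfp`), the `eps` regularizer, and the use of a QR decomposition to draw the orthogonal matrix are all hypothetical choices made for this example. Features are assumed to be flattened to shape `(channels, pixels)`.

```python
import numpy as np


def _eig_stats(feat, eps=1e-5):
    """Return mean, centered features, and eigendecomposition of the covariance."""
    mean = feat.mean(axis=1, keepdims=True)
    centered = feat - mean
    # eps * I is an assumed regularizer that keeps the eigenvalues positive.
    cov = centered @ centered.T / (feat.shape[1] - 1) + eps * np.eye(feat.shape[0])
    eigvals, eigvecs = np.linalg.eigh(cov)
    return mean, centered, eigvals, eigvecs


def random_orthogonal(n, rng):
    """Draw a random n x n orthogonal matrix via QR of a Gaussian matrix."""
    q, _ = np.linalg.qr(rng.standard_normal((n, n)))
    return q


def wct_dfp(content_feat, style_feat, rng=None, perturb=True):
    """Whitening and coloring transform with an optional orthogonal perturbation.

    content_feat, style_feat: arrays of shape (channels, pixels).
    """
    rng = np.random.default_rng() if rng is None else rng
    channels = content_feat.shape[0]

    # Whitening: remove the content feature correlations.
    _, c_centered, c_vals, c_vecs = _eig_stats(content_feat)
    whitened = c_vecs @ np.diag(c_vals ** -0.5) @ c_vecs.T @ c_centered

    # Coloring: impose the style feature correlations.
    s_mean, _, s_vals, s_vecs = _eig_stats(style_feat)
    z = random_orthogonal(channels, rng) if perturb else np.eye(channels)
    # Assumed placement of the noise: because z @ z.T = I, the covariance of
    # the result is the same as in plain WCT, i.e. the style covariance.
    coloring = s_vecs @ np.diag(s_vals ** 0.5) @ z @ s_vecs.T
    return coloring @ whitened + s_mean
```

Calling `wct_dfp` twice with different random generators yields different feature maps whose channel covariance still matches the style features (up to the small `eps` term), which is the sense in which the perturbation leaves the style statistics unchanged. With `perturb=False` the function reduces to plain WCT.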
