Liangyu Fu

eMotions: A Large-Scale Dataset and Audio-Visual Fusion Network for Emotion Analysis in Short-form Videos

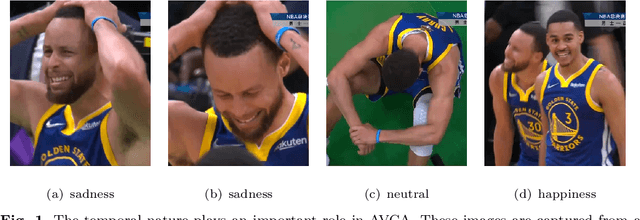

Aug 09, 2025Abstract:Short-form videos (SVs) have become a vital part of our online routine for acquiring and sharing information. Their multimodal complexity poses new challenges for video analysis, highlighting the need for video emotion analysis (VEA) within the community. Given the limited availability of SVs emotion data, we introduce eMotions, a large-scale dataset consisting of 27,996 videos with full-scale annotations. To ensure quality and reduce subjective bias, we emphasize better personnel allocation and propose a multi-stage annotation procedure. Additionally, we provide the category-balanced and test-oriented variants through targeted sampling to meet diverse needs. While there have been significant studies on videos with clear emotional cues (e.g., facial expressions), analyzing emotions in SVs remains a challenging task. The challenge arises from the broader content diversity, which introduces more distinct semantic gaps and complicates the representations learning of emotion-related features. Furthermore, the prevalence of audio-visual co-expressions in SVs leads to the local biases and collective information gaps caused by the inconsistencies in emotional expressions. To tackle this, we propose AV-CANet, an end-to-end audio-visual fusion network that leverages video transformer to capture semantically relevant representations. We further introduce the Local-Global Fusion Module designed to progressively capture the correlations of audio-visual features. Besides, EP-CE Loss is constructed to globally steer optimizations with tripolar penalties. Extensive experiments across three eMotions-related datasets and four public VEA datasets demonstrate the effectiveness of our proposed AV-CANet, while providing broad insights for future research. Moreover, we conduct ablation studies to examine the critical components of our method. Dataset and code will be made available at Github.

3A-YOLO: New Real-Time Object Detectors with Triple Discriminative Awareness and Coordinated Representations

Dec 10, 2024

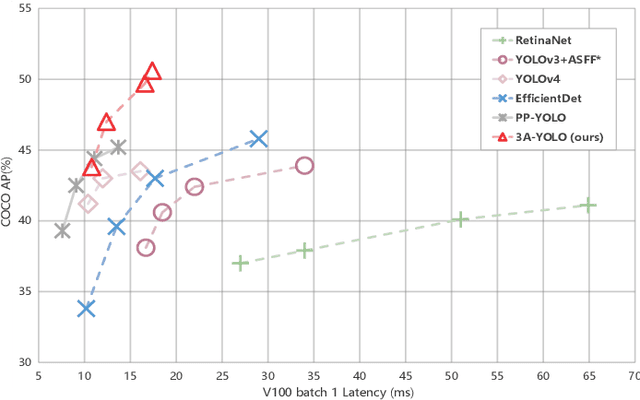

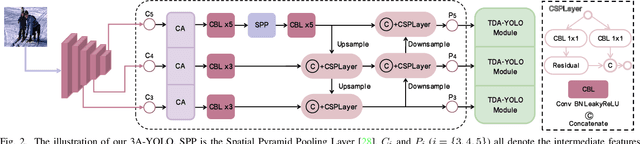

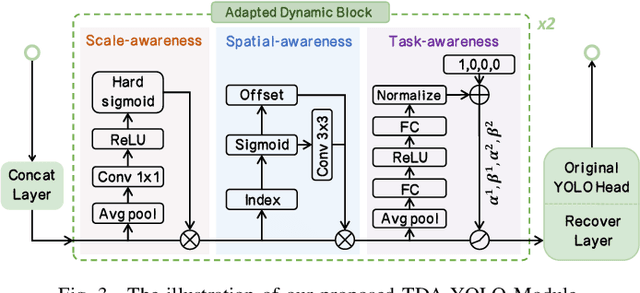

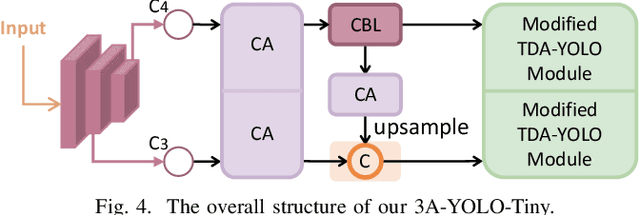

Abstract:Recent research on real-time object detectors (e.g., YOLO series) has demonstrated the effectiveness of attention mechanisms for elevating model performance. Nevertheless, existing methods neglect to unifiedly deploy hierarchical attention mechanisms to construct a more discriminative YOLO head which is enriched with more useful intermediate features. To tackle this gap, this work aims to leverage multiple attention mechanisms to hierarchically enhance the triple discriminative awareness of the YOLO detection head and complementarily learn the coordinated intermediate representations, resulting in a new series detectors denoted 3A-YOLO. Specifically, we first propose a new head denoted TDA-YOLO Module, which unifiedly enhance the representations learning of scale-awareness, spatial-awareness, and task-awareness. Secondly, we steer the intermediate features to coordinately learn the inter-channel relationships and precise positional information. Finally, we perform neck network improvements followed by introducing various tricks to boost the adaptability of 3A-YOLO. Extensive experiments across COCO and VOC benchmarks indicate the effectiveness of our detectors.

Emotion Recognition by Video: A review

Oct 26, 2023

Abstract:Video emotion recognition is an important branch of affective computing, and its solutions can be applied in different fields such as human-computer interaction (HCI) and intelligent medical treatment. Although the number of papers published in the field of emotion recognition is increasing, there are few comprehensive literature reviews covering related research on video emotion recognition. Therefore, this paper selects articles published from 2015 to 2023 to systematize the existing trends in video emotion recognition in related studies. In this paper, we first talk about two typical emotion models, then we talk about databases that are frequently utilized for video emotion recognition, including unimodal databases and multimodal databases. Next, we look at and classify the specific structure and performance of modern unimodal and multimodal video emotion recognition methods, talk about the benefits and drawbacks of each, and then we compare them in detail in the tables. Further, we sum up the primary difficulties right now looked by video emotion recognition undertakings and point out probably the most encouraging future headings, such as establishing an open benchmark database and better multimodal fusion strategys. The essential objective of this paper is to assist scholarly and modern scientists with keeping up to date with the most recent advances and new improvements in this speedy, high-influence field of video emotion recognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge