Liad Erez

Sample Complexity of Agnostic Multiclass Classification: Natarajan Dimension Strikes Back

Nov 16, 2025Abstract:The fundamental theorem of statistical learning states that binary PAC learning is governed by a single parameter -- the Vapnik-Chervonenkis (VC) dimension -- which determines both learnability and sample complexity. Extending this to multiclass classification has long been challenging, since Natarajan's work in the late 80s proposing the Natarajan dimension (Nat) as a natural analogue of VC. Daniely and Shalev-Shwartz (2014) introduced the DS dimension, later shown by Brukhim et al. (2022) to characterize multiclass learnability. Brukhim et al. also showed that Nat and DS can diverge arbitrarily, suggesting that multiclass learning is governed by DS rather than Nat. We show that agnostic multiclass PAC sample complexity is in fact governed by two distinct dimensions. Specifically, we prove nearly tight agnostic sample complexity bounds that, up to log factors, take the form $\frac{DS^{1.5}}ε + \frac{Nat}{ε^2}$ where $ε$ is the excess risk. This bound is tight up to a $\sqrt{DS}$ factor in the first term, nearly matching known $Nat/ε^2$ and $DS/ε$ lower bounds. The first term reflects the DS-controlled regime, while the second shows that the Natarajan dimension still dictates asymptotic behavior for small $ε$. Thus, unlike binary or online classification -- where a single dimension (VC or Littlestone) controls both phenomena -- multiclass learning inherently involves two structural parameters. Our technical approach departs from traditional agnostic learning methods based on uniform convergence or reductions to realizable cases. A key ingredient is a novel online procedure based on a self-adaptive multiplicative-weights algorithm performing a label-space reduction, which may be of independent interest.

Bandit Multiclass List Classification

Feb 13, 2025Abstract:We study the problem of multiclass list classification with (semi-)bandit feedback, where input examples are mapped into subsets of size $m$ of a collection of $K$ possible labels, and the feedback consists of the predicted labels which lie in the set of true labels of the given example. Our main result is for the $(\varepsilon,\delta)$-PAC variant of the problem for which we design an algorithm that returns an $\varepsilon$-optimal hypothesis with high probability using a sample complexity of $O \big( (\mathrm{poly}(K/m) + sm / \varepsilon^2) \log (|H|/\delta) \big)$ where $H$ is the underlying (finite) hypothesis class and $s$ is an upper bound on the number of true labels for a given example. This bound improves upon known bounds for combinatorial semi-bandits whenever $s \ll K$. Moreover, in the regime where $s = O(1)$ the leading terms in our bound match the corresponding full-information rates, implying that bandit feedback essentially comes at no cost. Our PAC learning algorithm is also computationally efficient given access to an ERM oracle for $H$. Additionally, we consider the regret minimization setting where data can be generated adversarially, and establish a regret bound of $\widetilde O(|H| + \sqrt{smT \log |H|})$. Our results generalize and extend those of Erez et al. (2024) who consider the simpler single-label setting corresponding to $s=m=1$, and in fact hold for the more general contextual combinatorial semi-bandit problem with $s$-sparse rewards.

Fast Rates for Bandit PAC Multiclass Classification

Jun 18, 2024Abstract:We study multiclass PAC learning with bandit feedback, where inputs are classified into one of $K$ possible labels and feedback is limited to whether or not the predicted labels are correct. Our main contribution is in designing a novel learning algorithm for the agnostic $(\varepsilon,\delta)$-PAC version of the problem, with sample complexity of $O\big( (\operatorname{poly}(K) + 1 / \varepsilon^2) \log (|H| / \delta) \big)$ for any finite hypothesis class $H$. In terms of the leading dependence on $\varepsilon$, this improves upon existing bounds for the problem, that are of the form $O(K/\varepsilon^2)$. We also provide an extension of this result to general classes and establish similar sample complexity bounds in which $\log |H|$ is replaced by the Natarajan dimension. This matches the optimal rate in the full-information version of the problem and resolves an open question studied by Daniely, Sabato, Ben-David, and Shalev-Shwartz (2011) who demonstrated that the multiplicative price of bandit feedback in realizable PAC learning is $\Theta(K)$. We complement this by revealing a stark contrast with the agnostic case, where the price of bandit feedback is only $O(1)$ as $\varepsilon \to 0$. Our algorithm utilizes a stochastic optimization technique to minimize a log-barrier potential based on Frank-Wolfe updates for computing a low-variance exploration distribution over the hypotheses, and is made computationally efficient provided access to an ERM oracle over $H$.

The Real Price of Bandit Information in Multiclass Classification

May 16, 2024Abstract:We revisit the classical problem of multiclass classification with bandit feedback (Kakade, Shalev-Shwartz and Tewari, 2008), where each input classifies to one of $K$ possible labels and feedback is restricted to whether the predicted label is correct or not. Our primary inquiry is with regard to the dependency on the number of labels $K$, and whether $T$-step regret bounds in this setting can be improved beyond the $\smash{\sqrt{KT}}$ dependence exhibited by existing algorithms. Our main contribution is in showing that the minimax regret of bandit multiclass is in fact more nuanced, and is of the form $\smash{\widetilde{\Theta}\left(\min \left\{|\mathcal{H}| + \sqrt{T}, \sqrt{KT \log |{\mathcal{H}|}} \right\} \right) }$, where $\mathcal{H}$ is the underlying (finite) hypothesis class. In particular, we present a new bandit classification algorithm that guarantees regret $\smash{\widetilde{O}(|\mathcal{H}|+\sqrt{T})}$, improving over classical algorithms for moderately-sized hypothesis classes, and give a matching lower bound establishing tightness of the upper bounds (up to log-factors) in all parameter regimes.

Regret Minimization and Convergence to Equilibria in General-sum Markov Games

Aug 08, 2022

Abstract:An abundance of recent impossibility results establish that regret minimization in Markov games with adversarial opponents is both statistically and computationally intractable. Nevertheless, none of these results preclude the possibility of regret minimization under the assumption that all parties adopt the same learning procedure. In this work, we present the first (to our knowledge) algorithm for learning in general-sum Markov games that provides sublinear regret guarantees when executed by all agents. The bounds we obtain are for swap regret, and thus, along the way, imply convergence to a correlated equilibrium. Our algorithm is decentralized, computationally efficient, and does not require any communication between agents. Our key observation is that online learning via policy optimization in Markov games essentially reduces to a form of weighted regret minimization, with unknown weights determined by the path length of the agents' policy sequence. Consequently, controlling the path length leads to weighted regret objectives for which sufficiently adaptive algorithms provide sublinear regret guarantees.

Best-of-All-Worlds Bounds for Online Learning with Feedback Graphs

Jul 20, 2021

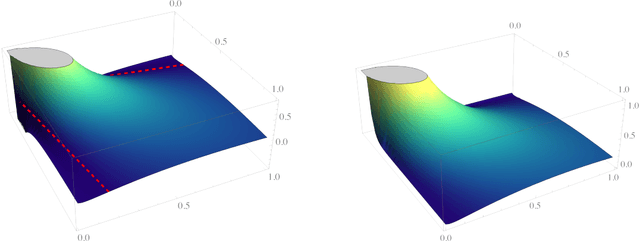

Abstract:We study the online learning with feedback graphs framework introduced by Mannor and Shamir (2011), in which the feedback received by the online learner is specified by a graph $G$ over the available actions. We develop an algorithm that simultaneously achieves regret bounds of the form: $\smash{\mathcal{O}(\sqrt{\theta(G) T})}$ with adversarial losses; $\mathcal{O}(\theta(G)\operatorname{polylog}{T})$ with stochastic losses; and $\mathcal{O}(\theta(G)\operatorname{polylog}{T} + \smash{\sqrt{\theta(G) C})}$ with stochastic losses subject to $C$ adversarial corruptions. Here, $\theta(G)$ is the clique covering number of the graph $G$. Our algorithm is an instantiation of Follow-the-Regularized-Leader with a novel regularization that can be seen as a product of a Tsallis entropy component (inspired by Zimmert and Seldin (2019)) and a Shannon entropy component (analyzed in the corrupted stochastic case by Amir et al. (2020)), thus subtly interpolating between the two forms of entropies. One of our key technical contributions is in establishing the convexity of this regularizer and controlling its inverse Hessian, despite its complex product structure.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge