Leyuan Yang

Farewell to Item IDs: Unlocking the Scaling Potential of Large Ranking Models via Semantic Tokens

Jan 30, 2026Abstract:Recent studies on scaling up ranking models have achieved substantial improvement for recommendation systems and search engines. However, most large-scale ranking systems rely on item IDs, where each item is treated as an independent categorical symbol and mapped to a learned embedding. As items rapidly appear and disappear, these embeddings become difficult to train and maintain. This instability impedes effective learning of neural network parameters and limits the scalability of ranking models. In this paper, we show that semantic tokens possess greater scaling potential compared to item IDs. Our proposed framework TRM improves the token generation and application pipeline, leading to 33% reduction in sparse storage while achieving 0.85% AUC increase. Extensive experiments further show that TRM could consistently outperform state-of-the-art models when model capacity scales. Finally, TRM has been successfully deployed on large-scale personalized search engines, yielding 0.26% and 0.75% improvement on user active days and change query ratio respectively through A/B test.

Scalable and High-Quality Neural Implicit Representation for 3D Reconstruction

Jan 15, 2025Abstract:Various SDF-based neural implicit surface reconstruction methods have been proposed recently, and have demonstrated remarkable modeling capabilities. However, due to the global nature and limited representation ability of a single network, existing methods still suffer from many drawbacks, such as limited accuracy and scale of the reconstruction. In this paper, we propose a versatile, scalable and high-quality neural implicit representation to address these issues. We integrate a divide-and-conquer approach into the neural SDF-based reconstruction. Specifically, we model the object or scene as a fusion of multiple independent local neural SDFs with overlapping regions. The construction of our representation involves three key steps: (1) constructing the distribution and overlap relationship of the local radiance fields based on object structure or data distribution, (2) relative pose registration for adjacent local SDFs, and (3) SDF blending. Thanks to the independent representation of each local region, our approach can not only achieve high-fidelity surface reconstruction, but also enable scalable scene reconstruction. Extensive experimental results demonstrate the effectiveness and practicality of our proposed method.

Deformable NeRF using Recursively Subdivided Tetrahedra

Oct 06, 2024

Abstract:While neural radiance fields (NeRF) have shown promise in novel view synthesis, their implicit representation limits explicit control over object manipulation. Existing research has proposed the integration of explicit geometric proxies to enable deformation. However, these methods face two primary challenges: firstly, the time-consuming and computationally demanding tetrahedralization process; and secondly, handling complex or thin structures often leads to either excessive, storage-intensive tetrahedral meshes or poor-quality ones that impair deformation capabilities. To address these challenges, we propose DeformRF, a method that seamlessly integrates the manipulability of tetrahedral meshes with the high-quality rendering capabilities of feature grid representations. To avoid ill-shaped tetrahedra and tetrahedralization for each object, we propose a two-stage training strategy. Starting with an almost-regular tetrahedral grid, our model initially retains key tetrahedra surrounding the object and subsequently refines object details using finer-granularity mesh in the second stage. We also present the concept of recursively subdivided tetrahedra to create higher-resolution meshes implicitly. This enables multi-resolution encoding while only necessitating the storage of the coarse tetrahedral mesh generated in the first training stage. We conduct a comprehensive evaluation of our DeformRF on both synthetic and real-captured datasets. Both quantitative and qualitative results demonstrate the effectiveness of our method for novel view synthesis and deformation tasks. Project page: https://ustc3dv.github.io/DeformRF/

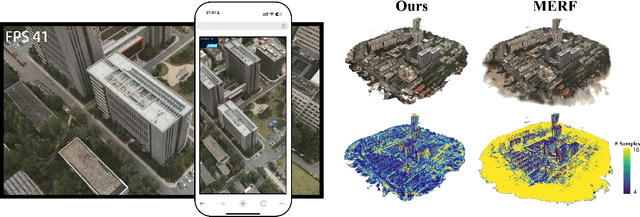

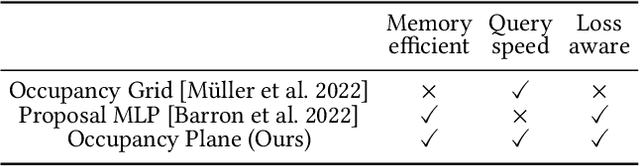

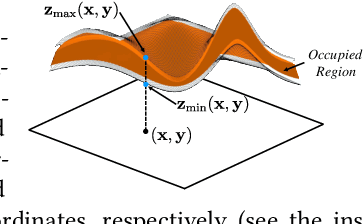

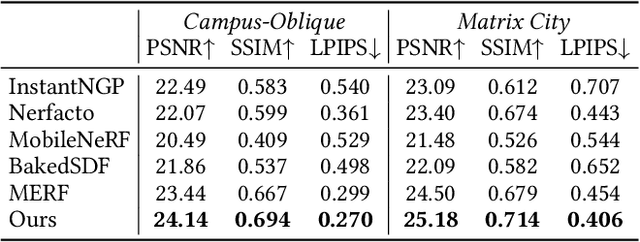

Oblique-MERF: Revisiting and Improving MERF for Oblique Photography

Apr 15, 2024

Abstract:Neural implicit fields have established a new paradigm for scene representation, with subsequent work achieving high-quality real-time rendering. However, reconstructing 3D scenes from oblique aerial photography presents unique challenges, such as varying spatial scale distributions and a constrained range of tilt angles, often resulting in high memory consumption and reduced rendering quality at extrapolated viewpoints. In this paper, we enhance MERF to accommodate these data characteristics by introducing an innovative adaptive occupancy plane optimized during the volume rendering process and a smoothness regularization term for view-dependent color to address these issues. Our approach, termed Oblique-MERF, surpasses state-of-the-art real-time methods by approximately 0.7 dB, reduces VRAM usage by about 40%, and achieves higher rendering frame rates with more realistic rendering outcomes across most viewpoints.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge