Lesley Shannon

Automatic High-quality Verilog Assertion Generation through Subtask-Focused Fine-Tuned LLMs and Iterative Prompting

Nov 23, 2024

Abstract:Formal Property Verification (FPV), using SystemVerilog Assertions (SVA), is crucial for ensuring the completeness of design with respect to the specification. However, writing SVA is a laborious task and has a steep learning curve. In this work, we present a large language model (LLM) -based flow to automatically generate high-quality SVA from the design specification documents, named \ToolName. We introduce a novel sub-task-focused fine-tuning approach that effectively addresses functionally incorrect assertions produced by baseline LLMs, leading to a remarkable 7.3-fold increase in the number of functionally correct assertions. Recognizing the prevalence of syntax and semantic errors, we also developed an iterative refinement method that enhances the LLM's initial outputs by systematically re-prompting it to correct identified issues. This process is further strengthened by a custom compiler that generates meaningful error messages, guiding the LLM towards improved accuracy. The experiments demonstrate a 26\% increase in the number of assertions free from syntax errors using this approach, showcasing its potential to streamline the FPV process.

ZOBNN: Zero-Overhead Dependable Design of Binary Neural Networks with Deliberately Quantized Parameters

Jul 06, 2024

Abstract:Low-precision weights and activations in deep neural networks (DNNs) outperform their full-precision counterparts in terms of hardware efficiency. When implemented with low-precision operations, specifically in the extreme case where network parameters are binarized (i.e. BNNs), the two most frequently mentioned benefits of quantization are reduced memory consumption and a faster inference process. In this paper, we introduce a third advantage of very low-precision neural networks: improved fault-tolerance attribute. We investigate the impact of memory faults on state-of-the-art binary neural networks (BNNs) through comprehensive analysis. Despite the inclusion of floating-point parameters in BNN architectures to improve accuracy, our findings reveal that BNNs are highly sensitive to deviations in these parameters caused by memory faults. In light of this crucial finding, we propose a technique to improve BNN dependability by restricting the range of float parameters through a novel deliberately uniform quantization. The introduced quantization technique results in a reduction in the proportion of floating-point parameters utilized in the BNN, without incurring any additional computational overheads during the inference stage. The extensive experimental fault simulation on the proposed BNN architecture (i.e. ZOBNN) reveal a remarkable 5X enhancement in robustness compared to conventional floating-point DNN. Notably, this improvement is achieved without incurring any computational overhead. Crucially, this enhancement comes without computational overhead. \ToolName~excels in critical edge applications characterized by limited computational resources, prioritizing both dependability and real-time performance.

DNN Memory Footprint Reduction via Post-Training Intra-Layer Multi-Precision Quantization

Apr 03, 2024

Abstract:The imperative to deploy Deep Neural Network (DNN) models on resource-constrained edge devices, spurred by privacy concerns, has become increasingly apparent. To facilitate the transition from cloud to edge computing, this paper introduces a technique that effectively reduces the memory footprint of DNNs, accommodating the limitations of resource-constrained edge devices while preserving model accuracy. Our proposed technique, named Post-Training Intra-Layer Multi-Precision Quantization (PTILMPQ), employs a post-training quantization approach, eliminating the need for extensive training data. By estimating the importance of layers and channels within the network, the proposed method enables precise bit allocation throughout the quantization process. Experimental results demonstrate that PTILMPQ offers a promising solution for deploying DNNs on edge devices with restricted memory resources. For instance, in the case of ResNet50, it achieves an accuracy of 74.57\% with a memory footprint of 9.5 MB, representing a 25.49\% reduction compared to previous similar methods, with only a minor 1.08\% decrease in accuracy.

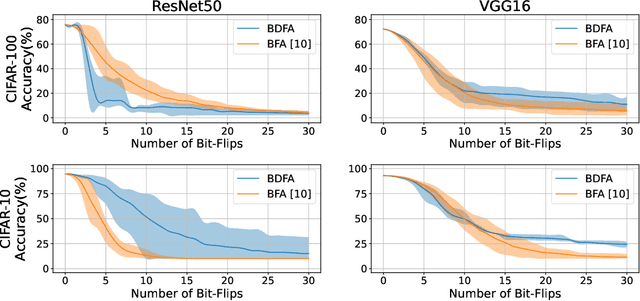

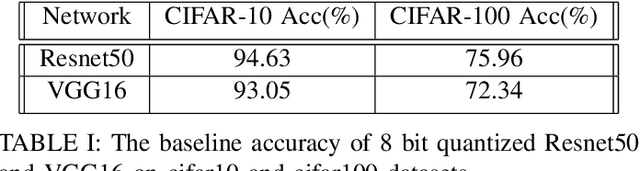

BDFA: A Blind Data Adversarial Bit-flip Attack on Deep Neural Networks

Jan 07, 2022

Abstract:Adversarial bit-flip attack (BFA) on Neural Network weights can result in catastrophic accuracy degradation by flipping a very small number of bits. A major drawback of prior bit flip attack techniques is their reliance on test data. This is frequently not possible for applications that contain sensitive or proprietary data. In this paper, we propose Blind Data Adversarial Bit-flip Attack (BDFA), a novel technique to enable BFA without any access to the training or testing data. This is achieved by optimizing for a synthetic dataset, which is engineered to match the statistics of batch normalization across different layers of the network and the targeted label. Experimental results show that BDFA could decrease the accuracy of ResNet50 significantly from 75.96\% to 13.94\% with only 4 bits flips.

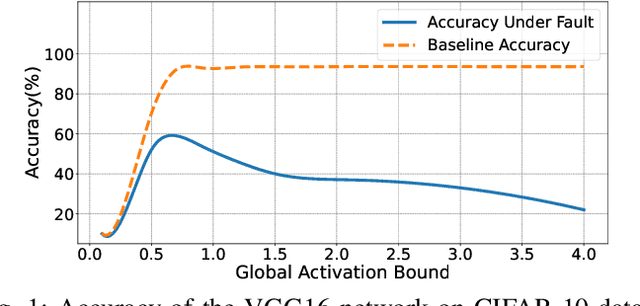

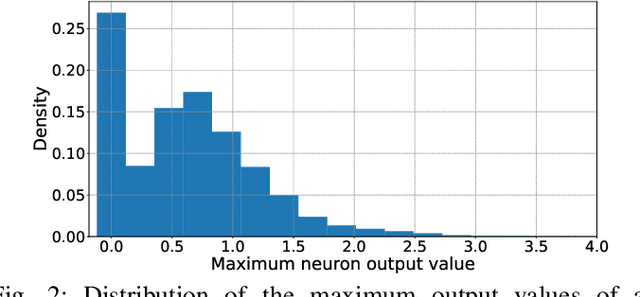

FitAct: Error Resilient Deep Neural Networks via Fine-Grained Post-Trainable Activation Functions

Dec 27, 2021

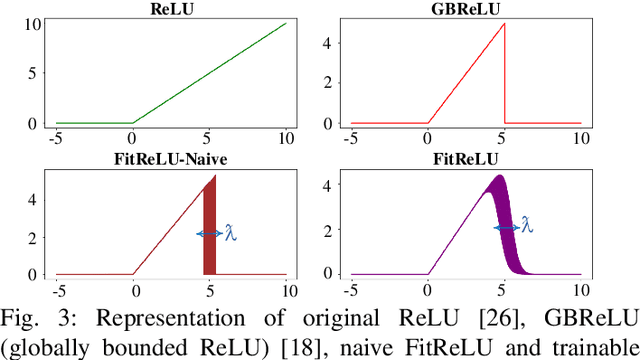

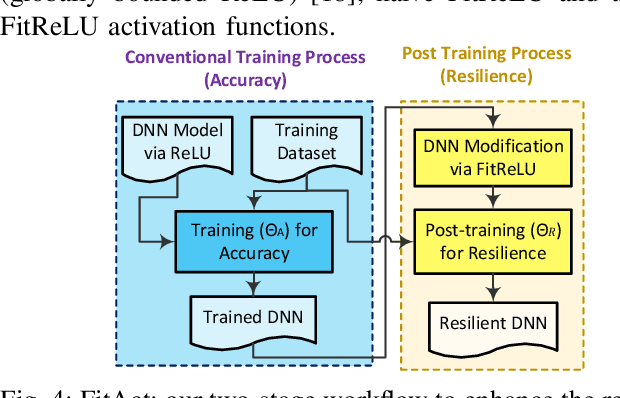

Abstract:Deep neural networks (DNNs) are increasingly being deployed in safety-critical systems such as personal healthcare devices and self-driving cars. In such DNN-based systems, error resilience is a top priority since faults in DNN inference could lead to mispredictions and safety hazards. For latency-critical DNN inference on resource-constrained edge devices, it is nontrivial to apply conventional redundancy-based fault tolerance techniques. In this paper, we propose FitAct, a low-cost approach to enhance the error resilience of DNNs by deploying fine-grained post-trainable activation functions. The main idea is to precisely bound the activation value of each individual neuron via neuron-wise bounded activation functions so that it could prevent fault propagation in the network. To avoid complex DNN model re-training, we propose to decouple the accuracy training and resilience training and develop a lightweight post-training phase to learn these activation functions with precise bound values. Experimental results on widely used DNN models such as AlexNet, VGG16, and ResNet50 demonstrate that FitAct outperforms state-of-the-art studies such as Clip-Act and Ranger in enhancing the DNN error resilience for a wide range of fault rates while adding manageable runtime and memory space overheads.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge