Leixilan Pan

Efficient CNN Architecture Design Guided by Visualization

Jul 21, 2022

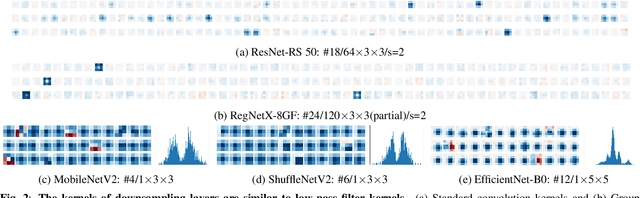

Abstract:Modern efficient Convolutional Neural Networks(CNNs) always use Depthwise Separable Convolutions(DSCs) and Neural Architecture Search(NAS) to reduce the number of parameters and the computational complexity. But some inherent characteristics of networks are overlooked. Inspired by visualizing feature maps and N$\times$N(N$>$1) convolution kernels, several guidelines are introduced in this paper to further improve parameter efficiency and inference speed. Based on these guidelines, our parameter-efficient CNN architecture, called \textit{VGNetG}, achieves better accuracy and lower latency than previous networks with about 30%$\thicksim$50% parameters reduction. Our VGNetG-1.0MP achieves 67.7% top-1 accuracy with 0.99M parameters and 69.2% top-1 accuracy with 1.14M parameters on ImageNet classification dataset. Furthermore, we demonstrate that edge detectors can replace learnable depthwise convolution layers to mix features by replacing the N$\times$N kernels with fixed edge detection kernels. And our VGNetF-1.5MP archives 64.4%(-3.2%) top-1 accuracy and 66.2%(-1.4%) top-1 accuracy with additional Gaussian kernels.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge