Lee Friedman

Per Subject Complexity in Eye Movement Prediction

Dec 31, 2024

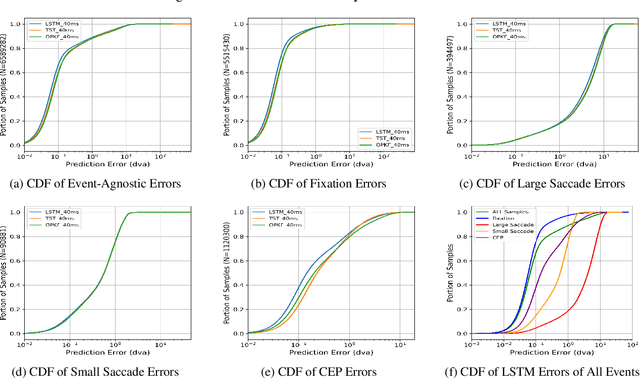

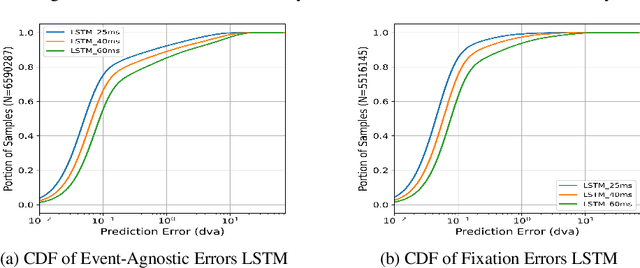

Abstract: Eye movement prediction is a promising area of research to compensate for the latency introduced by eye-tracking systems in virtual reality devices. In this study, we comprehensively analyze the complexity of the eye movement prediction task associated with subjects. We use three fundamentally different models within the analysis: the lightweight Long Short-Term Memory network (LSTM), the transformer-based network for multivariate time series representation learning (TST), and the Oculomotor Plant Mathematical Model wrapped in the Kalman Filter framework (OPKF). Each solution is assessed following a sample-to-event evaluation strategy and employing the new event-to-subject metrics. Our results show that the different models maintained similar prediction performance trends pertaining to subjects. We refer to these outcomes as per-subject complexity since some subjects' data pose a more significant challenge for models. Along with the detailed correlation analysis, this report investigates the source of the per-subject complexity and discusses potential solutions to overcome it.

Analysis of Embeddings Learned by End-to-End Machine Learning Eye Movement-driven Biometrics Pipeline

Feb 26, 2024

Abstract: This paper expands on the foundational concept of temporal persistence in biometric systems, specifically focusing on the domain of eye movement biometrics facilitated by machine learning. Unlike previous studies that primarily focused on developing biometric authentication systems, our research delves into the embeddings learned by these systems, particularly examining their temporal persistence, reliability, and biometric efficacy in response to varying input data. Utilizing two publicly available eye-movement datasets, we employed the state-of-the-art Eye Know You Too machine learning pipeline for our analysis. We aim to validate whether the machine learning-derived embeddings in eye movement biometrics mirror the temporal persistence observed in traditional biometrics. Our methodology involved conducting extensive experiments to assess how different lengths and qualities of input data influence the performance of eye movement biometrics and, more specifically, how they affect the learned embeddings. We also explored the reliability and consistency of the embeddings under varying data conditions. Three key metrics (Kendall's coefficient of concordance, intercorrelations, and equal error rate) were employed to quantitatively evaluate our findings. The results reveal that, while data length significantly impacts the stability of the learned embeddings, the intercorrelations among embeddings show minimal effect.
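For readers unfamiliar with Kendall's coefficient of concordance (W), one of the metrics named in the abstract above, the following is a minimal sketch of the standard no-ties formula, W = 12S / (m²(n³ − n)), where m rankers each rank n items and S is the sum of squared deviations of the per-item rank totals from their mean. The function names `rank` and `kendalls_w` are hypothetical, and the abstract does not say how the paper maps its embeddings onto rankers and items, so this illustrates the statistic only, not the authors' pipeline.

```python
def rank(values):
    """Return ranks 1..n for a list of values (assumes no ties)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0] * len(values)
    for r, i in enumerate(order, start=1):
        ranks[i] = r
    return ranks


def kendalls_w(ratings):
    """Kendall's W for m rankers (rows) scoring the same n items (columns).

    Uses the textbook formula without tie correction.
    """
    m = len(ratings)
    n = len(ratings[0])
    rank_rows = [rank(row) for row in ratings]
    # Sum of ranks each item received across all rankers.
    totals = [sum(row[j] for row in rank_rows) for j in range(n)]
    mean_total = m * (n + 1) / 2
    s = sum((t - mean_total) ** 2 for t in totals)
    return 12 * s / (m ** 2 * (n ** 3 - n))


# Three rankers that order the items identically give W = 1;
# two rankers with exactly reversed orderings give W = 0.
full_agreement = kendalls_w([[1, 2, 3], [10, 20, 30], [0.1, 0.2, 0.3]])
no_agreement = kendalls_w([[1, 2, 3], [3, 2, 1]])
```

W ranges from 0 (no agreement) to 1 (complete agreement), which is why it is a natural summary of how consistently a set of measurements orders the same items.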

Filtering Eye-Tracking Data From an EyeLink 1000: Comparing Heuristic, Savitzky-Golay, IIR and FIR Digital Filters

Mar 03, 2023

Abstract: In a previous report (Raju et al., 2023) we concluded that, if the goal was to preserve events such as saccades, microsaccades, and smooth pursuit in eye-tracking recordings, data with sine wave frequencies less than 100 Hz (-3 dB) were the signal and data above 100 Hz were noise. We compare 5 filters in their ability to preserve signal and remove noise. Specifically, we compared the proprietary STD and EXTRA heuristic filters provided by our EyeLink 1000 (SR-Research, Ottawa, Canada), a Savitzky-Golay (SG) filter, an infinite impulse response (IIR) filter (low-pass Butterworth), and a finite impulse response (FIR) filter. For each of the non-heuristic filters, we systematically searched for optimal parameters. Both the IIR and the FIR filters were zero-phase filters. Mean frequency response profiles and amplitude spectra for all 5 filters are provided. In addition, we examined the effect of our filters on a noisy recording. Our FIR filter had the sharpest roll-off of any filter. Therefore, it maintained the signal and removed noise more effectively than any other filter. On this basis, we recommend the use of our FIR filter. Several reports have shown that filtering increased the temporal autocorrelation of a signal. To address this, the present filters were also evaluated in terms of autocorrelation (specifically the first 3 lags). Of all our filters, the STD filter introduced the least amount of autocorrelation.
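To make the idea of a zero-phase FIR low-pass filter concrete, here is a minimal sketch that is not the authors' filter. It assumes a 1000 Hz sampling rate, a Hamming-windowed sinc design, and 51 taps, none of which come from the abstract; the names `lowpass_kernel` and `zero_phase_filter` are hypothetical. Because the kernel is symmetric and applied centered on each sample, the output is not delayed relative to the input. Note that a windowed-sinc design places its half-amplitude (about -6 dB) point at the nominal cutoff, so the 100 Hz value here is illustrative and does not reproduce the -3 dB criterion in the abstract.

```python
import math


def lowpass_kernel(cutoff_hz, fs_hz, num_taps):
    """Hamming-windowed sinc low-pass kernel; num_taps must be odd."""
    assert num_taps % 2 == 1
    fc = cutoff_hz / fs_hz  # normalized cutoff in cycles per sample
    mid = num_taps // 2
    taps = []
    for k in range(num_taps):
        n = k - mid
        if n == 0:
            sinc = 2 * fc
        else:
            sinc = math.sin(2 * math.pi * fc * n) / (math.pi * n)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * k / (num_taps - 1))
        taps.append(sinc * window)
    total = sum(taps)
    return [t / total for t in taps]  # normalize to unity gain at 0 Hz


def zero_phase_filter(signal, taps):
    """Apply a symmetric kernel centered on each sample (no phase shift).

    Samples beyond either end are replaced by the nearest edge value.
    """
    mid = len(taps) // 2
    last = len(signal) - 1
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, t in enumerate(taps):
            j = min(max(i + k - mid, 0), last)
            acc += t * signal[j]
        out.append(acc)
    return out


# Assumed setup: 1000 Hz sampling, 100 Hz nominal cutoff, 51 taps.
taps = lowpass_kernel(100.0, 1000.0, 51)
slow = [math.sin(2 * math.pi * 20 * n / 1000) for n in range(400)]   # 20 Hz
fast = [math.sin(2 * math.pi * 300 * n / 1000) for n in range(400)]  # 300 Hz
slow_out = zero_phase_filter(slow, taps)  # should track the input closely
fast_out = zero_phase_filter(fast, taps)  # should be strongly attenuated
```

For an IIR filter such as a Butterworth, zero phase is usually obtained differently, by running the filter forward and then backward over the data; the centered symmetric kernel above is the simpler route available to FIR designs.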

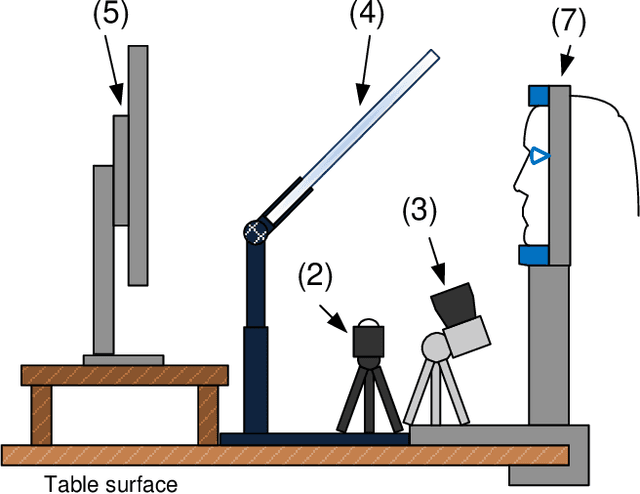

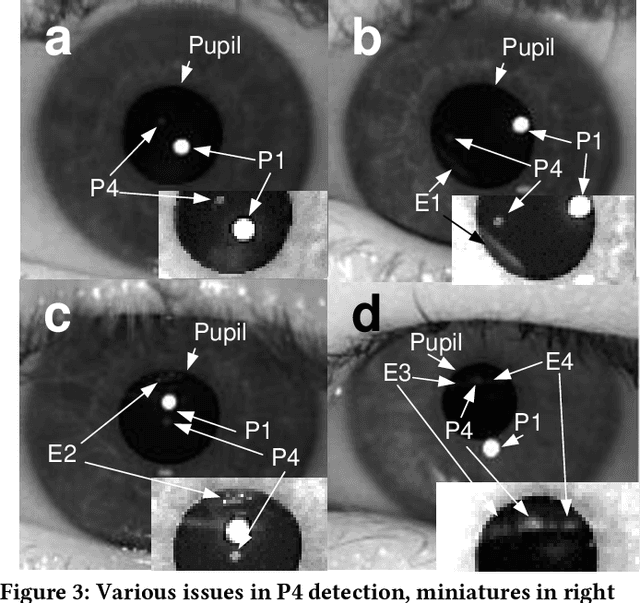

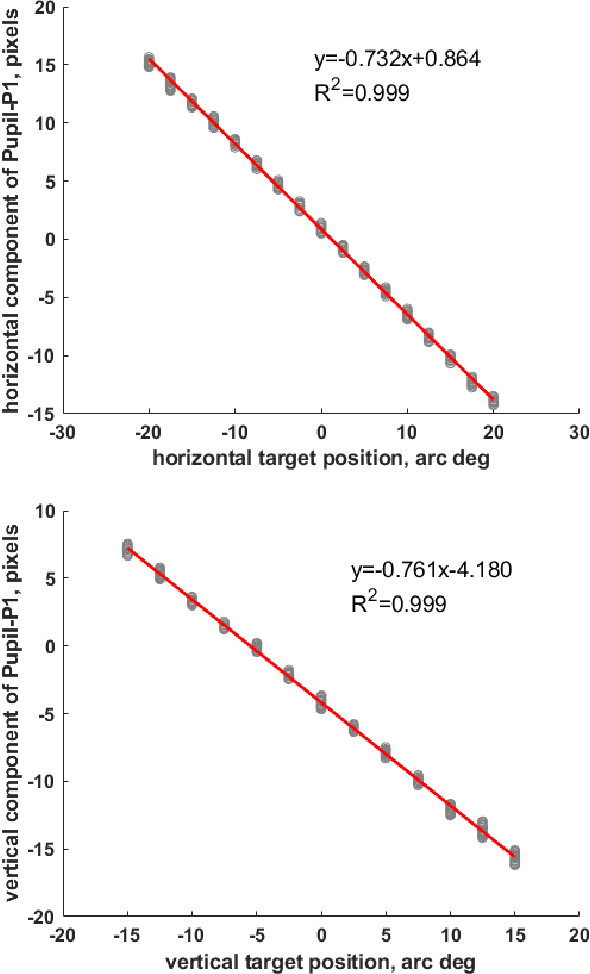

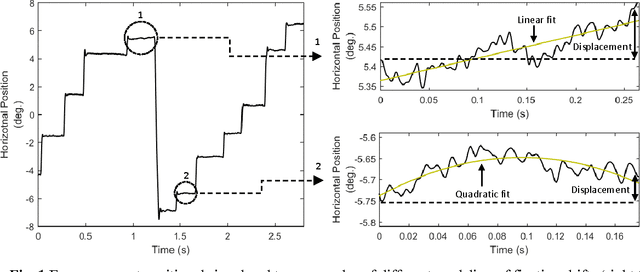

Custom Video-Oculography Device and Its Application to Fourth Purkinje Image Detection during Saccades

Apr 15, 2019

Abstract: We built a custom video-based eye-tracker that saves every video frame as a full resolution image (MJPEG). Images can be processed offline for the detection of ocular features, including the pupil and corneal reflection (First Purkinje Image, P1) position. A comparison of multiple algorithms for detection of pupil and corneal reflection can be performed. The system provides for highly flexible stimulus creation, with mixing of graphic, image, and video stimuli. We can change cameras and infrared illuminators depending on the image qualities and frame rate desired. Using this system, we have detected the position of the Fourth Purkinje image (P4) in the frames. We show that when we estimate gaze by calculating P1-P4, the signal compares well with gaze estimated with a DPI eye-tracker, which natively detects and tracks P1 and P4.

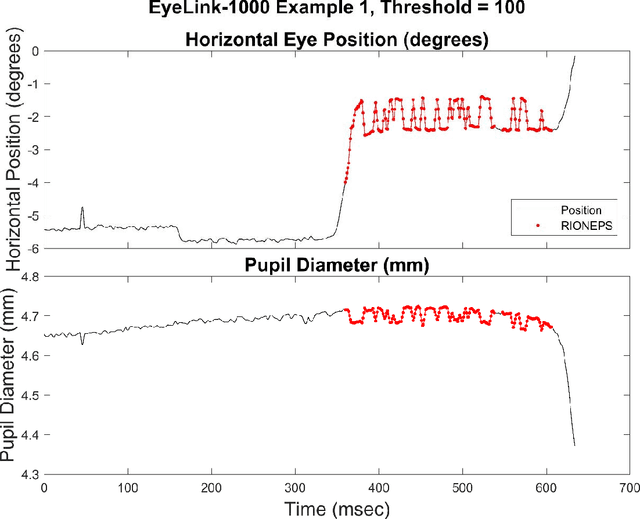

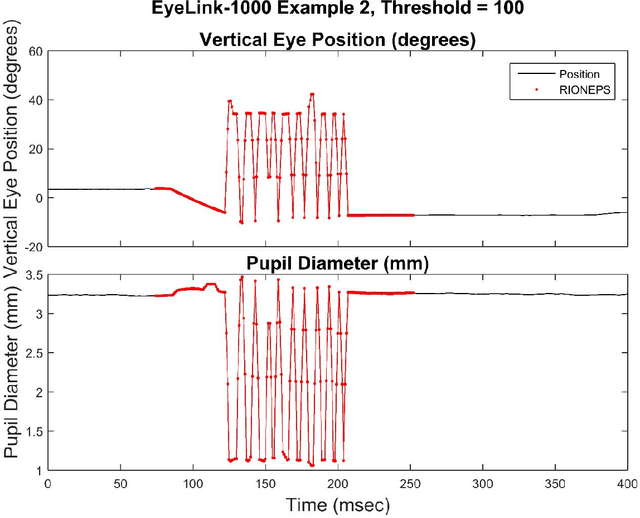

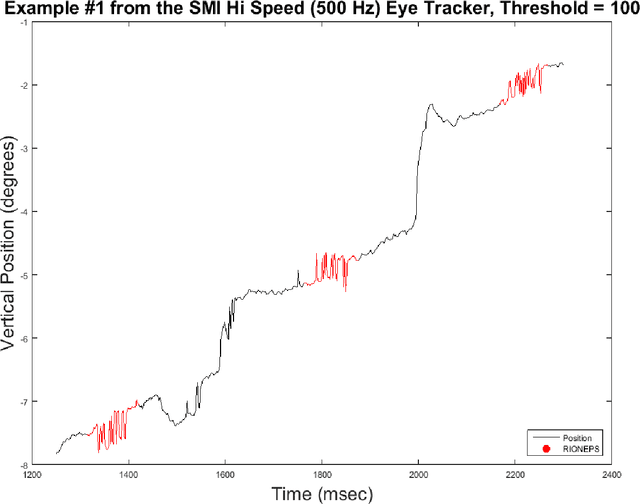

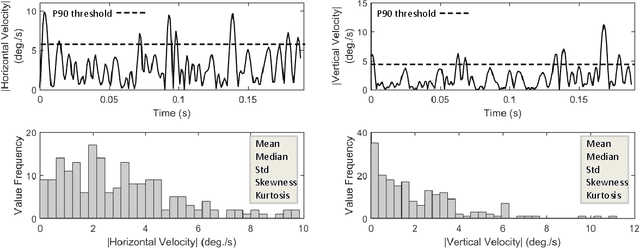

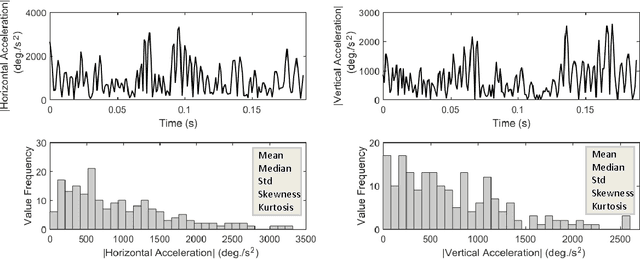

Method to Detect Eye Position Noise from Video-Oculography when Detection of Pupil or Corneal Reflection Position Fails

Sep 08, 2017

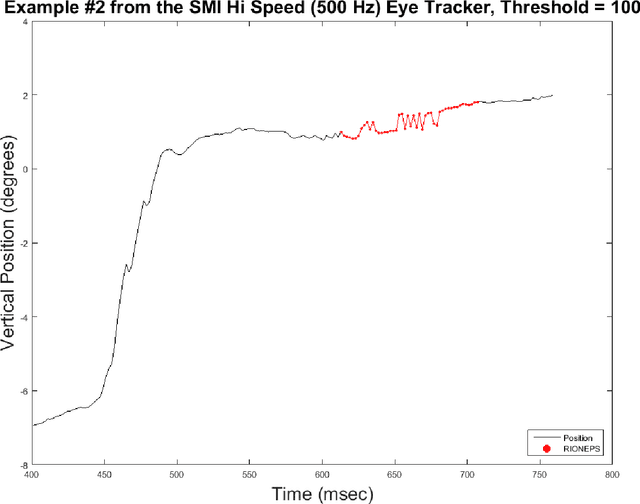

Abstract: We present software to detect noise in eye position signals from video-based eye-tracking systems that depend on accurate pupil and corneal reflection position estimation. When such systems transiently fail to properly detect the pupil or the corneal reflection due to occlusion from eyelids, eyelashes or various shadows, the estimated gaze position is false. This produces an artifactual signal in the position trace that oscillates rapidly and irregularly between true and false gaze positions. We refer to this noise as RIONEPS (Rapid Irregularly Oscillating Noise of the Eye Position Signal). Our method for detecting these periods automatically is based on an estimate of the relative inefficiency of the eye position signal. We look for RIONEPS in the horizontal and vertical traces separately, and although we typically use the method offline, it is suitable for adaptation to real-time use. This method requires a threshold to be set, and although we provide some guidance, thresholds will have to be estimated empirically.

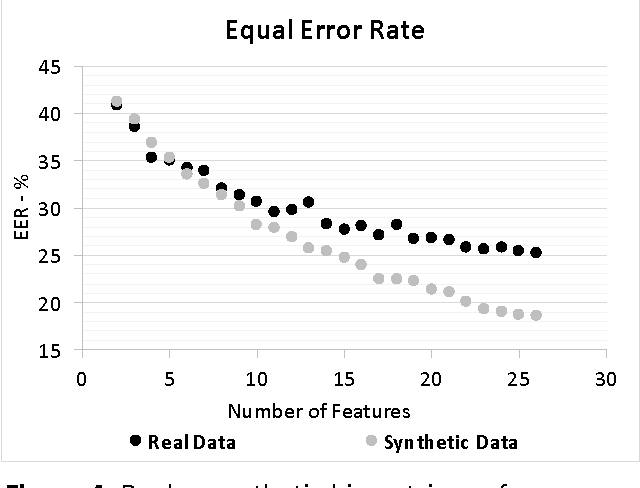

Synthetic Database for Evaluation of General, Fundamental Biometric Principles

Jul 29, 2017

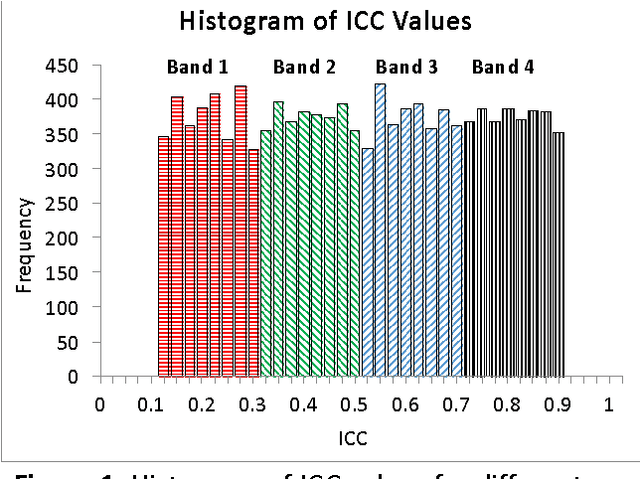

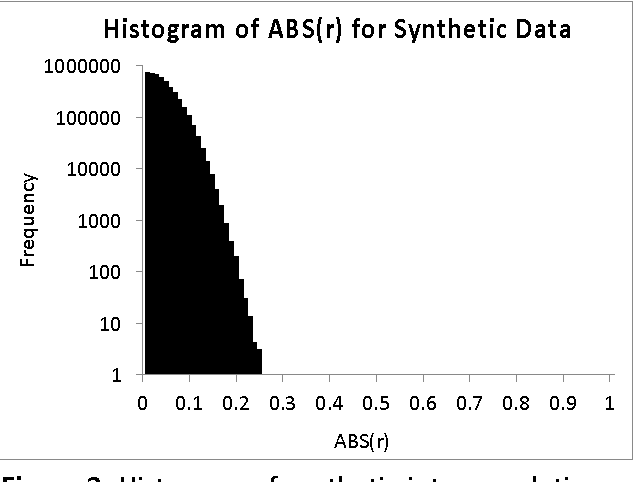

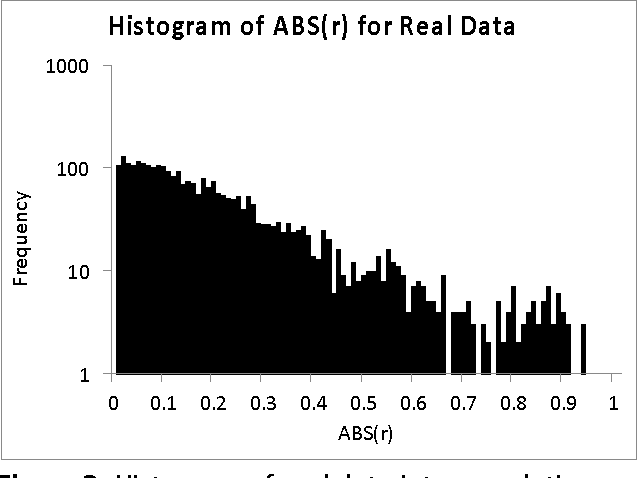

Abstract: We create synthetic biometric databases to study general, fundamental biometric principles. First, we check the validity of the synthetic database design by comparing it to real data in terms of biometric performance. The real data used for this validity check were from an eye-movement related biometric database. Next, we employ our database to evaluate the impact of variations of temporal persistence of features on biometric performance. We index temporal persistence with the intraclass correlation coefficient (ICC). We find that variations in temporal persistence are extremely highly correlated with variations in biometric performance. Finally, we use our synthetic database strategy to determine how many features are required to achieve particular levels of performance as the number of subjects in the database increases from 100 to 10,000. An important finding is that the number of features required to achieve various EER values (2%, 0.3%, 0.15%) is essentially constant in the database sizes that we studied. We hypothesize that the insights obtained from our study would be applicable to many biometric modalities where extracted feature properties resemble the properties of the synthetic features we discuss in this work.
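Since the abstract above reports results as equal error rate (EER) values, a small sketch of how an EER can be estimated from match scores may help. It assumes similarity scores (higher means a better match) and a simple threshold sweep over the observed scores; the function name `equal_error_rate` and the sample scores are hypothetical and are not taken from the paper.

```python
def equal_error_rate(genuine, impostor):
    """Estimate the EER from similarity scores (higher = better match).

    Sweeps every observed score as a decision threshold and returns the
    mean of the false accept and false reject rates at the threshold
    where the two rates are closest.
    """
    best_gap = None
    best_eer = None
    for t in sorted(set(genuine) | set(impostor)):
        far = sum(s >= t for s in impostor) / len(impostor)  # false accepts
        frr = sum(s < t for s in genuine) / len(genuine)     # false rejects
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:
            best_gap = gap
            best_eer = (far + frr) / 2
    return best_eer


# Made-up scores: one impostor (0.7) outscores one genuine comparison (0.4).
genuine_scores = [0.4, 0.6, 0.8, 0.9]
impostor_scores = [0.1, 0.2, 0.3, 0.7]
eer = equal_error_rate(genuine_scores, impostor_scores)
```

With only a handful of scores the estimate is coarse; very low EER values such as those quoted in the abstract can only be resolved with a correspondingly large number of genuine and impostor comparisons.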

A Study on the Extraction and Analysis of a Large Set of Eye Movement Features during Reading

Mar 27, 2017

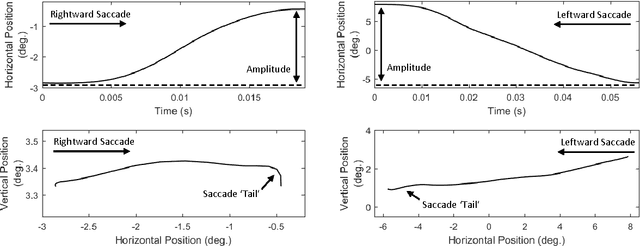

Abstract: This work presents a study on the extraction and analysis of a set of 101 categories of eye movement features from three types of eye movement events: fixations, saccades, and post-saccadic oscillations. The eye movements were recorded during a reading task. For the categories of features with multiple instances in a recording, we extract corresponding feature subtypes by calculating descriptive statistics on the distributions of these instances. A unified framework of detailed descriptions and mathematical formulas is provided for the extraction of the feature set. The analysis of feature values is performed using a large database of eye movement recordings from a normative population of 298 subjects. We demonstrate the central tendency and overall variability of feature values over the experimental population, and more importantly, we quantify the test-retest reliability (repeatability) of each separate feature. The described methods and analysis can provide valuable tools in fields exploring eye movements, such as behavioral studies, attention and cognition research, medical research, biometric recognition, and human-computer interaction.

Method to Assess the Temporal Persistence of Potential Biometric Features: Application to Oculomotor, and Gait-Related Databases

Sep 13, 2016

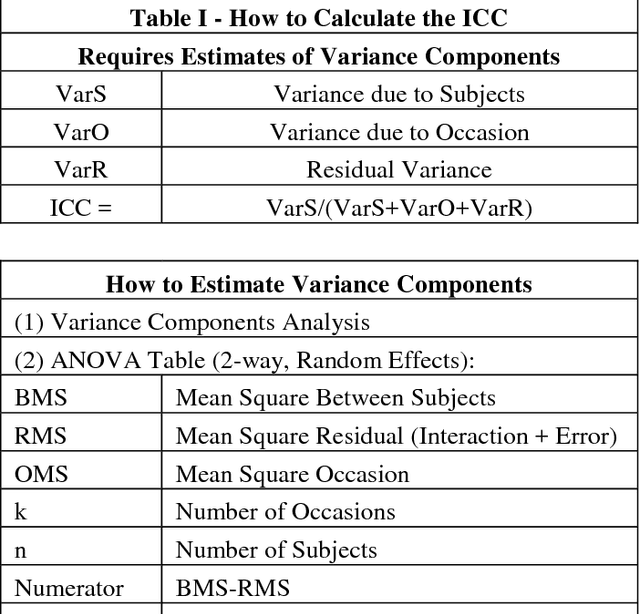

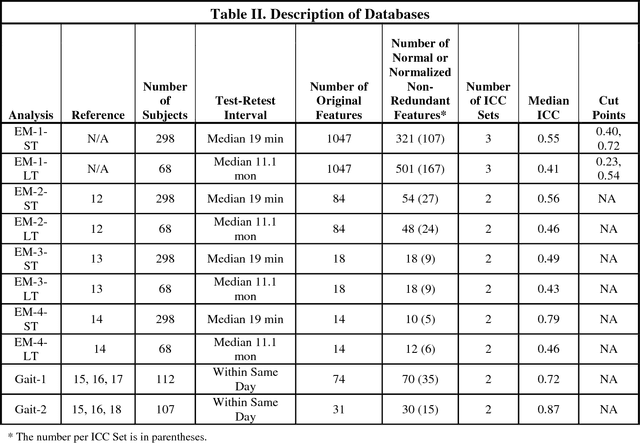

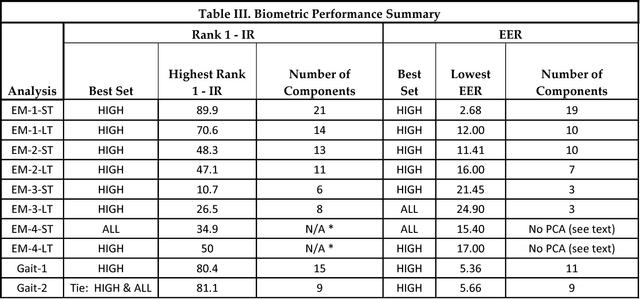

Abstract: Although temporal persistence, or permanence, is a well-understood requirement for optimal biometric features, there is no general agreement on how to assess temporal persistence. We suggest that the best way to assess temporal persistence is to perform a test-retest study and assess test-retest reliability. For ratio-scale features that are normally distributed, this is best done using the Intraclass Correlation Coefficient (ICC). For 10 distinct data sets (8 eye-movement related and 2 gait related), we calculated the test-retest reliability ('temporal persistence') of each feature, and compared the biometric performance of high-ICC features to that of lower-ICC features and to that of the set of all features. We demonstrate that using a subset of only high-ICC features produced superior Rank-1-Identification Rate (Rank-1-IR) performance in 9 of 10 databases (p = 0.01, one-tailed). For Equal Error Rate (EER), using a subset of only high-ICC features produced superior performance in 8 of 10 databases (p = 0.055, one-tailed). In general, then, prescreening potential biometric features and choosing only highly reliable features will yield better performance than using lower-ICC features or the set of all features combined. We hypothesize that this would likely be the case for any biometric modality where the features can be expressed as quantitative values on an interval or ratio scale, assuming an adequate number of relatively independent features.
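As an illustration of the test-retest reliability computation described above, the sketch below computes one common ICC variant for a single feature. The abstract does not say which ICC form the authors used, so the choice of the two-way random-effects, absolute-agreement, single-measurement form (often written ICC(2,1)) is an assumption, and the function name `icc_2_1` is hypothetical. The example data are the widely reproduced 6-target, 4-rater table from Shrout and Fleiss (1979), used here only as a sanity check.

```python
def icc_2_1(data):
    """ICC(2,1): two-way random effects, absolute agreement, single measure.

    data: list of n subjects, each a list of k repeated measurements
    (for example, one feature value per recording session).
    """
    n = len(data)
    k = len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(row[j] for row in data) / n for j in range(k)]

    # Two-way ANOVA sums of squares: subjects, sessions, residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_err = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)              # between-subject mean square
    msc = ss_cols / (k - 1)              # between-session mean square
    mse = ss_err / ((n - 1) * (k - 1))   # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Sanity-check table (6 targets x 4 raters) from Shrout and Fleiss (1979).
shrout_fleiss = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
icc_value = icc_2_1(shrout_fleiss)  # approximately 0.29
```

In a prescreening workflow of the kind the abstract describes, a value like this would be computed per feature and only features above a chosen ICC cutoff would be retained; the cutoff itself is a design decision not given here.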
