Laxima Niure Kandel

Cross-Model Transferability of Adversarial Patches in Real-time Segmentation for Autonomous Driving

Feb 22, 2025

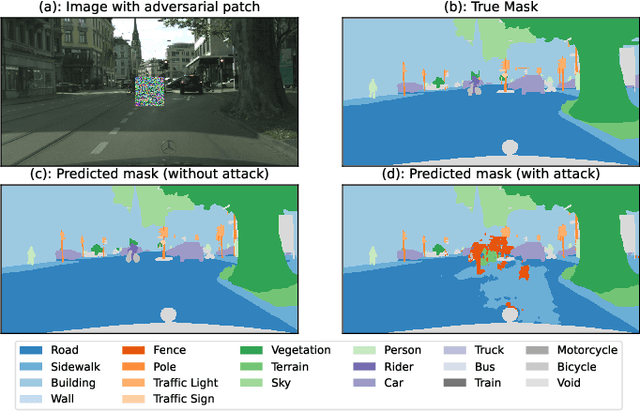

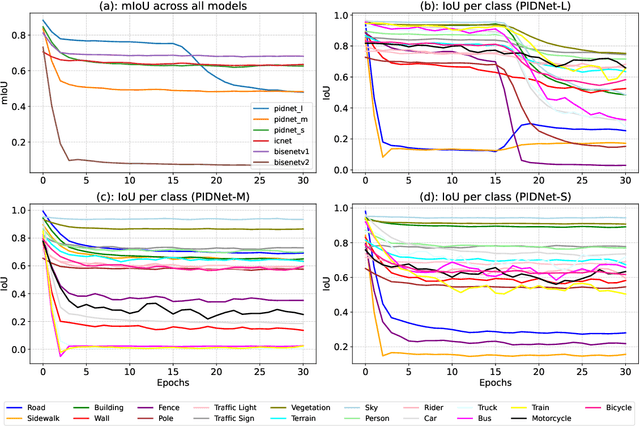

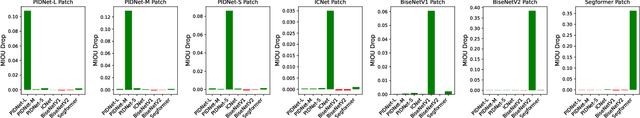

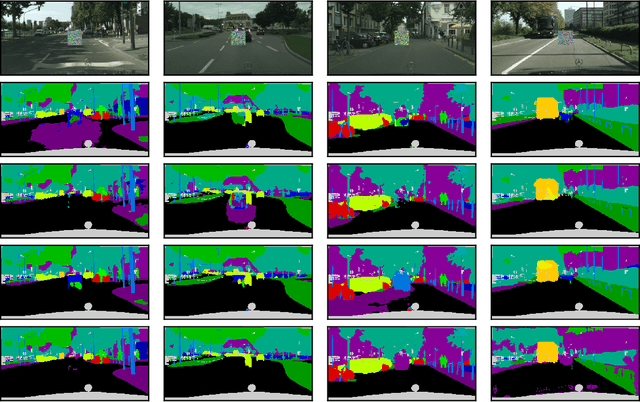

Abstract: Adversarial attacks pose a significant threat to deep learning models, particularly in safety-critical applications such as healthcare and autonomous driving. Recently, patch-based attacks have proven effective in real-time inference scenarios owing to their 'drag and drop' nature. Following this idea for Semantic Segmentation (SS), we propose a novel Expectation Over Transformation (EOT) based adversarial patch attack that is more realistic for autonomous vehicles. To train this attack effectively, we also propose a 'simplified' loss function that is easy to analyze and implement. Using this attack as our basis, we investigate whether adversarial patches, once optimized on a specific SS model, can fool other models or architectures. We conduct a comprehensive cross-model transferability analysis of adversarial patches trained on SOTA Convolutional Neural Network (CNN) models such as PIDNet-S, PIDNet-M and PIDNet-L, among others. We also include the Segformer model to study transferability to Vision Transformers (ViTs). All of our analysis is conducted on the widely used Cityscapes dataset. Our study reveals key insights into how model architectures (CNN vs. CNN or CNN vs. Transformer-based) influence attack susceptibility. In particular, we conclude that although the attacks transfer very effectively to unseen images of any dimension, attacks trained against one particular model are minimally effective on other models. This holds for both ViT and CNN-based models. Our results also indicate that, for CNN-based models, the repercussions of patch attacks are local, unlike for ViTs. Per-class analysis reveals that simple classes like 'sky' suffer less misclassification than others. The code for the project is available at: https://github.com/p-shekhar/adversarial-patch-transferability
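The Expectation Over Transformation idea mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `apply_patch` and `eot_loss` are hypothetical, the only transformation sampled here is random patch placement (the transformations actually used in the paper are not stated in the abstract), and `loss_fn` is a stand-in for whatever segmentation loss the attack optimizes.

```python
import random


def apply_patch(image, patch, top, left):
    """Return a copy of `image` (a 2D list) with `patch` pasted at (top, left)."""
    out = [row[:] for row in image]  # copy so the input image is not mutated
    for r, patch_row in enumerate(patch):
        for c, value in enumerate(patch_row):
            out[top + r][left + c] = value
    return out


def eot_loss(image, patch, loss_fn, num_samples=16, rng=None):
    """Monte Carlo estimate of the expected loss over random patch placements.

    Assumption: placement is the only transformation; a real attack would
    typically sample others as well and differentiate through them.
    """
    rng = rng or random.Random(0)
    height, width = len(image), len(image[0])
    patch_h, patch_w = len(patch), len(patch[0])
    total = 0.0
    for _ in range(num_samples):
        # Sample a location where the patch fits entirely inside the image.
        top = rng.randint(0, height - patch_h)
        left = rng.randint(0, width - patch_w)
        total += loss_fn(apply_patch(image, patch, top, left))
    return total / num_samples
```

In an actual attack the patch would be a trainable tensor and the averaged loss would be maximized by gradient ascent; the sketch only shows how the expectation over placements is estimated.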

Over-the-Air Federated Learning with Enhanced Privacy

Dec 22, 2022

Abstract: Federated learning (FL) has emerged as a promising learning paradigm in which only local model parameters (gradients) are shared. Private user data never leaves the local devices, thus preserving data privacy. However, recent research has shown that even when a user never shares local data, exchanging model parameters without protection can still leak private information. Moreover, in wireless systems, the frequent transmission of model parameters can cause tremendous bandwidth consumption and network congestion when the model is large. To address these problems, we propose a new FL framework with efficient over-the-air parameter aggregation and strong privacy protection of both user data and models. We achieve this by introducing pairwise cancellable random artificial noises (PCR-ANs) on end devices. Compared to existing over-the-air computation (AirComp) based FL schemes, our design provides stronger privacy protection. We analytically show the secrecy capacity and the convergence rate of the proposed wireless FL aggregation algorithm.
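A minimal sketch of the pairwise-cancellation idea, under stated assumptions: each pair of devices shares one random noise vector that one device adds and the other subtracts, so every individual update is masked but the noise vanishes in the sum. The names `pairwise_masks` and `aggregate` are hypothetical, the Gaussian noise is an illustrative choice, and the over-the-air superposition is modelled as a plain sum. The abstract does not specify how PCR-ANs are actually generated or transmitted.

```python
import random


def pairwise_masks(num_devices, dim, rng):
    """Build one mask per device; the masks sum to zero across all devices."""
    masks = [[0.0] * dim for _ in range(num_devices)]
    for i in range(num_devices):
        for j in range(i + 1, num_devices):
            # Noise shared by the pair (i, j): i adds it, j subtracts it.
            noise = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            for k in range(dim):
                masks[i][k] += noise[k]
                masks[j][k] -= noise[k]
    return masks


def aggregate(updates, masks):
    """Sum the masked updates, standing in for over-the-air superposition."""
    dim = len(updates[0])
    masked = [[u[k] + m[k] for k in range(dim)] for u, m in zip(updates, masks)]
    return [sum(row[k] for row in masked) for k in range(dim)]
```

The aggregator only ever sees the masked vectors, yet the returned sum matches the sum of the raw updates up to floating-point rounding.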
