Lauri Suomela

Data Scaling for Navigation in Unknown Environments

Jan 14, 2026Abstract:Generalization of imitation-learned navigation policies to environments unseen in training remains a major challenge. We address this by conducting the first large-scale study of how data quantity and data diversity affect real-world generalization in end-to-end, map-free visual navigation. Using a curated 4,565-hour crowd-sourced dataset collected across 161 locations in 35 countries, we train policies for point goal navigation and evaluate their closed-loop control performance on sidewalk robots operating in four countries, covering 125 km of autonomous driving. Our results show that large-scale training data enables zero-shot navigation in unknown environments, approaching the performance of policies trained with environment-specific demonstrations. Critically, we find that data diversity is far more important than data quantity. Doubling the number of geographical locations in a training set decreases navigation errors by ~15%, while performance benefit from adding data from existing locations saturates with very little data. We also observe that, with noisy crowd-sourced data, simple regression-based models outperform generative and sequence-based architectures. We release our policies, evaluation setup and example videos on the project page.

Self-Organizing Edge Computing Distribution Framework for Visual SLAM

Jan 15, 2025

Abstract:Localization within a known environment is a crucial capability for mobile robots. Simultaneous Localization and Mapping (SLAM) is a prominent solution to this problem. SLAM is a framework that consists of a diverse set of computational tasks ranging from real-time tracking to computation-intensive map optimization. This combination can present a challenge for resource-limited mobile robots. Previously, edge-assisted SLAM methods have demonstrated promising real-time execution capabilities by offloading heavy computations while performing real-time tracking onboard. However, the common approach of utilizing a client-server architecture for offloading is sensitive to server and network failures. In this article, we propose a novel edge-assisted SLAM framework capable of self-organizing fully distributed SLAM execution across a network of devices or functioning on a single device without connectivity. The architecture consists of three layers and is designed to be device-agnostic, resilient to network failures, and minimally invasive to the core SLAM system. We have implemented and demonstrated the framework for monocular ORB SLAM3 and evaluated it in both fully distributed and standalone SLAM configurations against the ORB SLAM3. The experiment results demonstrate that the proposed design matches the accuracy and resource utilization of the monolithic approach while enabling collaborative execution.

PlaceNav: Topological Navigation through Place Recognition

Oct 05, 2023

Abstract:Recent results suggest that splitting topological navigation into robot-independent and robot-specific components improves navigation performance by enabling the robot-independent part to be trained with data collected by different robot types. However, the navigation methods are still limited by the scarcity of suitable training data and suffer from poor computational scaling. In this work, we present PlaceNav, subdividing the robot-independent part into navigation-specific and generic computer vision components. We utilize visual place recognition for the subgoal selection of the topological navigation pipeline. This makes subgoal selection more efficient and enables leveraging large-scale datasets from non-robotics sources, increasing training data availability. Bayesian filtering, enabled by place recognition, further improves navigation performance by increasing the temporal consistency of subgoals. Our experimental results verify the design and the new model obtains a 76% higher success rate in indoor and 23% higher in outdoor navigation tasks with higher computational efficiency.

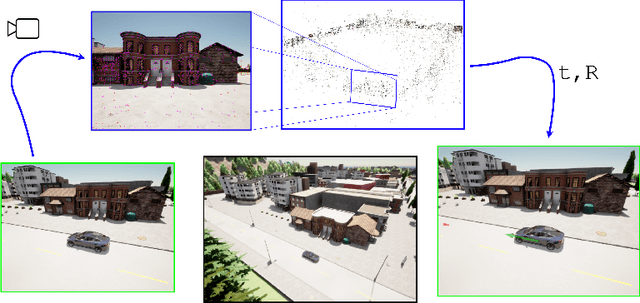

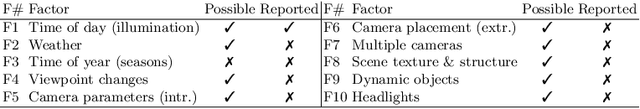

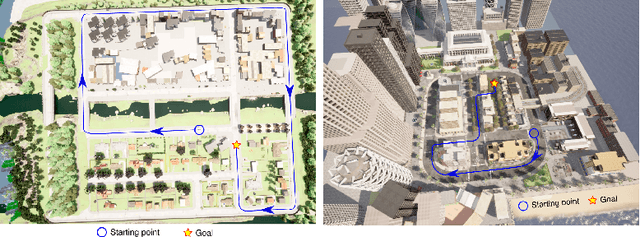

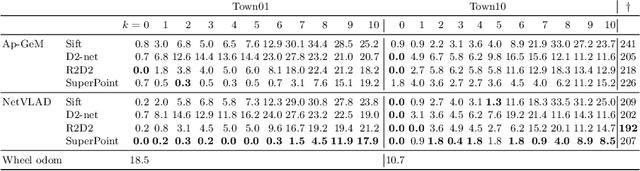

A Simulation Benchmark for Vision-based Autonomous Navigation

Apr 01, 2022

Abstract:This work introduces a simulator benchmark for vision-based autonomous navigation. The simulator offers control over real world variables such as the environment, time of day, weather and traffic. The benchmark includes a modular integration of different components of a full autonomous visual navigation stack. In the experimental part of the paper, state-of-the-art visual localization methods are evaluated as a part of the stack in realistic navigation tasks. To the authors' best knowledge, the proposed benchmark is the first to study modern visual localization methods as part of a full autonomous visual navigation stack.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge