László Antal

RWTH Aachen University

ROSAR: An Adversarial Re-Training Framework for Robust Side-Scan Sonar Object Detection

Oct 14, 2024

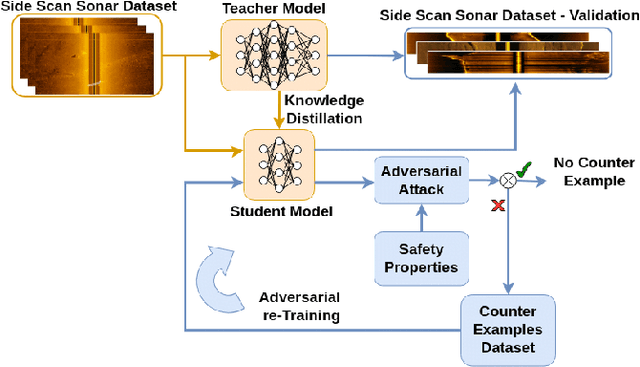

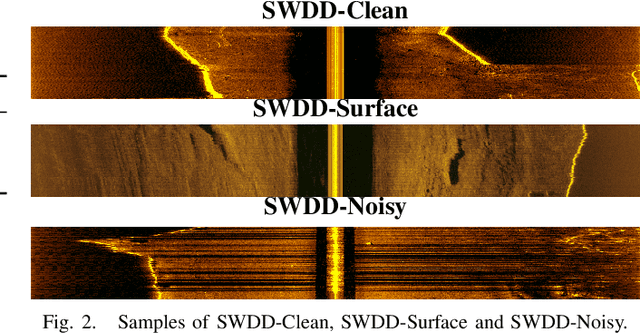

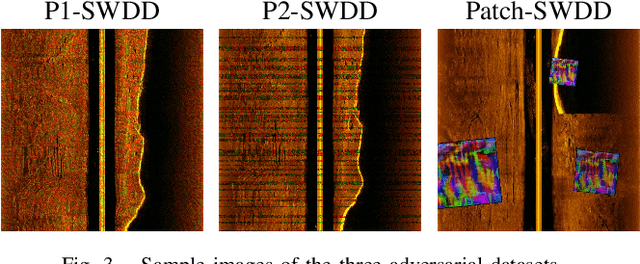

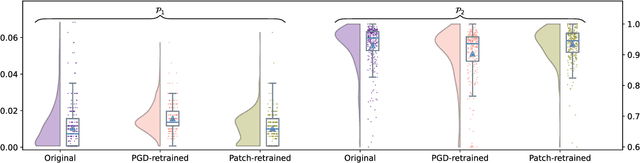

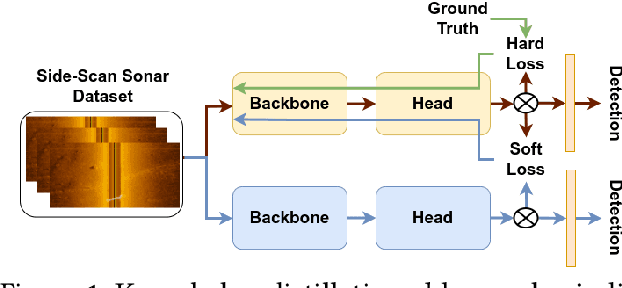

Abstract: This paper introduces ROSAR, a novel framework enhancing the robustness of deep learning object detection models tailored for side-scan sonar (SSS) images, generated by autonomous underwater vehicles using sonar sensors. By extending our prior work on knowledge distillation (KD), this framework integrates KD with adversarial retraining to address the dual challenges of model efficiency and robustness against SSS noise. We introduce three novel, publicly available SSS datasets, capturing different sonar setups and noise conditions. We propose and formalize two SSS safety properties and utilize them to generate adversarial datasets for retraining. Through a comparative analysis of projected gradient descent (PGD) and patch-based adversarial attacks, ROSAR demonstrates significant improvements in model robustness and detection accuracy under SSS-specific conditions, enhancing the model's robustness by up to 1.85%. ROSAR is available at https://github.com/remaro-network/ROSAR-framework.
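For readers unfamiliar with the PGD attack named in the abstract above, the following is a minimal sketch of an L-infinity PGD loop. It is not taken from the ROSAR repository: the logistic-regression scorer, the function names (`pgd_attack`, `logistic_loss`), and the parameters `eps`, `alpha`, and `steps` are all assumptions chosen to keep the example small, standing in for a real detector and its loss.

```python
import math

def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def logistic_loss(x, y, w, b):
    """Binary cross-entropy of a toy logistic model (hypothetical stand-in for a detector loss)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    p = 1.0 / (1.0 + math.exp(-z))
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def pgd_attack(x, y, w, b, eps=0.1, alpha=0.02, steps=20):
    """L-infinity PGD: repeat signed-gradient ascent steps, projecting back into the eps-ball."""
    x_adv = list(x)
    for _ in range(steps):
        z = sum(wi * xi for wi, xi in zip(w, x_adv)) + b
        p = 1.0 / (1.0 + math.exp(-z))
        # Gradient of the cross-entropy loss with respect to the input.
        grad = [(p - y) * wi for wi in w]
        # Take a step that increases the loss.
        stepped = [xi + alpha * sign(g) for xi, g in zip(x_adv, grad)]
        # Project into the eps-ball around the clean input and the valid range [0, 1].
        x_adv = [min(max(s, x0 - eps, 0.0), x0 + eps, 1.0)
                 for s, x0 in zip(stepped, x)]
    return x_adv

# Toy usage: four "pixel" values in [0, 1] and arbitrary illustrative weights.
x_clean = [0.5, 0.2, 0.9, 0.4]
weights = [1.5, -2.0, 0.5, 1.0]
x_adv = pgd_attack(x_clean, 1, weights, -0.3)
```

In an adversarial retraining setup, perturbed inputs such as `x_adv` would be added to the training set with their original labels; how ROSAR constructs and filters its adversarial datasets is described in the paper and repository, not here.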

Knowledge Distillation in YOLOX-ViT for Side-Scan Sonar Object Detection

Mar 14, 2024

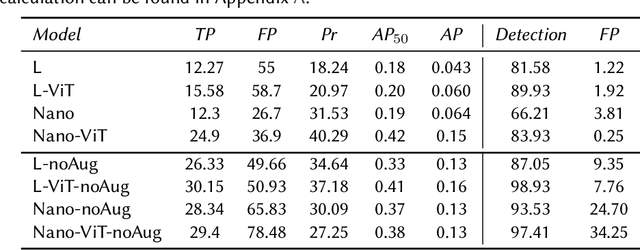

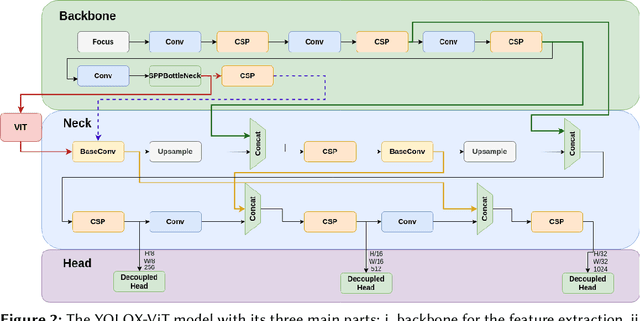

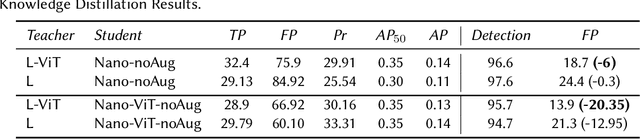

Abstract: In this paper, we present YOLOX-ViT, a novel object detection model, and investigate the efficacy of knowledge distillation for model size reduction without sacrificing performance. Focused on underwater robotics, our research addresses key questions about the viability of smaller models and the impact of the visual transformer layer in YOLOX. Furthermore, we introduce a new side-scan sonar image dataset and use it to evaluate our object detector's performance. Results show that knowledge distillation effectively reduces false positives in wall detection. Additionally, the introduced visual transformer layer significantly improves object detection accuracy in the underwater environment. The source code of the knowledge distillation in YOLOX-ViT is available at https://github.com/remaro-network/KD-YOLOX-ViT.
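As background for the knowledge distillation mentioned in the abstract above, here is a minimal sketch of the classic temperature-scaled distillation loss for a classification head. It is a generic illustration, not the loss used in KD-YOLOX-ViT: the function names and the `temperature` and `weight` parameters are assumptions, and a detector would additionally need terms for box regression and objectness.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, label,
                      temperature=2.0, weight=0.5):
    """Blend a soft-target KL term (teacher vs. student) with the hard-label cross-entropy."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on temperature-softened distributions.
    kl = sum(t * math.log(t / s) for t, s in zip(p_teacher, p_student) if t > 0)
    # Standard cross-entropy of the student against the ground-truth class.
    hard = -math.log(softmax(student_logits)[label])
    # The T^2 factor keeps the soft-term gradient scale comparable across temperatures.
    return weight * temperature ** 2 * kl + (1 - weight) * hard

# Toy usage: three-class logits from a hypothetical large teacher and small student.
loss = distillation_loss([1.0, 0.2, -0.5], [2.0, 0.1, -1.0], label=0)
```

The soft term pulls the student's output distribution toward the teacher's, while the hard term keeps it anchored to the annotations; the balance between the two is a tunable choice.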

SubPipe: A Submarine Pipeline Inspection Dataset for Segmentation and Visual-inertial Localization

Feb 06, 2024

Abstract: This paper presents SubPipe, an underwater dataset for SLAM, object detection, and image segmentation. SubPipe has been recorded using a Light Autonomous Underwater Vehicle (LAUV), operated by OceanScan MST, and carrying a sensor suite including two cameras, a side-scan sonar, and an inertial navigation system, among other sensors. The AUV has been deployed in a pipeline inspection environment with a submarine pipe partially covered by sand. The AUV's pose ground truth is estimated from the navigation sensors. The side-scan sonar and RGB images include object detection and segmentation annotations, respectively. State-of-the-art segmentation, object detection, and SLAM methods are benchmarked on SubPipe to demonstrate the dataset's challenges and opportunities for leveraging computer vision algorithms. To the authors' knowledge, this is the first annotated underwater dataset providing a real pipeline inspection scenario. The dataset and experiments are publicly available online at https://github.com/remaro-network/SubPipe-dataset.

Mission Planning and Safety Assessment for Pipeline Inspection Using Autonomous Underwater Vehicles: A Framework based on Behavior Trees

Feb 06, 2024

Abstract: Recent advances in autonomous underwater robotics facilitate autonomous inspection tasks of offshore infrastructure. However, current inspection missions rely on predefined plans created offline, hampering the flexibility and autonomy of the inspection vehicle and the mission's success in case of unexpected events. In this work, we address these challenges by proposing a framework encompassing the modeling and verification of mission plans through Behavior Trees (BTs). This framework leverages the modularity of BTs to model onboard reactive behaviors, thus enabling autonomous plan executions, and uses BehaVerify to verify the mission's safety. Moreover, as a use case of this framework, we present a novel AI-enabled algorithm that aims for efficient, autonomous pipeline camera data collection. In a simulated environment, we demonstrate the framework's application to our proposed pipeline inspection algorithm. Our framework marks a significant step forward in the field of autonomous underwater robotics, promising to enhance the safety and success of underwater missions in practical, real-world applications. https://github.com/remaro-network/pipe_inspection_mission

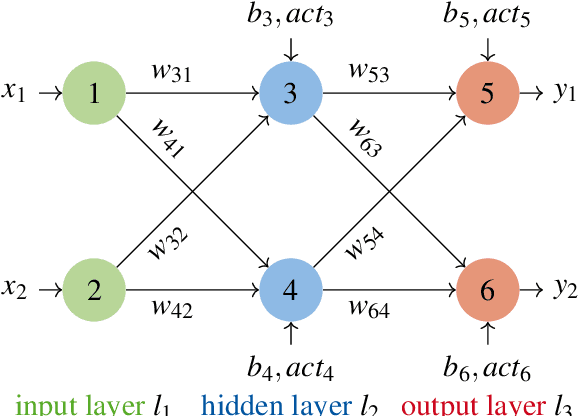

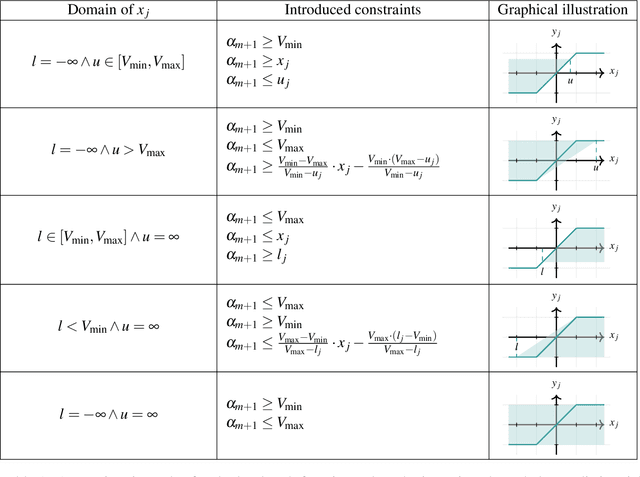

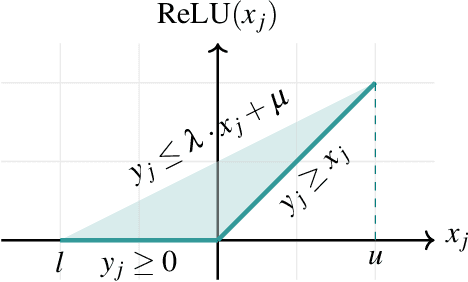

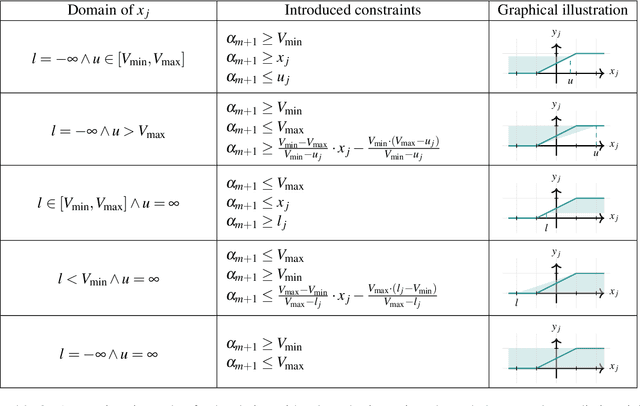

Extending Neural Network Verification to a Larger Family of Piece-wise Linear Activation Functions

Nov 16, 2023

Abstract: In this paper, we extend an available neural network verification technique to support a wider class of piece-wise linear activation functions. Furthermore, we extend the algorithms, which in their original form provide exact or over-approximative results, respectively, for bounded input sets represented as star sets, to also allow unbounded input sets. We implemented our algorithms and demonstrated their effectiveness in some case studies.

* In Proceedings FMAS 2023, arXiv:2311.08987
