Kuangyan Song

FrequentNet : A New Deep Learning Baseline for Image Classification

Jan 04, 2020

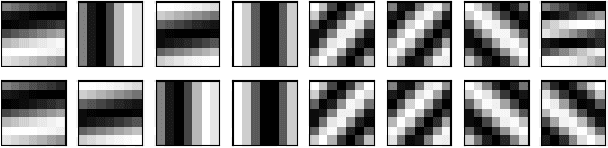

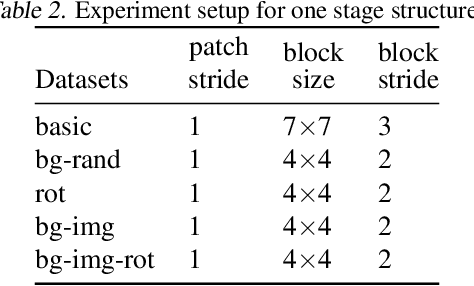

Abstract: In this paper, we generalize the idea behind "PCANet" to obtain a new baseline deep learning model for image classification. Instead of using principal component vectors as the filters, as "PCANet" does, we use basis vectors from discrete Fourier analysis and wavelet analysis as our filter vectors. Both variants achieve performance comparable to "PCANet" on benchmark datasets. Notably, our algorithms do not require any optimization to obtain these bases.
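As a rough illustration of the idea in this abstract, the sketch below builds a small bank of fixed filters from 2-D discrete Fourier basis vectors and applies them to image patches. It is a minimal sketch, not the paper's implementation: the function names `dft_filters` and `apply_filters`, the ordering of frequencies, the use of the real part only, the exclusion of the constant (DC) filter, and the PCANet-style patch-mean removal are all assumptions made for this example.

```python
import numpy as np

def dft_filters(k, num_filters):
    """Return `num_filters` real k x k filters taken from the 2-D DFT basis.

    Assumption: frequencies are ordered by u + v and the constant (DC)
    element is skipped, since it responds with zero to mean-removed patches.
    """
    n = np.arange(k)
    pairs = sorted(
        ((u, v) for u in range(k) for v in range(k)),
        key=lambda p: (p[0] + p[1], p),
    )
    filters = []
    for u, v in pairs[1:num_filters + 1]:
        # Outer product of two 1-D complex exponentials is a 2-D Fourier basis element.
        row = np.exp(-2j * np.pi * u * n / k)
        col = np.exp(-2j * np.pi * v * n / k)
        basis = row[:, None] * col[None, :]
        filters.append(basis.real / k)  # keep the real (cosine) part only
    return np.stack(filters)

def apply_filters(image, filters):
    """Correlate a 2-D image with each filter ('valid' mode) after patch-mean removal."""
    k = filters.shape[-1]
    # Shape: (H - k + 1, W - k + 1, k, k)
    patches = np.lib.stride_tricks.sliding_window_view(image, (k, k))
    patches = patches - patches.mean(axis=(-2, -1), keepdims=True)
    # Shape: (num_filters, H - k + 1, W - k + 1)
    return np.einsum("ijab,fab->fij", patches, filters)

rng = np.random.default_rng(0)
image = rng.random((28, 28))      # stand-in for a grayscale input image
filters = dft_filters(k=7, num_filters=8)
feature_maps = apply_filters(image, filters)
print(feature_maps.shape)
```

No data-dependent step (such as an eigendecomposition of patch covariance) is needed to obtain the filters here, which is the contrast with principal-component filters that the abstract draws.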

"Why Should You Trust My Explanation?" Understanding Uncertainty in LIME Explanations

Jun 04, 2019

Abstract: Methods for interpreting black-box machine learning models increase the transparency of their outcomes and in turn generate insight into the reliability and fairness of the algorithms. However, the interpretations themselves can contain significant uncertainty that undermines trust in the outcomes and raises concerns about the model's reliability. Focusing on the method "Local Interpretable Model-agnostic Explanations" (LIME), we demonstrate the presence of two sources of uncertainty, namely the randomness in its sampling procedure and the variation of interpretation quality across different input data points. Such uncertainty is present even in models with high training and test accuracy. We apply LIME to synthetic data and two public data sets, text classification on 20 Newsgroups and recidivism risk scoring on COMPAS, to support our argument.
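The first source of uncertainty named in this abstract, randomness in the sampling procedure, can be illustrated with a small LIME-style local surrogate written from scratch. This is a simplified sketch rather than the LIME library or the paper's experiments: `black_box` is a hypothetical model, and the Gaussian perturbations, the exponential kernel, and the parameters `num_samples` and `kernel_width` are assumptions chosen for the example.

```python
import numpy as np

def black_box(X):
    # Hypothetical nonlinear model standing in for a trained classifier's probability output.
    return 1.0 / (1.0 + np.exp(-(np.sin(3 * X[:, 0]) + X[:, 1] ** 2)))

def local_surrogate(x, predict, num_samples=200, kernel_width=0.75, seed=0):
    """Fit a proximity-weighted linear model around x and return its feature coefficients."""
    rng = np.random.default_rng(seed)
    # Sample perturbed points around the instance being explained.
    Z = x + rng.normal(size=(num_samples, x.size))
    # Weight each sample by its closeness to x (exponential kernel).
    dist = np.linalg.norm(Z - x, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)
    # Weighted least squares via rescaling rows by sqrt(weight).
    A = np.hstack([np.ones((num_samples, 1)), Z - x])
    sw = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(A * sw[:, None], predict(Z) * sw, rcond=None)
    return coef[1:]  # drop the intercept

x = np.array([0.5, -1.0])
# Same instance, same model, different random seeds for the sampling step.
coefs = np.array([local_surrogate(x, black_box, seed=s) for s in range(20)])
print("mean coefficient per feature:", coefs.mean(axis=0))
print("std across seeds per feature:", coefs.std(axis=0))
```

A nonzero standard deviation across seeds means the explanation for a single, fixed input changes from run to run, even though the model itself is deterministic.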
