Krish Maniar

ATCON: Attention Consistency for Vision Models

Oct 18, 2022

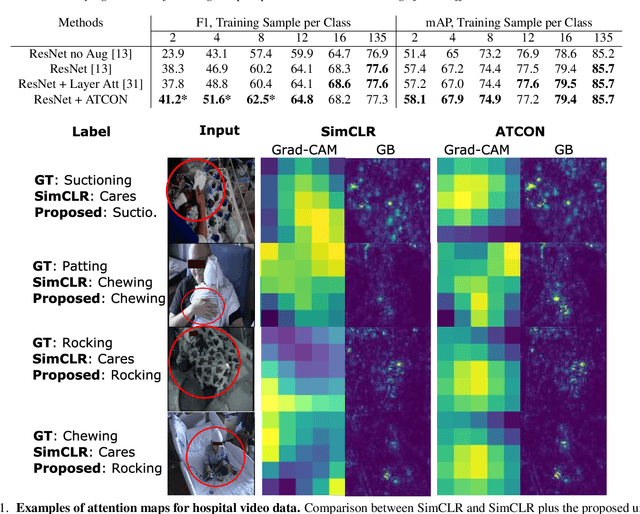

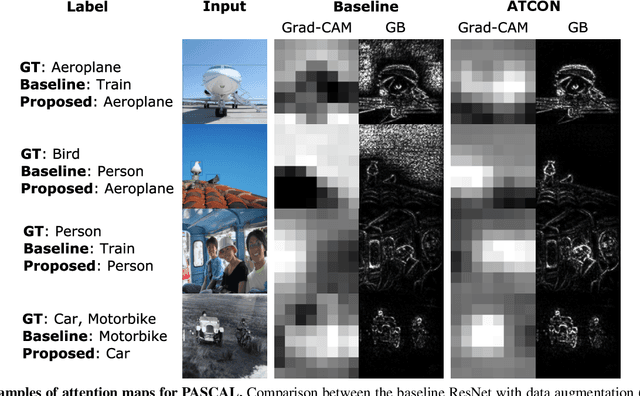

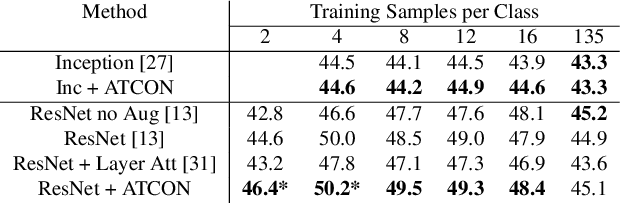

Abstract: Attention (or attribution) map methods are designed to highlight the regions of a model's input that were discriminative for its predictions. However, different attention map methods can highlight different regions of the input, sometimes giving contradictory explanations for the same prediction. This effect is exacerbated when the training set is small. It indicates that either the model learned incorrect representations or the attention map methods did not accurately estimate the model's representations. We propose an unsupervised fine-tuning method that optimizes the consistency of attention maps and show that it improves both classification performance and the quality of attention maps. We propose an implementation for two state-of-the-art attention computation methods, Grad-CAM and Guided Backpropagation, which relies on an input masking technique. We also show results on Grad-CAM and Integrated Gradients in an ablation study. We evaluate this method on our own dataset for event detection in continuous video recordings of hospital patients, aggregated and curated for this work. As a sanity check, we also evaluate the proposed method on PASCAL VOC and SVHN. With the proposed method, with small training sets, we achieve a 6.6-point lift in F1 score over the baselines on our video dataset, a 2.9-point lift in F1 score on PASCAL, and a 1.8-point lift in mean Intersection over Union over Grad-CAM for weakly supervised detection on PASCAL. These improved attention maps may help clinicians better understand vision model predictions and ease the deployment of machine learning systems into clinical care. We share part of the code for this article at the following repository: https://github.com/alimirzazadeh/SemisupervisedAttention.
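To make the idea of "consistency of attention maps" concrete, here is a minimal sketch of one way to score agreement between two maps. It is an assumption made for illustration, not the loss described in the paper: the abstract does not specify the consistency measure, so this example uses one minus the Pearson correlation between the flattened maps. The function name `pearson_consistency_loss` and the toy maps are hypothetical.

```python
import math


def pearson_consistency_loss(map_a, map_b):
    """Return 1 - Pearson correlation between two 2D attention maps.

    Illustrative assumption only: the abstract does not state which
    consistency measure is optimized. 0 means the maps agree perfectly
    (up to scale and offset); 2 means they are perfectly anti-correlated.
    """
    # Flatten both maps (lists of rows) into 1D lists of floats.
    a = [v for row in map_a for v in row]
    b = [v for row in map_b for v in row]
    if len(a) != len(b) or not a:
        raise ValueError("maps must be non-empty and have the same size")

    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)

    # A constant map has no spatial structure; treat it as uncorrelated.
    if var_a == 0 or var_b == 0:
        return 1.0
    return 1.0 - cov / math.sqrt(var_a * var_b)


# Toy 2x2 maps standing in for the outputs of two attribution methods.
map_one = [[0.0, 0.2], [0.8, 1.0]]
map_two_agree = [[0.0, 0.4], [1.6, 2.0]]      # same pattern, different scale
map_two_disagree = [[1.0, 0.8], [0.2, 0.0]]   # inverted pattern

loss_agree = pearson_consistency_loss(map_one, map_two_agree)
loss_disagree = pearson_consistency_loss(map_one, map_two_disagree)
```

A correlation-based score ignores differences in scale between the two methods, which matters because different attribution methods generally produce values in different ranges. In a fine-tuning setup, a differentiable version of such a score would be minimized on unlabeled inputs.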

Improving Clinical Efficiency and Reducing Medical Errors through NLP-enabled diagnosis of Health Conditions from Transcription Reports

Jun 27, 2022

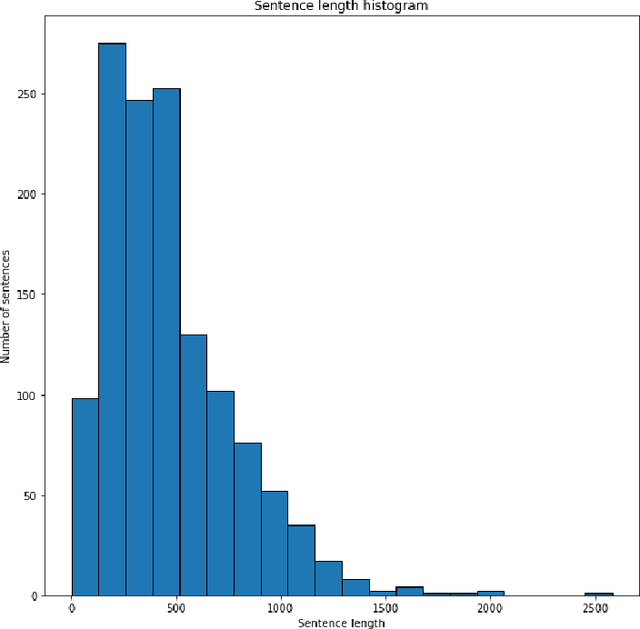

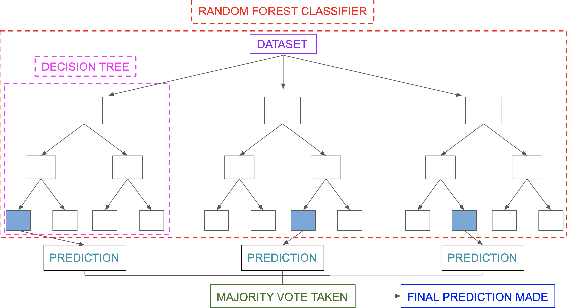

Abstract: Misdiagnosis is one of the leading causes of medical errors in hospitals, affecting over 12 million adults across the US. To address the high rate of misdiagnosis, this study uses four NLP-based algorithms to determine the appropriate health condition from an unstructured transcription report. Of the Logistic Regression, Random Forest, LSTM, and CNN-LSTM models, the CNN-LSTM model performed best, with an accuracy of 97.89%. We packaged this model into an authenticated web platform to give clinicians accessible assistance. Overall, by standardizing health care diagnosis and structuring transcription reports, our NLP platform drastically improves the clinical efficiency and accuracy of hospitals worldwide.
