Kohei Nomoto

Time Hopping technique for faster reinforcement learning in simulations

Sep 06, 2011

Abstract: This preprint has been withdrawn by the author for revision.

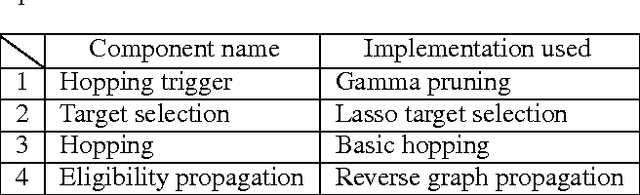

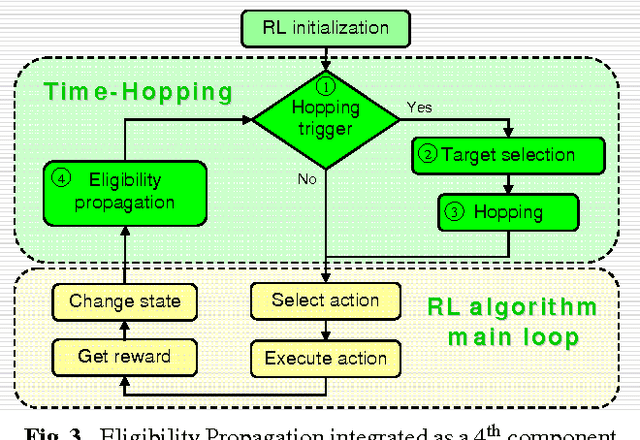

Eligibility Propagation to Speed up Time Hopping for Reinforcement Learning

Apr 03, 2009

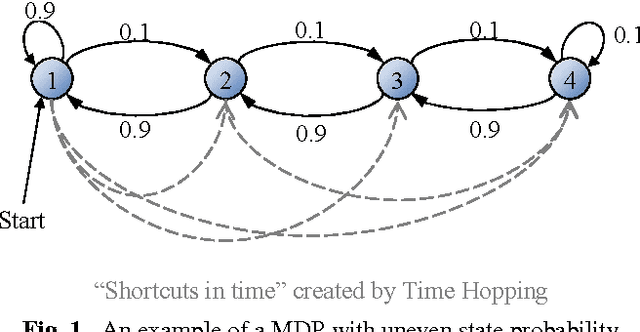

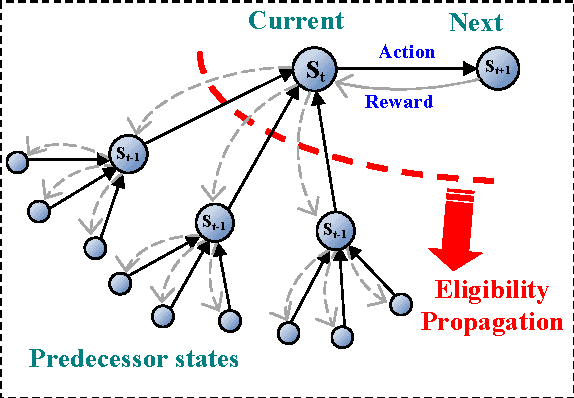

Abstract: A mechanism called Eligibility Propagation is proposed to speed up the Time Hopping technique for faster Reinforcement Learning in simulations. Eligibility Propagation gives Time Hopping abilities similar to those that eligibility traces give conventional Reinforcement Learning: it propagates values from one state to all of its temporal predecessors using a state transition graph. Experiments on a simulated biped crawling robot confirm that Eligibility Propagation speeds up learning by more than a factor of 3.
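
The backward propagation described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `propagate_values`, the graph layout (a dictionary from each state to its recorded predecessor transitions), the deterministic one-step backup, and the rule of only raising a value when the backed-up estimate is larger are all assumptions made for illustration.

```python
from collections import deque


def propagate_values(q, predecessors, start_state, gamma=0.9, tol=1e-9):
    """Push the value of start_state back to every recorded predecessor.

    q            -- dict: state -> {action: value}  (assumed layout)
    predecessors -- dict: state -> list of (prev_state, action, reward),
                    i.e. the state transition graph stored backwards
    Returns the number of Q-values that were raised.
    """
    updated = 0
    queue = deque([start_state])
    while queue:
        state = queue.popleft()
        # Value of a state = best action value known so far (0.0 if none).
        best = max(q.get(state, {}).values(), default=0.0)
        for prev_state, action, reward in predecessors.get(state, []):
            candidate = reward + gamma * best  # one-step backup (assumed rule)
            actions = q.setdefault(prev_state, {})
            # Only raise values; the tolerance stops propagation around
            # cycles once the improvement becomes negligible.
            if candidate > actions.get(action, 0.0) + tol:
                actions[action] = candidate
                updated += 1
                queue.append(prev_state)  # continue to earlier predecessors
    return updated


# Usage on a hypothetical three-state chain A -> B -> C.
q = {"C": {"stay": 1.0}}
predecessors = {
    "C": [("B", "right", 0.0)],
    "B": [("A", "right", 0.0)],
}
n_updated = propagate_values(q, predecessors, "C")
```

In this toy chain a single call carries the value of `C` back to both `B` and `A`, whereas a plain one-step update would need the agent to revisit each transition. That is the role eligibility traces play in conventional learning.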

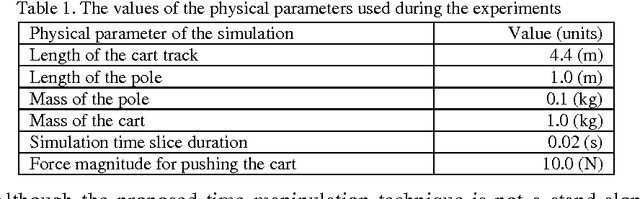

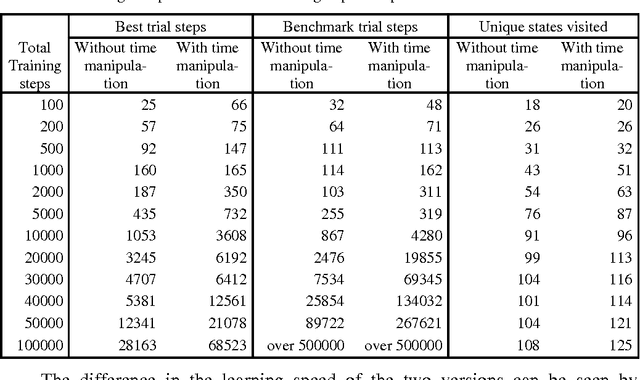

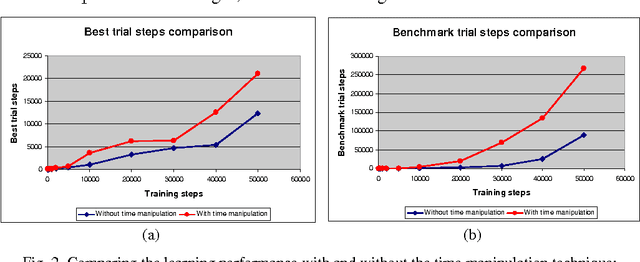

Time manipulation technique for speeding up reinforcement learning in simulations

Mar 28, 2009

Abstract: A technique for speeding up reinforcement learning algorithms by manipulating simulation time is proposed. It applies to failure-avoidance control problems run in a computer simulation. On the cart-pole balancing task, turning the simulation time backwards on failure events is shown to speed up learning by 260% and improve state-space exploration by 12% compared to conventional Q-learning and Actor-Critic algorithms.
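
The idea of turning simulation time backwards on failure can be sketched as follows. This is a hedged illustration, not the method from the paper: `ToyBalanceSim` is a hypothetical one-dimensional stand-in for a failure-avoidance task (not cart-pole), and the choice to rewind to the oldest of the last `rewind` snapshots, the reward of -1 on failure, and all hyperparameters are assumptions.

```python
import random
from collections import deque

ACTIONS = (-1, 1)


class ToyBalanceSim:
    """Hypothetical failure-avoidance task: keep |pos| <= limit."""

    def __init__(self, limit=3):
        self.limit = limit
        self.pos = 0

    def step(self, action):
        # Control input plus a random disturbance.
        self.pos += action + random.choice((-1, 0, 1))
        failed = abs(self.pos) > self.limit
        return self.pos, (-1.0 if failed else 0.0), failed

    def save(self):
        return self.pos  # the full simulator state is just the position

    def restore(self, snapshot):
        self.pos = snapshot


def train(steps=2000, rewind=5, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    """Tabular Q-learning that rewinds the simulation instead of resetting it."""
    random.seed(seed)
    sim = ToyBalanceSim()
    q = {}                          # (state, action) -> value
    history = deque(maxlen=rewind)  # snapshots of recent non-failed states
    state, failures = sim.pos, 0
    for _ in range(steps):
        history.append(sim.save())
        if random.random() < epsilon:
            action = random.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=lambda a: q.get((state, a), 0.0))
        next_state, reward, failed = sim.step(action)
        future = 0.0 if failed else max(q.get((next_state, a), 0.0) for a in ACTIONS)
        old = q.get((state, action), 0.0)
        q[(state, action)] = old + alpha * (reward + gamma * future - old)
        if failed:
            failures += 1
            sim.restore(history[0])  # turn time back a few steps, not to t=0
            history.clear()
            state = sim.pos
        else:
            state = next_state
    return q, failures


q_table, failures = train()
```

Rewinding to a state shortly before the failure keeps the agent near the region where its decisions matter most, rather than replaying the easy early part of each episode after a full reset.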

* 12 pages
