Kimon P. Valavanis

Reinforcement Learning Based Prediction of PID Controller Gains for Quadrotor UAVs

Feb 06, 2025

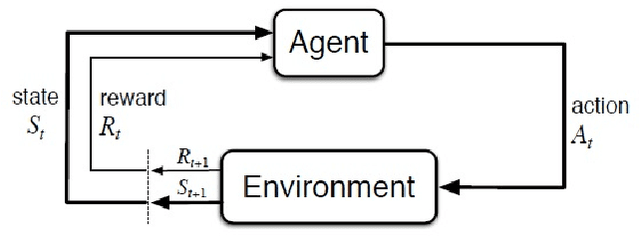

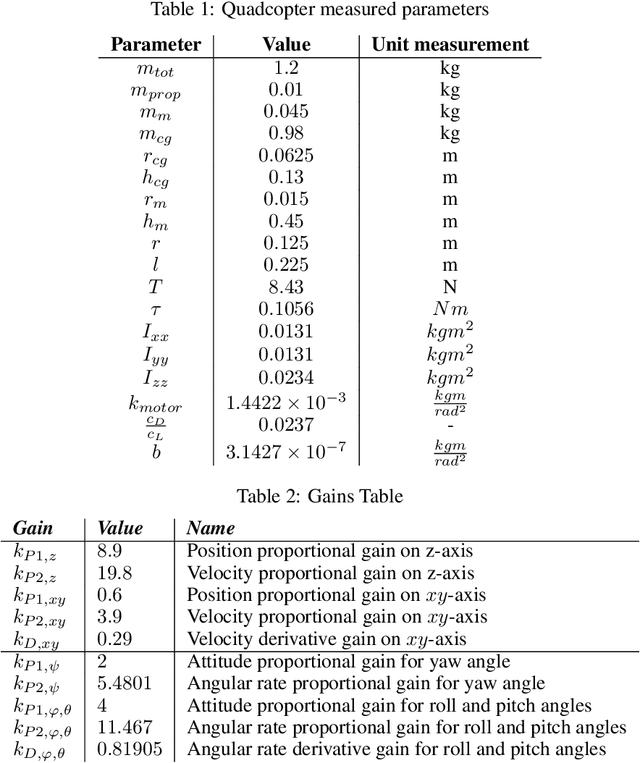

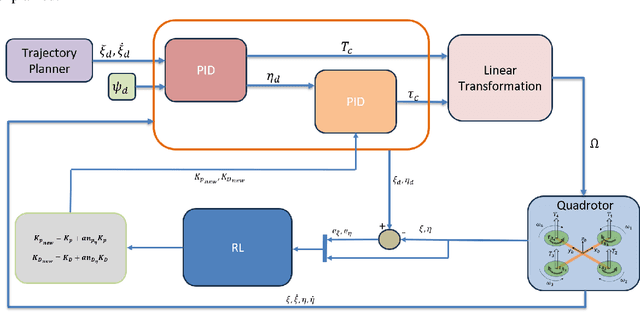

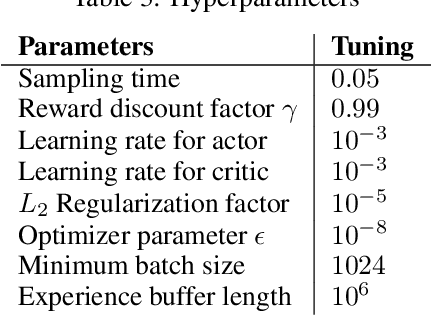

Abstract: A reinforcement learning (RL) based methodology is proposed and implemented for online fine-tuning of PID controller gains, thereby improving the effectiveness and accuracy of quadrotor trajectory tracking. The RL agent is first trained offline on a quadrotor PID attitude controller and then validated through simulations and experimental flights. The RL agent uses the Deep Deterministic Policy Gradient (DDPG) algorithm, an off-policy actor-critic method. Training and simulation studies are performed using MATLAB/Simulink and the UAV Toolbox Support Package for PX4 Autopilots. Performance evaluation and comparison studies are performed between the hand-tuned and RL-tuned approaches. The results show that the RL-based approach adjusts the controller parameters during flight and achieves the smallest attitude errors, significantly improving attitude tracking performance compared with the hand-tuned approach.
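The core idea of online gain tuning can be illustrated with a PID loop whose gains may be replaced at every control step by an external policy. The sketch below is a minimal illustration under stated assumptions, not the authors' implementation: the single-axis double-integrator plant, the `(error, rate)` observation, the `GainPolicy` signature and the `fixed_policy` stand-in are all hypothetical. In a DDPG setup, a trained actor (a deterministic policy producing continuous actions) would take the place of `fixed_policy`.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Gains = Tuple[float, float, float]  # (kp, ki, kd)
# Hypothetical policy signature: (error, rate) -> new gains.
GainPolicy = Callable[[float, float], Gains]


@dataclass
class PID:
    """Discrete-time PID controller whose gains can be changed between steps."""
    kp: float
    ki: float
    kd: float
    dt: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def set_gains(self, gains: Gains) -> None:
        self.kp, self.ki, self.kd = gains

    def step(self, error: float) -> float:
        self.integral += error * self.dt
        # Skip the derivative on the first sample to avoid a derivative kick.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(pid: PID, policy: GainPolicy, setpoint: float = 0.2,
             steps: int = 500, inertia: float = 0.01) -> List[float]:
    """Track a constant attitude setpoint (rad) on a toy single-axis model."""
    angle, rate = 0.0, 0.0
    abs_errors = []
    for _ in range(steps):
        error = setpoint - angle
        # Online tuning: the policy may return new gains at every step.
        pid.set_gains(policy(error, rate))
        torque = pid.step(error)
        # Toy rigid-body axis (double integrator), semi-implicit Euler update.
        rate += torque / inertia * pid.dt
        angle += rate * pid.dt
        abs_errors.append(abs(error))
    return abs_errors


# Hypothetical stand-in for a trained actor: fixed "hand-tuned" gains.
def fixed_policy(error: float, rate: float) -> Gains:
    return (0.5, 0.1, 0.1)


errors = simulate(PID(kp=0.5, ki=0.1, kd=0.1, dt=0.01), fixed_policy)
print(f"initial |error| = {errors[0]:.3f} rad, final |error| = {errors[-1]:.4f} rad")
```

Keeping the gain update separate from the PID law means the controller structure stays unchanged. The learned policy only decides which gains to use at each step. The choice of observation, reward and gain bounds for an actual DDPG agent is left open here and would need to follow the paper.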

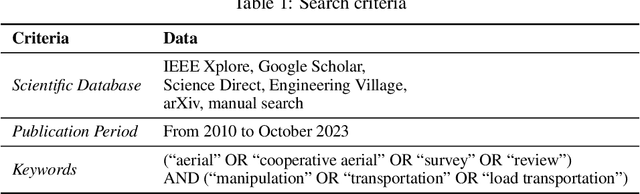

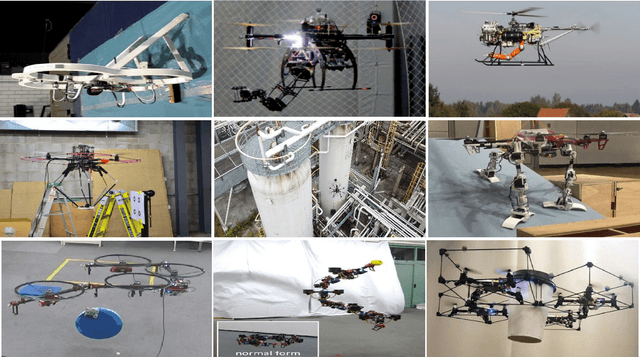

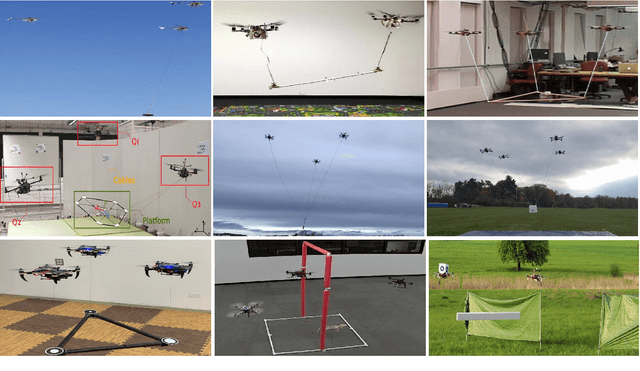

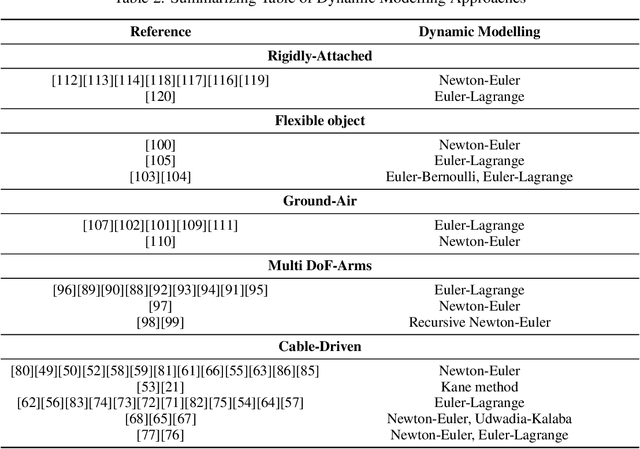

A Comparative Study of Real-Time Implementable Cooperative Aerial Manipulation Systems

Mar 21, 2024

Abstract: This survey paper focuses on quadrotor- and multirotor-based cooperative aerial manipulation. Emphasis is first placed on comparing and evaluating prototype systems that have been implemented and tested in real time in diverse application environments. The underlying modeling and control approaches are also discussed and compared. The survey clarifies the motivation and rationale for developing such systems, their applicability and implementability in diverse applications, and the challenges that still need to be addressed and overcome. Moreover, the survey provides a guide for developing the next generation of prototype systems based on preferred characteristics, functionality, operability and application domain.

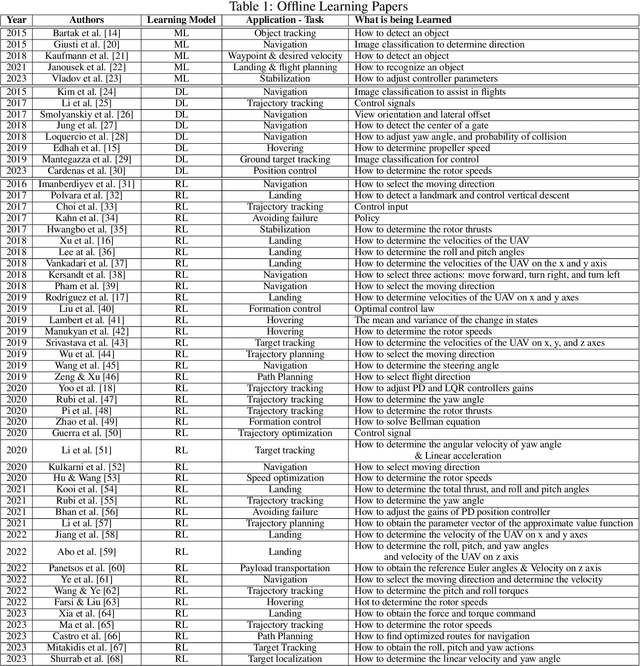

A Survey of Offline and Online Learning-Based Algorithms for Multirotor UAVs

Feb 06, 2024

Abstract: Multirotor UAVs are used in a wide spectrum of civilian and public-domain applications. Navigation controllers endowed with different attributes and onboard sensor suites enable autonomous or semi-autonomous, safe multirotor flight, operation, and functionality under nominal and adverse conditions and external disturbances, even when flying in uncertain and dynamically changing environments. During the last decade, given the faster-than-exponential increase in available computational power, different learning-based algorithms have been derived, implemented, and tested to navigate and control, among other systems, multirotor UAVs. Learning algorithms have been, and continue to be, used to derive data-driven models, identify parameters, track objects, develop navigation controllers, and learn the environment in which multirotors operate. Learning algorithms combined with model-based control techniques have proven beneficial when applied to multirotors. This survey summarizes research published since 2015, dividing algorithms, techniques, and methodologies into offline and online learning categories, and then further classifying them into machine learning, deep learning, and reinforcement learning sub-categories. A central focus of this survey is online learning algorithms applied to multirotors, with the aim of cataloging the learning techniques that are hard or almost hard real-time implementable, and of understanding what information is learned, why, how, and how fast. The survey offers a clear understanding of the recent state of the art and of the kinds of learning-based algorithms that may be implemented, tested, and executed in real time.