Ki Hwan Oh

Recognizing Intent in Collaborative Manipulation

Aug 17, 2023

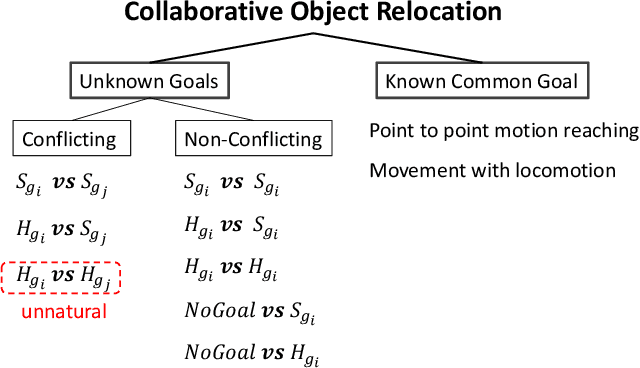

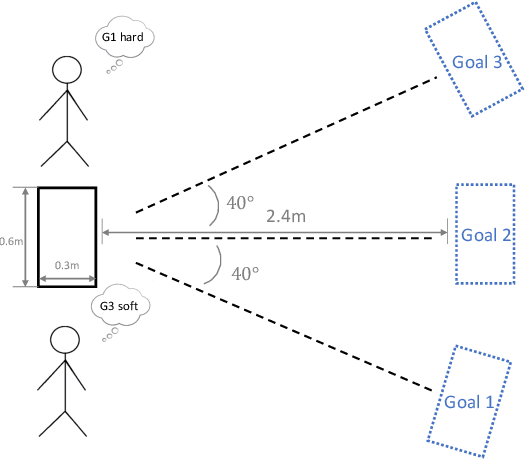

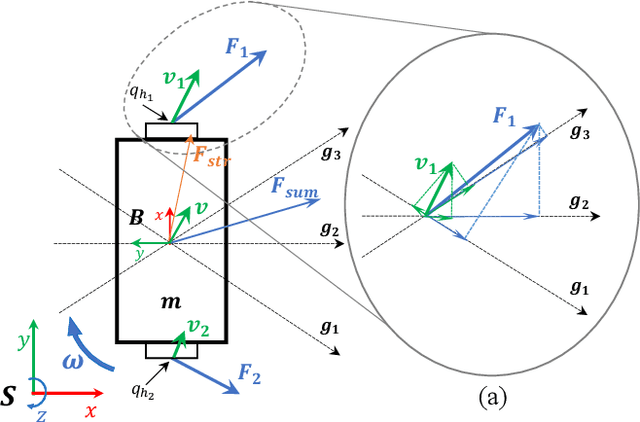

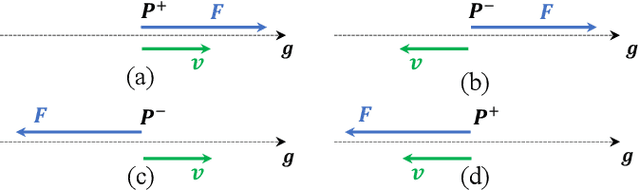

Abstract: Collaborative manipulation is inherently multimodal, with haptic communication playing a central role. When performed by humans, it involves back-and-forth force exchanges between the participants, through which they resolve possible conflicts and determine their roles. Much of the existing work on collaborative human-robot manipulation assumes that the robot follows the human. But for a robot to match the performance of a human partner, it needs to be able to take initiative and lead when appropriate. To achieve such human-like performance, the robot needs the ability to (1) determine the intent of the human, (2) clearly express its own intent, and (3) choose its actions so that the dyad reaches consensus. This work proposes a framework for recognizing human intent in collaborative manipulation tasks using force exchanges. Grounded in a dataset collected during a human study, we introduce a set of features that can be computed from the measured signals and report the results of a classifier trained on our collected human-human interaction data. Two metrics are used to evaluate the intent recognizer: overall accuracy and the ability to correctly identify transitions. The proposed recognizer is robust to variations in the partner's actions and to the confounding effects of variable grasp forces and the dynamics of walking. The results demonstrate that the proposed recognizer is well-suited for implementation in a physical interaction control scheme.
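The two evaluation metrics named in the abstract can be illustrated with a minimal sketch. The abstract does not define how either metric is computed, so everything here is an assumption for illustration: the per-sample label sequences, the hypothetical intent labels `"follow"` and `"lead"`, the helper names, and the `tolerance` window used to decide whether a predicted transition matches a true one.

```python
from typing import List, Sequence, Tuple


def overall_accuracy(true_labels: Sequence[str], predicted: Sequence[str]) -> float:
    """Fraction of samples whose predicted intent equals the true intent."""
    if len(true_labels) != len(predicted):
        raise ValueError("label sequences must have the same length")
    if not true_labels:
        raise ValueError("label sequences must not be empty")
    correct = sum(t == p for t, p in zip(true_labels, predicted))
    return correct / len(true_labels)


def transitions(labels: Sequence[str]) -> List[Tuple[int, str]]:
    """Return (index, new_label) for every sample where the label changes."""
    return [
        (i, labels[i])
        for i in range(1, len(labels))
        if labels[i] != labels[i - 1]
    ]


def transition_recall(
    true_labels: Sequence[str],
    predicted: Sequence[str],
    tolerance: int = 2,  # assumed matching window, in samples
) -> float:
    """Fraction of true transitions matched by a predicted transition to the
    same label within `tolerance` samples. Each predicted transition can be
    matched at most once."""
    true_tr = transitions(true_labels)
    if not true_tr:
        return 1.0  # nothing to detect
    pred_tr = transitions(predicted)
    used = set()
    hits = 0
    for idx, label in true_tr:
        for j, (p_idx, p_label) in enumerate(pred_tr):
            if j not in used and p_label == label and abs(p_idx - idx) <= tolerance:
                used.add(j)
                hits += 1
                break
    return hits / len(true_tr)


# Hypothetical label sequences, one label per time step.
true_seq = ["follow"] * 4 + ["lead"] * 4 + ["follow"] * 2
pred_seq = ["follow"] * 5 + ["lead"] * 5

accuracy = overall_accuracy(true_seq, pred_seq)
recall = transition_recall(true_seq, pred_seq, tolerance=2)
```

In this toy sequence the recognizer detects the switch to `"lead"` one sample late and misses the switch back to `"follow"`, which shows why a transition-focused metric can tell a different story than per-sample accuracy alone.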

Robots Taking Initiative in Collaborative Object Manipulation: Lessons from Physical Human-Human Interaction

Apr 24, 2023

Abstract: Physical Human-Human Interaction (pHHI) involves the use of multiple sensory modalities. Studies of communication through spoken utterances and gestures are well established. Nevertheless, communication through force signals is not well understood. In this paper, we investigate the mechanisms humans employ when negotiating through force signals, which is an integral part of successful collaboration. Our objective is to use these insights to inform the design of controllers for robot assistants. Specifically, we want to enable robots to take the lead in collaboration. To achieve this goal, we conducted a study to observe how humans behave during collaborative manipulation tasks. During our preliminary data analysis, we discovered several new features that help us better understand how the interaction progresses. From these features, we identified distinct patterns in the data that indicate when a participant is expressing their intent. Our study provides valuable insight into how humans collaborate physically, which can help us design robots that behave more like humans in such scenarios.
