Ketan Anand

Classifying Simulated Gait Impairments using Privacy-preserving Explainable Artificial Intelligence and Mobile Phone Videos

Dec 02, 2024

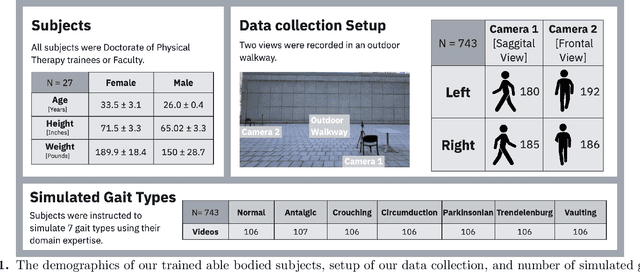

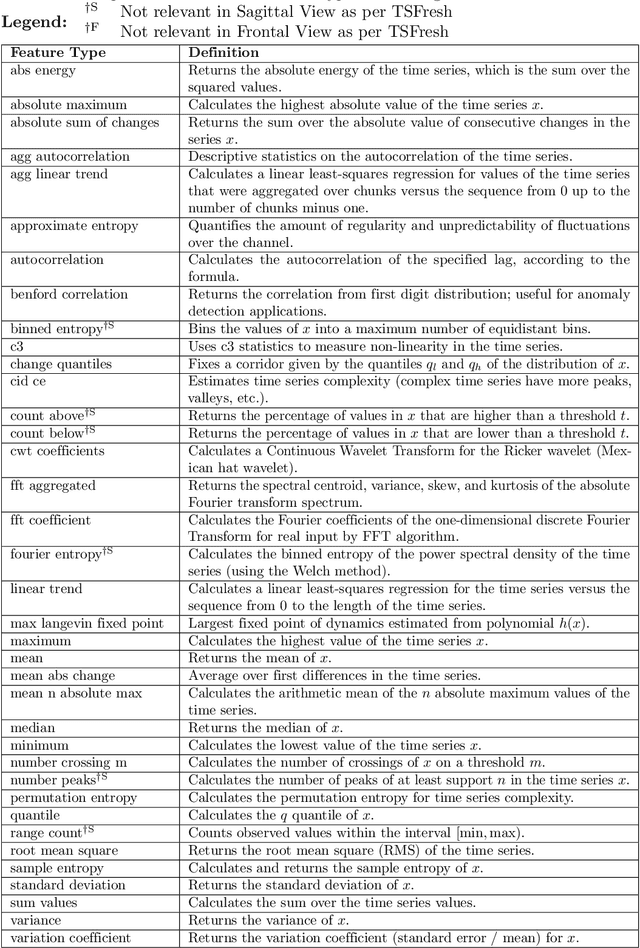

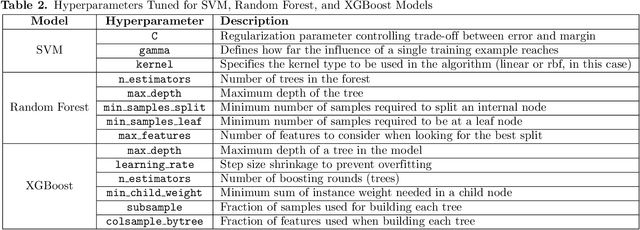

Abstract: Accurate diagnosis of gait impairments is often hindered by assessment methods that are either costly, requiring expensive multi-camera equipment, or subjective, relying on clinical observation. There is a critical need for accessible, objective tools that can aid in gait assessment while preserving patient privacy. In this work, we present a mobile phone-based, privacy-preserving artificial intelligence (AI) system for classifying gait impairments and introduce a novel dataset of 743 videos capturing seven distinct gait patterns. The dataset consists of frontal and sagittal views of trained subjects simulating normal gait and six types of pathological gait (circumduction, Trendelenburg, antalgic, crouch, Parkinsonian, and vaulting), recorded using standard mobile phone cameras. Our system achieved 86.5% accuracy using combined frontal and sagittal views, with sagittal views generally outperforming frontal views except for specific gait patterns such as circumduction. Feature importance analysis revealed that frequency-domain features and entropy measures were critical for classification performance, and that lower limb keypoints were the most important, aligning with clinical understanding of gait assessment. These findings demonstrate that mobile phone-based systems can effectively classify diverse gait patterns while preserving privacy through on-device processing. The high accuracy achieved using simulated gait data suggests the potential of such data for rapid prototyping of gait analysis systems, though clinical validation with patient data remains necessary. This work represents a significant step toward accessible, objective gait assessment tools for clinical, community, and tele-rehabilitation settings.
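
To make the "frequency-domain features and entropy measures" mentioned in the abstract concrete, here is a minimal sketch of how two such features could be computed from a single keypoint trajectory. It is an illustration only, not the authors' pipeline: the function name `spectral_features`, the 30 fps frame rate, and the synthetic ankle signal are all assumptions, and the abstract does not specify which exact features were used.

```python
import numpy as np

def spectral_features(signal, fps):
    """Return (dominant frequency in Hz, normalized spectral entropy)
    for a 1-D keypoint trajectory sampled at `fps` frames per second."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                          # remove the constant offset
    power = np.abs(np.fft.rfft(x)) ** 2       # one-sided power spectrum
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fps)
    power, freqs = power[1:], freqs[1:]       # drop the zero-frequency bin
    total = power.sum()
    if total == 0:                            # flat signal: no spectral content
        return 0.0, 0.0
    p = power / total                         # spectrum as a probability distribution
    nz = p[p > 0]
    # Shannon entropy of the spectrum, scaled to [0, 1] by the maximum log2(N)
    entropy = -(nz * np.log2(nz)).sum() / np.log2(p.size)
    return float(freqs[np.argmax(power)]), float(entropy)

# Hypothetical example: vertical ankle position oscillating once per second
fps = 30                                      # assumed phone frame rate
t = np.arange(300) / fps                      # 10 seconds of frames
ankle_y = np.sin(2 * np.pi * 1.0 * t)
dominant_hz, entropy = spectral_features(ankle_y, fps)
```

A regular, periodic trajectory concentrates its power in one frequency bin and so yields low spectral entropy, whereas an irregular one spreads power across many bins and yields a value closer to 1.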

Towards Precision in Appearance-based Gaze Estimation in the Wild

Feb 14, 2023

Abstract: Appearance-based gaze estimation systems have shown great progress recently, yet the performance of these techniques depends on the datasets used for training. Most existing gaze estimation datasets set up in interactive settings were recorded in laboratory conditions, and those recorded in the wild display limited head pose and illumination variations. Further, precision evaluation of existing gaze estimation approaches has received little attention so far. In this work, we present a large gaze estimation dataset, PARKS-Gaze, with wider head pose and illumination variation and with multiple samples for a single Point of Gaze (PoG). The dataset contains 974 minutes of data from 28 participants with a head pose range of 60 degrees in both yaw and pitch directions. Our within-dataset, cross-dataset, and precision evaluations indicate that the proposed dataset is more challenging and enables models to generalize to unseen participants better than the existing in-the-wild datasets. The project page can be accessed here: https://github.com/lrdmurthy/PARKS-Gaze
