Keshav Bansal

Shapes of Emotions: Multimodal Emotion Recognition in Conversations via Emotion Shifts

Dec 03, 2021

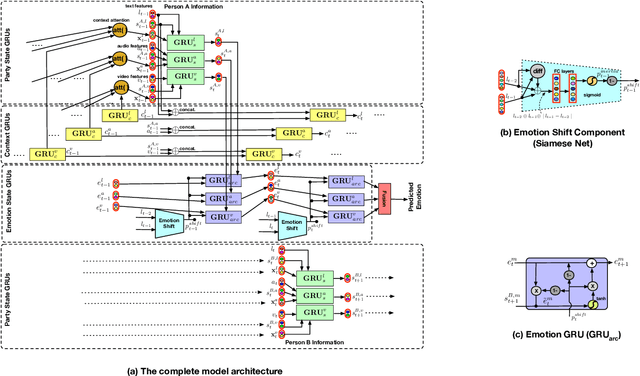

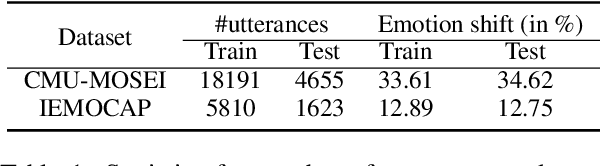

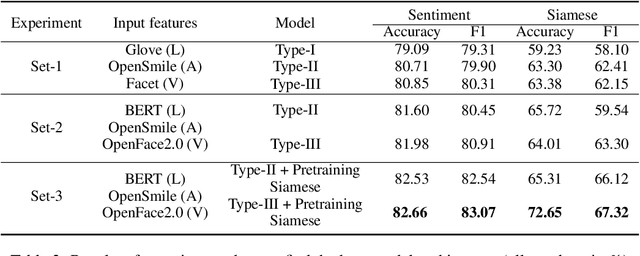

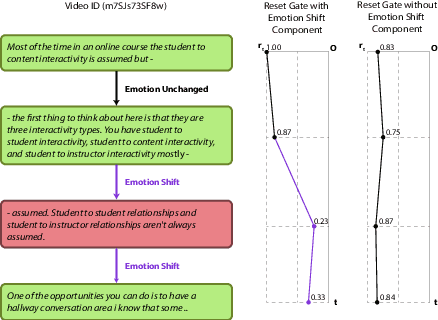

Abstract:Emotion Recognition in Conversations (ERC) is an important and active research problem. Recent work has shown the benefits of using multiple modalities (e.g., text, audio, and video) for the ERC task. In a conversation, participants tend to maintain a particular emotional state unless some external stimuli evokes a change. There is a continuous ebb and flow of emotions in a conversation. Inspired by this observation, we propose a multimodal ERC model and augment it with an emotion-shift component. The proposed emotion-shift component is modular and can be added to any existing multimodal ERC model (with a few modifications), to improve emotion recognition. We experiment with different variants of the model, and results show that the inclusion of emotion shift signal helps the model to outperform existing multimodal models for ERC and hence showing the state-of-the-art performance on MOSEI and IEMOCAP datasets.

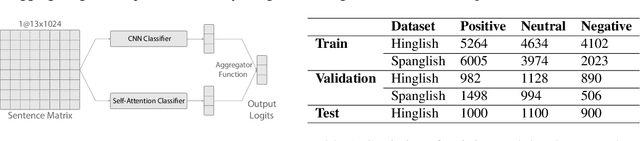

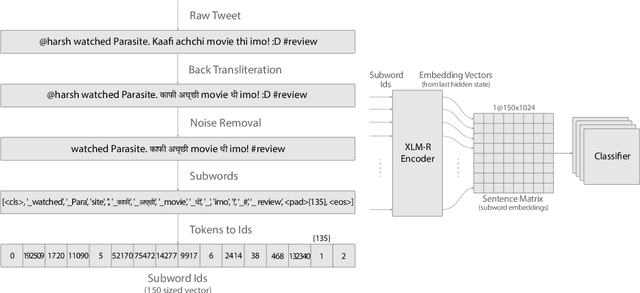

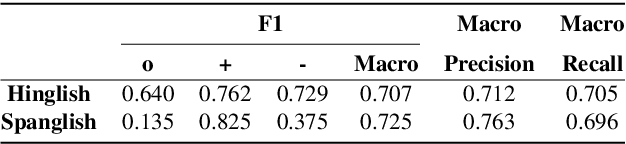

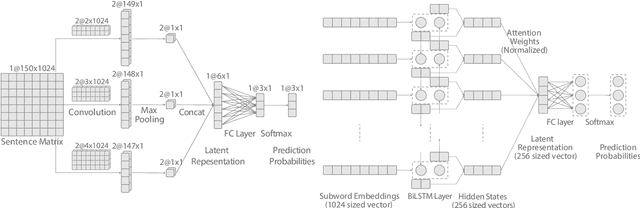

BAKSA at SemEval-2020 Task 9: Bolstering CNN with Self-Attention for Sentiment Analysis of Code Mixed Text

Jul 21, 2020

Abstract:Sentiment Analysis of code-mixed text has diversified applications in opinion mining ranging from tagging user reviews to identifying social or political sentiments of a sub-population. In this paper, we present an ensemble architecture of convolutional neural net (CNN) and self-attention based LSTM for sentiment analysis of code-mixed tweets. While the CNN component helps in the classification of positive and negative tweets, the self-attention based LSTM, helps in the classification of neutral tweets, because of its ability to identify correct sentiment among multiple sentiment bearing units. We achieved F1 scores of 0.707 (ranked 5th) and 0.725 (ranked 13th) on Hindi-English (Hinglish) and Spanish-English (Spanglish) datasets, respectively. The submissions for Hinglish and Spanglish tasks were made under the usernames ayushk and harsh_6 respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge