Keigo Hirakawa

EBS-EKF: Accurate and High Frequency Event-based Star Tracking

Mar 25, 2025

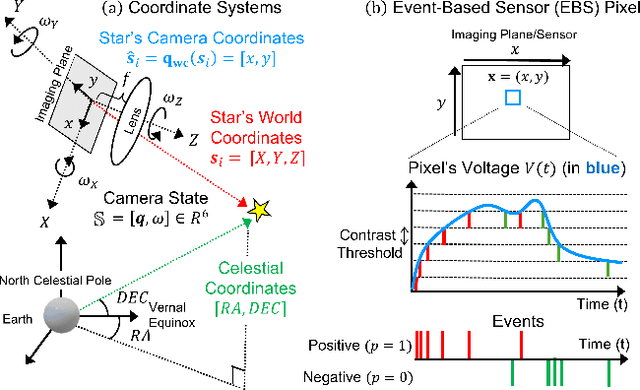

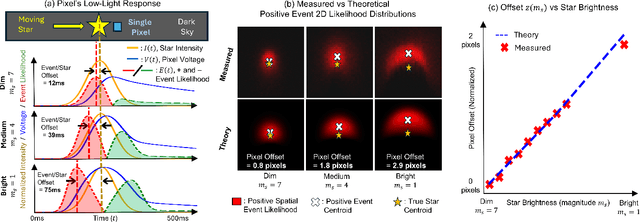

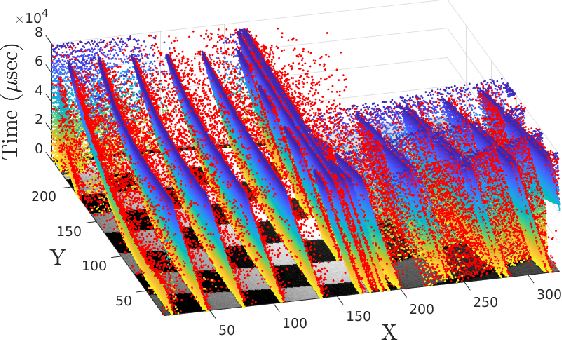

Abstract: Event-based sensors (EBS) are a promising new technology for star tracking due to their low latency and power efficiency, but prior work has so far been evaluated exclusively in simulation with simplified signal models. We propose a novel algorithm for event-based star tracking, grounded in an analysis of the EBS circuit and an extended Kalman filter (EKF). We quantitatively evaluate our method using real night sky data, comparing its results with those from a space-ready active-pixel sensor (APS) star tracker. We demonstrate that our method is an order of magnitude more accurate than existing methods due to improved signal modeling and state estimation, while providing more frequent updates and greater motion tolerance than conventional APS trackers. We provide all code and the first dataset of events synchronized with APS solutions.

Sign-Coded Exposure Sensing for Noise-Robust High-Speed Imaging

May 05, 2023

Abstract: We present a novel Fourier camera that performs in-hardware optical compression of high-speed frames using pixel-level sign-coded exposure, in which pixel intensities temporally modulated as positive and negative exposures are combined to yield Hadamard coefficients. The orthogonality of Walsh functions ensures that the noise is not amplified during high-speed frame reconstruction, making it a much more attractive option for coded exposure systems aimed at very high frame rate operation. Frame reconstruction is carried out by a single-pass demosaicking of the spatially multiplexed Walsh functions in a lattice arrangement, significantly reducing the computational complexity. The simulation prototype confirms the improved robustness to noise compared to binary-coded exposure patterns, such as one-hot encoding and pseudo-random encoding. Our hardware prototype demonstrated the reconstruction of 4 kHz frames of a moving scene lit by ambient light only.
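The orthogonality argument in this abstract can be illustrated with a small sketch. It assumes a single pixel, four time slots, and a Sylvester-ordered Hadamard matrix standing in for the Walsh codes; the function names (`hadamard`, `encode`, `decode`, `noise_gain`) are hypothetical and this is not the paper's hardware pipeline or its lattice demosaicking.

```python
def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n must be a power of two)."""
    h = [[1]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-v for v in row] for row in h]
    return h

def encode(frames, codes):
    """Each measurement is a signed (+/-) sum of one pixel's intensity over the time slots."""
    return [sum(c * f for c, f in zip(code, frames)) for code in codes]

def decode(measurements, codes):
    """Invert with the transpose: H^T H = n I, so frames = H^T m / n."""
    n = len(codes)
    return [sum(codes[k][t] * measurements[k] for k in range(n)) / n
            for t in range(n)]

def noise_gain(codes):
    """Variance gain of decoding for independent, equal-variance measurement noise."""
    n = len(codes)
    return sum((codes[k][0] / n) ** 2 for k in range(n))

frames = [10.0, 12.0, 7.0, 3.0]   # assumed intensities of one pixel over 4 time slots
H = hadamard(4)
measurements = encode(frames, H)
recovered = decode(measurements, H)
```

Because the rows are orthogonal, decoding is a transpose and a division by n, and independent noise of variance σ² on each measurement contributes σ²/n to each recovered frame rather than being amplified.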

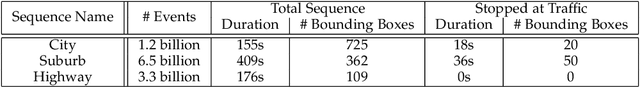

EBSnoR: Event-Based Snow Removal by Optimal Dwell Time Thresholding

Aug 22, 2022

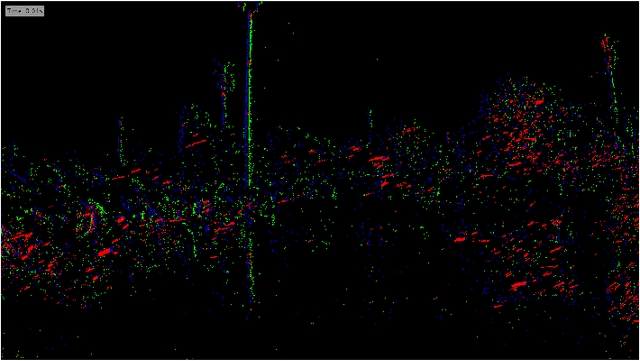

Abstract: We propose an Event-Based Snow Removal algorithm called EBSnoR. We developed a technique to measure the dwell time of snowflakes on a pixel using event-based camera data, which is used to carry out a Neyman-Pearson hypothesis test that partitions the event stream into snowflake and background events. The effectiveness of the proposed EBSnoR was verified on a new dataset called UDayton22EBSnow, comprising front-facing event-based camera data from a car driving through snow, with manually annotated bounding boxes around surrounding vehicles. Qualitatively, EBSnoR correctly identifies events corresponding to snowflakes; quantitatively, EBSnoR-preprocessed event data improved the performance of event-based car detection algorithms.
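A rough sketch of thresholding on dwell time follows. It rests on several assumptions that the abstract does not state: that each event already carries a dwell-time estimate, that snowflakes dwell on a pixel for a shorter time than background objects, and that the threshold is picked from labeled background samples so that the false-alarm rate stays at or below a chosen `alpha`. The helper names are hypothetical, and the paper's actual dwell-time measurement and likelihood model are not reproduced here.

```python
def threshold_for_false_alarm(background_dwell, alpha):
    """Pick a threshold so that at most a fraction alpha of the background
    samples fall strictly below it (requires 0 <= alpha < 1)."""
    ordered = sorted(background_dwell)
    k = int(alpha * len(ordered))  # background samples allowed below the threshold
    return ordered[k]

def split_events(events, threshold):
    """Partition (event_id, dwell_time) pairs: short dwell -> snow, else background."""
    snow = [e for e in events if e[1] < threshold]
    background = [e for e in events if e[1] >= threshold]
    return snow, background

# Assumed dwell times (arbitrary units) observed on known background pixels.
background_samples = [5, 8, 10, 12, 15, 20, 25, 30, 40, 50]
threshold = threshold_for_false_alarm(background_samples, alpha=0.1)

events = [("a", 2.0), ("b", 9.0), ("c", 7.9)]
snow, background = split_events(events, threshold)
```

Fixing the false-alarm rate first and then accepting whatever detection rate results is the Neyman-Pearson style of test; a full treatment would compare likelihoods under both hypotheses rather than use an empirical quantile.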

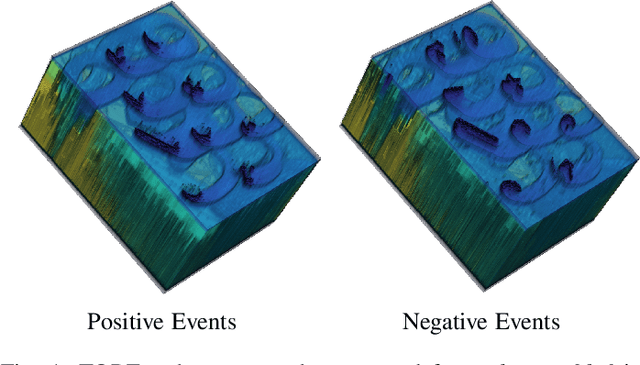

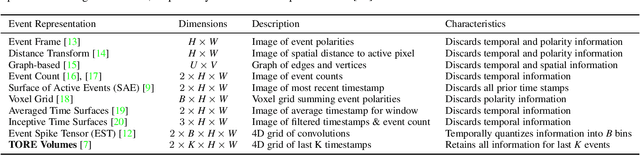

Time-Ordered Recent Event Volumes for Event Cameras

Mar 10, 2021

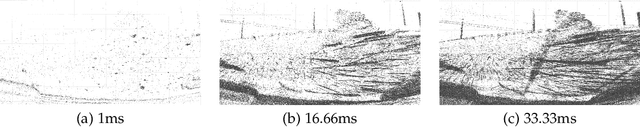

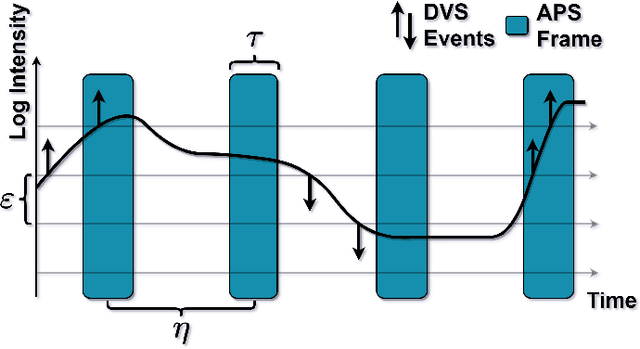

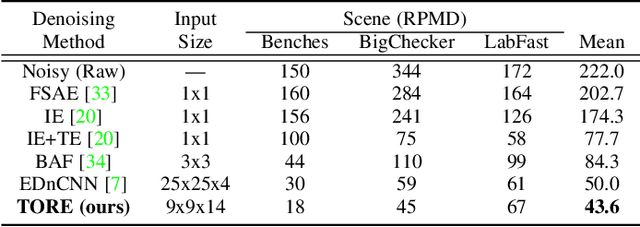

Abstract: Event cameras are an exciting new sensor modality enabling high-speed imaging with extremely low latency and wide dynamic range. Unfortunately, most machine learning architectures are not designed to directly handle sparse data, such as that generated by event cameras. Many state-of-the-art algorithms for event cameras rely on interpolated event representations, which obscures crucial timing information, increases the data volume, and limits overall network performance. This paper details an event representation called Time-Ordered Recent Event (TORE) volumes. TORE volumes are designed to compactly store raw spike timing information with minimal information loss. This bio-inspired design is memory efficient, computationally fast, avoids time-blocking (i.e., fixed and predefined frame rates), and contains "local memory" from past data. The design is evaluated on a wide range of challenging tasks (e.g., event denoising, image reconstruction, classification, and human pose estimation) and is shown to dramatically improve state-of-the-art performance. TORE volumes are an easy-to-implement replacement for any algorithm currently utilizing event representations.
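The idea of keeping recent spike timing per pixel can be sketched as a small first-in, first-out store. This is a minimal illustration that assumes a fixed depth `k` per pixel and polarity and reports raw event ages on query; the class name `RecentEventStore` and its methods are hypothetical, and any value transform the paper applies when building the volume is omitted.

```python
from collections import defaultdict, deque

class RecentEventStore:
    """Keeps the k most recent event timestamps per (x, y, polarity)."""

    def __init__(self, k=3):
        self.k = k
        # deque(maxlen=k) silently drops the oldest entry once k are stored.
        self.fifo = defaultdict(lambda: deque(maxlen=k))

    def add(self, x, y, polarity, t):
        """Record an event; the newest timestamp sits at index 0."""
        self.fifo[(x, y, polarity)].appendleft(t)

    def ages(self, x, y, polarity, now):
        """Time elapsed since each stored event, newest first. Can be queried at any time."""
        stored = self.fifo.get((x, y, polarity), ())
        return [now - t for t in stored]

store = RecentEventStore(k=3)
for t in [1.0, 2.0, 3.0, 4.0]:
    store.add(5, 7, 1, t)

recent = store.ages(5, 7, 1, now=10.0)
empty = store.ages(0, 0, 1, now=10.0)
```

Because the store is queried at an arbitrary `now` rather than at fixed frame boundaries, there is no time-blocking, and older events that remain in the queue act as the "local memory" mentioned in the abstract.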

Lossless White Balance For Improved Lossless CFA Image and Video Compression

Sep 19, 2020

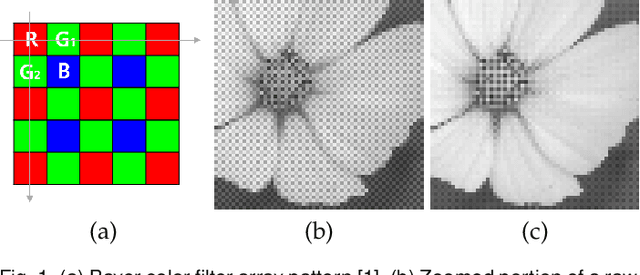

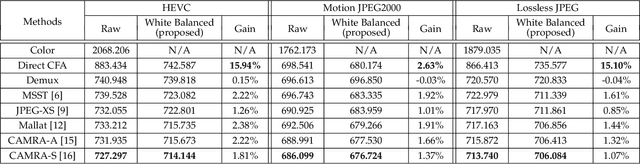

Abstract: A color filter array (CFA) is a spatial multiplexing of pixel-sized filters placed over pixel detectors in camera sensors. The state-of-the-art lossless coding techniques for raw sensor data captured by such sensors leverage spatial or cross-color correlation using lifting schemes. In this paper, we propose a lifting-based lossless white balance algorithm. When applied to the raw sensor data, the spatial bandwidth of the implied chrominance signals decreases. We propose to use this white balance as a pre-processing step to lossless CFA subsampled image/video compression, improving the overall coding efficiency of the raw sensor data.
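Lifting schemes stay lossless because any rounding happens inside a term that the decoder can recompute exactly. The sketch below shows one generic integer lifting (predict) step between two color channels, assuming a rational gain `num/den` and hypothetical helper names; it illustrates the reversibility property only and is not the white balance algorithm proposed in the paper.

```python
def lift_predict(target, reference, num, den):
    """Forward step: subtract a rounded, gain-scaled prediction from the target channel."""
    return [t - (num * r) // den for t, r in zip(target, reference)]

def lift_restore(residual, reference, num, den):
    """Inverse step: recompute the identical rounded prediction and add it back."""
    return [d + (num * r) // den for d, r in zip(residual, reference)]

# Assumed co-located raw integer samples from two CFA color channels.
green = [100, 101, 98]
red = [60, 64, 59]

residual = lift_predict(red, green, num=5, den=8)
restored = lift_restore(residual, green, num=5, den=8)
```

The reference channel is left untouched, so the decoder sees exactly the same integers as the encoder and the floor division cancels, whatever gain is chosen.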

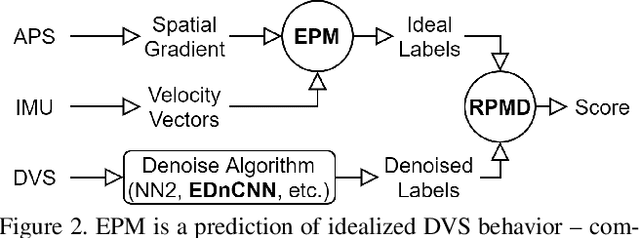

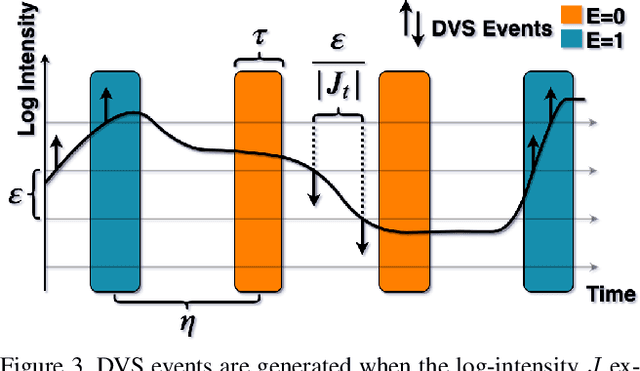

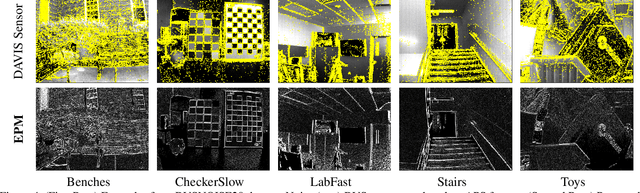

Event Probability Mask (EPM) and Event Denoising Convolutional Neural Network (EDnCNN) for Neuromorphic Cameras

Mar 23, 2020

Abstract: This paper presents a novel method for labeling real-world neuromorphic camera sensor data by calculating the likelihood of generating an event at each pixel within a short time window, which we refer to as the "event probability mask" or EPM. Its applications include (i) objective benchmarking of event denoising performance, (ii) training a convolutional neural network for noise removal, called the "event denoising convolutional neural network" (EDnCNN), and (iii) estimating internal neuromorphic camera parameters. We provide the first dataset (DVSNOISE20) of real-world labeled neuromorphic camera events for noise removal.

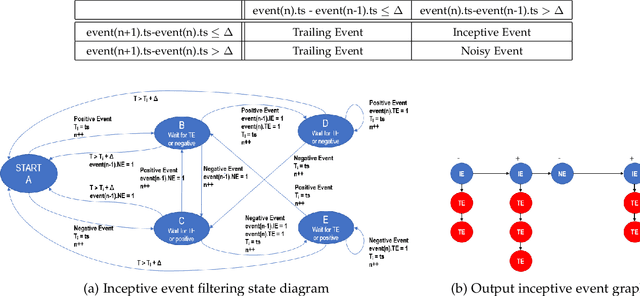

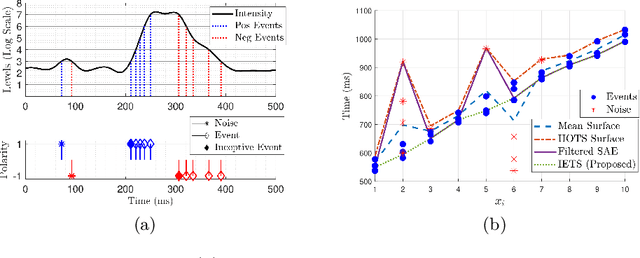

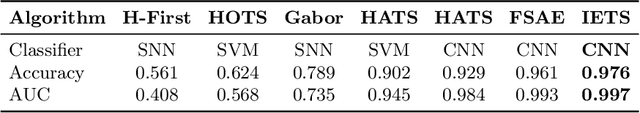

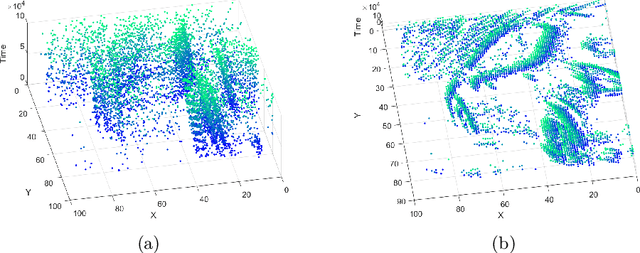

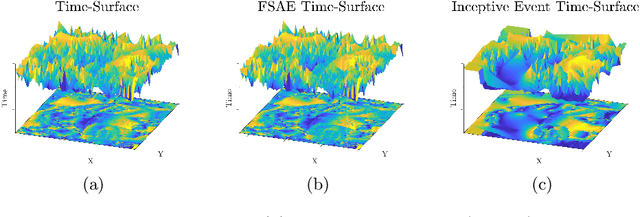

Inceptive Event Time-Surfaces for Object Classification Using Neuromorphic Cameras

Feb 26, 2020

Abstract: This paper presents a novel fusion of low-level approaches for dimensionality reduction into an effective approach for high-level objects in neuromorphic camera data called Inceptive Event Time-Surfaces (IETS). IETSs overcome several limitations of conventional time-surfaces by increasing robustness to noise, promoting spatial consistency, and improving the temporal localization of (moving) edges. Combining IETS with transfer learning improves state-of-the-art performance on the challenging problem of object classification utilizing event camera data.
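For context, the conventional time-surface that this abstract contrasts against can be sketched as an exponentially decayed map of each pixel's most recent event time. The sketch assumes events given as `(x, y, t)` tuples and a decay constant `tau`, both chosen here for illustration; it shows only the conventional baseline and does not implement the inceptive-event filtering that defines IETS.

```python
import math

def time_surface(events, width, height, now, tau):
    """Conventional time-surface: exp(-(now - t_last) / tau) per pixel, 0 if no event yet."""
    last = [[None] * width for _ in range(height)]
    for x, y, t in events:
        # Keep only the most recent timestamp per pixel, ignoring future events.
        if t <= now and (last[y][x] is None or t > last[y][x]):
            last[y][x] = t
    return [[0.0 if t is None else math.exp(-(now - t) / tau) for t in row]
            for row in last]

events = [(0, 0, 1.0), (1, 0, 3.0), (0, 0, 2.0)]
surface = time_surface(events, width=3, height=1, now=3.0, tau=1.0)
```

Since every event overwrites its pixel's timestamp, a single noise event looks the same as a real edge in this baseline, which is the kind of limitation the abstract says IETS is designed to address.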
