Katharina S. Riesterer

General Hand Guidance Framework using Microsoft HoloLens

Aug 13, 2019

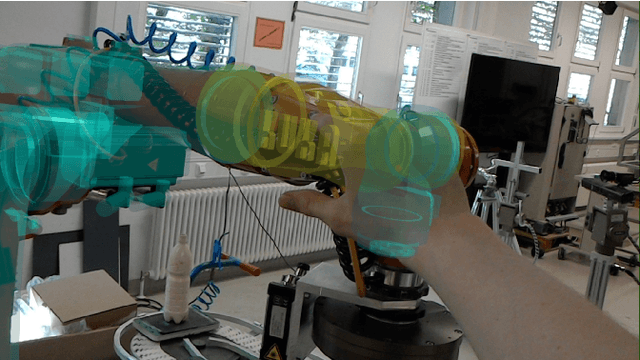

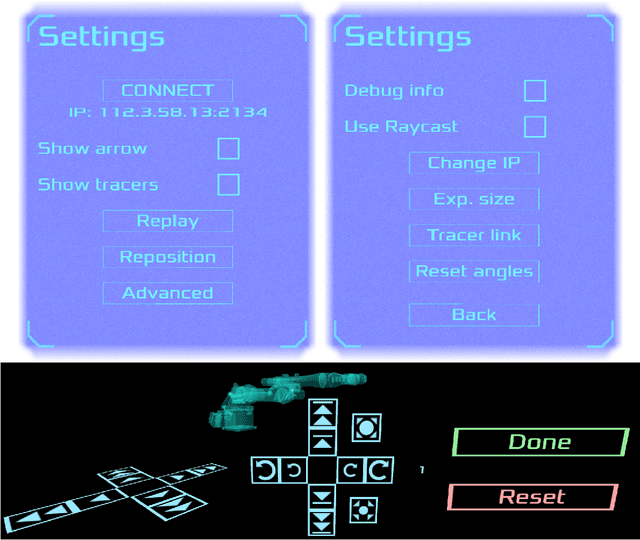

Abstract: Hand guidance emerged from the safety requirements for collaborative robots, namely possessing joint-torque sensors. Since then it has proven to be a powerful tool for easy trajectory programming, allowing lay users to reprogram robots intuitively. Going further, a robot can learn tasks from user demonstrations through kinesthetic teaching, enabling robots to generalise tasks and further reducing the need for reprogramming. However, hand guidance is still mostly relegated to collaborative robots. Here we propose a method that does not require any sensors on the robot or in the robot cell, by using a Microsoft HoloLens augmented reality head-mounted display. We reference the robot using a registration algorithm to match the robot model to the spatial mesh. The in-built hand tracking and localisation capabilities are then used to calculate the position of the hands relative to the robot. By decomposing the hand movements into orthogonal rotations and propagating them down through the kinematic chain, we achieve a generalised hand guidance without the need to build a dynamic model of the robot itself. We tested our approach on a commonly used industrial manipulator, the KUKA KR-5.
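The abstract does not give the implementation, so the following is only a minimal sketch of two of the steps it names: expressing a tracked hand position in the robot base frame, and reducing a hand movement to a rotation about a single joint axis. The 4x4 transform layout, the function names, and the use of one revolute joint are all assumptions made for illustration, not the authors' method.

```python
import math

def invert_rigid(T):
    """Invert a 4x4 rigid transform given as nested lists (assumed layout: [R | t])."""
    R = [row[:3] for row in T[:3]]
    t = [row[3] for row in T[:3]]
    Rt = [[R[j][i] for j in range(3)] for i in range(3)]  # transpose of R
    ti = [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [Rt[i] + [ti[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def transform_point(T, p):
    """Apply a 4x4 rigid transform to a 3D point."""
    return [sum(T[i][k] * p[k] for k in range(3)) + T[i][3] for i in range(3)]

def rotation_about_axis(axis_point, axis_dir, p_old, p_new):
    """Signed angle (rad) about a joint axis that carries p_old towards p_new.

    Both points are projected onto the plane perpendicular to the axis, so any
    motion along the axis is ignored (hypothetical helper for illustration).
    """
    norm = math.sqrt(sum(c * c for c in axis_dir))
    a = [c / norm for c in axis_dir]

    def project(p):
        v = [p[i] - axis_point[i] for i in range(3)]
        d = sum(v[i] * a[i] for i in range(3))
        return [v[i] - d * a[i] for i in range(3)]

    u, w = project(p_old), project(p_new)
    cross = [u[1] * w[2] - u[2] * w[1],
             u[2] * w[0] - u[0] * w[2],
             u[0] * w[1] - u[1] * w[0]]
    sin_term = sum(cross[i] * a[i] for i in range(3))
    cos_term = sum(u[i] * w[i] for i in range(3))
    return math.atan2(sin_term, cos_term)

# Assumed registration result: robot base at (1, 2, 0) in the headset's world
# frame, rotated 90 degrees about z.
T_world_robot = [[0.0, -1.0, 0.0, 1.0],
                 [1.0,  0.0, 0.0, 2.0],
                 [0.0,  0.0, 1.0, 0.0],
                 [0.0,  0.0, 0.0, 1.0]]
hand_world = [1.0, 3.0, 0.0]
hand_robot = transform_point(invert_rigid(T_world_robot), hand_world)

# Hand moves from (1, 0, 0) to (0, 1, 0.5) in the robot frame; the joint axis is z.
delta = rotation_about_axis([0.0, 0.0, 0.0], [0.0, 0.0, 1.0],
                            [1.0, 0.0, 0.0], [0.0, 1.0, 0.5])
```

In a chain of several joints, one plausible reading of "propagating down the kinematic chain" is to apply such a per-axis angle to one joint and pass the remaining movement to the next; the abstract does not specify this, so it is left out of the sketch.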

Referencing between a Head-Mounted Device and Robotic Manipulators

Apr 04, 2019

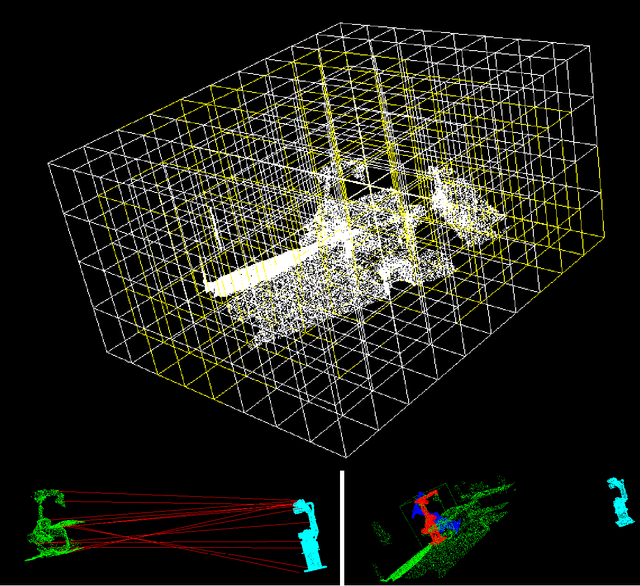

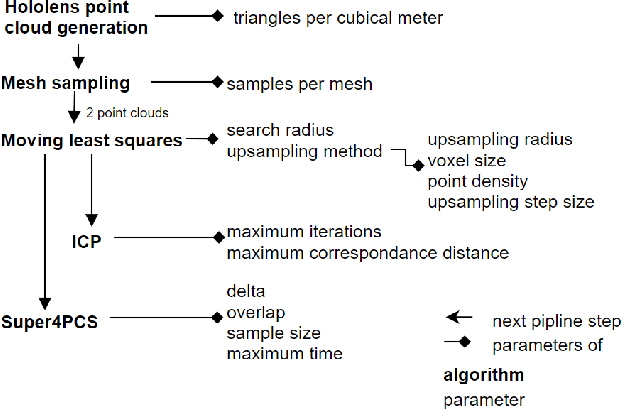

Abstract: Having a precise and robust transformation between the robot coordinate system and the AR-device coordinate system is paramount during human-robot interaction (HRI) based on augmented reality using head-mounted displays (HMD), both for intuitive information display and for the tracking of human motions. Most current solutions in this area rely either on the tracking of visual markers, e.g. QR codes, or on manual referencing, both of which provide unsatisfactory results. Meanwhile, a plethora of object detection and referencing methods exist in the wider robotic and machine vision communities. The precision of the referencing is likewise almost never measured. Here we would like to address this issue by first presenting an overview of currently used referencing methods between robots and HMDs. This is followed by a brief overview of object detection and referencing methods used in the field of robotics. Based on these methods we suggest three classes of referencing algorithms we intend to pursue: semi-automatic, one-shot; automatic, one-shot; and automatic, continuous. We describe the general workflows of these three classes as well as our proposed algorithms in each of them. Finally, we present the first experimental results of a semi-automatic referencing algorithm, tested on an industrial KUKA KR-5 manipulator.
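The abstract notes that referencing precision is rarely measured but does not say which metric the authors use. As a hedged illustration only, one common way to quantify it is the root-mean-square distance between reference points mapped through the estimated robot-to-HMD transform and the same points as observed by the HMD. The function names, the 4x4 transform layout, and the sample values below are assumptions.

```python
import math

def apply_transform(T, p):
    """Apply a 4x4 rigid transform (nested lists, assumed layout [R | t]) to a 3D point."""
    return [sum(T[i][k] * p[k] for k in range(3)) + T[i][3] for i in range(3)]

def referencing_rmse(T_hmd_robot, robot_points, hmd_points):
    """RMS distance between transformed robot-frame points and their HMD-frame observations."""
    if len(robot_points) != len(hmd_points) or not robot_points:
        raise ValueError("need equally sized, non-empty point lists")
    squared = []
    for p_robot, p_hmd in zip(robot_points, hmd_points):
        q = apply_transform(T_hmd_robot, p_robot)
        squared.append(sum((q[i] - p_hmd[i]) ** 2 for i in range(3)))
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical estimate: robot base sits 1 m along x in the HMD frame.
T_est = [[1.0, 0.0, 0.0, 1.0],
         [0.0, 1.0, 0.0, 0.0],
         [0.0, 0.0, 1.0, 0.0],
         [0.0, 0.0, 0.0, 1.0]]
robot_pts = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
hmd_pts = [[1.0, 0.0, 0.003], [2.0, 0.004, 0.0]]  # observations with 3 mm and 4 mm error
rmse = referencing_rmse(T_est, robot_pts, hmd_pts)
```

A metric of this kind applies equally to marker-based, manual, and automatic referencing, which makes it usable for comparing the three algorithm classes on the same set of reference points.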

Sensorless Hand Guidance using Microsoft Hololens

Jan 15, 2019

Abstract: Hand guidance of robots has proven to be a useful tool both for programming trajectories and in kinesthetic teaching. However, hand guidance is usually relegated to robots possessing joint-torque sensors (JTS). Here we propose to extend hand guidance to robots lacking those sensors through the use of an augmented reality (AR) device, namely Microsoft's HoloLens. Augmented reality devices have been envisioned as a helpful addition both to ease robot programming and to increase the situational awareness of humans working in close proximity to robots. We reference the robot by using a registration algorithm to match a robot model to the spatial mesh. The in-built hand tracking capabilities are then used to calculate the position of the hands relative to the robot. By decomposing the hand movements into orthogonal rotations we achieve a completely sensorless hand guidance without any need to build a dynamic model of the robot itself. We performed the first tests of our approach on a commonly used industrial manipulator, the KUKA KR-5.
