Karin Nielsen-Saines

Deep learning empowered sensor fusion to improve infant movement classification

Jun 14, 2024

Abstract: There is a recent boom in the development of AI solutions to facilitate and enhance diagnostic procedures for established clinical tools. To assess the integrity of the developing nervous system, the Prechtl general movement assessment (GMA) is recognized for its clinical value in diagnosing neurological impairments in early infancy. GMA has been increasingly augmented through machine learning approaches intended to scale up its application, circumvent costs in the training of human assessors, and further standardize the classification of spontaneous motor patterns. The performance of available deep learning tools, all of which are based on single sensor modalities, is however still considerably inferior to that of well-trained human assessors. These approaches are also hardly comparable, as all models are designed, trained and evaluated on proprietary, siloed datasets. With this study we propose a sensor fusion approach for assessing fidgety movements (FMs), comparing three different sensor modalities (pressure, inertial, and visual sensors). Various combinations and two sensor fusion approaches (late and early fusion) for infant movement classification were tested to evaluate whether a multi-sensor system outperforms single-modality assessments. The performance of the three-sensor fusion (classification accuracy of 94.5%) was significantly higher than that of any single modality evaluated, suggesting that the sensor fusion approach is a promising avenue for automated classification of infant motor patterns. The development of a robust sensor fusion system may significantly enhance AI-based early recognition of neurofunctions, ultimately facilitating automated early detection of neurodevelopmental conditions.
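The difference between the two fusion strategies named in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' model: the function names, the feature shapes, and the choice of plain averaging for late fusion are all assumptions made for illustration. Early fusion joins the per-modality features before a single classifier sees them; late fusion combines the class probabilities that separate per-modality classifiers have already produced.

```python
import numpy as np


def early_fusion(features_by_modality):
    """Early fusion (illustrative): concatenate per-modality feature
    vectors into one input vector for a single downstream classifier."""
    return np.concatenate(
        [np.asarray(f, dtype=float).ravel() for f in features_by_modality]
    )


def late_fusion(probs_by_modality, weights=None):
    """Late fusion (illustrative): average the class probabilities
    predicted separately for each modality. `weights` is a hypothetical
    optional per-modality weighting, not something the abstract specifies."""
    probs = np.asarray(probs_by_modality, dtype=float)  # (n_modalities, n_classes)
    fused = np.average(probs, axis=0, weights=weights)
    return fused / fused.sum()  # renormalise so the result sums to 1


# Hypothetical features for pressure, inertial, and visual sensors.
fused_input = early_fusion([[0.1, 0.2], [0.3], [0.4, 0.5, 0.6]])

# Hypothetical per-modality probabilities for (FM present, FM absent).
fused_probs = late_fusion([[0.6, 0.4], [0.8, 0.2], [0.4, 0.6]])
```

In this sketch `fused_input` would be fed to one classifier, whereas `fused_probs` is already a decision-level result. Which strategy works better depends on the data and models involved.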

Infant movement classification through pressure distribution analysis -- added value for research and clinical implementation

Jul 26, 2022

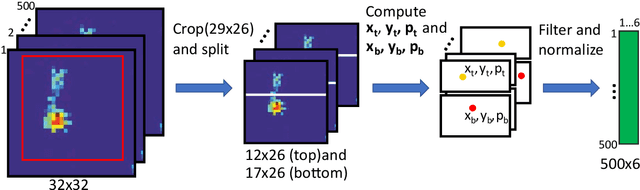

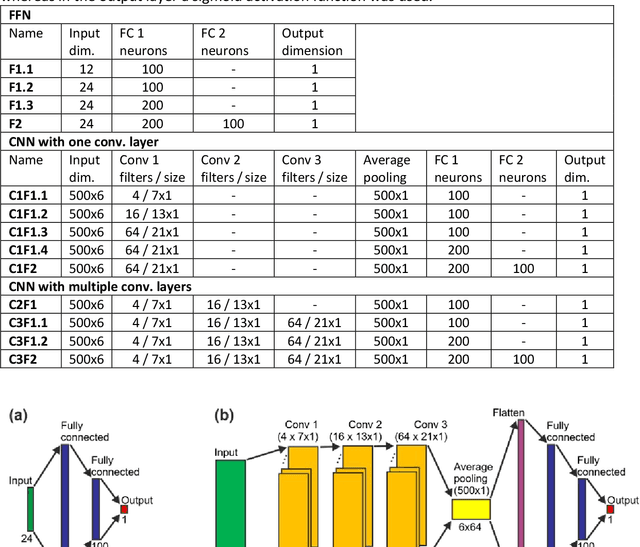

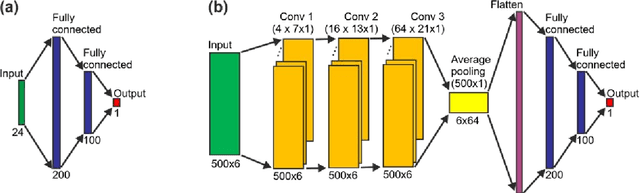

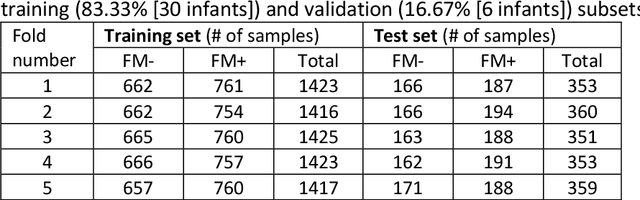

Abstract: In recent years, numerous automated approaches complementing the human Prechtl's general movements assessment (GMA) have been developed. Most approaches utilised RGB or RGB-D cameras to obtain motion data, while a few employed accelerometers or inertial measurement units. In this paper, within a prospective longitudinal infant cohort study applying a multimodal approach for movement tracking and analyses, we examined for the first time the performance of pressure sensors for classifying a specific infant general movements pattern, the fidgety movements. We developed an algorithm to encode movements with pressure data from a 32x32 grid mat with 1024 sensors. Multiple neural network architectures were investigated to distinguish presence vs. absence of the fidgety movements, including feed-forward networks (FFNs) with manually defined statistical features and convolutional neural networks (CNNs) with learned features. The CNN with multiple convolutional layers and learned features outperformed the FFN with manually defined statistical features, with classification accuracies of $81.4\%$ and $75.6\%$, respectively. We compared the pros and cons of the pressure sensing approach to the video-based and inertial motion sensor-based approaches for analysing infant movements. The non-intrusive, extremely easy-to-use pressure sensing approach has great potential for efficient large-scale movement data acquisition across sites and for application in busy daily clinical routines for evaluating infant neuromotor functions. The pressure sensors can be combined with other sensor modalities to enhance infant movement analyses in research and practice, as proposed in our multimodal sensor fusion model.
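To make the idea of "manually defined statistical features" concrete, the following is a rough sketch of how a recording from a 32x32 pressure mat might be reduced to a small feature vector. The abstract does not list the features actually used, so the ones below (total-pressure statistics and centre-of-pressure movement), the function name, and the array layout `(frames, 32, 32)` are assumptions for illustration only.

```python
import numpy as np


def pressure_features(frames):
    """Summarise a pressure recording of shape (T, H, W) with a few
    hand-crafted statistics (illustrative choice, not the paper's set)."""
    frames = np.asarray(frames, dtype=float)
    _, h, w = frames.shape
    total = frames.sum(axis=(1, 2))                 # total pressure per frame
    rows, cols = np.mgrid[0:h, 0:w]                 # sensor grid coordinates
    safe_total = np.where(total > 0, total, 1.0)    # avoid division by zero
    cop_r = (frames * rows).sum(axis=(1, 2)) / safe_total  # centre of pressure (row)
    cop_c = (frames * cols).sum(axis=(1, 2)) / safe_total  # centre of pressure (col)
    path = np.hypot(np.diff(cop_r), np.diff(cop_c)).sum()  # distance travelled
    return np.array([total.mean(), total.std(), cop_r.std(), cop_c.std(), path])


# Synthetic example: a single unit load moving across the mat.
demo = np.zeros((3, 32, 32))
demo[0, 0, 0] = 1.0
demo[1, 0, 3] = 1.0
demo[2, 4, 3] = 1.0
demo_features = pressure_features(demo)
```

A feature vector of this kind could serve as input to a feed-forward network, while a CNN would instead consume the raw `(T, 32, 32)` frames and learn its own features.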

Open video data sharing in developmental and behavioural science

Jul 22, 2022

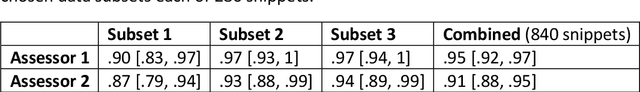

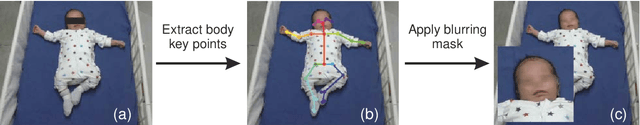

Abstract: Video recording is a widely used method for documenting infant and child behaviours in research and clinical practice. Video data has rarely been shared due to ethical concerns about confidentiality, although the need for shared large-scale datasets keeps growing. This demand is even more pressing when data-driven computer-based approaches are involved, such as screening tools to complement clinical assessments. To share data while abiding by privacy protection rules, a critical question arises: do efforts at data de-identification reduce data utility? We addressed this question by showcasing the Prechtl's general movements assessment (GMA), an established and globally practised video-based diagnostic tool in early infancy for detecting neurological deficits, such as cerebral palsy. To date, no shared expert-annotated large data repositories for infant movement analyses exist. Such datasets would massively benefit training and recalibration of human assessors and the development of computer-based approaches. In the current study, sequences from a prospective longitudinal infant cohort with a total of 19451 available general movements video snippets were randomly selected for human clinical reasoning and computer-based analysis. We demonstrated for the first time that pseudonymisation by face-blurring video recordings is a viable approach. The video redaction did not affect classification accuracy for either human assessors or computer vision methods, suggesting an adequate and easy-to-apply solution for sharing movement video data. We call for further exploration of efficient and privacy rule-conforming approaches for de-identifying video data in scientific and clinical fields beyond movement assessments. These approaches should enable sharing and merging stand-alone video datasets into large data pools to advance science and public health.
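As a simple illustration of region-level video redaction, the sketch below obscures a rectangular face region in a single frame. It uses block-averaging (pixelation) rather than the face blurring described in the abstract, and it assumes the face bounding box is already known; the function name, the `(top, left, bottom, right)` box convention, and the block size are hypothetical. It is not the redaction pipeline used in the study.

```python
import numpy as np


def pixelate_region(frame, box, block=8):
    """Return a copy of `frame` in which the region `box`
    (top, left, bottom, right) is replaced by block-wise averages.
    Works for greyscale (H, W) and colour (H, W, C) frames."""
    top, left, bottom, right = box
    out = np.array(frame, dtype=float, copy=True)
    for r in range(top, bottom, block):
        for c in range(left, right, block):
            r2, c2 = min(r + block, bottom), min(c + block, right)
            # Replace each block by its mean value (per channel if colour).
            out[r:r2, c:c2] = out[r:r2, c:c2].mean(axis=(0, 1))
    return out


# Synthetic 4x4 greyscale frame with a hypothetical 2x2 "face" region.
frame = np.arange(16).reshape(4, 4)
redacted = pixelate_region(frame, (0, 0, 2, 2), block=2)
```

Only the pixels inside the box change, so body and limb movements outside the face region remain available for movement analysis.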
