Lennart Jahn

Comparison of marker-less 2D image-based methods for infant pose estimation

Oct 07, 2024

Abstract: There are increasing efforts to automate clinical methods for early diagnosis of developmental disorders, among them the General Movement Assessment (GMA), a video-based tool to classify infant motor functioning. Optimal pose estimation is a crucial part of the automated GMA. In this study we compare the performance of available generic- and infant-pose estimators, and the choice of viewing angle for optimal recordings, i.e., conventional diagonal view used in GMA vs. top-down view. For this study, we used 4500 annotated video-frames from 75 recordings of infant spontaneous motor functions from 4 to 26 weeks. To determine which available pose estimation method and camera angle yield the best pose estimation accuracy on infants in a GMA related setting, the distance to human annotations as well as the percentage of correct key-points (PCK) were computed and compared. The results show that the best performing generic model trained on adults, ViTPose, also performs best on infants. We see no improvement from using specialized infant-pose estimators over the generic pose estimators on our own infant dataset. However, when retraining a generic model on our data, there is a significant improvement in pose estimation accuracy. The pose estimation accuracy obtained from the top-down view is significantly better than that obtained from the diagonal view, especially for the detection of the hip key-points. The results also indicate only limited generalization capabilities of infant-pose estimators to other infant datasets, which hints that one should be careful when choosing infant pose estimators and using them on infant datasets which they were not trained on. While the standard GMA method uses a diagonal view for assessment, pose estimation accuracy significantly improves using a top-down view. This suggests that a top-down view should be included in recording setups for automated GMA research.
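
The two evaluation measures named in the abstract, distance to human annotations and PCK, can be illustrated with a minimal sketch. This is not the authors' evaluation code: the function names, the reference length `ref_length` (for example a torso or bounding-box size) and the threshold factor `alpha` are assumptions chosen for illustration, since the abstract does not state which normalisation was used.

```python
import math

def mean_distance(pred, truth):
    """Mean Euclidean distance between predicted and annotated key-points."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("pred and truth must be non-empty and of equal length")
    total = sum(math.hypot(px - tx, py - ty)
                for (px, py), (tx, ty) in zip(pred, truth))
    return total / len(pred)

def pck(pred, truth, ref_length, alpha=0.2):
    """Fraction of key-points lying within alpha * ref_length of the annotation.

    ref_length and alpha are hypothetical parameters; the normalisation
    used in the study is not given in the abstract.
    """
    if len(pred) != len(truth) or not pred:
        raise ValueError("pred and truth must be non-empty and of equal length")
    threshold = alpha * ref_length
    hits = sum(1 for (px, py), (tx, ty) in zip(pred, truth)
               if math.hypot(px - tx, py - ty) <= threshold)
    return hits / len(pred)

# Toy data: four key-points in pixel coordinates (made up for the example).
truth = [(10, 10), (20, 20), (30, 30), (40, 40)]
pred = [(10, 11), (20, 26), (33, 34), (40, 40)]

print(mean_distance(pred, truth))          # average pixel error
print(pck(pred, truth, ref_length=20))     # share of key-points within 4 px
```

With these toy values the per-key-point errors are 1, 6, 5 and 0 pixels, so two of the four key-points fall inside the 4-pixel threshold.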

Deep learning empowered sensor fusion to improve infant movement classification

Jun 14, 2024

Abstract: There is a recent boom in the development of AI solutions to facilitate and enhance diagnostic procedures for established clinical tools. To assess the integrity of the developing nervous system, the Prechtl general movement assessment (GMA) is recognized for its clinical value in diagnosing neurological impairments in early infancy. GMA has been increasingly augmented through machine learning approaches intending to scale up its application, circumvent costs in the training of human assessors and further standardize classification of spontaneous motor patterns. Available deep learning tools, all of which are based on single sensor modalities, still perform considerably worse than well-trained human assessors. These approaches are hardly comparable, as all models are designed, trained and evaluated on proprietary/siloed data sets. With this study we propose a sensor fusion approach for assessing fidgety movements (FMs), comparing three different sensor modalities (pressure, inertial, and visual sensors). Various combinations and two sensor fusion approaches (late and early fusion) for infant movement classification were tested to evaluate whether a multi-sensor system outperforms single modality assessments. The performance of the three-sensor fusion (classification accuracy of 94.5%) was significantly higher than that of any single modality evaluated, suggesting the sensor fusion approach is a promising avenue for automated classification of infant motor patterns. The development of a robust sensor fusion system may significantly enhance AI-based early recognition of neurofunctions, ultimately facilitating automated early detection of neurodevelopmental conditions.
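
The difference between early and late fusion can be shown in a few lines. The sketch below uses the general textbook meaning of the two terms: early fusion concatenates per-modality features before a single classifier, while late fusion combines the outputs of per-modality classifiers. It is an assumption that the study follows this pattern; the function names, the weighted-average rule and all numbers are hypothetical.

```python
def early_fusion(features_by_modality):
    """Concatenate feature vectors from all modalities into one input vector.

    Modalities are visited in sorted name order so the layout is stable.
    """
    fused = []
    for name in sorted(features_by_modality):
        fused.extend(features_by_modality[name])
    return fused

def late_fusion(probs_by_modality, weights=None):
    """Weighted average of per-modality class probabilities.

    Averaging is one common late-fusion rule among several; it is used here
    only as an illustration.
    """
    names = sorted(probs_by_modality)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    return sum(weights[name] * probs_by_modality[name] for name in names) / total

# Made-up feature vectors for the three modalities named in the abstract.
features = {"pressure": [0.1, 0.2], "inertial": [0.3], "visual": [0.4, 0.5]}
print(early_fusion(features))   # one vector fed to a single classifier

# Made-up per-modality probabilities that fidgety movements are present.
probs = {"pressure": 0.5, "inertial": 0.75, "visual": 1.0}
print(late_fusion(probs))       # combined score from three classifiers
```

Early fusion lets one model learn interactions between modalities, whereas late fusion keeps each modality's classifier independent and still works when one sensor is missing.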

Comparison of Motion Encoding Frameworks on Human Manipulation Actions

Nov 23, 2022

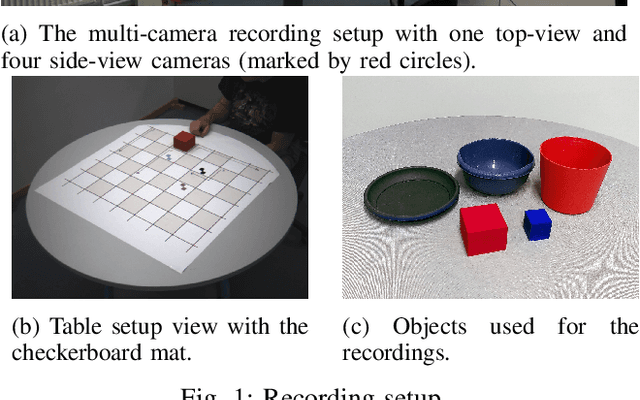

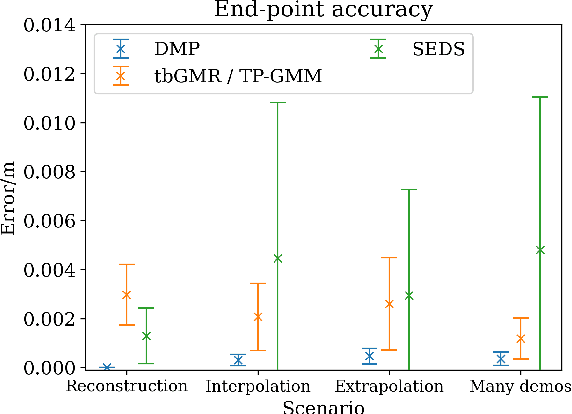

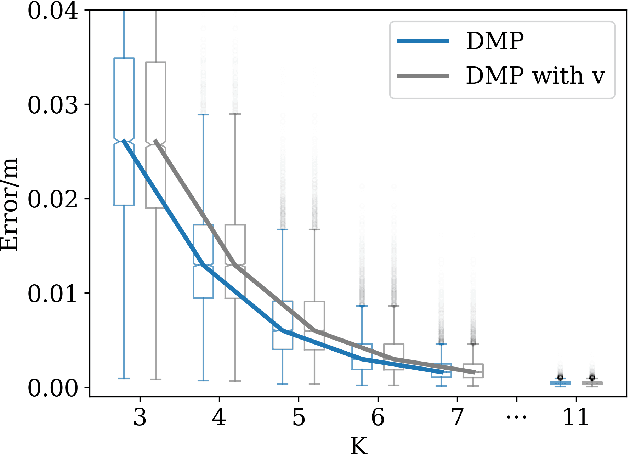

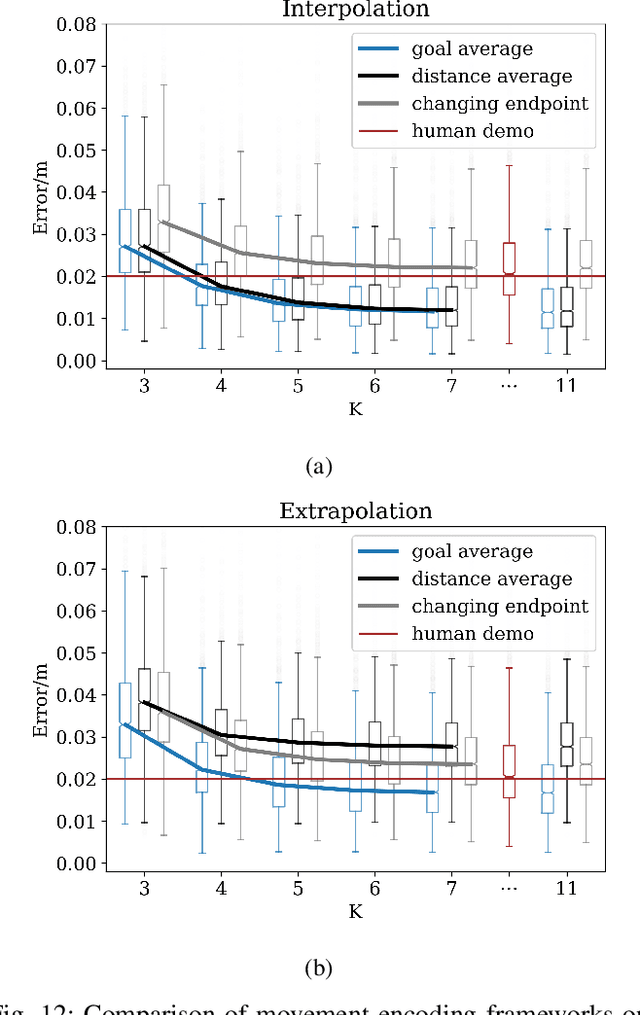

Abstract: Movement generation, and especially generalisation to unseen situations, plays an important role in robotics. Different types of movement generation methods exist, such as spline-based methods, dynamical-system-based methods, and methods based on Gaussian mixture models (GMMs). Using a large, new dataset on human manipulations, in this paper we provide a highly detailed comparison of the three most widely used movement encoding and generation frameworks: dynamic movement primitives (DMPs), time-based Gaussian mixture regression (tbGMR) and stable estimator of dynamical systems (SEDS). We compare these frameworks with respect to their movement encoding efficiency, reconstruction accuracy, and movement generalisation capabilities. The new dataset consists of nine object manipulation actions performed by 12 humans: pick and place, put on top/take down, put inside/take out, hide/uncover, and push/pull, with a total of 7,652 movement examples. Our analysis shows that for movement encoding and reconstruction DMPs are the most efficient framework with respect to the number of parameters and reconstruction accuracy if a sufficient number of kernels is used. In the case of movement generalisation to new start- and end-point situations, DMPs and the task-parameterized GMM (TP-GMM, a movement generalisation framework based on tbGMR) lead to similar performance and outperform SEDS. Furthermore, we observe that TP-GMM and SEDS suffer from inaccurate convergence to the end-point as compared to DMPs. These quantitative results will help in designing trajectory representations in an improved, task-dependent way in future robotic applications.
