Kaixi Hu

Certified Signed Graph Unlearning

Nov 18, 2025

Abstract: Signed graphs model complex relationships through positive and negative edges, with widespread real-world applications. Given the sensitive nature of such data, selective removal mechanisms have become essential for privacy protection. While graph unlearning enables the removal of specific data influences from Graph Neural Networks (GNNs), existing methods are designed for conventional GNNs and overlook the unique heterogeneous properties of signed graphs. When applied to Signed Graph Neural Networks (SGNNs), these methods lose critical sign information, degrading both model utility and unlearning effectiveness. To address these challenges, we propose Certified Signed Graph Unlearning (CSGU), which provides provable privacy guarantees while preserving the sociological principles underlying SGNNs. CSGU employs a three-stage method: (1) efficiently identifying minimal influenced neighborhoods via triangular structures, (2) applying sociological theories to quantify node importance for optimal privacy budget allocation, and (3) performing importance-weighted parameter updates to achieve certified modifications with minimal utility degradation. Extensive experiments demonstrate that CSGU outperforms existing methods, achieving superior performance in both utility preservation and unlearning effectiveness on SGNNs.
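
As a rough illustration of what "identifying influenced neighborhoods via triangular structures" could look like, the sketch below lists the triangles that contain a given edge in a small signed graph and checks each one against structural balance theory (a triangle is balanced when the product of its three edge signs is positive). The toy graph, the function names, and the choice of balance theory as the sociological principle are assumptions made for illustration; this is not the CSGU implementation.

```python
def build_adjacency(edges):
    """Map each node to {neighbor: sign} for an undirected signed graph."""
    adj = {}
    for u, v, sign in edges:
        adj.setdefault(u, {})[v] = sign
        adj.setdefault(v, {})[u] = sign
    return adj


def triangles_through_edge(adj, u, v):
    """Return (w, balanced) for every triangle u-v-w that contains edge (u, v).

    A triangle counts as balanced when the product of its edge signs is
    positive (structural balance theory).
    """
    result = []
    for w in sorted(set(adj[u]) & set(adj[v])):
        product = adj[u][v] * adj[u][w] * adj[v][w]
        result.append((w, product > 0))
    return result


# Hypothetical toy graph: +1 is a positive edge, -1 a negative edge.
edges = [
    ("a", "b", 1),
    ("b", "c", -1),
    ("a", "c", -1),
    ("a", "d", 1),
    ("b", "d", -1),
]
adj = build_adjacency(edges)

# Nodes whose triangles would be touched if edge (a, b) were unlearned.
affected = triangles_through_edge(adj, "a", "b")
```

Here the nodes closing a triangle with the removed edge form a small candidate neighborhood; how CSGU actually bounds or weights that neighborhood is not specified in the abstract.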

CrimeAlarm: Towards Intensive Intent Dynamics in Fine-grained Crime Prediction

Apr 10, 2024

Abstract: Granularity and accuracy are two crucial factors in crime event prediction. In fine-grained event classification, multiple criminal intents may appear alternately across preceding sequential events and progress differently in subsequent ones. Such intensive intent dynamics make it hard for models to capture unobserved intents during training, leading to sub-optimal generalization, especially when numerous potential events intertwine. To capture comprehensive criminal intents, this paper proposes CrimeAlarm, a fine-grained sequential crime prediction framework equipped with a novel mutual distillation strategy inspired by curriculum learning. During the early training phase, spot-shared criminal intents are captured through high-confidence sequence samples. In the later phase, spot-specific intents are gradually learned by increasing the contribution of low-confidence sequences. Meanwhile, the prediction networks learn each other's output probability distributions to model unobserved criminal intents. Extensive experiments show that CrimeAlarm outperforms state-of-the-art methods in terms of NDCG@5, with accuracy improvements of 4.51% on NYC16 and 7.73% on CHI18.
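
For readers unfamiliar with the reported metric, the following is a minimal sketch of NDCG@5 for next-event prediction. It assumes each sequence has exactly one true next event, so the ideal DCG is 1 and the score reduces to 1/log2(rank + 1) when the target is ranked in the top 5. The function name and the example event labels are hypothetical and not taken from the paper.

```python
import math


def ndcg_at_k(ranked_items, target, k=5):
    """NDCG@k with a single relevant item.

    Returns 1 / log2(rank + 1) if the target is within the top k
    (rank is 1-based), otherwise 0.0.
    """
    for rank, item in enumerate(ranked_items[:k], start=1):
        if item == target:
            return 1.0 / math.log2(rank + 1)
    return 0.0


# Hypothetical ranked predictions for one sequence.
predictions = ["theft", "assault", "fraud", "burglary", "robbery", "arson"]

top_hit = ndcg_at_k(predictions, "theft")    # target ranked first
third_hit = ndcg_at_k(predictions, "fraud")  # target ranked third
miss = ndcg_at_k(predictions, "arson")       # target outside the top 5
```

Averaging this score over all test sequences gives the dataset-level NDCG@5.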

What is Next when Sequential Prediction Meets Implicitly Hard Interaction?

Feb 14, 2022

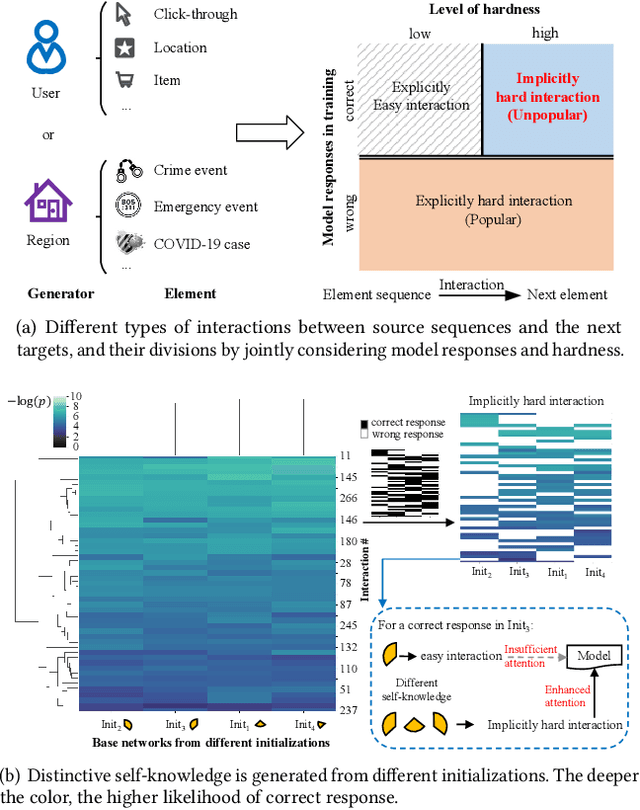

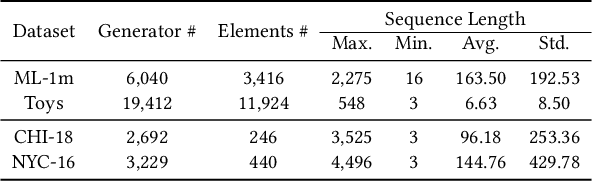

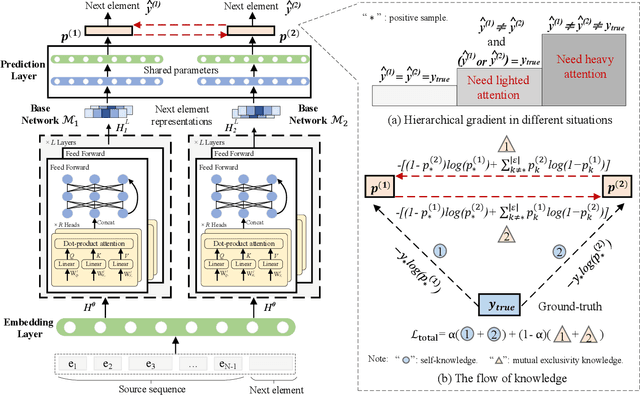

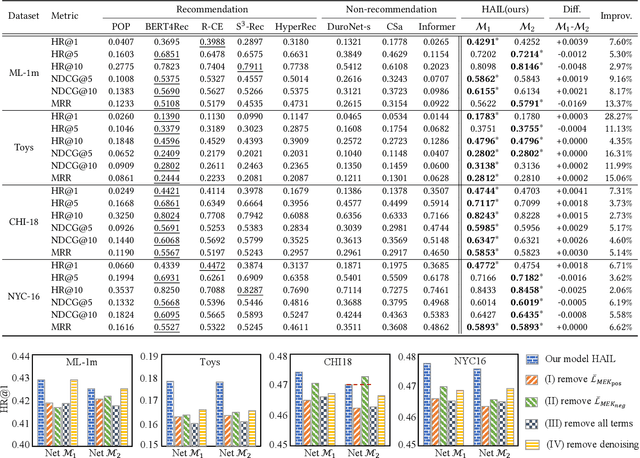

Abstract: Learning hard interactions between source sequences and their next targets is a challenge that arises in a myriad of sequential prediction tasks. During training, most existing methods focus on explicitly hard interactions caused by wrong responses. However, a model might produce correct responses by capturing only a subset of learnable patterns, which results in implicitly hard interactions involving the unlearned patterns. As such, its generalization performance is weakened. The problem becomes more serious in sequential prediction because of interference from many similar candidate targets. To this end, we propose a Hardness Aware Interaction Learning framework (HAIL) that mainly consists of two base sequential learning networks and mutual exclusivity distillation (MED). The base networks are initialized differently to learn distinctive view patterns, thus gaining different training experiences. These experiences, in the form of the unlikelihood of correct responses, are exchanged between the networks by MED, which provides mutual exclusivity knowledge for identifying implicitly hard interactions. Moreover, we deduce that the unlikelihood essentially introduces additional gradients that push the pattern learning of correct responses. Our framework can be easily extended to more peer base networks. Evaluation is conducted on four datasets covering cyber and physical spaces. The experimental results demonstrate that our framework outperforms several state-of-the-art methods in terms of top-k based metrics.
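
To give a feel for how an unlikelihood term adds gradient signal beyond standard cross-entropy, here is a minimal sketch of a generic unlikelihood loss, -log(1 - p), applied to a set of candidates that should be pushed down. Which candidates are penalized and how the peer network selects them in MED is not stated in the abstract, so the `negative_indices` argument and all names below are assumptions for illustration only.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target_index):
    """Standard likelihood term: -log p(target)."""
    return -math.log(probs[target_index])


def unlikelihood(probs, negative_indices):
    """Generic unlikelihood term: sum of -log(1 - p_i) over penalized candidates."""
    return -sum(math.log(1.0 - probs[i]) for i in negative_indices)


# Hypothetical logits over four candidate targets; index 0 is the correct one.
probs = softmax([2.0, 1.5, 0.2, -1.0])

# Index 1 is a similar candidate that (by assumption) a peer flags as confusable.
loss = cross_entropy(probs, 0) + unlikelihood(probs, [1])
```

Because -log(1 - p) grows as the penalized probability approaches 1, confusable candidates that still receive high probability contribute extra gradient even when the top prediction is already correct.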
