Kainan Liu

PMT Waveform Simulation and Reconstruction with Conditional Diffusion Network

Feb 05, 2026

Abstract: Photomultiplier tubes (PMTs) are widely employed in particle and nuclear physics experiments. The accuracy of PMT waveform reconstruction directly impacts the detector's spatial and energy resolution. A key challenge arises when multiple photons arrive within a few nanoseconds, making it difficult to resolve individual photoelectrons (PEs). Although supervised deep learning methods have surpassed traditional methods in performance, their practical applicability is limited by the lack of ground-truth PE labels in real data. To address this issue, we propose an innovative weakly supervised waveform simulation and reconstruction approach based on a bidirectional conditional diffusion network framework. The method is fully data-driven and requires only raw waveforms and coarse estimates of PE information as input. It first employs a PE-conditioned diffusion model to simulate realistic waveforms from PE sequences, thereby learning the features of overlapping waveforms. Subsequently, these simulated waveforms are used to train a waveform-conditioned diffusion model to reconstruct the PE sequences from waveforms, reinforcing the learning of features of overlapping waveforms. Through iterative refinement between the two conditional diffusion processes, the model progressively improves reconstruction accuracy. Experimental results demonstrate that the proposed method achieves 99% of the normalized PE-number resolution averaged over 1-5 p.e. and 80% of the timing resolution attained by fully supervised learning.

Rethinking Layer Removal: Preserving Critical Components with Task-Aware Singular Value Decomposition

Dec 31, 2024

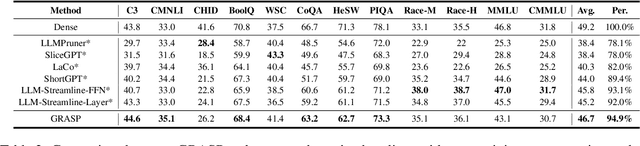

Abstract: Layer removal has emerged as a promising approach for compressing large language models (LLMs) by leveraging redundancy within layers to reduce model size and accelerate inference. However, this technique often compromises internal consistency, leading to performance degradation and instability, with varying impacts across different model architectures. In this work, we propose Taco-SVD, a task-aware framework that retains task-critical singular value directions, preserving internal consistency while enabling efficient compression. Unlike direct layer removal, Taco-SVD preserves task-critical transformations to mitigate performance degradation. By leveraging gradient-based attribution methods, Taco-SVD aligns singular values with downstream task objectives. Extensive evaluations demonstrate that Taco-SVD outperforms existing methods in perplexity and task performance across different architectures while ensuring minimal computational overhead.
