Jun-ichiro Hirayama

SPD domain-specific batch normalization to crack interpretable unsupervised domain adaptation in EEG

Jun 02, 2022

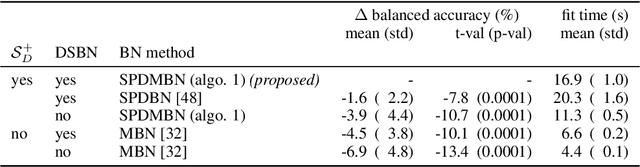

Abstract: Electroencephalography (EEG) provides access to neuronal dynamics non-invasively with millisecond resolution, rendering it a viable method in neuroscience and healthcare. However, its utility is limited as current EEG technology does not generalize well across domains (i.e., sessions and subjects) without expensive supervised re-calibration. Contemporary methods cast this transfer learning (TL) problem as a multi-source/-target unsupervised domain adaptation (UDA) problem and address it with deep learning or shallow, Riemannian geometry aware alignment methods. Both directions have, so far, failed to consistently close the performance gap to state-of-the-art domain-specific methods based on tangent space mapping (TSM) on the symmetric positive definite (SPD) manifold. Here, we propose a theory-based machine learning framework that enables, for the first time, learning domain-invariant TSM models in an end-to-end fashion. To achieve this, we propose a new building block for geometric deep learning, which we denote SPD domain-specific momentum batch normalization (SPDDSMBN). An SPDDSMBN layer can transform domain-specific SPD inputs into domain-invariant SPD outputs, and can be readily applied to multi-source/-target and online UDA scenarios. In extensive experiments with six diverse EEG brain-computer interface (BCI) datasets, we obtain state-of-the-art performance in inter-session and -subject TL with a simple, intrinsically interpretable network architecture, which we denote TSMNet.
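The idea of mapping domain-specific SPD inputs to a shared reference point before tangent space mapping can be illustrated with a small NumPy sketch. This is not the SPDDSMBN layer from the paper: it has no momentum, no learnable parameters, and it uses the log-Euclidean mean as a simple stand-in for the domain mean, which is an assumption made here for brevity. All function names are hypothetical.

```python
import numpy as np

def _eig_fun(mat, fun):
    """Apply a scalar function to the eigenvalues of a symmetric matrix."""
    vals, vecs = np.linalg.eigh(mat)
    return (vecs * fun(vals)) @ vecs.T

def log_euclidean_mean(covs):
    """Mean of SPD matrices in the matrix-log domain (assumed stand-in for the domain mean)."""
    logs = np.stack([_eig_fun(c, np.log) for c in covs])
    return _eig_fun(logs.mean(axis=0), np.exp)

def recenter(covs):
    """Whiten each matrix by the domain mean M: C -> M^{-1/2} C M^{-1/2}."""
    mean = log_euclidean_mean(covs)
    w = _eig_fun(mean, lambda v: 1.0 / np.sqrt(v))
    return np.stack([w @ c @ w for c in covs])

def tangent_features(covs):
    """Matrix log at the identity, vectorized over the upper triangle.

    Off-diagonal entries are scaled by sqrt(2) so the vector norm
    equals the Frobenius norm of the log matrix.
    """
    n = covs.shape[-1]
    iu = np.triu_indices(n)
    scale = np.where(iu[0] == iu[1], 1.0, np.sqrt(2.0))
    return np.stack([_eig_fun(c, np.log)[iu] * scale for c in covs])

def domain_invariant_features(covs, domains):
    """Recenter each domain separately, then map to the tangent space."""
    covs = np.asarray(covs, dtype=float)
    domains = np.asarray(domains)
    n = covs.shape[-1]
    out = np.empty((len(covs), n * (n + 1) // 2))
    for d in np.unique(domains):
        idx = domains == d
        out[idx] = tangent_features(recenter(covs[idx]))
    return out
```

Because each domain is whitened by its own mean, features from different sessions or subjects are expressed relative to the identity, so a single linear classifier could in principle be trained on the pooled output.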

Neural dSCA: demixing multimodal interaction among brain areas during naturalistic experiments

Jun 05, 2021

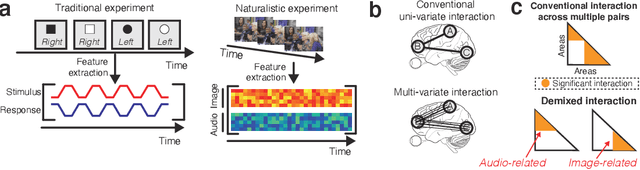

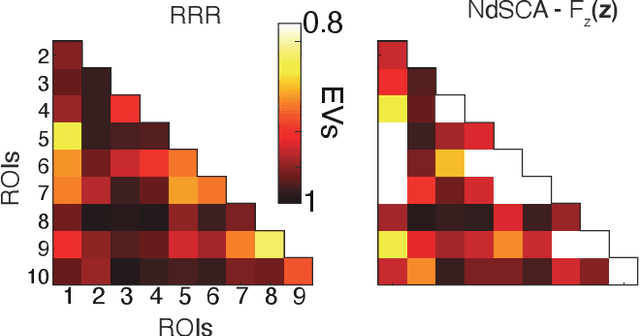

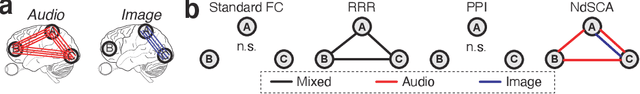

Abstract: Multi-regional interaction among neuronal populations underlies the brain's processing of rich sensory information in our daily lives. Recent neuroscience and neuroimaging studies have increasingly used naturalistic stimuli and experimental design to identify such realistic sensory computation in the brain. However, existing methods for cross-areal interaction analysis with dimensionality reduction, such as reduced-rank regression and canonical correlation analysis, have limited applicability and interpretability in naturalistic settings because they usually do not appropriately 'demix' neural interactions into those associated with different types of task parameters or stimulus features (e.g., visual or audio). In this paper, we develop a new method for cross-areal interaction analysis that uses the rich task or stimulus parameters to reveal how and what types of information are shared by different neural populations. The proposed neural demixed shared component analysis combines existing dimensionality reduction methods with a practical neural network implementation of functional analysis of variance with latent variables, thereby efficiently demixing nonlinear effects of continuous and multimodal stimuli. We also propose a simplifying alternative under the assumptions of linear effects and unimodal stimuli. To demonstrate our methods, we analyzed two human neuroimaging datasets of participants watching naturalistic videos of movies and dance movements. The results demonstrate that our methods provide new insights into multi-regional interaction in the brain during naturalistic sensory inputs, which cannot be captured by conventional techniques.
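For context, reduced-rank regression, one of the conventional baselines named in the abstract, can be sketched in a few lines. This is the textbook estimator, not the proposed neural dSCA method; the variable names (`X` for source-area activity, `Y` for target-area activity) are illustrative assumptions.

```python
import numpy as np

def reduced_rank_regression(X, Y, rank):
    """Least-squares map from X to Y constrained to the given rank.

    X: (samples, source_dim), Y: (samples, target_dim).
    Returns a (source_dim, target_dim) coefficient matrix.
    """
    # Unconstrained least-squares solution.
    B_ols, *_ = np.linalg.lstsq(X, Y, rcond=None)
    # Principal directions of the fitted values in target space.
    _, _, Vt = np.linalg.svd(X @ B_ols, full_matrices=False)
    V = Vt[:rank].T
    # Project the coefficients onto the top-`rank` directions.
    return B_ols @ V @ V.T
```

The low-rank map summarizes which few dimensions of one area predict the other, but it does not separate contributions of different stimulus features, which is the limitation the abstract points to.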

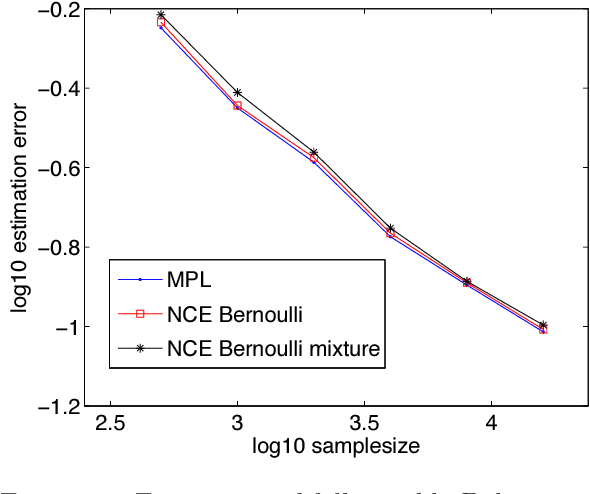

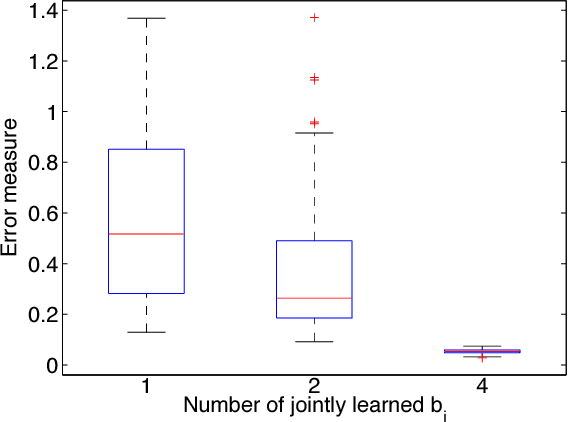

Bregman divergence as general framework to estimate unnormalized statistical models

Feb 14, 2012

Abstract: We show that the Bregman divergence provides a rich framework to estimate unnormalized statistical models for continuous or discrete random variables, that is, models which do not integrate or sum to one, respectively. We prove that recent estimation methods such as noise-contrastive estimation, ratio matching, and score matching belong to the proposed framework, and explain their interconnection based on supervised learning. Further, we discuss the role of boosting in unsupervised learning.
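As a reminder of the basic object, the standard finite-dimensional Bregman divergence generated by a convex function Φ is d(a, b) = Φ(a) − Φ(b) − ⟨∇Φ(b), a − b⟩. The sketch below evaluates it for vectors; the paper works with divergences between functions, so this is only a simplified illustration, and the helper name is hypothetical.

```python
import numpy as np

def bregman_divergence(phi, grad_phi, a, b):
    """d(a, b) = phi(a) - phi(b) - <grad_phi(b), a - b> for a convex phi."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return phi(a) - phi(b) - np.dot(grad_phi(b), a - b)

# phi(x) = ||x||^2 yields the squared Euclidean distance.
def sq_norm(x):
    return np.dot(x, x)

def sq_norm_grad(x):
    return 2.0 * x

# phi(x) = sum x log x yields the generalized KL divergence,
# which stays well defined when a and b do not sum to one.
def neg_entropy(x):
    return np.sum(x * np.log(x))

def neg_entropy_grad(x):
    return np.log(x) + 1.0
```

The second choice is worth noting in this context: the generalized KL divergence, Σ a log(a/b) − Σ a + Σ b, does not require its arguments to be normalized.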
