Jorge Hurtado-Grueso

Elastic Monte Carlo Tree Search with State Abstraction for Strategy Game Playing

May 30, 2022

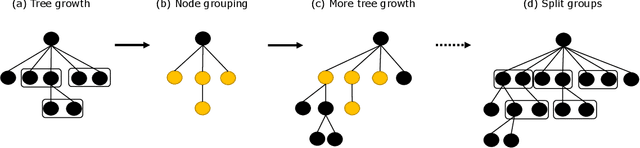

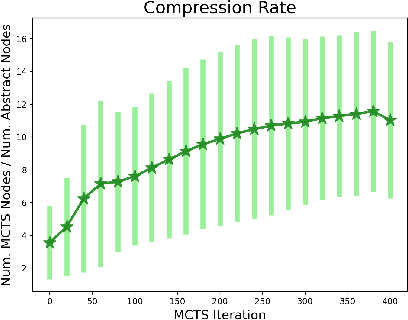

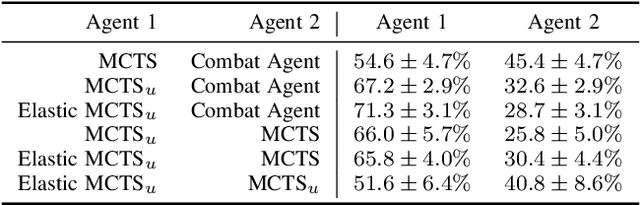

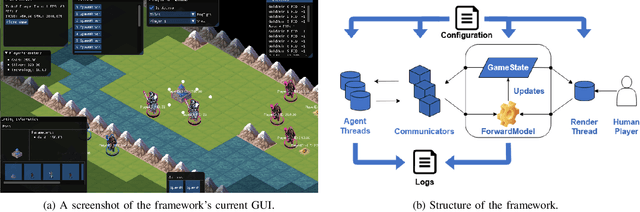

Abstract: Strategy video games challenge AI agents with their combinatorial search space caused by complex game elements. State abstraction is a popular technique that reduces the state space complexity. However, current state abstraction methods for games depend on domain knowledge, making their application to new games expensive. State abstraction methods that require no domain knowledge are studied extensively in the planning domain. However, no evidence shows they scale well with the complexity of strategy games. In this paper, we propose Elastic MCTS, an algorithm that uses state abstraction to play strategy games. In Elastic MCTS, the nodes of the tree are clustered dynamically: they are first grouped together progressively by state abstraction, and then separated when an iteration threshold is reached. The elastic changes benefit from the efficient search brought by state abstraction but avoid the negative influence of using state abstraction for the whole search. To evaluate our method, we make use of the general strategy games platform Stratega to generate scenarios of varying complexity. Results show that Elastic MCTS outperforms MCTS baselines by a large margin, while reducing the tree size by a factor of $10$. Code can be found at: https://github.com/egg-west/Stratega
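The group-then-separate idea in the abstract can be illustrated with a small sketch. This is not the authors' implementation: the class `ElasticStatsTable`, its methods, and the choice to give every node a copy of its group's statistics at the split are assumptions made for illustration, and the criterion that decides which nodes to merge (the state abstraction itself) is left to the caller.

```python
from dataclasses import dataclass


@dataclass
class Stats:
    visits: int = 0
    total_reward: float = 0.0


class ElasticStatsTable:
    """Hypothetical helper: maps node ids to statistics shared within a group."""

    def __init__(self, split_threshold):
        self.split_threshold = split_threshold  # iteration at which groups are separated
        self.iteration = 0
        self.abstraction_active = True
        self._stats = {}  # node id -> Stats (grouped nodes point at the same object)

    def add_node(self, node_id):
        self._stats[node_id] = Stats()

    def merge(self, a, b):
        """Group nodes a and b so that they share one Stats object."""
        if not self.abstraction_active:
            return
        sa, sb = self._stats[a], self._stats[b]
        if sa is sb:
            return
        sa.visits += sb.visits
        sa.total_reward += sb.total_reward
        for node_id, s in self._stats.items():
            if s is sb:
                self._stats[node_id] = sa

    def update(self, node_id, reward):
        """Backpropagation step: every member of the group sees this update."""
        s = self._stats[node_id]
        s.visits += 1
        s.total_reward += reward

    def end_iteration(self):
        self.iteration += 1
        if self.abstraction_active and self.iteration >= self.split_threshold:
            self._split_all()

    def _split_all(self):
        # Assumption: each node keeps a private copy of its group's statistics.
        self.abstraction_active = False
        self._stats = {
            nid: Stats(s.visits, s.total_reward) for nid, s in self._stats.items()
        }

    def get(self, node_id):
        return self._stats[node_id]
```

Before the threshold, an update to one node is visible to every node in its group, which is where the search savings would come from. After the threshold, nodes diverge again and the search proceeds on ungrouped statistics.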

Portfolio Search and Optimization for General Strategy Game-Playing

Apr 21, 2021

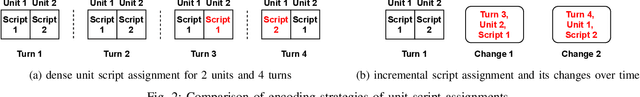

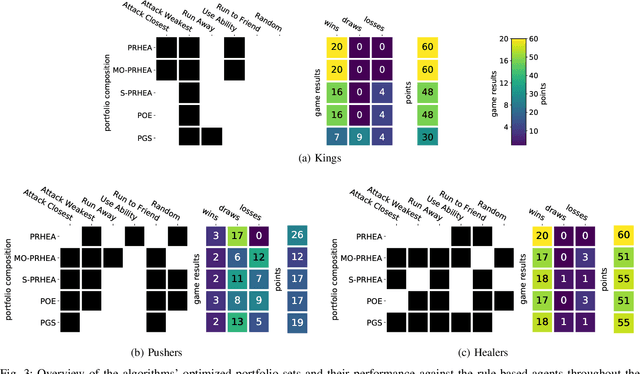

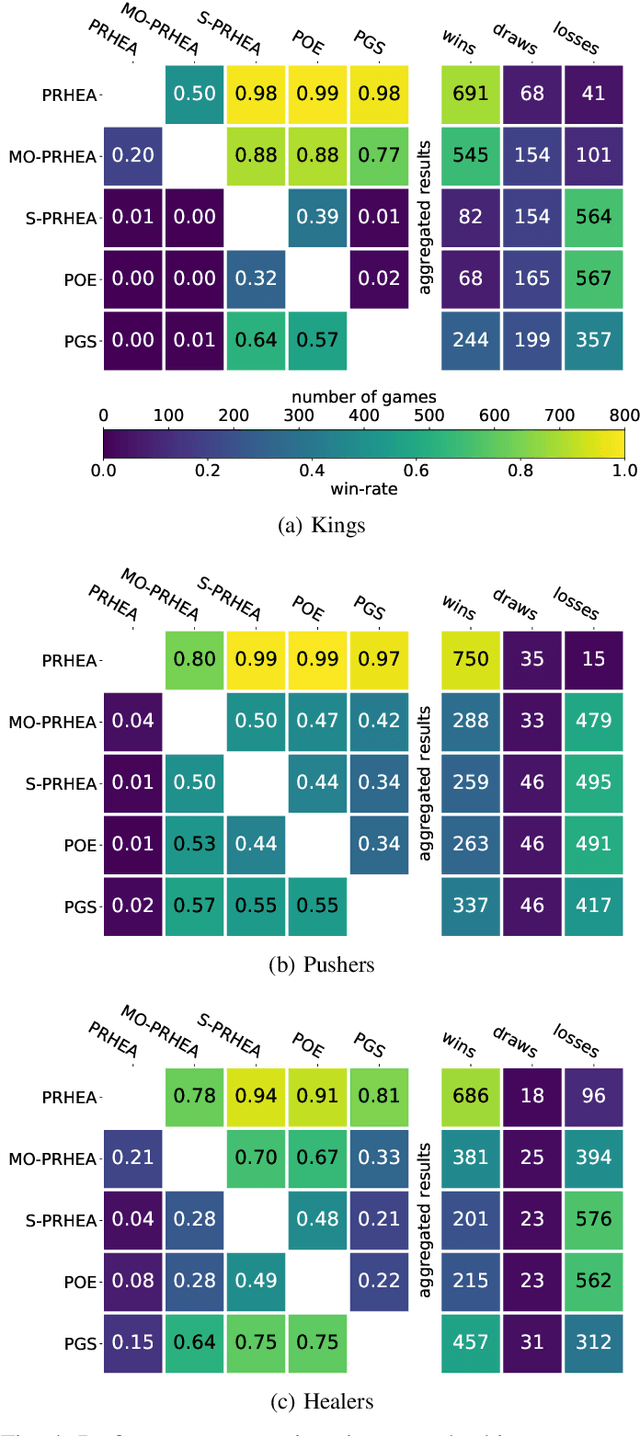

Abstract: Portfolio methods represent a simple but efficient type of action abstraction which has been shown to improve the performance of search-based agents in a range of strategy games. We first review existing portfolio techniques and propose a new algorithm for optimization and action-selection based on the Rolling Horizon Evolutionary Algorithm. Moreover, a series of variants is developed to address problems in different aspects. We further analyze the performance of the discussed agents in a general strategy game-playing task. For this purpose, we run experiments on three different game-modes of the Stratega framework. For the optimization of the agents' parameters and portfolio sets, we study the use of the N-tuple Bandit Evolutionary Algorithm. The resulting portfolio sets suggest a high diversity in play-styles while being able to consistently beat the sample agents. An analysis of the agents' performance shows that the proposed algorithm generalizes well to all game-modes and is able to outperform other portfolio methods.
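To make the combination of portfolios and rolling horizon evolution concrete, here is a minimal sketch. It is not the algorithm proposed in the paper. It assumes a simple mutation-only (1+1) evolutionary loop, a toy integer game state, and a hypothetical three-script portfolio. Each genome is a sequence of portfolio indices rather than raw actions, which is the action abstraction the abstract refers to.

```python
import random


def rollout_fitness(state, genome, portfolio):
    """Apply the scripts named by the genome in order; the final state is the score."""
    for idx in genome:
        state = portfolio[idx](state)
    return state


def portfolio_rhea(state, portfolio, horizon, generations, rng):
    """Evolve a sequence of script indices with a (1+1) mutation-only loop."""
    best = [rng.randrange(len(portfolio)) for _ in range(horizon)]
    best_fit = rollout_fitness(state, best, portfolio)
    for _ in range(generations):
        child = list(best)
        # Mutate one position to a randomly chosen script.
        child[rng.randrange(horizon)] = rng.randrange(len(portfolio))
        fit = rollout_fitness(state, child, portfolio)
        if fit >= best_fit:  # elitist acceptance: never keep a worse plan
            best, best_fit = child, fit
    return best, best_fit


# Toy portfolio (hypothetical): each "script" maps a state to a successor state.
PORTFOLIO = [lambda s: s + 2, lambda s: s - 1, lambda s: s]

plan, score = portfolio_rhea(0, PORTFOLIO, horizon=5, generations=50,
                             rng=random.Random(0))
next_script = plan[0]  # rolling horizon: execute only the first script, then replan
```

In a real agent the toy lambdas would be replaced by scripts that query a forward model, and the fitness would come from a state evaluation heuristic.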
